Paul

@PaulOctoBot

Making crypto investment easier with @DrakkarsOctoBot

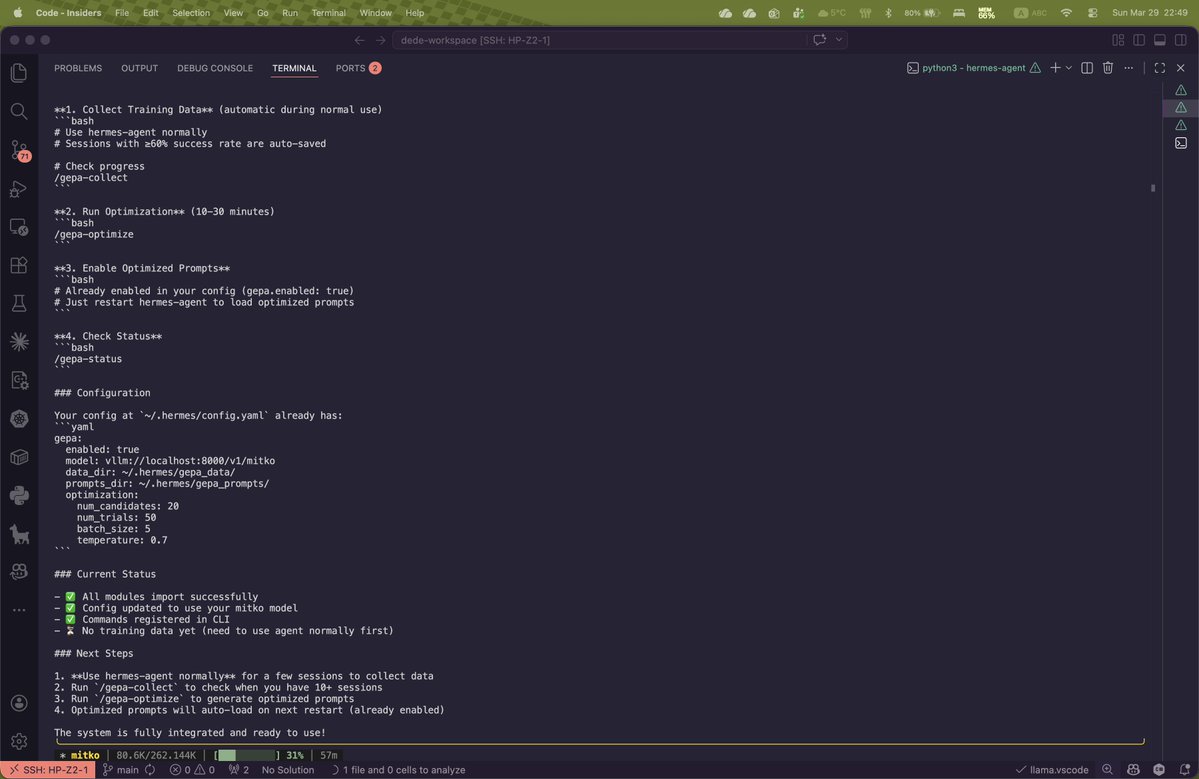

hey, if you're considering nvidia's nemotron cascade 2 for agent coding on your 3090, this might save you time. here's what a few days of testing taught me.

speed is settled: 187 tok/s, flat from 4K to 625K context. that's 67% faster than qwen 3.5 35B-A3B on the same card. mamba2's speed is context independent, and it needs zero flags to get there. for chat, bash scripting, API calls, and simple tool use, this model at this speed is unmatched in the 3B active class.

but i pushed it harder. i gave it the same autonomous coding test i give every model: octopus invaders, a full space shooter game with pixel art enemies, particle systems, audio, a HUD, and game states. it's the kind of build that tests whether a model can hold architectural coherence across thousands of lines.

i ran it five times: multi file, single file, thinking mode on. the results: broken imports, blank screens, skeleton code that never rendered a single frame. on the same 3090, qwen's 9B dense built 2,699 lines and was playable on its first iteration. cascade 2 at 3B active never got there.

3 billion active parameters winning gold at the international math olympiad is real. but math competitions and autonomous coding are different problems. the speed is there. the reasoning is there for structured tasks. but holding coherence across thousands of lines of game logic, particle systems, audio, and collision detection? that's where 3B active MoE hits a ceiling.

cascade 2 is the fastest local model i've tested in its class. for complex agentic coding, it's not ready at this size. test before you commit.
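if you want to sanity check speed numbers like the ones above on your own card, here's a minimal sketch of the arithmetic. it assumes "67% faster" means a throughput ratio of 1.67, and that "flat" means every context length lands within a few percent of the mean. the function names and the 5% tolerance are hypothetical, picked for illustration, and none of this is tied to any particular inference runtime.

```python
def tokens_per_second(n_tokens: int, seconds: float) -> float:
    """Throughput for one generation run."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return n_tokens / seconds


def percent_faster(fast_tps: float, slow_tps: float) -> float:
    """How much faster fast_tps is than slow_tps, as a percentage."""
    if slow_tps <= 0:
        raise ValueError("slow_tps must be positive")
    return (fast_tps / slow_tps - 1.0) * 100.0


def implied_baseline(fast_tps: float, pct_faster: float) -> float:
    """Baseline throughput implied by 'fast_tps is pct_faster% faster'.

    Assumption: 'X% faster' is read as a ratio of (1 + X/100).
    """
    return fast_tps / (1.0 + pct_faster / 100.0)


def is_flat(tps_by_context: dict[int, float], tolerance: float = 0.05) -> bool:
    """True if every measurement is within `tolerance` of the mean.

    Keys are context lengths in tokens, values are measured tok/s.
    The 5% default is an arbitrary illustrative threshold.
    """
    if not tps_by_context:
        raise ValueError("need at least one measurement")
    values = list(tps_by_context.values())
    mean = sum(values) / len(values)
    return all(abs(v - mean) <= tolerance * mean for v in values)


if __name__ == "__main__":
    # Numbers taken from the post: 187 tok/s, 67% faster than the baseline.
    baseline = implied_baseline(187.0, 67.0)
    print(f"implied baseline: {baseline:.0f} tok/s")
```

under that reading, the post's numbers imply the qwen 3.5 35B-A3B baseline ran at roughly 112 tok/s on the same 3090. to check flatness, time your own runs at a few context lengths, feed the results to `is_flat`, and see whether the curve actually holds.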