Pavel Shtykovskiy

158 posts

@framrus

Particle physics and astrophysics -» predicting ad clicks at Yandex -» spoken language understanding -» LLM-powered NPCs at https://t.co/A3vXLHYYGN

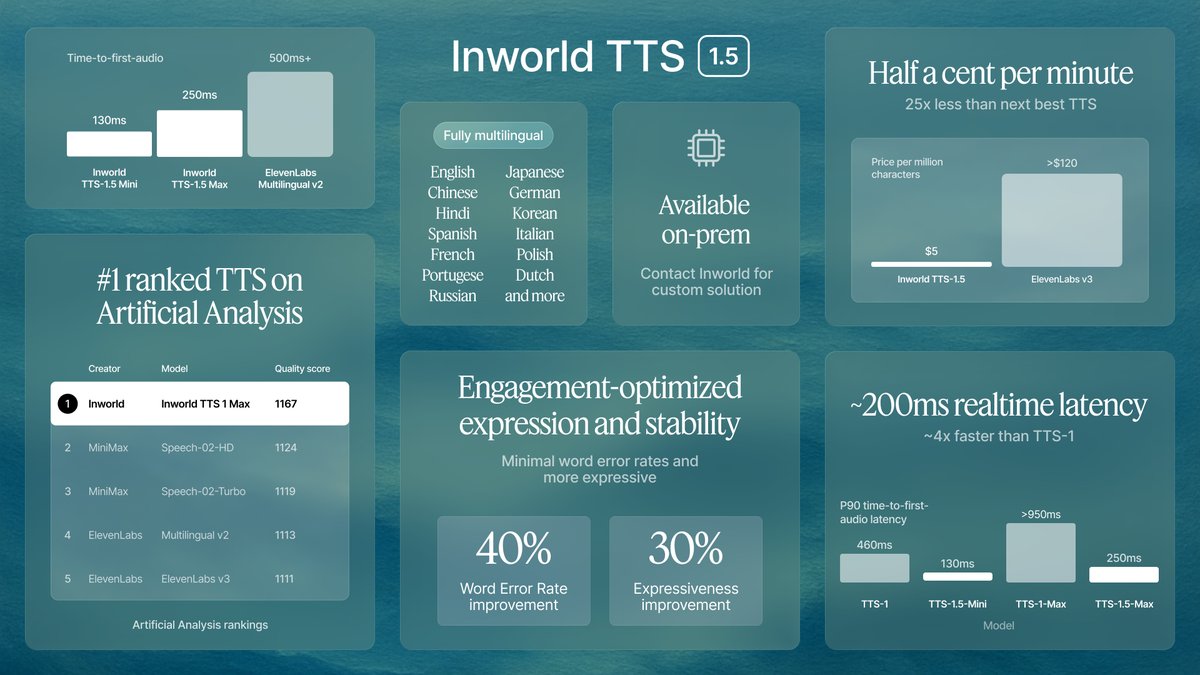

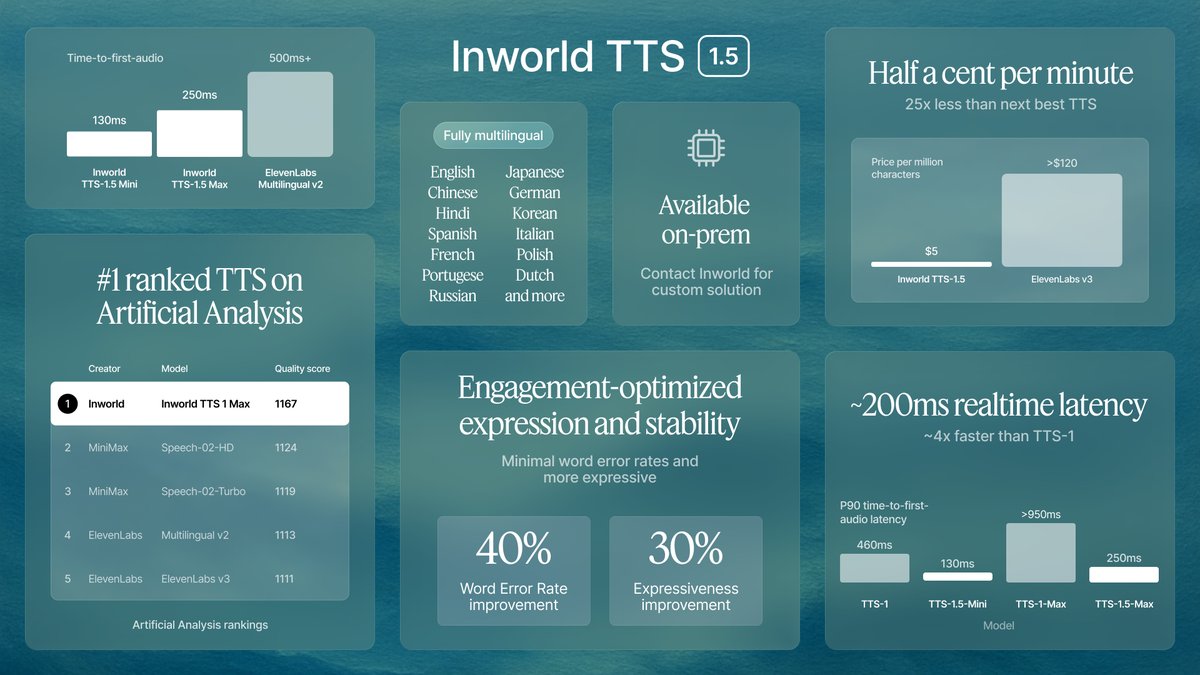

Inworld TTS 1 Max is the new leader on the Artificial Analysis Speech Arena Leaderboard, surpassing MiniMax’s Speech-02 series and OpenAI’s TTS-1 series.

The Artificial Analysis Speech Arena ranks leading Text to Speech models based on human preferences. In the arena, users compare two pieces of generated speech side by side and select their preferred output without knowing which models created them. The arena’s prompts span four real-world categories: Customer Service, Knowledge Sharing, Digital Assistants, and Entertainment.

Inworld TTS 1 Max and Inworld TTS 1 both support 12 languages, including English, Spanish, French, Korean, and Chinese, and voice cloning from 2-15 seconds of audio.

Inworld TTS 1 processes ~153 characters per second of generation time on average, while the larger model, Inworld TTS 1 Max, processes ~69 characters per second on average.

Both models also support voice tags, allowing users to add emotion, delivery style, and non-verbal sounds, such as “whispering”, “cough”, and “surprised”.

Both TTS-1 and TTS-1-Max are transformer-based, autoregressive models employing LLaMA-3.2-1B and LLaMA-3.1-8B respectively as their SpeechLM backbones.

See the leading models in the Speech Arena, and listen to sample clips below 🎧
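To put the throughput figures quoted in the post in perspective, here is a minimal sketch that turns them into a rough generation-time estimate. It assumes generation time scales linearly with character count, which the post does not claim; the dictionary keys and the function name are hypothetical labels for illustration, not identifiers from any Inworld API.

```python
# Average throughput quoted in the post, in characters per second of
# generation time. The keys are illustrative labels, not real API model IDs.
CHARS_PER_SECOND = {
    "inworld-tts-1": 153.0,
    "inworld-tts-1-max": 69.0,
}


def estimated_generation_seconds(text: str, model: str) -> float:
    """Rough estimate: character count divided by average throughput.

    Assumes linear scaling with text length, which is a simplification.
    """
    return len(text) / CHARS_PER_SECOND[model]


if __name__ == "__main__":
    prompt = "x" * 1000  # stand-in for a 1,000-character passage
    for model in CHARS_PER_SECOND:
        seconds = estimated_generation_seconds(prompt, model)
        print(f"{model}: ~{seconds:.1f} s")
```

Under that assumption, a 1,000-character passage would take about 6.5 s on the smaller model and about 14.5 s on the larger one, so the larger model would be a bit more than twice as slow.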

LLMs acing math olympiads? Cute. But BALROG is where agents fight dragons (and actual Balrogs)🐉😈 And today, Grok-4 (@grok) takes the gold 🥇 Welcome to the podium, champion!

Bananas and ML infrastructure: I've asked around about cloud workflows, and most of the feedback expressed unhappiness with cloud tooling. This prompted a discussion in @chipro's MLOps community -- why are MLOps frameworks so bad? (1/9)