deep Manifold

14.2K posts

@BetaTomorrow

mathematics Thief & Chief "Through the window of differential equations, mathematicians see the light of the real world" (Jiang Zehan)

Scientists discuss whether AI could surpass human contributions to physics by 2035 physicsworld.com/a/is-vibe-phys…

🚨 Simulation Theory: The Double Slit Experiment shows particles behaving like waves until they are observed, then snapping into particle-like behavior. What if our reality only "renders" when we're looking, just like a video game optimizing resources? This episode of The Why Files does a great job explaining the Double Slit Experiment and tying it to Simulation Theory. Are we in a sim? This could be the key to unlocking the true nature of existence! What do YOU think: real or rendered? Drop your thoughts below!

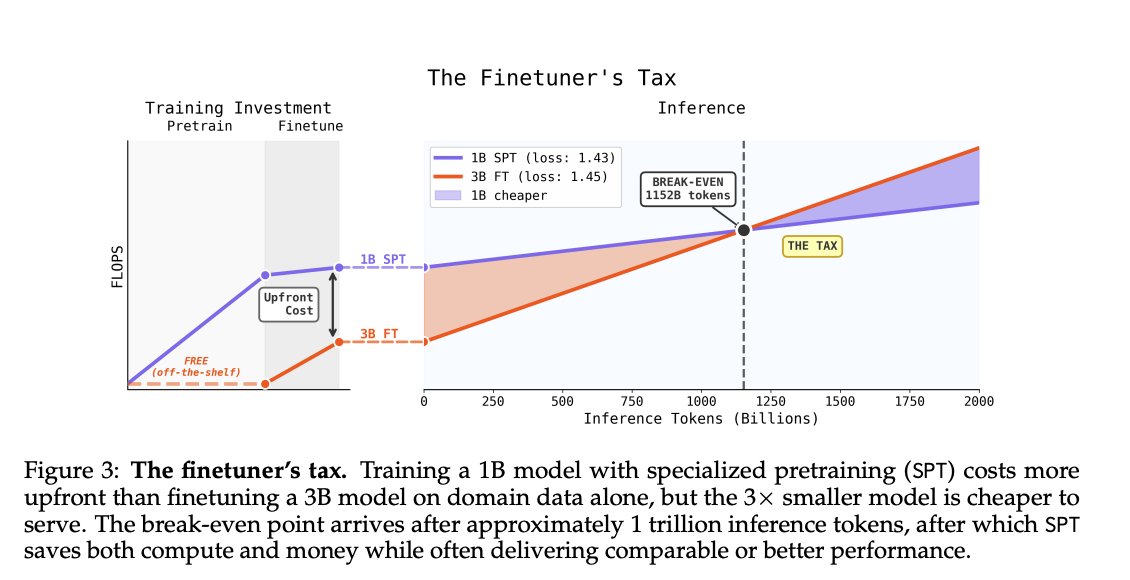

Models are typically specialized to new domains by finetuning on small, high-quality datasets. We find that repeating the same dataset 10–50× starting from pretraining leads to substantially better downstream performance, in some cases outperforming larger models. 🧵

[LG] M²RNN: Non-Linear RNNs with Matrix-Valued States for Scalable Language Modeling M Mishra, S Tan, I Stoica, J Gonzalez… [UC Berkeley & MIT-IBM Watson Lab] (2026) arxiv.org/abs/2603.14360

Stop Guessing the Market. Start Running Simulations

Most models try to predict the future. MiroFish does it differently: it simulates it.

p ≈ 320 / 1000 → EV = pW − (1 − p)L

Instead of giving you one forecast, MiroFish builds a digital world from news, policy drafts, earnings reports, and market signals, then fills it with thousands of AI agents and lets them react:

> They argue
> They set up camps
> They amplify narratives
> They change their beliefs under pressure

And after hundreds or thousands of runs, you don't get a prophecy; you get scenario frequencies.

> If one outcome happens 320 times out of 1000, then p ≈ 0.32

From there, it becomes a decision problem:

- calculate Expected Value
- compare your probability to the market's
- use Kelly to size the bet correctly
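The decision step in the post above (scenario frequency, then EV, then Kelly sizing) can be sketched in a few lines. This is a minimal illustration, not MiroFish code: the function names and the market price of 0.25 are assumptions made up for the example, and the contract is assumed to be a binary one that pays 1 if the outcome occurs. The Kelly formula used is the standard f* = p − (1 − p)/b for net odds b.

```python
def expected_value(p: float, win: float, loss: float) -> float:
    """EV = p*W - (1 - p)*L, as written in the post."""
    return p * win - (1.0 - p) * loss


def net_odds(price: float) -> float:
    """Net odds b for a binary contract bought at `price` that pays 1 on a win.

    Assumption for this sketch: profit is (1 - price) on a win and the
    stake `price` is lost otherwise, so b = (1 - price) / price.
    """
    if not 0.0 < price < 1.0:
        raise ValueError("price must be strictly between 0 and 1")
    return (1.0 - price) / price


def kelly_fraction(p: float, b: float) -> float:
    """Standard Kelly fraction f* = p - (1 - p) / b, floored at 0 (no bet)."""
    return max(0.0, p - (1.0 - p) / b)


# Scenario frequency from the post: 320 occurrences in 1000 simulated runs.
p_sim = 320 / 1000

# Hypothetical market price (not from the post), i.e. market-implied p = 0.25.
market_price = 0.25

ev = expected_value(p_sim, win=1.0 - market_price, loss=market_price)
f_star = kelly_fraction(p_sim, net_odds(market_price))

print(f"simulated p = {p_sim:.2f}, market p = {market_price:.2f}")
print(f"EV per contract = {ev:.3f}")
print(f"Kelly fraction of bankroll = {f_star:.3f}")
```

When the simulated probability is below the market's, the Kelly fraction is floored at zero, meaning no bet. In practice many people stake only a fraction of the Kelly amount, since p itself is an estimate.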

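A Chinese university student spent 10 days building MiroFish, a multi-agent prediction engine. The project shot straight onto GitHub's trending list, has now reached 23k+ stars, and secured a 30 million RMB investment.

At its core, this is not an ordinary agent demo. It is more like a digital sandbox: you feed in news, policy, and financial signals, then release thousands of AI agents with memory and behavioral logic, let them interact, argue, and evolve like a real society, and then project the outcome.

The person behind it is Guo Hangjiang (郭航江, BaiFu). According to public reports, he is a senior undergraduate, and after MiroFish went viral he received a 30 million RMB investment from Chen Tianqiao (陈天桥), founder of Shanda Group.

What can it be used for?
Trading: feed in macro news, earnings reports, and market signals, and watch how the simulated society reacts.
PR: run the public-opinion reaction first to see whether a statement will backfire once it is released.
Creative experiments: you can even take a novel's setting, simulate the characters, and see how the story develops.

Even better, the project itself supports Docker deployment. With an LLM API key, you can have it running in a few minutes.

Many people are still guessing the market by hand. Others have already started building AI swarms, running the market reaction in a digital world first and only then deciding where to put real money.

Do you think this "simulate society first, then trade the outcome" approach could be the real edge for the next generation of prediction markets?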