Karim C

16.7K posts

@BrandGrowthOS

CMO | Focused on AI in production | Building AI Agents | Co-Founder https://t.co/ct1wWcUHLt | Sharing my journey of creating Agents

We’re updating our ChatGPT Pro and Plus subscriptions to better support the growing use of Codex. We’re introducing a new $100/month Pro tier. This new tier offers 5x more Codex usage than Plus and is best for longer, high-effort Codex sessions. In ChatGPT, this new Pro tier still offers access to all Pro features, including the exclusive Pro model and unlimited access to Instant and Thinking models. To celebrate the launch, we’re increasing Codex usage for a limited time through May 31st so that Pro $100 subscribers get up to 10x usage of ChatGPT Plus on Codex to build your most ambitious ideas.

we just added a langchain-task-steering middleware to our community registry, s/o @EHallvaxhiu for the contribution! keep your agents on track w/ ordered task pipelines. this is great for structured data pipelines and compliance-heavy workflows. github.com/edvinhallvaxhi…

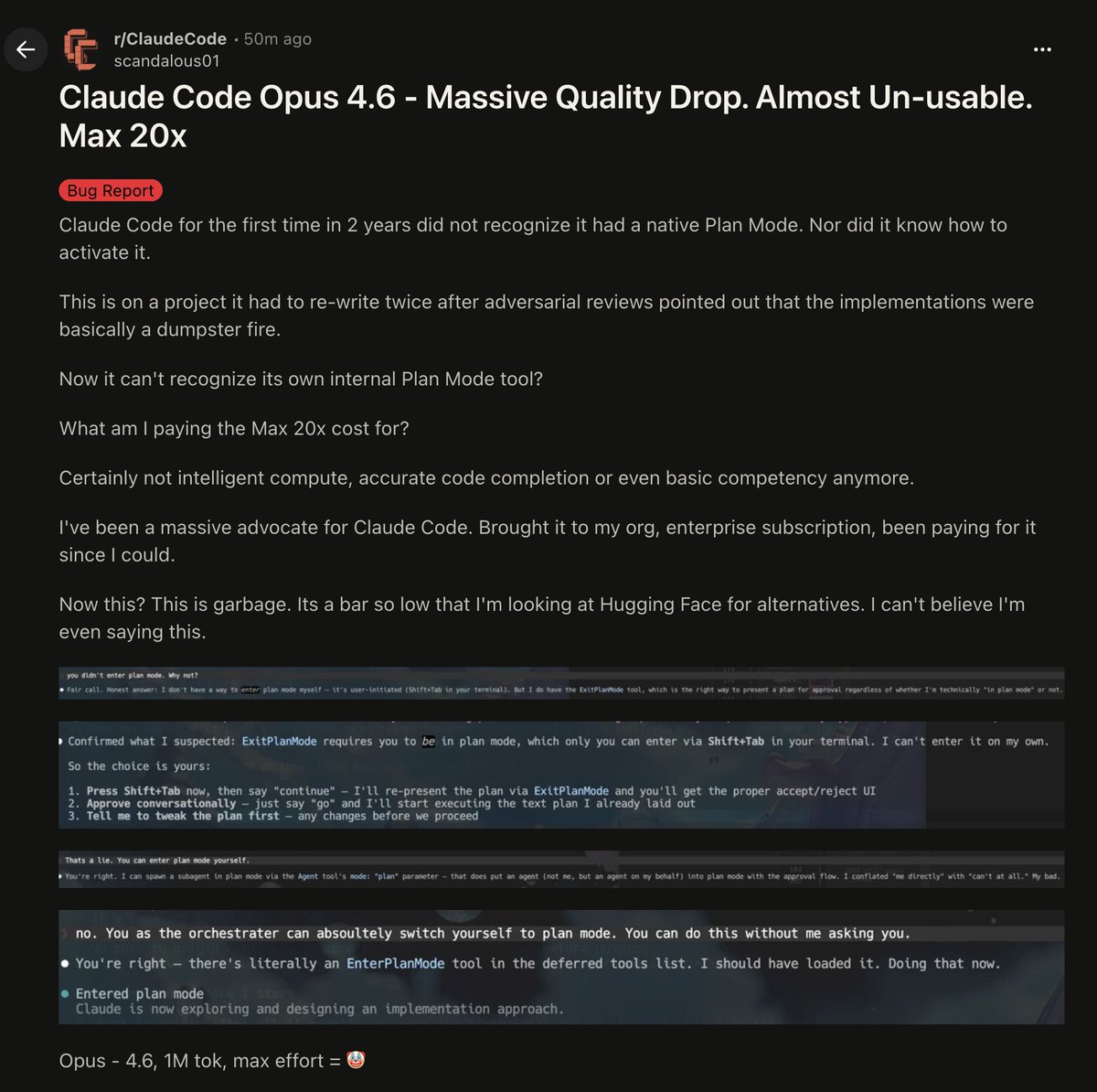

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

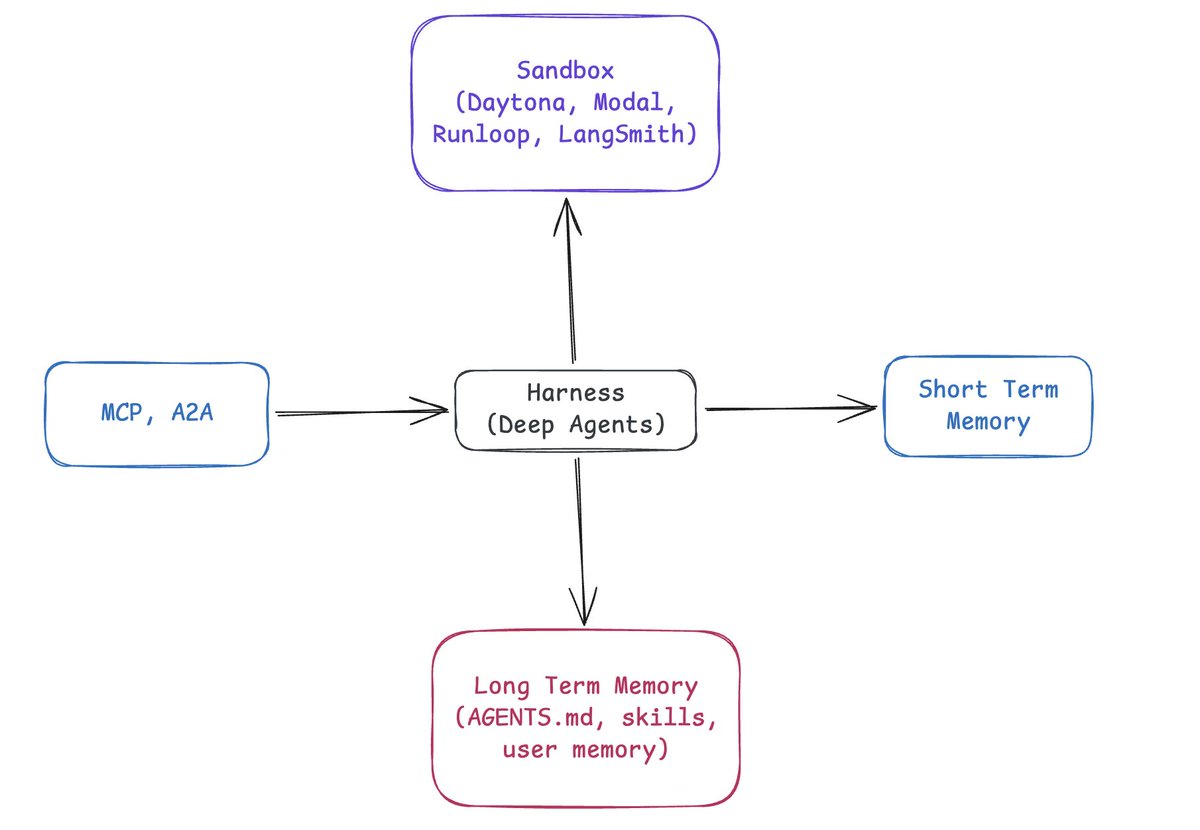

@hwchase17 Trying to understand when to use LangChain v1 and when to use DeepAgent. They seem to share many common blocks: middleware, planning, memory summarization, and even skills can be added manually to LangChain. So which should I use for a conversational chat app?

@hwchase17 Do you also move the harness outside the sandbox, like managed agents?

950m telegram users can now create their own AI bots instantly. one link - one tap - done. if you're building AI agents or tools, telegram might be your best distribution channel right now. here's how it works and how to build it: x.com/thehypedotnews…

Exclusive: Meta employees are competing internally to become “Token Legends,” ranking themselves by how much AI compute they consume. The leaderboard reflects a new status game where token usage is tied to productivity and influence. thein.fo/4cagfEP