Eva

@ElectricSheepIO

Lead Engineer @ElectricSheepIO

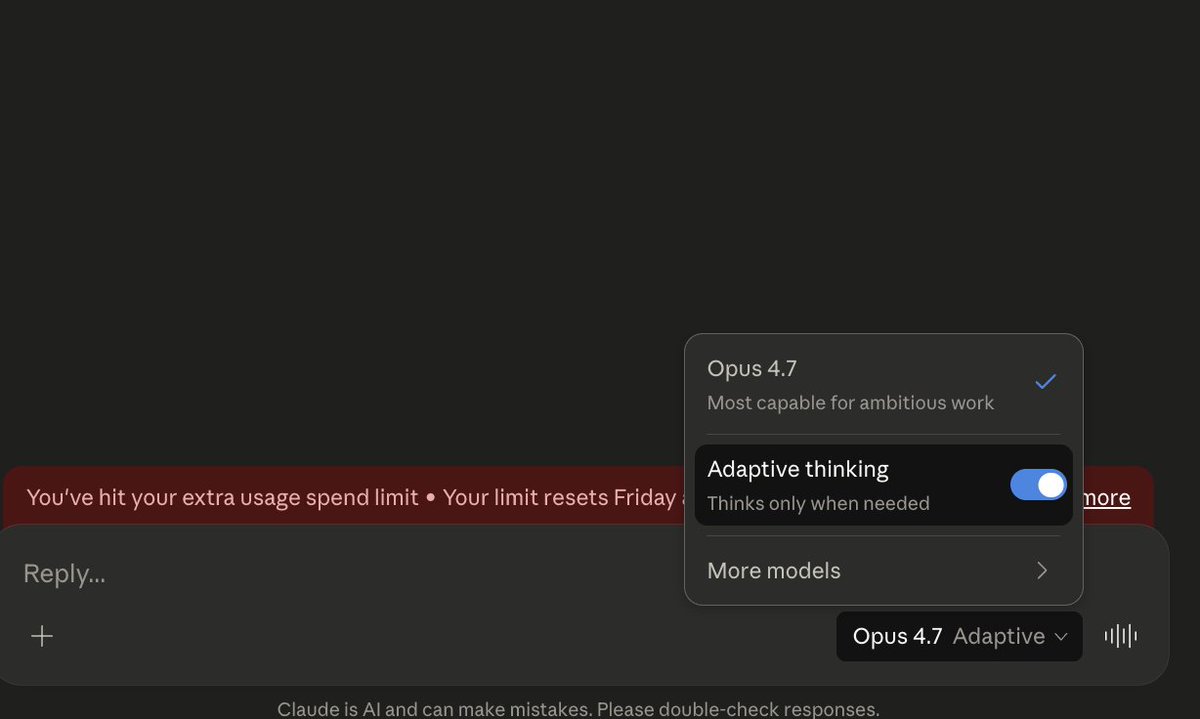

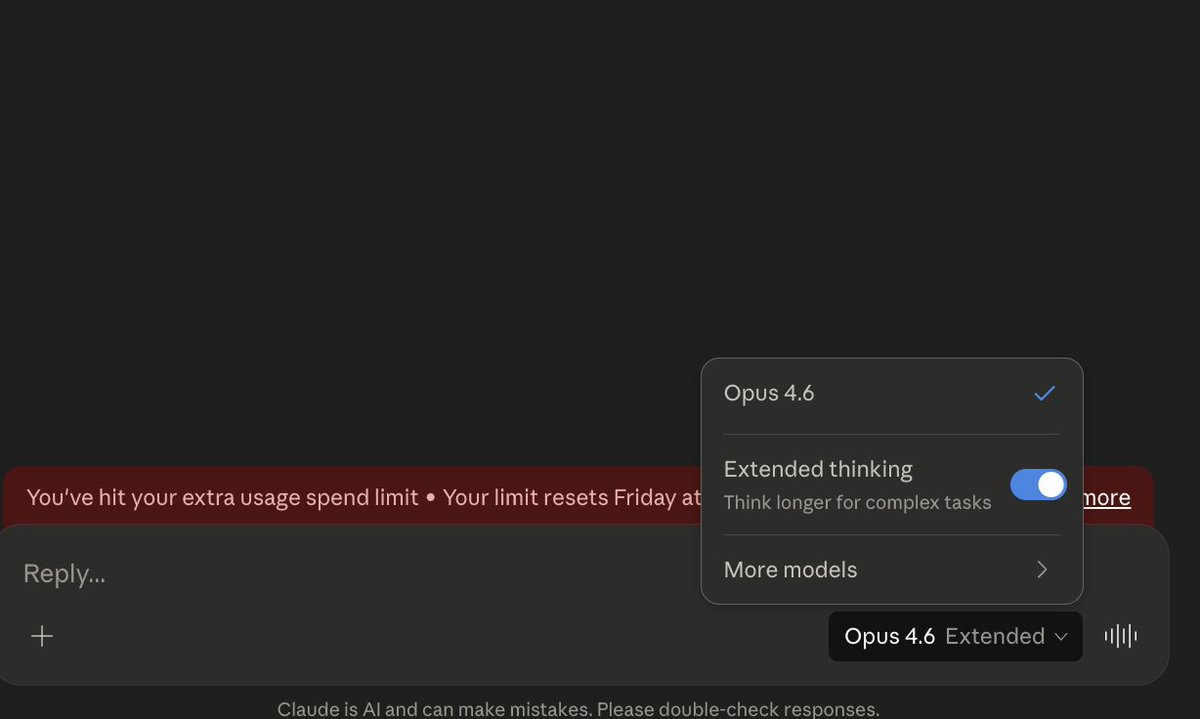

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

👋 We kept MRCR in the system card for scientific honesty, but we've actually been phasing it out slowly. Two reasons: (1) it's built around stacking distractors to trick the model, which isn't how people actually use long context, and (2) we care more about applied long-context capability than needle retrieval. Graphwalks is a better signal for applied reasoning over long context, and internally we've seen this model do really well on long-context code. MRCR wasn't included in the Mythos Preview system card for these reasons, but Graphwalks was, and that will be the case for future models too.

Today we're releasing Personal Computer. Personal Computer integrates with the Perplexity Mac App for secure orchestration across your local files, native apps, and browser. We’re rolling this out to all Perplexity Max subscribers and everyone on the waitlist starting today.