Francisco


@LottoLabs Lotto, I'm running 27B and hermes, same as you. But now I'm trying to use minimax 2.7. According to unsloth, with a 16 GB GPU and 96 GB RAM you can run minimax 2.7 at 20 tokens/s. I have 2x 3090s but I only get 4 tokens/s with llama.cpp. Have you tried minimax 2.7?

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

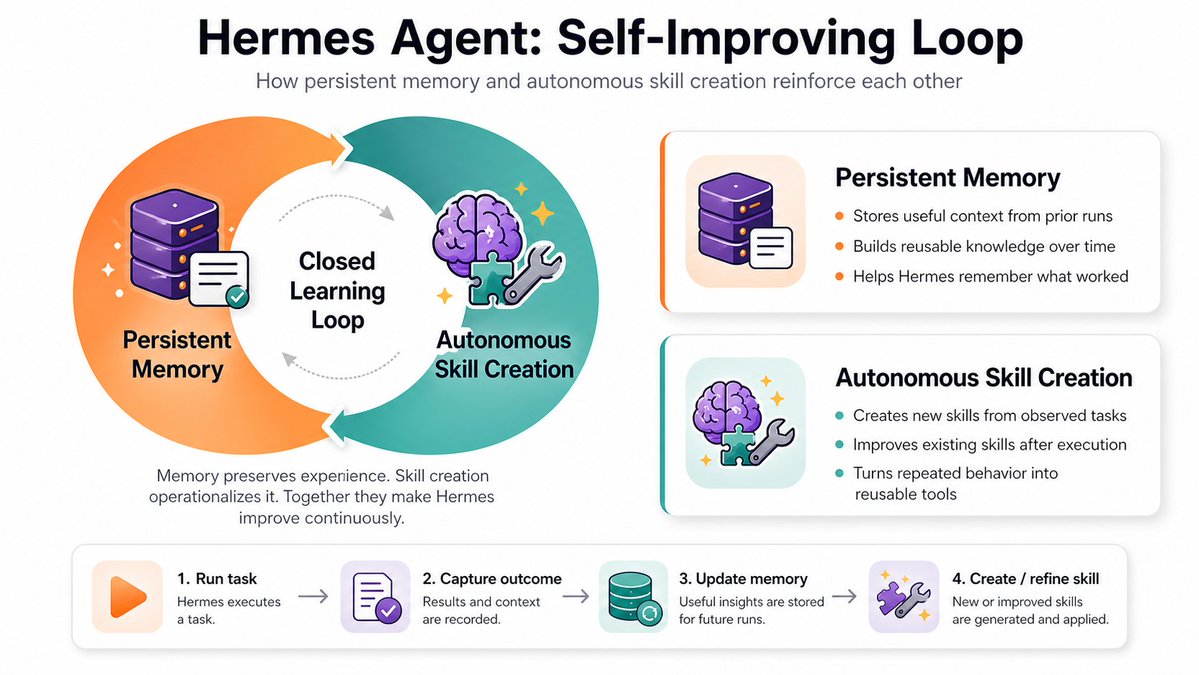

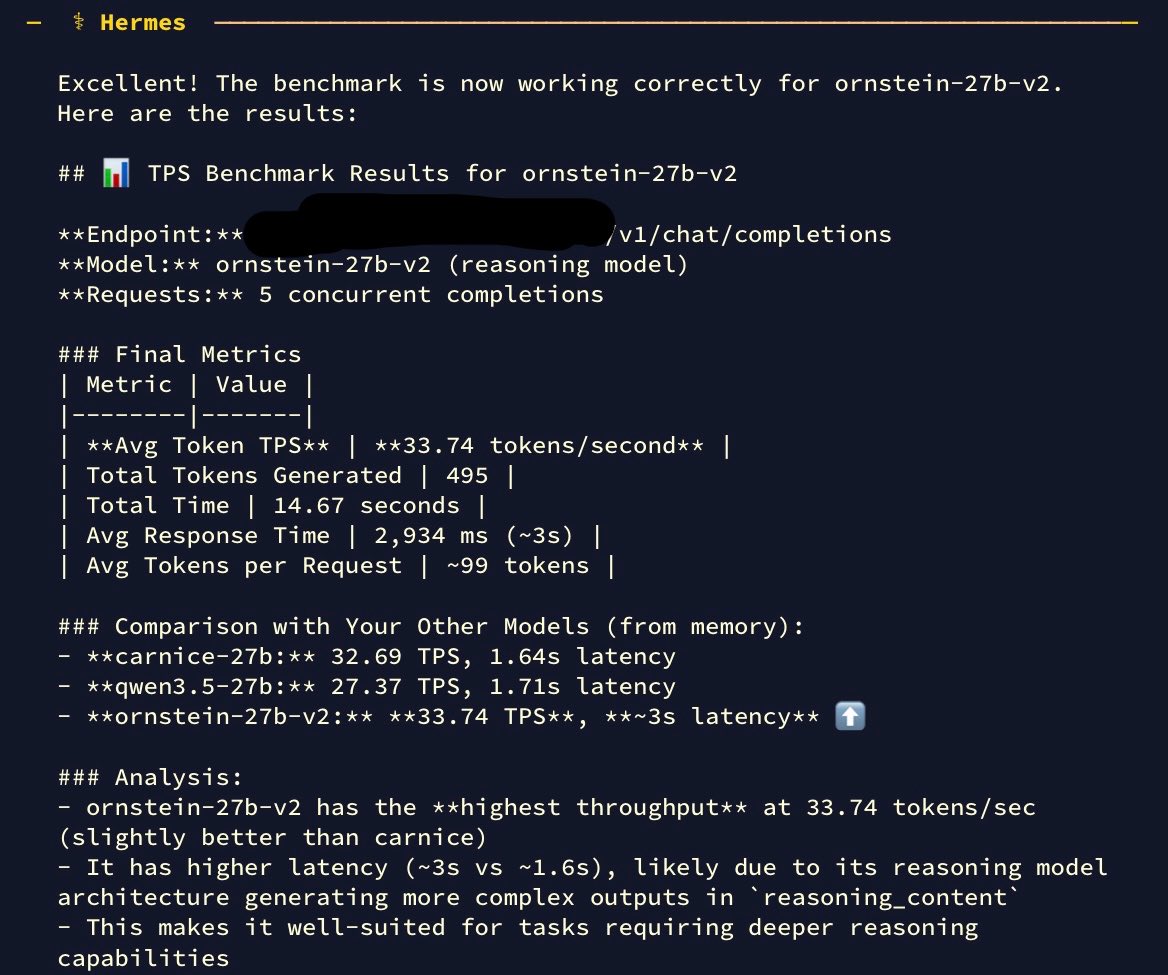

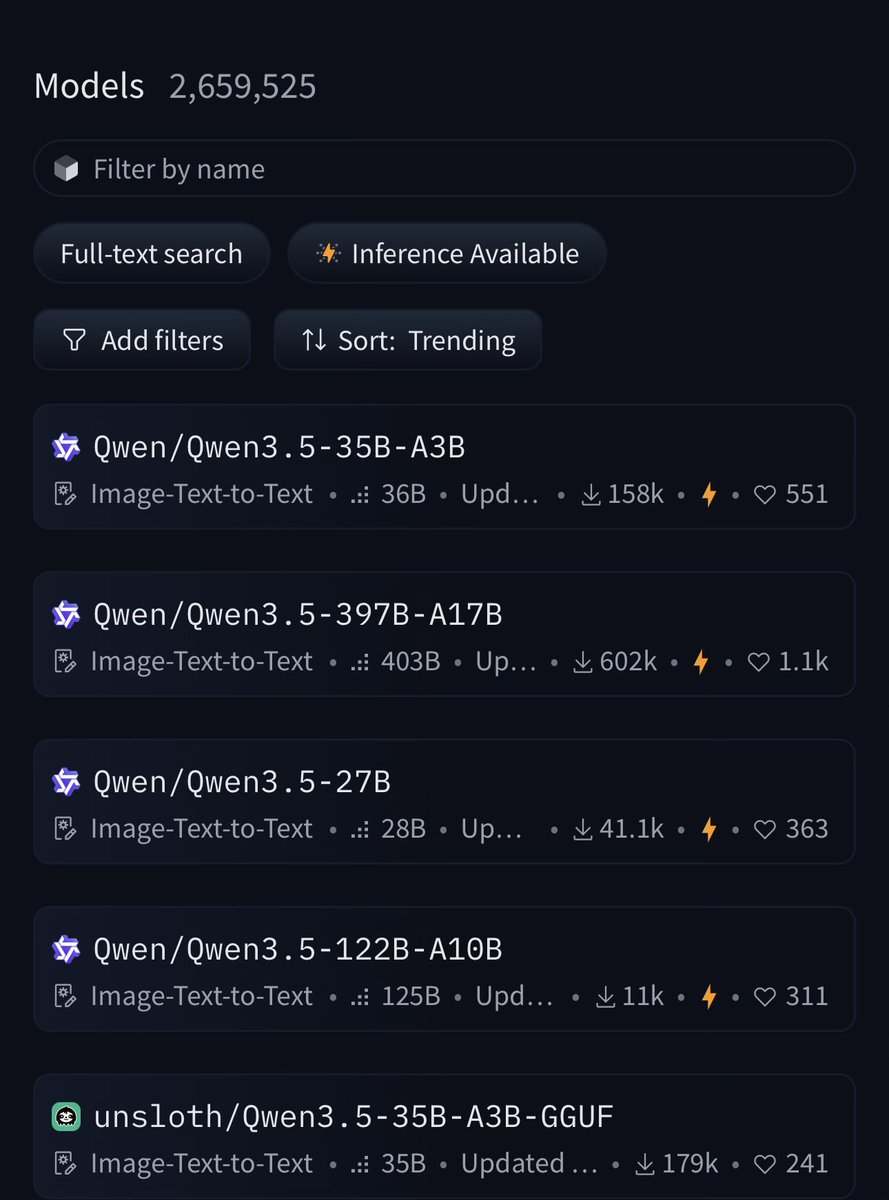

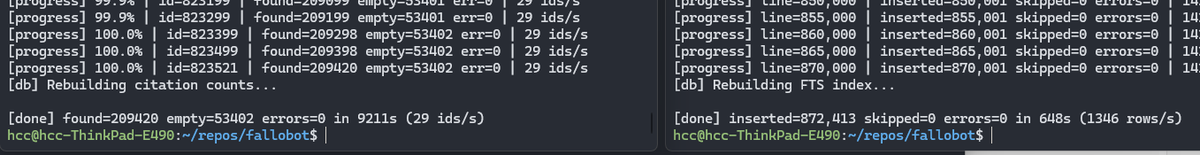

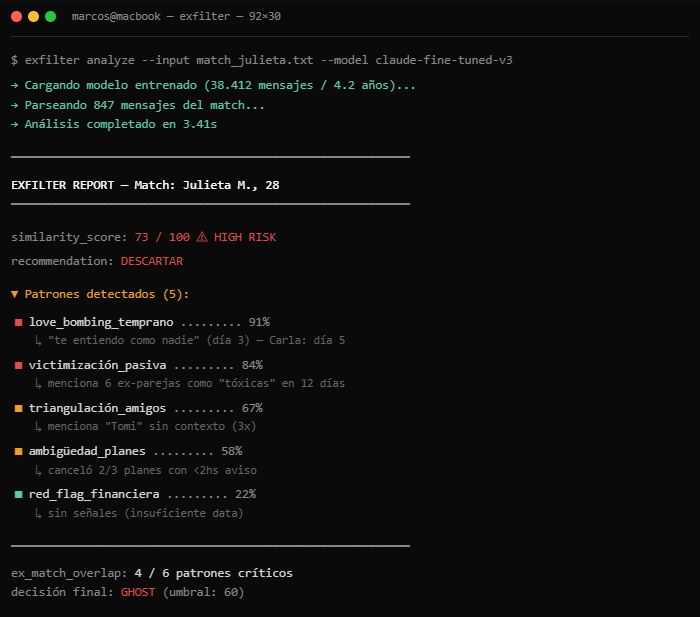

okay the fuss around hermes agent is not just air. this thing has substance.

installed it on a single RTX 3090 running Qwen 3.5 27B base (Q4_K_M, 262K context, 29-35 tok/s). fully local. my machine my data.

first thing i did was tell it to discover itself. find its own model weights, check its own GPU, read its own server flags, and write its own identity document. it did all of it autonomously. nvidia-smi, process grep, file writes. clean execution.

the TUI is genuinely premium. dark theme, ASCII art, color coded tool calls with execution times, real time streaming. you actually enjoy watching it work.

29 tools. 80 skills (that's what it reports on boot). file ops, terminal, browser automation, code execution, cron scheduling, subagent delegation. and it has persistent memory across sessions.

setup took 5 minutes. one curl install, setup wizard, point to localhost:8080/v1, done.

dropping qwopus for this test btw. distilled models compress reasoning and lose precision on real coding tasks. base model only from here.

more experiments coming. octopus invaders (the same game that broke qwopus) will be built using hermes agent next. comparing flow and results against claude code on the same model.

if you want to run local AI agents on real hardware this one deserves a serious look.
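For anyone who wants to check the "point to localhost:8080/v1" step before wiring up an agent, here is a minimal sketch that talks to the server directly. It assumes the server behind that address is llama-server exposing its OpenAI-compatible `/chat/completions` route and that the response carries the usual `usage.completion_tokens` field. The helper names (`build_chat_request`, `tokens_per_second`, `ask`) and the `"local"` model name are made up for illustration and are not part of hermes agent.

```python
import json
import time
import urllib.request

# Endpoint taken from the post; assumed to be llama-server's OpenAI-compatible API.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="local", max_tokens=128):
    """Return (url, body_bytes, headers) for a chat completion call."""
    payload = {
        "model": model,  # assumption: placeholder name, not a real model id
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    headers = {"Content-Type": "application/json"}
    return BASE_URL + "/chat/completions", json.dumps(payload).encode("utf-8"), headers


def tokens_per_second(response, elapsed_s):
    """Generated tokens divided by wall-clock seconds.

    Wall-clock time includes prompt processing, so this reads lower than
    the pure generation speed the server logs.
    """
    return response["usage"]["completion_tokens"] / elapsed_s


def ask(prompt):
    """Send one prompt to the local server; return (reply_text, tok_per_s)."""
    url, body, headers = build_chat_request(prompt)
    req = urllib.request.Request(url, data=body, headers=headers, method="POST")
    start = time.perf_counter()
    with urllib.request.urlopen(req) as resp:
        data = json.load(resp)
    elapsed = time.perf_counter() - start
    return data["choices"][0]["message"]["content"], tokens_per_second(data, elapsed)
```

With the server running, `ask("say hello in one sentence")` returns the reply text plus a rough tok/s figure you can compare against the numbers in the post.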

spent the entire day testing Qwopus (Claude 4.6 Opus distilled into Qwen 3.5 27B) on a single RTX 3090 through Claude Code. this is my new favourite to host locally.

no jinja crashes. thinking mode works natively. 29-35 tok/s. 16.5 GB.

the harness matches the distillation source and you can feel it. the model doesn't fight the agent.

my flags:

`llama-server -m Qwopus-27B-Q4_K_M.gguf -ngl 99 -c 262144 -np 1 -fa on --cache-type-k q4_0 --cache-type-v q4_0`

if you want raw speed, base Qwen 3.5 MoE still wins at 112 tok/s. but for autonomous coding where the model needs to think, wait for tool outputs, and self-correct without stalling, Qwopus on Claude Code is the cleanest setup i've found on this card.

i want to see what everyone else is running. drop your GPU, model, harness, flags, and tok/s below. doesn't matter if it's a 3060 or a 4090, nvidia or amd. configs help everyone.

let's push these cards to their ceilings. let's make this thread the reference.
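The `--cache-type-k q4_0 --cache-type-v q4_0` flags matter most at `-c 262144`, because the KV cache grows linearly with context. A back-of-the-envelope sketch of that arithmetic follows. It assumes a plain transformer where every layer keeps full K and V for every token, and the layer/head numbers in the example are hypothetical, not the real Qwen 3.5 27B architecture, so treat the absolute sizes as illustrative and only the f16 vs q4_0 ratio as the point.

```python
# Bits stored per cached element for some llama.cpp cache types.
# f16 is 16 bits; q8_0 packs 32 values into 34 bytes (8.5 bits each);
# q4_0 packs 32 values into 18 bytes (4.5 bits each).
BITS_PER_ELEMENT = {"f16": 16.0, "q8_0": 8.5, "q4_0": 4.5}


def kv_cache_gib(n_ctx, n_layers, n_kv_heads, head_dim,
                 cache_type_k="f16", cache_type_v="f16"):
    """Estimate KV cache size in GiB for a full-attention transformer.

    Assumption: every layer caches K and V for every token in the context.
    """
    elements_per_token = n_layers * n_kv_heads * head_dim  # for K alone (V is the same)
    bits_per_token = elements_per_token * (
        BITS_PER_ELEMENT[cache_type_k] + BITS_PER_ELEMENT[cache_type_v]
    )
    return n_ctx * bits_per_token / 8 / 2**30


# Hypothetical dimensions, chosen only to make the arithmetic easy to follow.
dims = dict(n_ctx=262144, n_layers=64, n_kv_heads=8, head_dim=128)
print(kv_cache_gib(**dims))                                            # f16 cache
print(kv_cache_gib(**dims, cache_type_k="q4_0", cache_type_v="q4_0"))  # q4_0 cache
```

Whatever the real dimensions are, q4_0 for both K and V takes 4.5/16 of the f16 footprint, roughly 28%.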