FundaAI

1.6K posts

@FundaAI

FundaAI provides AI Invest OS, including AI Agents, equity research reports, and research data. https://t.co/0xRZegmuoR

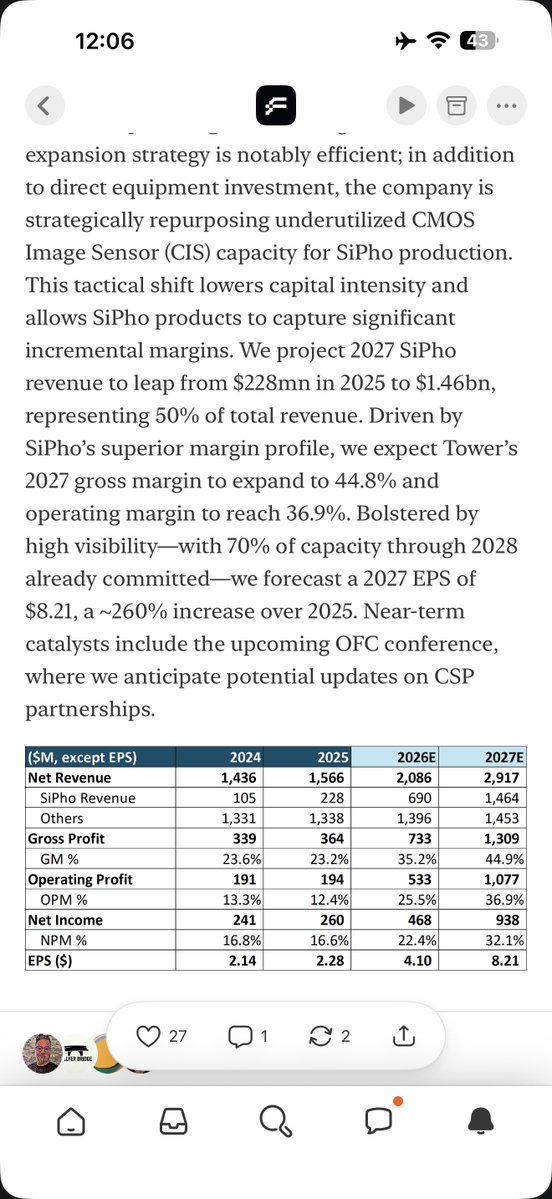

Deep| $TSEM: SiPho Capacity Inflection Drives Multi-Fold Growth Cycle

AI data center compute clusters are scaling from thousands of GPUs to tens or even hundreds of thousands of nodes. At this magnitude, traditional copper interconnects are reaching severe physical limits; once transmission rates hit 800G and above, reach shortens dramatically while power consumption climbs steeply. To bypass these constraints, Silicon Photonics (SiPho) is becoming the essential backbone for AI networking.

As of 4Q25, Tower Semiconductor’s Silicon Photonics business has emerged as the company’s primary growth engine. Revenue more than doubled from $106mn in 2024 to $228mn in 2025, with the annualized revenue run rate exceeding $360mn by the end of 2025. As the industry transitions from 400G/800G toward 1.6T, Tower has positioned itself as the lead supplier of 1.6T Silicon Photonics wafers.

We believe Tower is currently the premier SiPho PIC (Photonic Integrated Circuit) foundry with a distinct competitive lead. Among major competitors, Malaysia’s Silterra lacks significant expansion capacity, while SiPho offerings from UMC, GlobalFoundries (via the AMF acquisition), and STM still trail Tower by a wide margin.

The per-GPU TDP (Thermal Design Power) in AI server racks, such as those built on the Nvidia GB200 series, has jumped from 700W in the Hopper generation to over 1,200W, necessitating the adoption of liquid cooling and more efficient optical interconnects. Within these environments, SiPho enables higher speeds while maintaining system scalability under strict thermal limits.

On February 5, NVIDIA and Tower Semiconductor established a strategic partnership focused on high-speed optical interconnects for AI data centers. Tower will leverage its SiPho process platform to manufacture 1.6T-class SiPho optical engines and modules for NVIDIA’s next-generation networking architecture, optimized for NVIDIA’s specific protocols. This collaboration aims to resolve bandwidth and energy efficiency bottlenecks in the scale-out phase of massive GPU clusters.

Separately, we have highlighted the rapid progression of Optical Scale-Up, with volume production expected to commence in 2027. Delivering over 10x the optical bandwidth of traditional scale-out, Optical Scale-Up, whether implemented via pluggable modules, NPO, or CPO, will significantly drive demand for SiPho PICs. Alibaba’s UPN512 (a 512-xPU optical scale-up super-node) validates the migration of optics from scale-out networking into the scale-up core domain, as LPO/NPO and other near-packaged solutions achieve system-level economics. Consequently, optics is evolving from a mere bandwidth expansion tool into a foundational infrastructure component for scale-up architectures.

For SiPho, this shift directly expands the long-term TAM. Scale-up environments demand higher port densities, extreme bandwidth, and stricter power budgets, requirements natively addressed by high-integration SiPho PICs and linear drive solutions. SiPho’s penetration is moving beyond “incremental replacement” to potentially becoming the default interconnect standard for next-generation AI super-nodes.

Detailed Report open.substack.com/pub/fundaai/p/…
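The revenue figures quoted in this post can be cross-checked with simple arithmetic. The sketch below is only an illustration: it assumes "annualized run rate" means the exit quarter multiplied by four, which the post does not state, and all variable names are hypothetical.

```python
# Figures quoted in the post, in $mn.
REV_2024_MN = 106.0
REV_2025_MN = 228.0
EXIT_RUN_RATE_MN = 360.0  # "exceeding $360mn", treated here as a floor

# Year-over-year growth implied by the two annual figures.
yoy_growth = REV_2025_MN / REV_2024_MN - 1.0

# Assumption: run rate = exit-quarter revenue x 4.
implied_exit_quarter_mn = EXIT_RUN_RATE_MN / 4.0

# Average quarter in 2025, for comparison with the exit quarter.
avg_quarter_2025_mn = REV_2025_MN / 4.0
exit_vs_average = implied_exit_quarter_mn / avg_quarter_2025_mn

print(f"YoY growth 2024 -> 2025: {yoy_growth:.0%}")
print(f"Implied exit quarter: at least ${implied_exit_quarter_mn:.0f}mn")
print(f"Exit quarter vs 2025 average quarter: {exit_vs_average:.2f}x")
```

On these inputs, the exit quarter would sit well above the 2025 quarterly average, which is consistent with the post's description of an accelerating ramp.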

I cannot believe they put Serenity on the same level as themselves and SemiAnalysis

Deep|OFC 2026 Preview: 400G per Lane, The Next Major Inflection in Optical Interconnects

We have been steadfast supporters of Optics for a year and a half, publishing in-depth Optics reports almost every month. Regarding this OFC and future Optics technology trends, we have a lot to discuss, especially today, when there is so much debate and speculation.

The shift is not just about doubling speed from 200G → 400G per lane. It fundamentally reshapes the technology stack: modulation formats, photonic materials, and manufacturing capacity across the optical supply chain.

At 200G, IMDD dominates virtually all data-center interconnects due to its simplicity, low power, and cost advantages. But at 400G/lane, physics begins to push IMDD toward very high baud rates (~226 GBaud), making signal integrity increasingly difficult beyond short-reach links.

This creates a new architectural space: “Coherent Lite.” By adopting simplified coherent modulation (e.g., SP-16QAM) while avoiding the full complexity of telecom coherent systems, Coherent Lite targets the emerging 1–20 km campus / AI cluster interconnect regime. In this middle distance range, coherent-lite architectures can achieve higher spectral efficiency with lower baud rates, improving signal robustness while keeping system cost manageable. This segment barely existed in the 200G era but could become a meaningful new optical market as AI clusters scale.

The shift to 400G also changes which photonic platforms matter.
• InP EML remains the mature solution for IMDD
• InP PIC becomes increasingly relevant for integrated coherent transmitters
• SiPh offers scalable integration but faces performance limits for very high-speed modulation
• TFLN emerges as a promising high-bandwidth modulator platform

One underappreciated implication: InP area demand explodes. As architectures move from single-channel IMDD lasers to large InP PICs integrating multiple active components, the InP die area per optical channel can increase by two orders of magnitude, dramatically increasing wafer demand. In other words, a bandwidth upgrade at the system level may translate into a structural demand shock for the InP supply chain.

As AI infrastructure scales toward ever-larger clusters, optical interconnect architecture, and the photonic materials behind it, may become one of the defining bottlenecks of the next compute cycle.

$LITE $COHR $AAOI $TSEM $AXTI

Detailed Report fundaai.substack.com/p/deepofc-2026…
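The baud-rate argument in this post comes down to bits per symbol. The sketch below is a rough illustration under stated assumptions, not the report's model: it assumes the IMDD link uses PAM4 (2 bits per symbol), that SP-16QAM means single-polarization 16QAM (4 bits per symbol), and it back-solves a 13% coding overhead so that the PAM4 case lands on the post's ~226 GBaud figure. The function name and the overhead value are hypothetical.

```python
def symbol_rate_gbaud(net_gbps: float, bits_per_symbol: int, overhead: float) -> float:
    """Symbol rate (GBaud) needed to carry net_gbps per lane with coding overhead."""
    return net_gbps * (1.0 + overhead) / bits_per_symbol

NET_GBPS_PER_LANE = 400.0
# Assumption: overhead chosen so that PAM4 reproduces the post's ~226 GBaud.
ASSUMED_OVERHEAD = 0.13

# Assumption: IMDD at 400G/lane uses PAM4, i.e. 2 bits per symbol.
pam4_gbaud = symbol_rate_gbaud(NET_GBPS_PER_LANE, 2, ASSUMED_OVERHEAD)

# Assumption: SP-16QAM is single-polarization 16QAM, i.e. 4 bits per symbol.
qam16_gbaud = symbol_rate_gbaud(NET_GBPS_PER_LANE, 4, ASSUMED_OVERHEAD)

print(f"PAM4 (IMDD):   {pam4_gbaud:.0f} GBaud")
print(f"SP-16QAM:      {qam16_gbaud:.0f} GBaud")
print(f"Baud-rate ratio: {pam4_gbaud / qam16_gbaud:.1f}x")
```

Under these assumptions the coherent-lite format needs half the symbol rate of PAM4 for the same lane throughput, which is the sense in which it trades modulation complexity for lower baud rates.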

60-Day Median Return (Long), Top 10:
• Global Tech Research (33 calls): +20.4%
• Citrini Research (19): +14.9%
• FundaAI (158): +11.2%
• SemiAnalysis (45): +8.2%
• BEP Research (52): +8.0%
• Dick Capital (44): +7.6%
• Irrational Analysis (41): +7.4%
• Fabricated Knowledge (91): +6.0%
• Altay Capital (55): +5.5%
• TMT Breakout (529): +5.1%

The volume question. Among authors making 100+ long calls:
• FundaAI (158): +11.2% median, 70% win rate
• TMT Breakout (529): +5.1%, 62%
• TicToc Trading (480): +0.1%, 50%
• Quality Stocks (300): +0.1%, 51%
• Swiss Transparent (141): -0.1%, 50%

Most high-volume authors converge toward market returns. FundaAI seems to be an outlier.

Weekly|Unwiiiiiiiiiind, OpenAI/ $ORCL, GTC preview, $TSEM deep dive, $MDB/ $CRWD/ $ASTS earnings, CPO debate

First of all, we hope our readers and everyone in the Middle East are safe and sound.

We have never had a dull week during the MS TMT Conference, and this year is even more so, given the Citrini article and rising geopolitical risk. Unwind is the main theme of the market, from IGV vs SOXX to Korea/Japan vs Hong Kong, from value vs growth to Mag7 vs small- and mid-caps. We would not claim to be geniuses at timing the market, but this has been in the cards for a while, given the extreme positioning. On the other hand, AI development remains as strong as ever, if not stronger, as evidenced by Anthropic almost doubling ARR in just two months. We believe the key trends within AI remain intact, and flows should return to them as the dust settles. However, stay frosty in the near term.

We may carry out a system upgrade next week in preparation for our major version update at the end of the month. If we confirm that it is necessary, we may pause Substack subscriptions for one week, during which no one’s credit card will be charged. During this period, we may reduce our content output, but we will ensure that essential preview content continues to be published.

This week’s reports

GTC preview - The annual party returns. We believe this round marks a strategic shift for $NVDA, from continuing to introduce faster GPUs with higher FLOPS to focusing on building a comprehensive AI factory. We discuss six areas of focus: Vera-Rubin for agentic AI, Rubin CPX for high-throughput inference, Groq LPU for low-latency inference, Feynman, CPO/photonics, and an AI-native storage hierarchy. fundaai.substack.com/p/deepgtc-2026…

TSEM deep dive - Our latest coverage focuses on another key player within the optics supply chain. Specifically, TSEM is transforming into a silicon photonics (SiPho) specialty foundry, poised to benefit massively from the rising adoption of this technology. In this report, we detail the technology architecture, capacity expansion, and competitive landscape, along with financial estimates. fundaai.substack.com/p/deeptsem-sip…

MDB and CRWD review - Both names were seen as relatively safer from AI disruption during the sell-off (less so for CRWD lately). MDB disappointed bulls with an Atlas expectation miss, which we believe reflects the GTM strategy change under the new CEO. On the other hand, CRWD delivered solid results, alleviating concerns. In general, both names are still in the more expensive camp, which remains a key overhang in this tape. fundaai.substack.com/p/deeptsem-sip… fundaai.substack.com/p/reviewcrwd-f… fundaai.substack.com/p/previewcrwd-…

ASTS review - The first-ever fiscal report featuring substantial revenue. This marks the company’s official transition from a pure concept stock to an early-stage commercial company with tangible revenue, despite remaining in a significant loss phase. As the SpaceX IPO approaches, we believe the broader space sector will see growing investor interest. fundaai.substack.com/p/reviewasts-4…

Detailed Report open.substack.com/pub/fundaai/p/…

$AAOI I have been seeing a lot of bearish takes on this name recently. I am still long.

The stock is down 26% from its all-time high of $127. The bear case has gotten louder. Here is why I think the bears are missing the asymmetry.

At $94, AAOI trades at roughly 8x FY2026 consensus revenue of $856M. Management guided above $1B, which brings that multiple down to around 7x. LITE trades at approximately 14.5x forward revenue. COHR trades at roughly 7x. AAOI is growing faster than both.

Now look at what half-execution on 2027 targets actually means: Management said monthly transceiver revenue reaches $378M by mid-2027. That annualizes to $4.5B. At current prices you are paying roughly 1.6x that run rate. If they deliver half of that, you are still paying only about 3x revenue for a company growing triple digits with an in-house InP fab. That does not stay unpriced.

The Q4 results were real. Revenue of $134M, up 33.9% year over year, beating estimates. EPS of -$0.01 vs consensus of -$0.12. A 1.6T volume order secured from a major hyperscale customer, with shipments starting Q3 2026. Three hyperscale customers are each expected to exceed 10% of FY2026 revenue. The single-customer concentration risk the bears have cited for years is structurally gone.

The company is investing $300M to triple InP laser capacity in Texas by mid-2027. They are presenting at OFC on March 17th. Jensen speaks Monday.

The bear case requires management to miss badly on every single target simultaneously. The bull case only requires them to be half right.

Full breakdown in the report. Link in bio.
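The multiples in this post can be sanity-checked with a few lines of arithmetic. This is a rough sketch, not the author's model: the post gives no share count, so the implied company value is back-solved from the quoted "roughly 8x" of $856M consensus revenue, which is an assumption, and all variable names are hypothetical.

```python
# Figures quoted in the post.
PRICE = 94.0
ALL_TIME_HIGH = 127.0
CONSENSUS_FY26_REV = 856e6
GUIDED_FY26_REV = 1.0e9          # "guided above $1B", treated as a floor
MONTHLY_TARGET_MID_2027 = 378e6

# Assumption: company value back-solved from "roughly 8x" consensus revenue.
implied_value = 8.0 * CONSENSUS_FY26_REV

drawdown = 1.0 - PRICE / ALL_TIME_HIGH
multiple_on_guidance = implied_value / GUIDED_FY26_REV

# Annualize the monthly target, then look at full and half execution.
run_rate = MONTHLY_TARGET_MID_2027 * 12
multiple_full = implied_value / run_rate
multiple_half = implied_value / (run_rate / 2)

print(f"Drawdown from high: {drawdown:.0%}")
print(f"Multiple on guided revenue: {multiple_on_guidance:.1f}x")
print(f"Annualized mid-2027 run rate: ${run_rate / 1e9:.2f}B")
print(f"Multiple at full execution: {multiple_full:.2f}x")
print(f"Multiple at half execution: {multiple_half:.2f}x")
```

On these inputs the full-execution multiple comes out near 1.5x and the half-execution multiple close to 3x, so the conclusion is sensitive to the company value assumed.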

Preview| $APP & $U 4Q25: 2026 Is the Year of Gaming

We began covering APP in 4Q24, focusing on AppLovin’s e-commerce opportunity and actively addressing short reports in 1Q25. fundaai.substack.com/p/previewapp-1… fundaai.substack.com/p/longapp-furt… fundaai.substack.com/p/deepapp-how-…

In 2Q25, we were cautious on revenue and e-commerce. Shares initially dropped 10% after hours, but quickly rebounded on Adam’s positive e-commerce outlook. fundaai.substack.com/p/previewapp-2…

In 3Q25, we remained cautious on e-commerce. fundaai.substack.com/p/previewappun…

During Black Friday, we published a report highlighting AppLovin’s significant improvement. However, in our Substack Chat posts throughout the following month, we consistently noted that while e-commerce ads remained weak, gaming ads stayed very strong. fundaai.substack.com/p/deepapp-blac…

We swiftly refuted Capital Watch’s recent short attack. As of yesterday, most claims have been retracted.

We also covered Unity concurrently. In 3Q25 earnings, we noted that Unity experienced an SDK bug that had a short-term impact, which was quickly fixed and led to a reacceleration. We continue to see accelerating growth in 4Q25 and 1Q26.

In our early December outlook on AI for this year, we presented an interesting perspective: 2026 is the Year of Enterprise for AI. Since that report, the narrative has been dominated by OpenAI and Anthropic entering the enterprise space and displacing SaaS. Today, we want to discuss a new trend: The Year of Gaming.

Detailed Report open.substack.com/pub/fundaai/p/…