
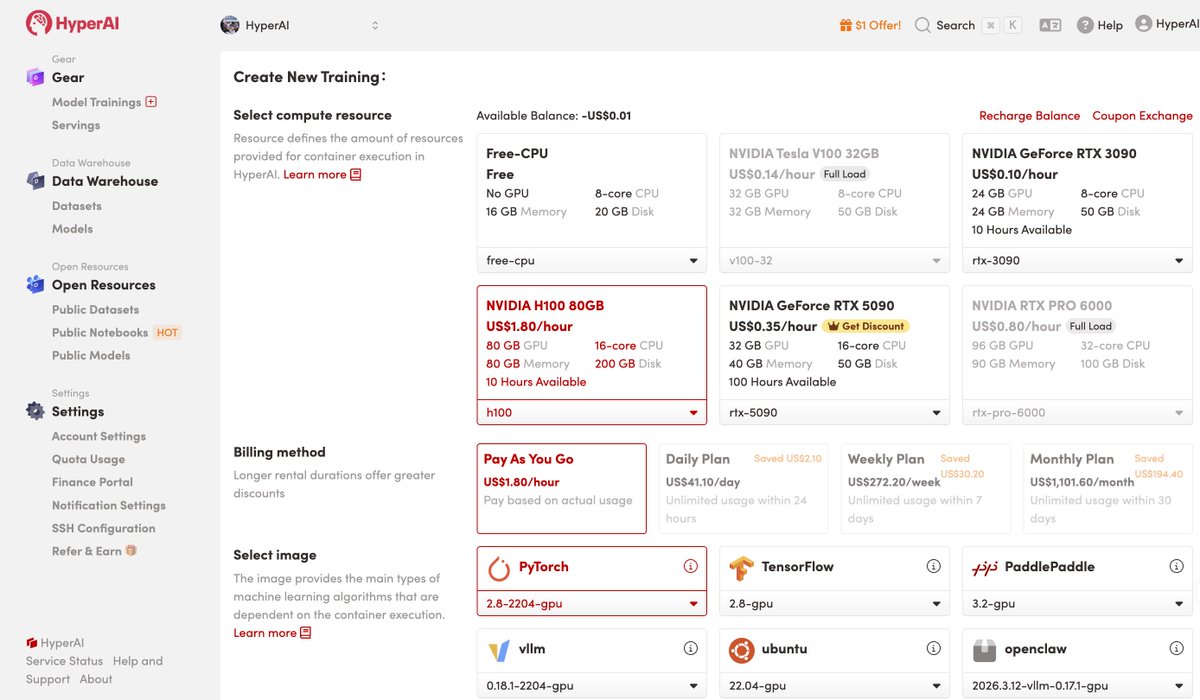

🚀 Join HyperAI Early Access

🎁 Earn up to $200 in Credits

🧐 Whether you’re a researcher, developer, or startup team, we’d love to hear from you.

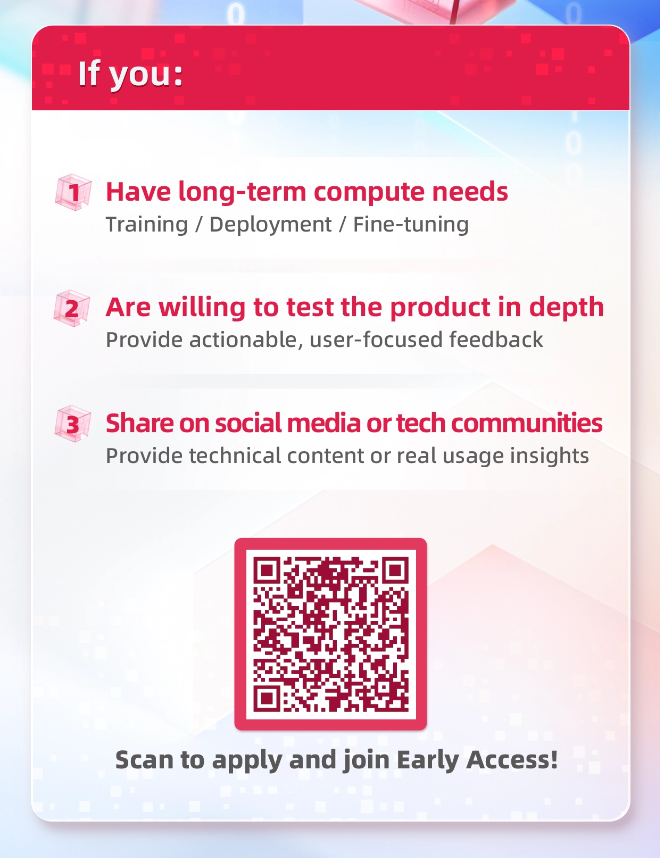

If you:

• Have long-term compute needs (training / deployment / fine-tuning)

• Are willing to test the platform and give actionable feedback

• Are willing to share platform-based technical content on social media or in dev communities

Then help us build a better compute platform.

👉 Join the beta via the link: go.hyper.ai/iAekn

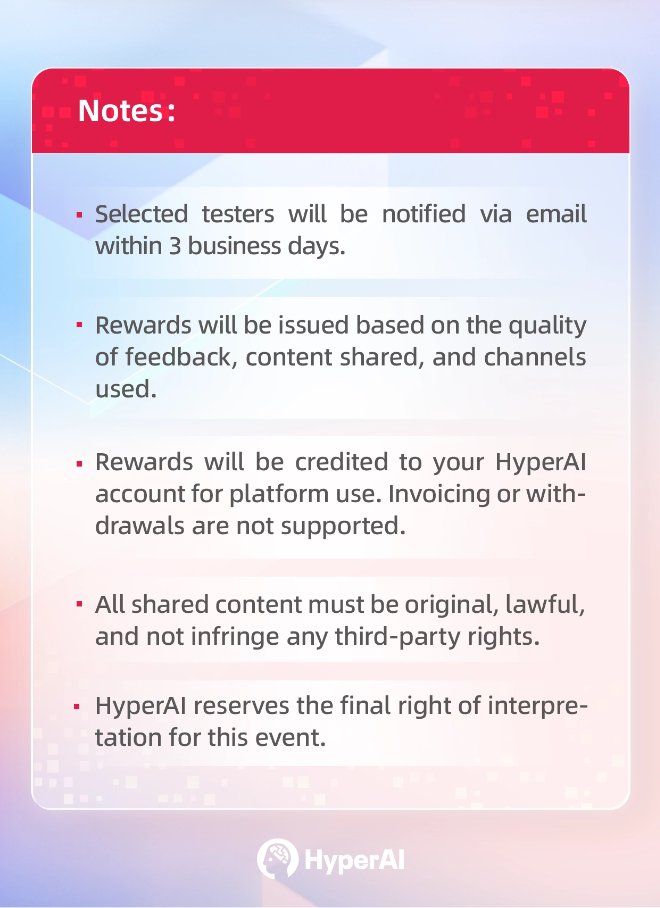

💡 Notes:

• Selected testers will be notified via email within 3 business days.

• Rewards will be granted based on the quality of feedback, the content shared, and the channels used.

• Rewards will be credited to your HyperAI account for platform use. Invoicing and withdrawals are not supported.

📌 For more details 👇
