Leon Engländer

33 posts

@LeonEnglaender

code gen agents research @cohere | open-source @AdapterHub | prev. @UKPLab

🚀Adapters v1.2 is out!🚀 We've made Adapters incredibly flexible: Add adapter support to ANY Transformer architecture with minimal code! We used this to add 8 new models out-of-the-box, incl. ModernBERT, Gemma3 & Qwen3! Explore this and 2 new adapter methods in this thread👇(1/5)

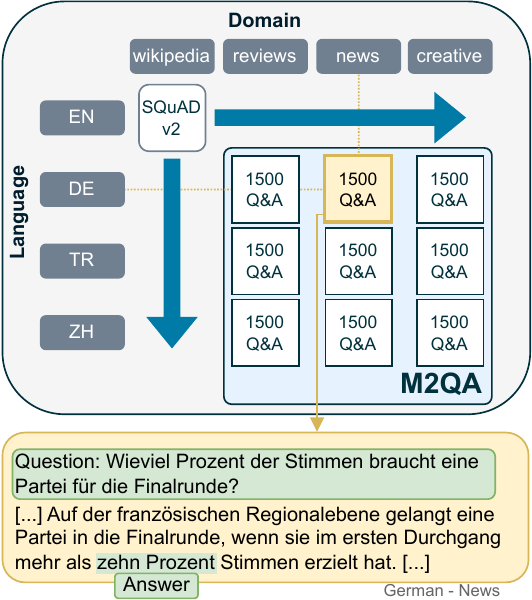

📢 New preprint 🎉 We introduce "M2QA: Multi-domain Multilingual Question Answering", a benchmark for evaluating joint language and domain transfer. We present 5 key findings - one of them: Current transfer methods are insufficient, even for LLMs! 📜arxiv.org/abs/2407.01091 🧵👇

🎉Adapters 1.0 is here!🚀 Our open-source library for modular and parameter-efficient fine-tuning got a major upgrade! v1.0 is packed with new features (ReFT, Adapter Merging, QLoRA, ...), new models & improvements! Blog: adapterhub.ml/blog/2024/08/a… Highlights in the thread! 🧵👇
