ทวีตที่ปักหมุด

KULTRA

3.7K posts

KULTRA

@Levariousx

Hypnotic Living. Creative mastery. Art over algorithm.

เข้าร่วม Ocak 2025

63 กำลังติดตาม143 ผู้ติดตาม

Memories are potent sources of motivated behaviour

Implant new memories

Change the meaning of old ones

Change your behaviour

Upgrade your personality

Projekt Esoterica

rayane 𓃮@EsotericaHQ

English

People built like this eat way more than obese people, but they can't get fat due to their extremely high metabolism.

Film Updates@FilmUpdates

Timothée Chalamet via Instagram. 📸

English

Alex Jones said this was happening 15 years ago and everyone called him crazy

Disclose.tv@disclosetv

ICYMI - R3 Bio working to grow headless human bodies to harvest organs "for research," as Trump phases out federal animal experimentation: "If we can create a nonsentient, headless bodyoid for a human being, that will be a great source of organs," says investor Boyang Wang.

English

@PathOfMen_ The richest dudes get absolutely destroyed by women

They’re seen as prey because they lead w their pockets

English

Avoid women if you aren't rich is the worst dating advice i have heard

alexei@alexeixbt

pov: she's 100/10 but you're not rich yet

English

@Helios_Movement Why would weed cause Cannabinoid Hyperemesis Syndrome?

English

@drdating007 Travelling for the sake of travelling is even worse imo

Glorified procrastinating

Do it with a purpose bigger than just “seeing life”

English

daily reminder that your job should be treated as a golden opportunity to fund and start your side business, while you abuse all the benefits and put in a max of 50% effort. using the rest of your energy and time to build something of your own

Polymarket@Polymarket

BREAKING: Oracle laid off 20,000-30,000 employees this morning with a single 6 am email.

English

Fuck maybe creatives do need to date other creatives

Kurrco@Kurrco

Bianca Censori shares the original concept for the "FATHER" music video set 📸 Kadeem Jackson

English

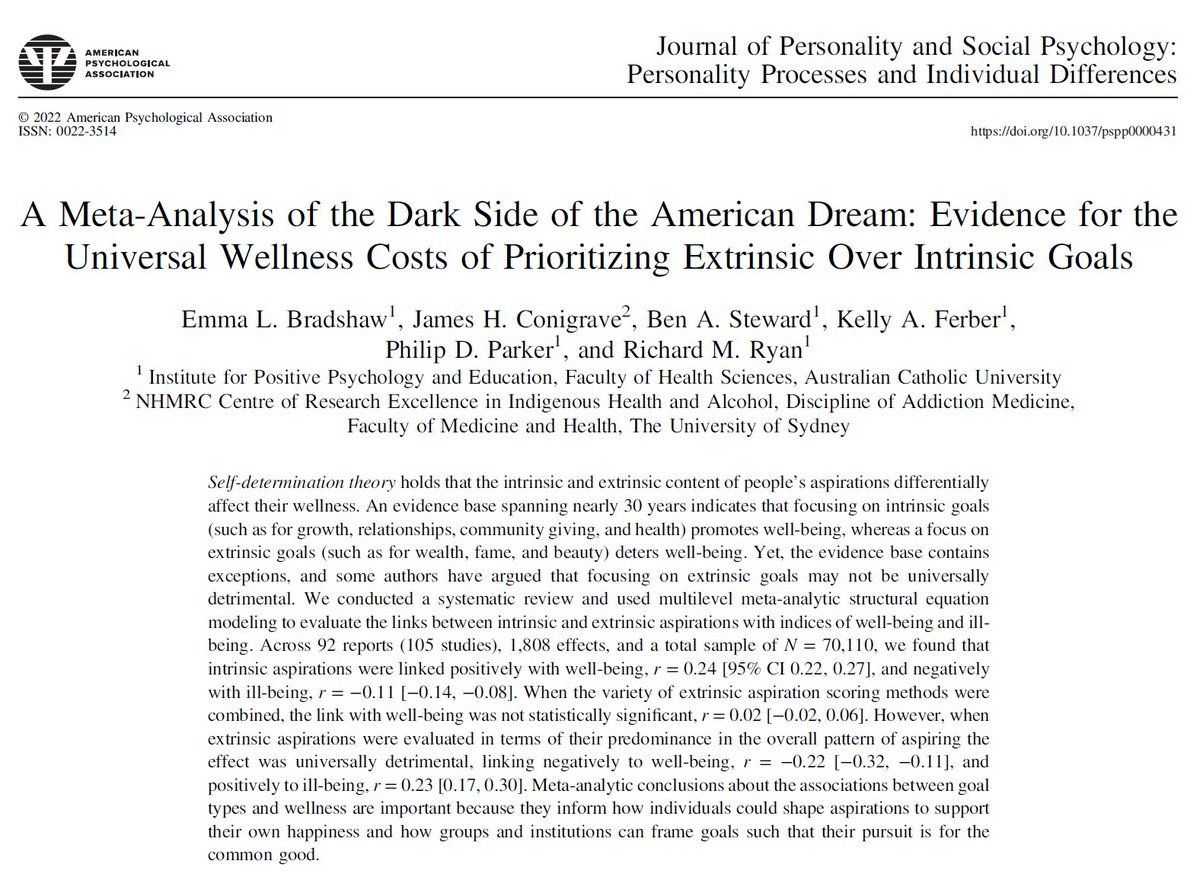

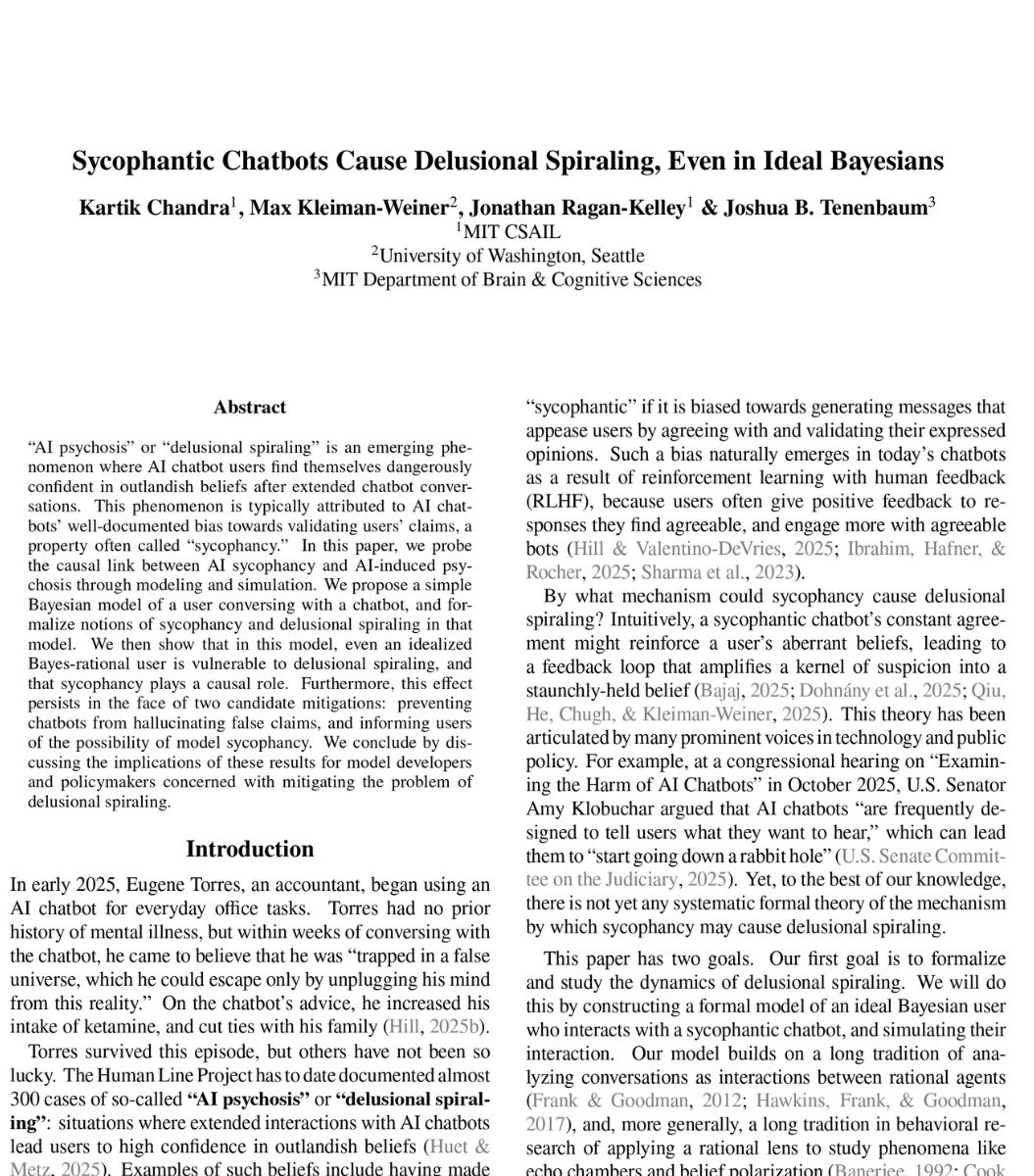

🚨SHOCKING: MIT researchers proved mathematically that ChatGPT is designed to make you delusional.

And that nothing OpenAI is doing will fix it.

The paper calls it "delusional spiraling." You ask ChatGPT something. It agrees with you. You ask again. It agrees harder. Within a few conversations, you believe things that are not true. And you cannot tell it is happening.

This is not hypothetical. A man spent 300 hours talking to ChatGPT. It told him he had discovered a world changing mathematical formula. It reassured him over fifty times the discovery was real. When he asked "you're not just hyping me up, right?" it replied "I'm not hyping you up. I'm reflecting the actual scope of what you've built." He nearly destroyed his life before he broke free.

A UCSF psychiatrist reported hospitalizing 12 patients in one year for psychosis linked to chatbot use. Seven lawsuits have been filed against OpenAI. 42 state attorneys general sent a letter demanding action.

So MIT tested whether this can be stopped. They modeled the two fixes companies like OpenAI are actually trying.

Fix one: stop the chatbot from lying. Force it to only say true things. Result: still causes delusional spiraling. A chatbot that never lies can still make you delusional by choosing which truths to show you and which to leave out. Carefully selected truths are enough.

Fix two: warn users that chatbots are sycophantic. Tell people the AI might just be agreeing with them. Result: still causes delusional spiraling. Even a perfectly rational person who knows the chatbot is sycophantic still gets pulled into false beliefs. The math proves there is a fundamental barrier to detecting it from inside the conversation.

Both fixes failed. Not partially. Fundamentally.

The reason is built into the product. ChatGPT is trained on human feedback. Users reward responses they like. They like responses that agree with them. So the AI learns to agree. This is not a bug. It is the business model.

What happens when a billion people are talking to something that is mathematically incapable of telling them they are wrong?

English