Luke Rowe

105 posts

@Luke22R

PhD student at @Mila_Quebec, focusing on autonomous driving. Previously @Waymo and @torc_robotics.

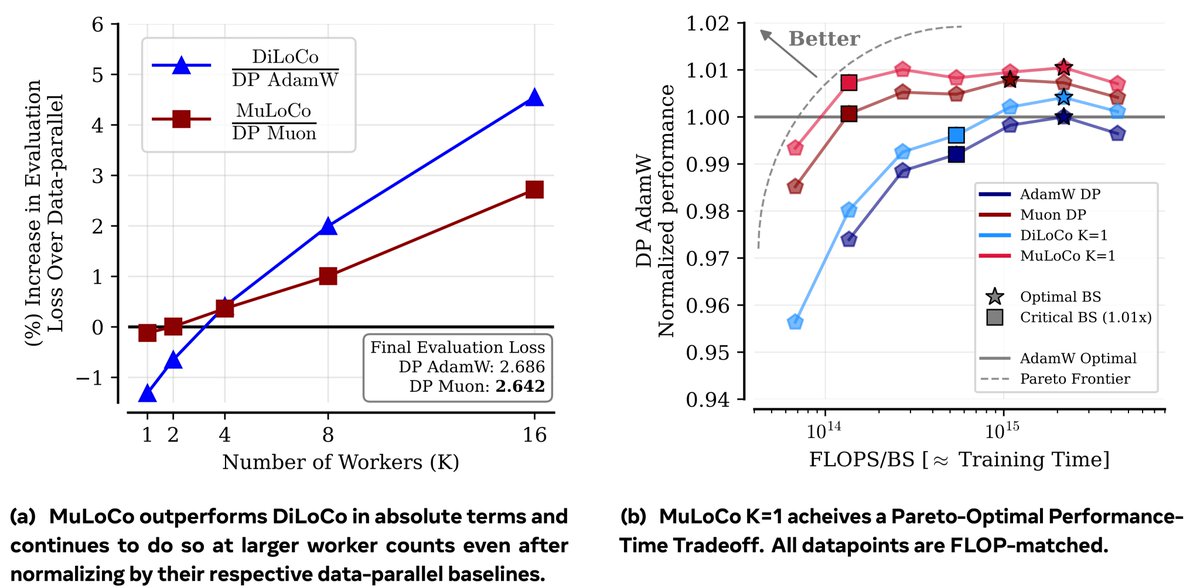

Optimization theory for adaptive methods actually predicts most of what we know about hyperparameter scaling in LLM pretraining, and suggests new strategies as well. We did a deep dive here.

If I was a grad student today, I would: 1) Not write papers, 2) push my (agent-written) code to a public repo ~weekly, 3) maintain (via agents) a writeup.tex (manually verified) and a skill.md in the repo, and 4) work towards establishing skill usage as the new "citation" format.

Unveiling our new startup Advanced Machine Intelligence (AMI Labs). We just completed our seed round: $1.03B / 890M€, one of the largest seeds ever, probably the largest for a European company. We're hiring! [the background image is the Veil Nebula - a picture I took from my backyard, most appropriate for an unveiling] More details here: techcrunch.com/2026/03/09/yan…