Julien Pourcel @ NeurIPS


@PourcelJulien

PhD student at @inria (@flowersinria team) working on LLM4code | Google PhD Fellow 2025 | @ENS_ParisSaclay (MVA)

ARC Prize 2025 Winners Interviews: Paper Award, 2nd Place. @PourcelJulien, @cedcolas, @pyoudeyer discuss SOAR, a self-improving evolutionary program synthesis framework that fine-tunes an LLM on its own search traces, without human-engineered DSLs or solution datasets.

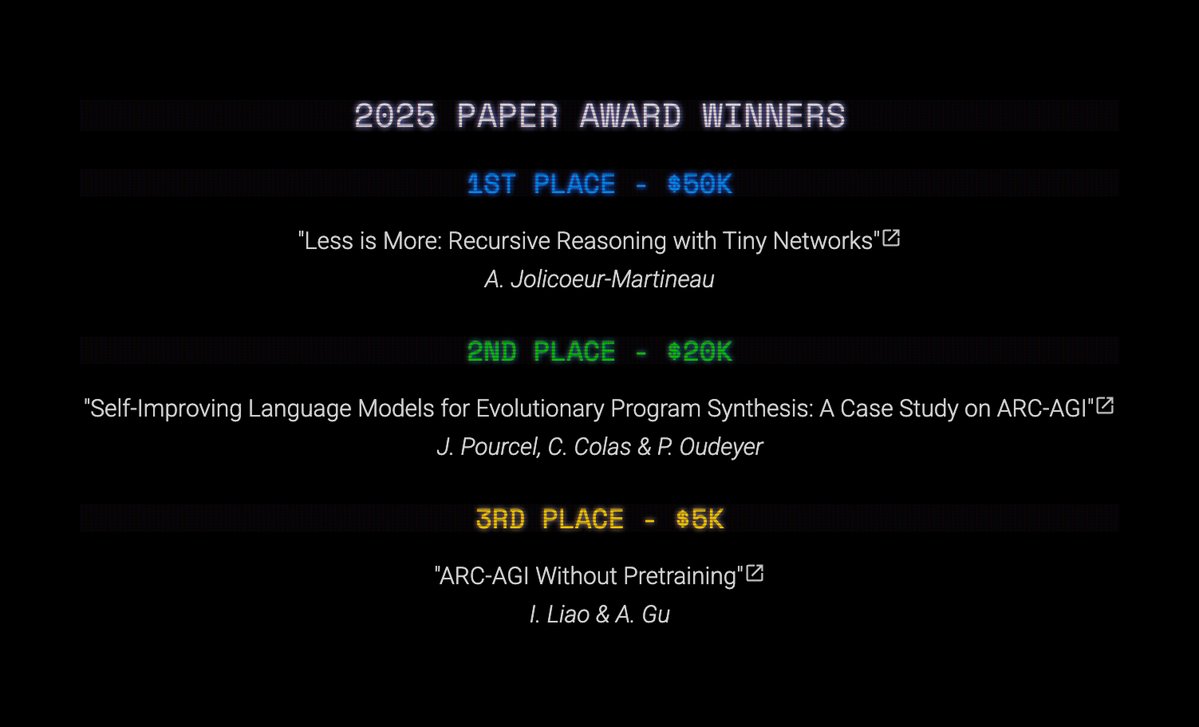

Announcing the ARC Prize 2025 Top Score & Paper Award winners. The Grand Prize remains unclaimed. Our analysis on AGI progress, marking 2025 the year of the refinement loop.
