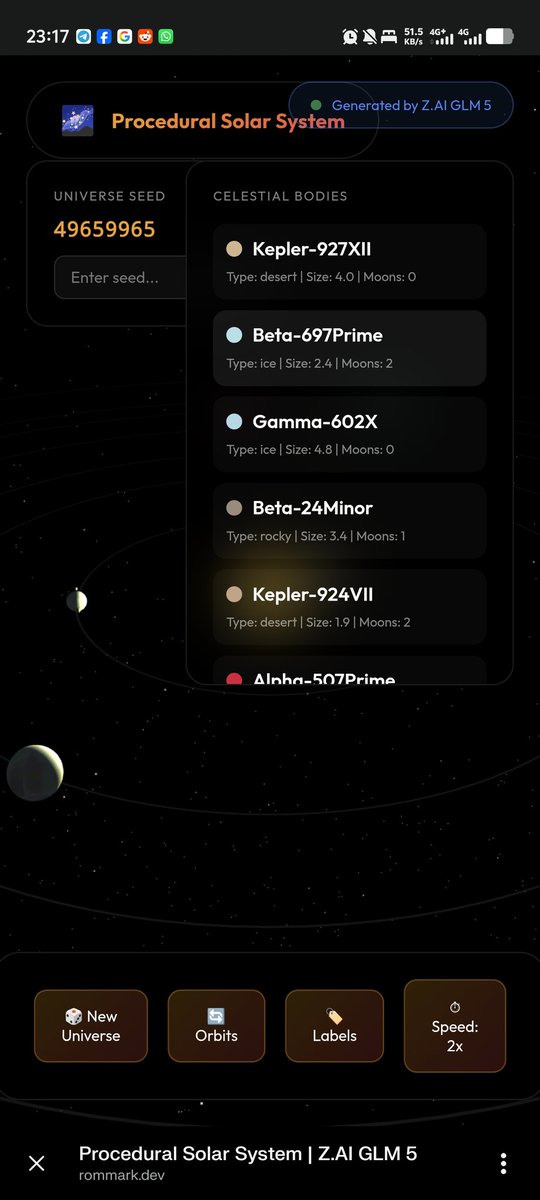

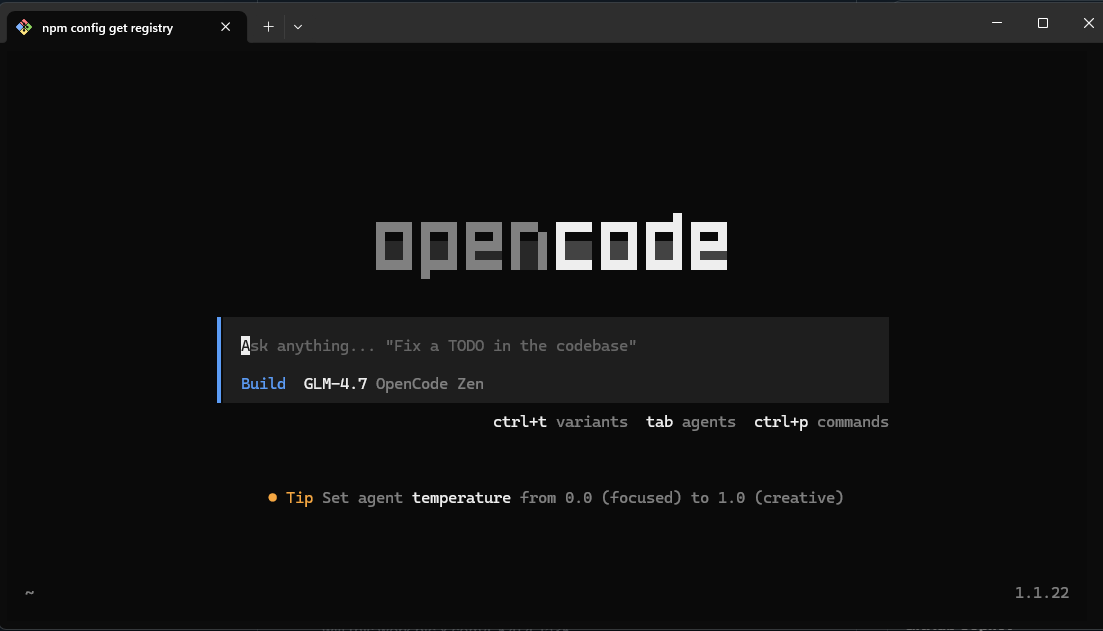

Presenting the GLM-5 Technical Report! arxiv.org/abs/2602.15763

After the launch of GLM-5, we’re pulling back the curtain on how it was built. Key innovations include:

- DSA Adoption: Significantly reduces training and inference costs while preserving long-context fidelity
- Asynchronous RL Infrastructure: Drastically improves post-training efficiency by decoupling generation from training
- Agent RL Algorithms: Enable the model to learn from complex, long-horizon interactions more effectively

Through these innovations, GLM-5 achieves SOTA performance among open-source models, with particularly strong results in real-world software engineering tasks.