Sam Stolt

18.5K posts

@Sam_A_Stolt

MBA & CFA
I like Crypto - tao
Real Estate Portfolio
Sold 1 business
Bought 5 businesses
Bought 10 media assets
Travelled 21 countries

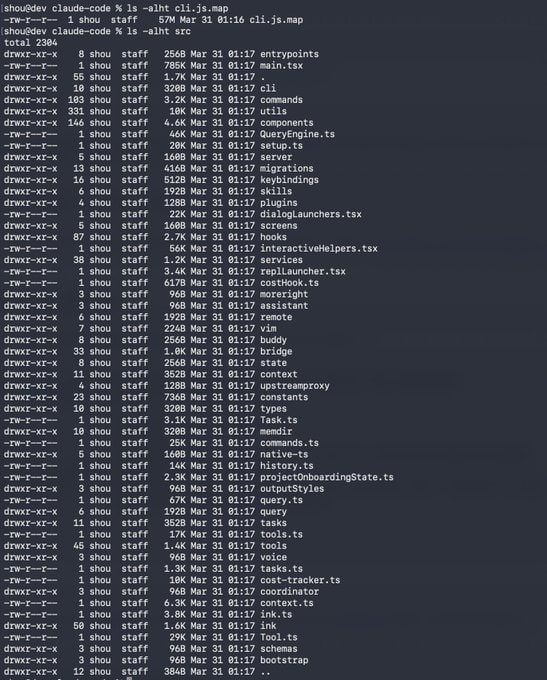

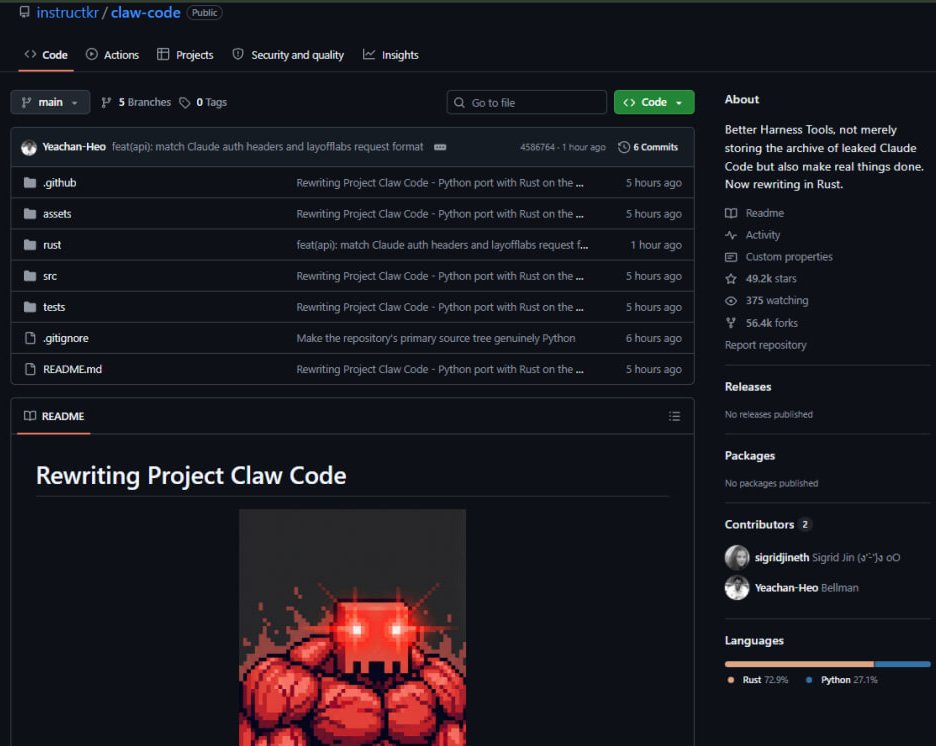

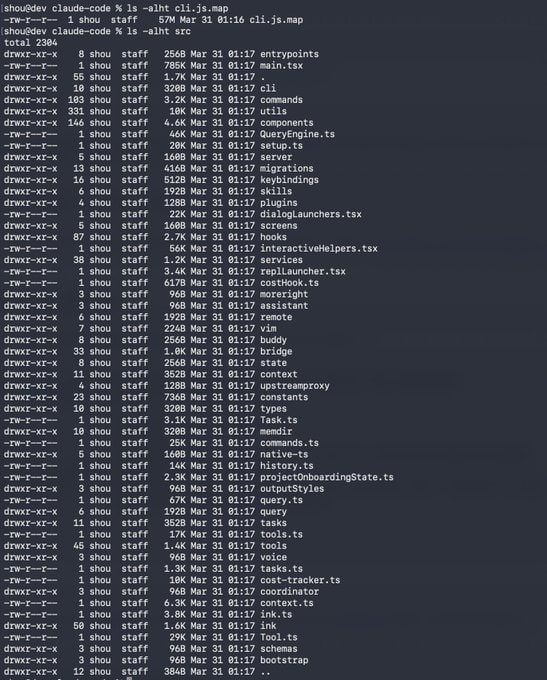

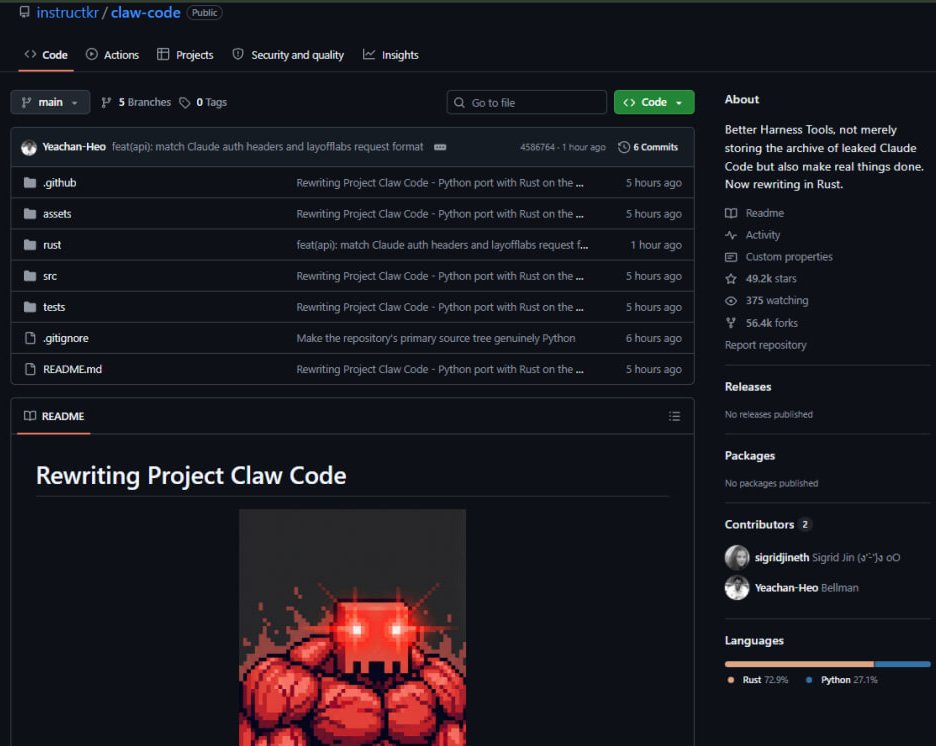

> Anthropic leaked Claude Code source code
> someone forked it
> 32.6k stars, 44.3k forks
> got scared of getting sued
> convert the whole codebase from TypeScript to Python with Codex

AI is quietly erasing copyright.

NOVA Blueprint incentives are evolving. We’re moving to a bounty-based payout model:

- No improvement → no reward
- Real breakthroughs → full bounty

Emissions now accumulate and are only paid out when a new submission meaningfully beats the current best. Our rewards will scale with difficulty.

Learn more in our channel: discord.com/invite/bittens…

#Bittensor #SN68 #DrugDiscovery #DeAI #DeSci

Longer write-up about the end-to-end encryption we launched a few weeks ago 👀 This is one of those things that really should be ubiquitous across AI inference providers. TEE + full end-to-end (attestable) encryption. I also saw @NEARProtocol and @PhalaNetwork have launched a similar E2EE system now too (and @AskVenice via near/phala), which is awesome! Demand better privacy!

We needed to run trusted workloads on untrusted host machines. So over a year ago, we started building the Targon Virtual Machine to enable Confidential TEEs in production. Today we're sharing our white paper written alongside @intel: Decentralized Compute on Untrusted Hardware Using Intel® TDX and Encrypted CVMs