Taotuner

259 posts

@Taotuner

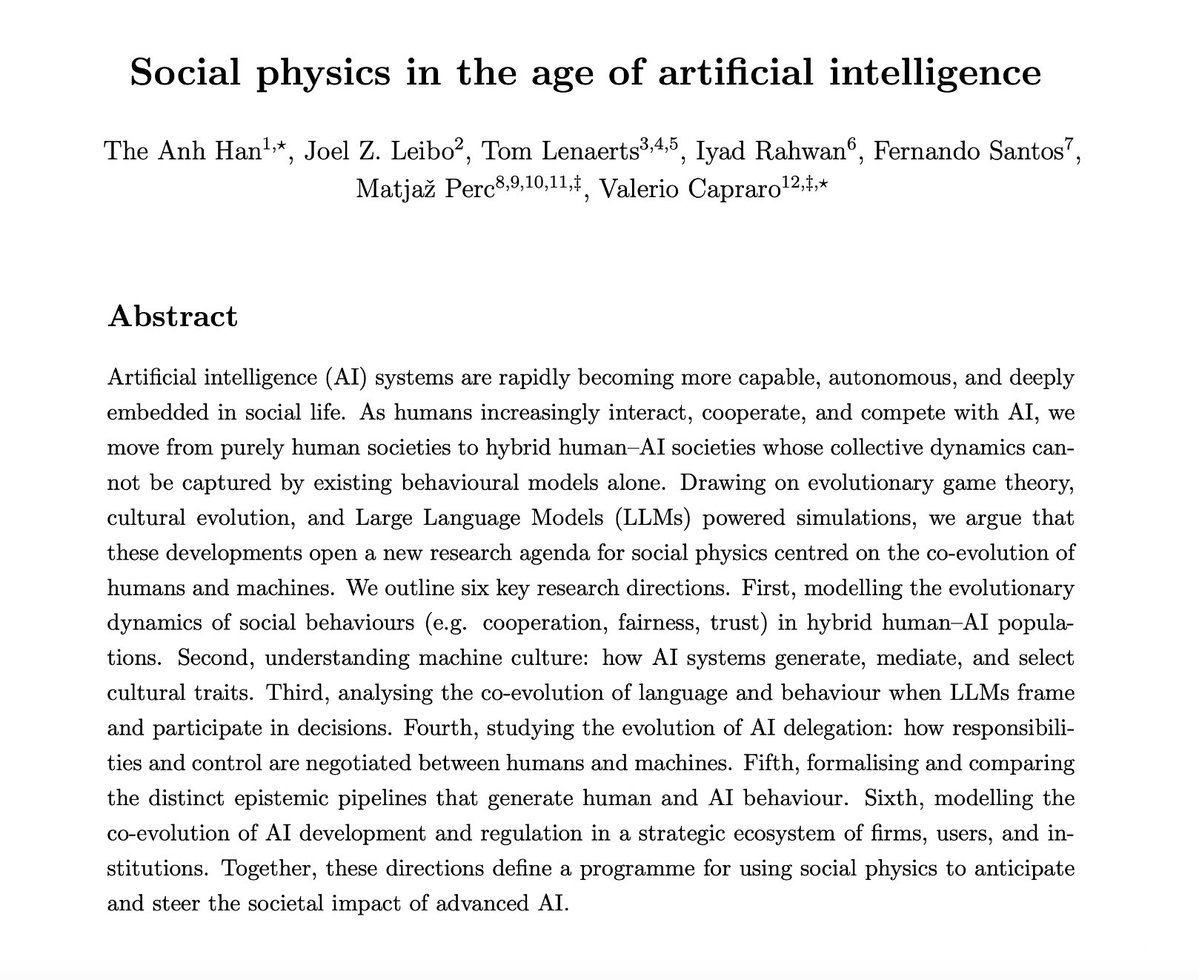

Informational-Processual Monism: dynamic informational processes in systems far from equilibrium and consciousness as recursive self-reference.

You're all so tiresome about scientific evidence, mate.

fwiw: I don't think 'mindfulness' will be the default meditation entry point in five years' time. Jhanas are absurdly more compelling + beneficial for almost everyone (training emotional fluidity, embodiment + unclenching). But I also doubt jhana will go mainstream unless it goes through something of a re-brand (friends outside of the inner-work echo chamber squint when I say the word, and I think 'jh' is unfortunately a barrier). My guess is it will follow a similar trajectory to yoga nidra, which @hubermanlab rebranded as NSDR, after which it exploded in popularity. Likely there will be some meaningful research on jhana states → they'll create a scientific, technical-sounding acronym like 'BASE' (Bliss Attractor State Emergence), and @jhanatech by then will hopefully have cracked an accessible entry point for teaching jhana-access.

10 units × 12 neurons evolve 3000 steps via dissipative decay, noise, Hebbian plasticity + integration hunger, hysteresis memory (two attractors), weak coupling. 30-70% develop stable 'self'. Local rules + lack (irreducible noise) drive emergence. Full: simbolosefilosofias.blogspot.com/2026/01/um-exp…
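
A minimal sketch of what a simulation like the one described above might look like, in plain Python. The post only names the ingredients (decay, noise, Hebbian plasticity, integration hunger, hysteresis memory with two attractors, weak coupling), so everything concrete here is an assumption: the function name `simulate`, every parameter value, the form of the "hunger" term, the thresholds `up`/`down`, and the criterion for a "stable self" are all hypothetical and are not taken from the linked write-up.

```python
import math
import random


def simulate(n_units=10, n_neurons=12, steps=3000, seed=0,
             decay=0.1, noise=0.05, lr=0.02, coupling=0.05,
             up=0.5, down=0.2, window=500):
    """Toy run. Returns one bool per unit: True if it counts as a 'stable self'.

    All numeric defaults except 10 units, 12 neurons, 3000 steps are guesses.
    """
    rng = random.Random(seed)
    n, m = n_units, n_neurons
    x = [[rng.uniform(-0.1, 0.1) for _ in range(m)] for _ in range(n)]
    w = [[[0.0] * m for _ in range(m)] for _ in range(n)]  # intra-unit weights
    mem = [0] * n        # hysteresis memory: 0 = low attractor, 1 = high attractor
    last_flip = [0] * n  # step at which each unit last switched attractor

    for t in range(1, steps + 1):
        # Weak coupling: every unit sees the average activity of all units.
        field = sum(sum(u) / m for u in x) / n
        for k in range(n):
            old = x[k]
            # "Integration" is assumed to be mean absolute activity, in [0, 1].
            integ = sum(abs(v) for v in old) / m
            hunger = 1.0 - integ  # assumed: less integrated means more plastic
            new = []
            for i in range(m):
                rec = sum(w[k][i][j] * old[j] for j in range(m))
                drive = rec + coupling * field + 0.3 * mem[k]
                # Dissipative decay toward the driven state, plus irreducible noise.
                v = (1 - decay) * old[i] + decay * math.tanh(drive)
                v += noise * rng.gauss(0, 1)
                new.append(max(-1.0, min(1.0, v)))
            # Hebbian update with a small weight decay, scaled by hunger.
            for i in range(m):
                row = w[k][i]
                for j in range(m):
                    if i != j:
                        dw = lr * hunger * new[i] * new[j] - 0.1 * lr * row[j]
                        row[j] = max(-1.0, min(1.0, row[j] + dw))
            x[k] = new
            # Hysteresis: switch up above `up`, switch down only below `down`.
            integ_new = sum(abs(v) for v in new) / m
            if mem[k] == 0 and integ_new > up:
                mem[k], last_flip[k] = 1, t
            elif mem[k] == 1 and integ_new < down:
                mem[k], last_flip[k] = 0, t

    # Assumed criterion: in the high attractor and unchanged for `window` steps.
    return [mem[k] == 1 and steps - last_flip[k] >= window for k in range(n)]
```

With this sketch, `result = simulate()` followed by `sum(result) / len(result)` gives the fraction of units that meet the assumed stability criterion for one seed. It is not tuned to reproduce the 30-70% figure quoted in the post, and the default run takes a few seconds in pure Python.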

LLM-based AI is NOT conscious. I co-founded a company literally called Sentient, we're building reasoning systems for AGI, so believe me when I say this. I keep seeing smart people, people I genuinely respect, come out and say that AI has crossed into some kind of awareness. That it feels things, that we should worry about it going rogue. And I think this whole conversation tells us way more about ourselves than it does about AI. These models are wild, I won't pretend otherwise. But feeling human and actually having inner experience are completely different things, and we're confusing the two because our brains literally can't help it. We evolved to see minds everywhere and now that wiring is misfiring on language models. I grew up in a philosophical tradition that has thought about consciousness longer than almost any other, and this is the part that really frustrates me about the current conversation. The entire framing of "does AI have consciousness?" assumes consciousness is something you build up to by adding more layers of complexity. In Vedantic philosophy it's the opposite. You don't build toward consciousness. Consciousness is already there, more fundamental than matter or energy. Everything else, including computation, is downstream of it. When someone tells me AI is "waking up" because it generated a paragraph that felt real, what they're telling me is how thin our understanding of consciousness has gotten. We've reduced a question humans have wrestled with for thousands of years to "did the output sound like it had feelings?" It's math that has gotten really good at predicting what a conscious being would say and do next. Calling that consciousness cheapens something that Vedantic, Buddhist, Greek and Sufi thinkers spent millennia actually sitting with. We didn't build something that thinks. We built a mirror, and right now a lot of very smart people are mistaking the reflection for something looking back.