Zain Merchant

1.7K posts

@ZainMerchant9

AI Engineer, working on memory/harnesses

Oh right, I realize hell has frozen over: we've reached the point where app > CLI. That, combined with the speed increase, also means fewer windows are necessary. Codex goes brrrr now! developers.openai.com/codex/app/

A paper worth paying close attention to.

It presents Lossless Context Management (LCM), which reframes how agents handle long contexts. It outperforms Claude Code on long-context tasks.

Recursive Language Models give the model full autonomy to write its own memory scripts. LCM takes that power back, handing it to a deterministic engine that compresses old messages into a hierarchical DAG while keeping lossless pointers to every original. Less expressive in theory, far more reliable in practice.

The results: Their agent (Volt, on Opus 4.6) beats Claude Code at *every* context length from 32K to 1M tokens on the OOLONG benchmark. +29.2 points average improvement versus Claude Code's +24.7. The gap widens at longer contexts.

The implication is one we keep relearning from software engineering history: how you manage what the model sees may matter more than giving the model tools to manage it itself. Every agent framework shipping with "let the model figure it out" memory strategies may be building on the wrong abstraction entirely.

Paper: papers.voltropy.com/LCM

Learn to build effective AI agents in our academy: academy.dair.ai
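For readers who want a feel for the idea in the post above (a deterministic engine folding old messages into a hierarchy while keeping pointers back to every original), here is a rough toy sketch. It is not the paper's implementation: `ContextStore`, `max_active`, `fan_in`, and the string-truncating `_summarize` are all hypothetical names and stand-ins chosen for illustration, and the structure built here is a plain tree (the simplest kind of DAG).

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    node_id: int
    text: str  # original message text, or a summary for internal nodes
    children: list = field(default_factory=list)  # empty for original messages


class ContextStore:
    """Toy store (assumption, not the paper's design): originals are never
    deleted; summary nodes only point at them."""

    def __init__(self, max_active=4, fan_in=2):
        # fan_in must be >= 2 so that each fold actually shrinks the context.
        self.nodes = {}      # node_id -> Node, append-only
        self.active = []     # node ids the model would currently see
        self.max_active = max_active
        self.fan_in = fan_in

    def _new_node(self, text, children=()):
        node = Node(len(self.nodes), text, list(children))
        self.nodes[node.node_id] = node
        return node.node_id

    def _summarize(self, ids):
        # Placeholder summarizer: a real system would call a model here.
        return "summary(" + " | ".join(self.nodes[i].text[:12] for i in ids) + ")"

    def append(self, text):
        self.active.append(self._new_node(text))
        # Deterministic rule: while over budget, fold the oldest items
        # into one summary node that keeps pointers to what it replaced.
        while len(self.active) > self.max_active:
            oldest = self.active[:self.fan_in]
            summary_id = self._new_node(self._summarize(oldest), oldest)
            self.active = [summary_id] + self.active[self.fan_in:]

    def expand(self, node_id):
        # Follow pointers down to the leaves to recover the originals verbatim.
        node = self.nodes[node_id]
        if not node.children:
            return [node.text]
        recovered = []
        for child_id in node.children:
            recovered.extend(self.expand(child_id))
        return recovered
```

The point of the sketch is the division of labor: the compaction rule is fixed code rather than something the model decides, and `expand` can always walk from any summary back to the untouched original messages.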

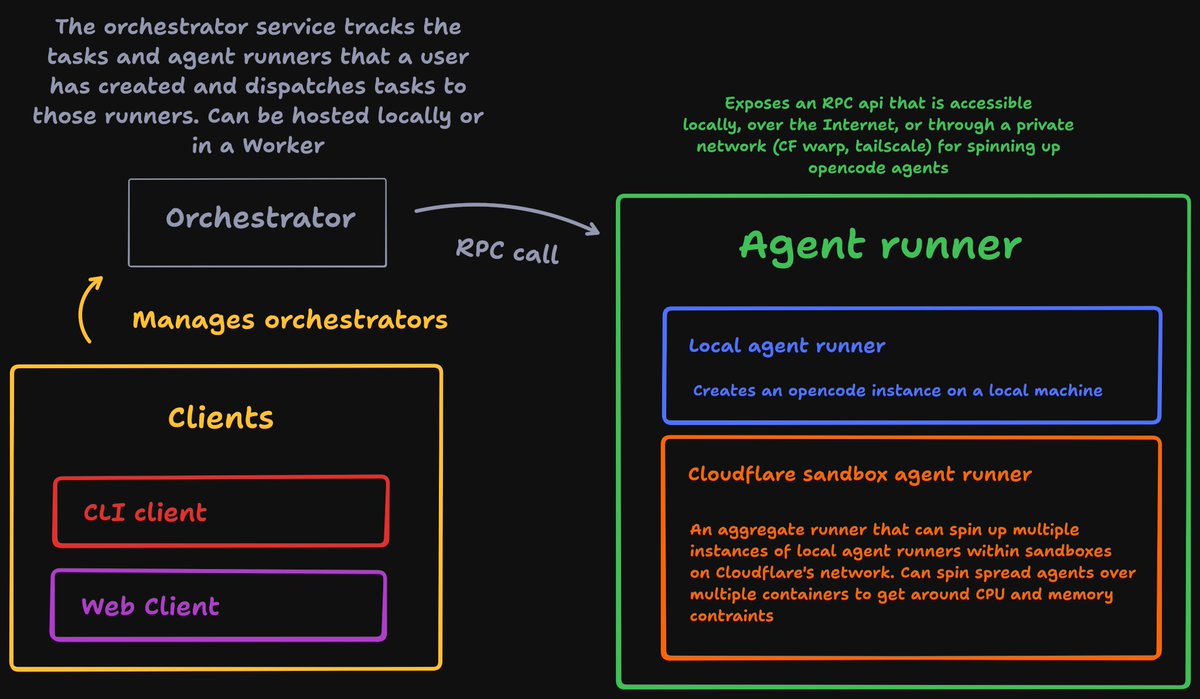

Created an agent orchestration tool, to create an agent orchestration tool, to create an agent orchestration tool. I promise though, this one will be the one.