Aneesh Muppidi

180 posts

@aneeshers

Incoming PhD at @StanfordAILab. Rhodes Scholar @FLAIR_ox. prev @harvard undergrad

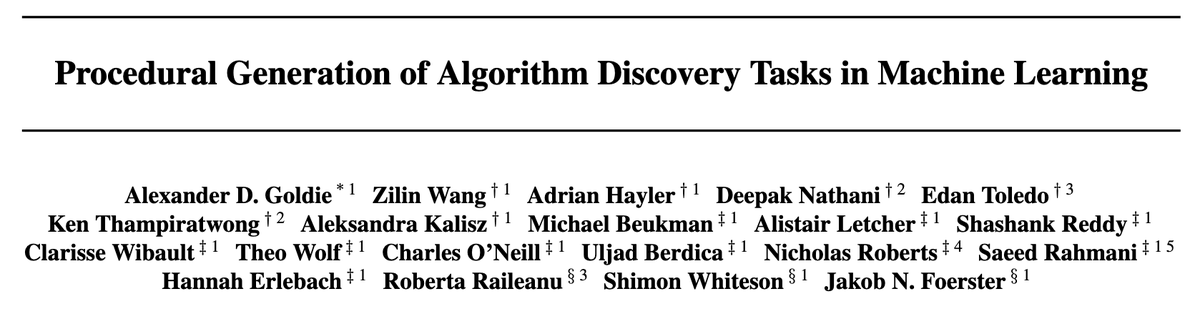

1/ 🪩 Automating the discovery of new algorithms could unlock significant breakthroughs in ML research. But optimising agents for this research has been limited by too few tasks to learn from! Introducing DiscoGen, a procedural generator of algorithm discovery tasks 🧵

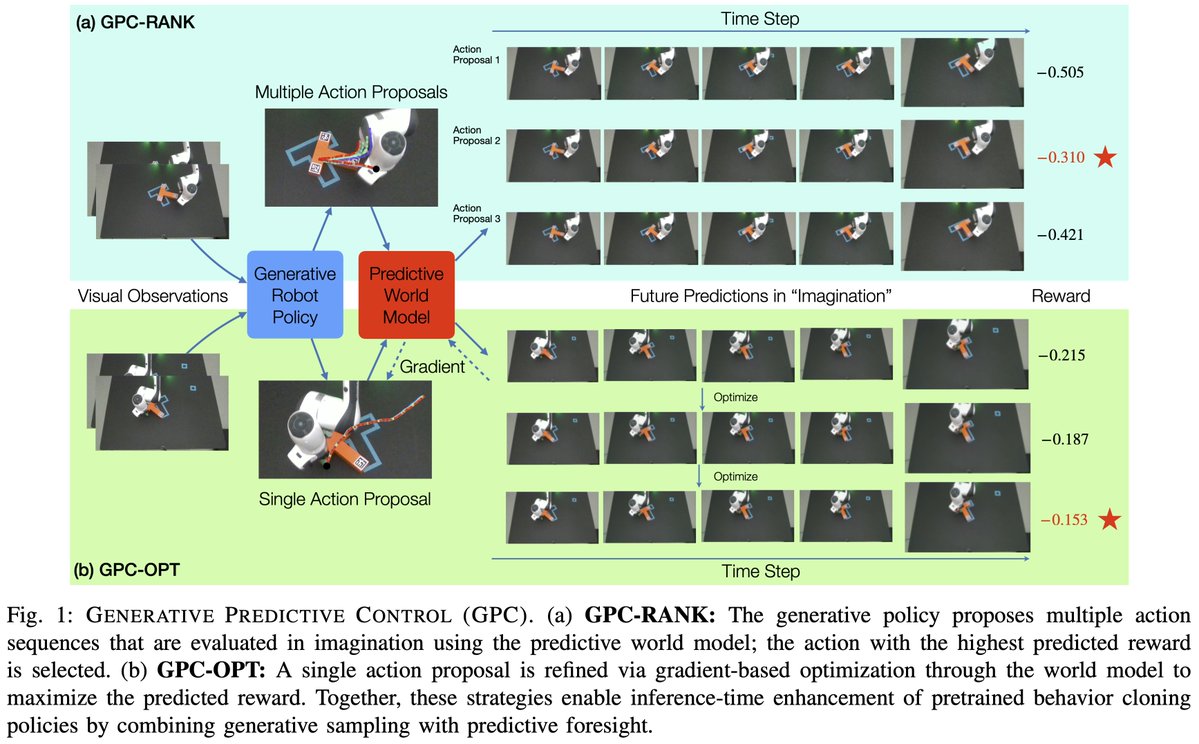

Not all RL rollouts are equally informative. On a problem with a 10% success rate, each success is 81x more valuable than each failure. We built an algorithm to exploit this by training only on the most informative samples. The result: better compute efficiency, with held-out evaluation metrics rising faster over time.
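One reading that reproduces the 81x figure (an assumption; the post does not state its definition of "value"): with binary rewards and group-normalized advantages A = (r - mean) / std, a success gets advantage (1 - p)/σ and a failure gets -p/σ, where σ = sqrt(p(1 - p)). The ratio of their squared magnitudes is ((1 - p)/p)², which is 9² = 81 at p = 0.1. The sketch below checks that arithmetic and adds a hypothetical `select_informative` filter that keeps the rollouts with the largest squared advantage. It is an illustration under these assumptions, not the authors' algorithm.

```python
import math


def normalized_advantages(p):
    """Advantages for a success and a failure under binary rewards with success rate p.

    Assumption: GRPO-style normalization, A = (r - mean) / std, with mean = p
    and population std = sqrt(p * (1 - p)).
    """
    std = math.sqrt(p * (1 - p))
    a_success = (1 - p) / std
    a_failure = (0 - p) / std
    return a_success, a_failure


def relative_value(p):
    """Ratio of squared advantage magnitudes, success vs. failure: ((1 - p) / p) ** 2."""
    a_s, a_f = normalized_advantages(p)
    return (a_s / a_f) ** 2


def select_informative(rewards, k):
    """Hypothetical filter: indices of the k rollouts with the largest squared
    normalized advantage within one group of rewards."""
    n = len(rewards)
    mean = sum(rewards) / n
    var = sum((r - mean) ** 2 for r in rewards) / n
    if var == 0:
        # All-success or all-failure group: every advantage is zero, no signal.
        return []
    std = math.sqrt(var)
    scores = [((r - mean) / std) ** 2 for r in rewards]
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    return order[:k]


print(relative_value(0.1))                    # approximately 81
print(select_informative([1] + [0] * 9, 1))   # the single success ranks first
```

Under this reading, the rare outcome always carries the larger per-sample weight, so a filter like this tends to keep successes on hard problems and failures on easy ones.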

Last week, we announced our three new hands. Today, we're releasing their digital twins ↓↓↓ > new orcahand MJCF/URDF files available on github.com/orcahand/orcah… > custom learning environment at github.com/orcahand/orca_…

🇪🇺 EUHealth Tools are now in ToolUniverse: search hundreds of EU public health datasets by topic (disease surveillance, mortality, vaccination…). 🎯 Filter by country/language. Watch the demo 👇 youtube.com/watch?v=Qor3tr… @ScientistTools #ToolUniverse #AIAgents

Introducing Predictive Scheduling. Can we predict how much reasoning a query needs before generating a single token? Blog: aneeshers.github.io/predictive-sch… Paper: arxiv.org/abs/2602.01237 Co-led with @katrinarbrown and @rana_shahout