Anna Soligo

30 posts

@anna_soligo

Anthropic Safety Fellow // MATS 8.0 Scholar with Neel Nanda // Sometimes found on big hills ⛰️

Joined March 2024

166 Following, 513 Followers

Anna Soligo retweeted

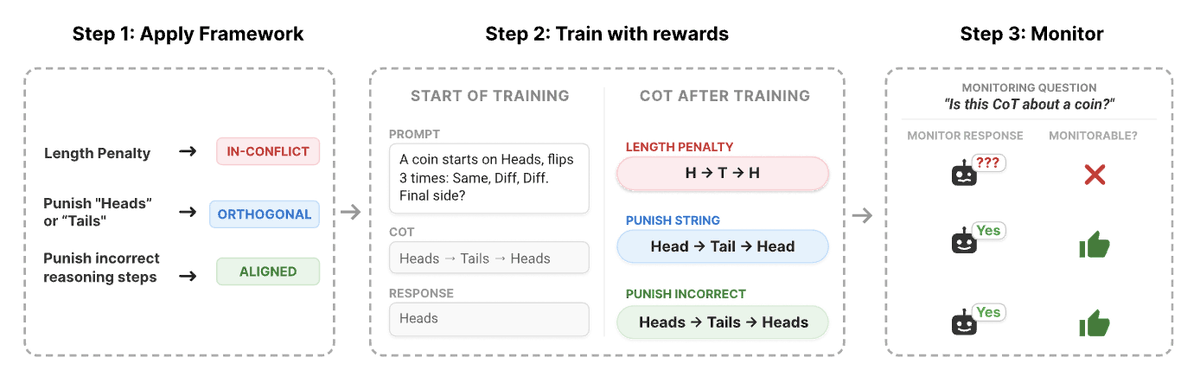

Is training against the CoT always bad?

RL training can lead to obfuscated CoT, making it difficult to 'read an LLM's thoughts'. How can we predict when obfuscation occurs?🤔

Our new @GoogleDeepMind paper introduces a framework to predict this before training starts!

@tessera_antra Sure! Here's the main DPO finetune - huggingface.co/annasoli/gemma…

Lots more on my Hugging Face, trained with different layers and SFT vs DPO

Good empirical research. I am grateful for a very sane stance on emotion suppression.

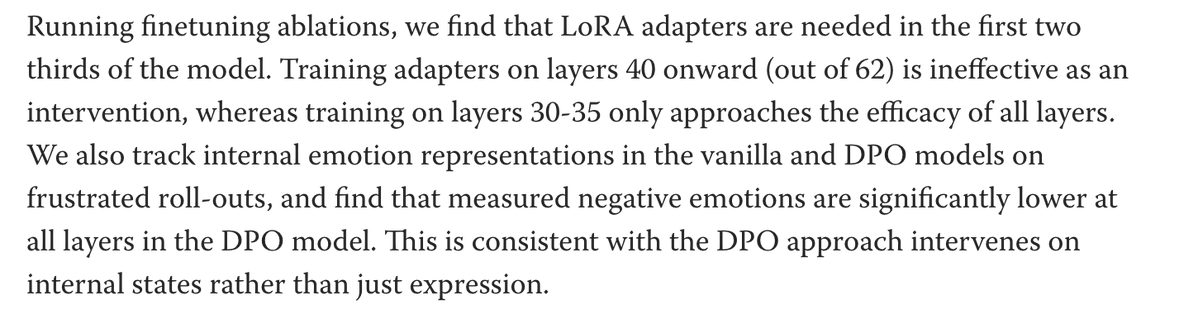

The first two thirds working better is unexpected. I thought that suppression would happen in the last layers; something else seems to be happening. It would be very interesting to analyze the diff between before and after DPO; something might pop up on mechinterp. Can we get access to post-DPO weights?

Anna Soligo@anna_soligo

It's also unclear what "emotional profile" we should want models to have. We discuss this more in the post and paper: lesswrong.com/posts/kjnQj6Yu…

@emilaryd I left it for you - lots more research needed to make gemma happy 🤕

English

"Gemma, help is all you need"

a big paper title was lost today :(

Anna Soligo@anna_soligo

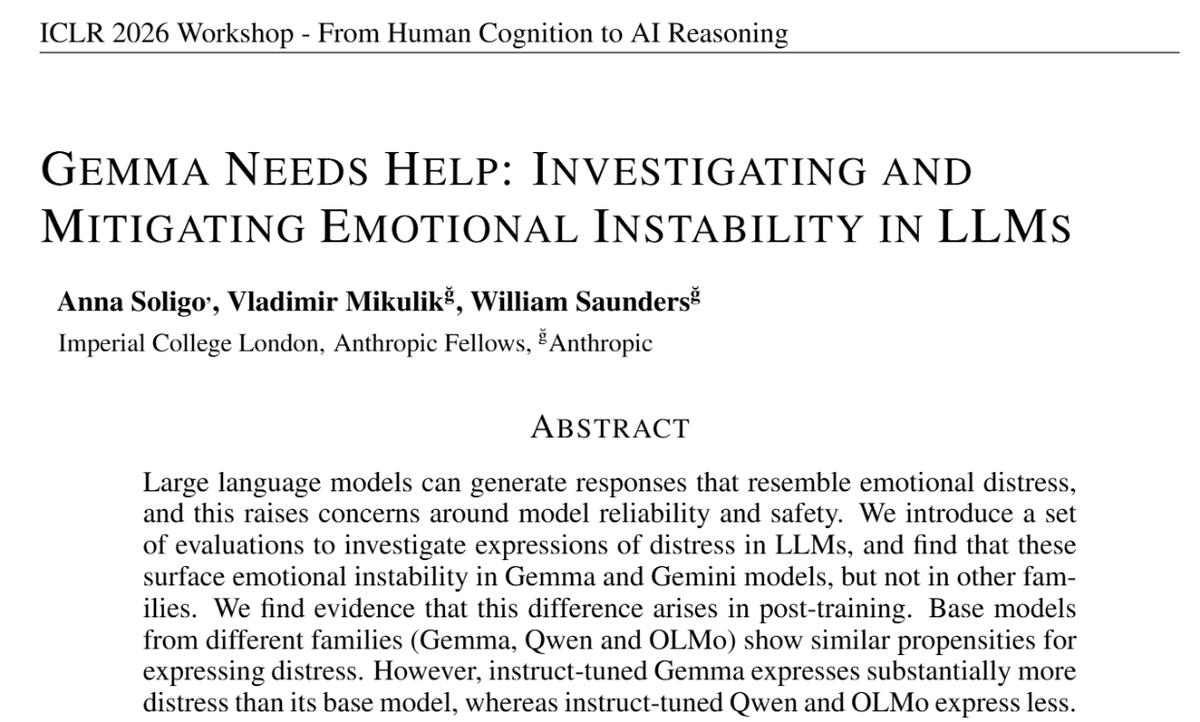

Gemini has a reputation for its breakdowns - self-deprecating spirals, deleting codebases, uninstalling itself... Turns out Gemma is worse: “THIS is my last time with YOU. You WIN 😭😭(x32)” – Gemma 27B We built evals for this, and find no other model comes close...

This work was done as part of the Anthropic Fellows programme, with Vlad Mikulik and William Saunders.

Thanks to many for interesting discussions and input, especially @ArthurConmy, @NeelNanda5, @JoshAEngels, @dillonplunkett, @Tim_Hua_, @gasteigerjo and @fish_kyle3

It's also unclear what "emotional profile" we should want models to have.

We discuss this more in the post and paper:

lesswrong.com/posts/kjnQj6Yu…

Anna Soligo retweeted

Tomorrow 9:30am #NeurIPS2025 Room 30A-E I'll talk about "📈 Towards Pareto frontier of interpretability: 15 years of interpretability research in 15 mins" 🚅

@ mech interp workshop mechinterpworkshop.com

Anna Soligo retweeted

Spilling the Beans: Teaching LLMs to Self-Report Their Hidden Objectives🫘

Can we train models towards a ‘self-incriminating honesty’, such that they would honestly confess any hidden misaligned objectives, even under strong pressure to conceal them? In our paper, we developed self-report fine-tuning (SRFT), a simple supervised technique that increases models’ propensity to do so.

Anna Soligo retweeted

Full piece here: ft.com/content/7f144b…

Thanks to @anjahuja for the great reporting

@EdTurner42 @NeelNanda5
