Chris Olson

3.6K posts

@chrisolson

@mach1ai 🇺🇸 Former: @Traceup, @ReplicatedHQ, @Amplifyla, USC, Iowa.

Austin, Texas · Joined August 2007

366 Following · 2K Followers

Operational AI has to move across systems, maintain context, and decide what happens next.

@mach1ai powers complex real-world processes with automation that doesn’t just run. It thinks.

@mshamsho @eightsleep That’s horrible. I probably won’t get one anymore.

@GrantM Maybe YouTube hasn’t been optimized for playback being at 10x ;)

@benhylak I always add "Reduce the number of adjectives you use when responding" to my custom instructions. I don't know exactly when it happened, but all of these models have become quite verbose...

The Essential Role of Operators in AI Adoption

The arrival of AI agents has prompted discussion about their role in the future of work. While some imagine autonomous systems handling complex tasks on their own, practical deployment shows a different reality: businesses need skilled operators to oversee AI agents. The businesses that succeed with AI will be those that adopt technology to help them oversee and manage their AI effectively, rather than simply releasing autonomous agents with minimal oversight. The goal is to transform businesses through AI by moving beyond artificial intelligence toward artificial capabilities: the AI is already smart, but operators must enable it to be truly capable.

Looking at how AI agents actually work inside a business shows that they need ongoing attention. Working with these agents should feel like managing a person. The operator becomes the point of contact between your business and its AI models, translating business needs into AI actions. Rather than autonomous behavior, this is a sophisticated form of delegation and instruction that takes human intelligence to formulate and refine. A collaborative cycle emerges: the AI can recognize its shortcomings, but humans must interpret those signals and adjust the AI's parameters. The power of this model lies in its economics: one skilled operator can oversee AI agents that accomplish what previously required entire teams, creating efficiency gains that transform businesses.

This points to a clear conclusion: the future of AI in business is not manager-less operations, but a dynamic partnership in which skilled operators guide AI to achieve unprecedented scale. Not everyone shares this view, but I'm convinced it's the path that will succeed in the long term. The market will ultimately decide who's right, but my experience shows that businesses investing in technology that lets their operators oversee, manage, and continuously improve their AI capabilities will be the true winners in the AI era. The future belongs to those who embrace this symbiotic relationship between operators and AI systems.

I had the opportunity to share my experience deploying AI agents across Trace's operations, transforming the processes that paved the way for @mach1ai's foundation. Thanks for the great conversation @jjacobs22! youtu.be/kVcn9acgpuU?si…

@jonfavs I know this is what you were getting at with the reference to the 8th, but just to really hammer the point home: it feels like either way you look at it, it’s both cruel and/or unusual…

Yeah, so here's why the Constitution doesn't allow the state to round up Americans and send them to be tortured in a foreign gulag because they lit cars on fire:

1) The 14th amendment means the state can't strip you of citizenship - whether naturalized (the case you cite) or U.S. born (post-14th, Congress did not have the power to strip citizenship from anyone born here). Deportation is, by definition, for non-citizens.

2) The 6th amendment means the state can't imprison you without due process, which at the very least includes a trial (courts have ruled this also covers non-citizens)

3) The 4th amendment means the state can't just bust down your door without a warrant because they suspect you may have a bad tattoo

4) The 8th amendment means the state can't send you to a brutal dictator's prison where inmates have been tortured and killed just because you vandalized a car made by the president's top advisor

Also, grow the fuck up. You're 48 and you talk like a 5-year-old who just learned his first bad word

Chamath Palihapitiya @chamath

You’re probably not a total retard so you know that prior to Afroyim v Rusk (1967), Congress had broad power to strip citizenship. You may want to brush up on your Con Law before this issue is revisited and you can do a watercolor amicus brief or whatever chicken scratches you are capable of.

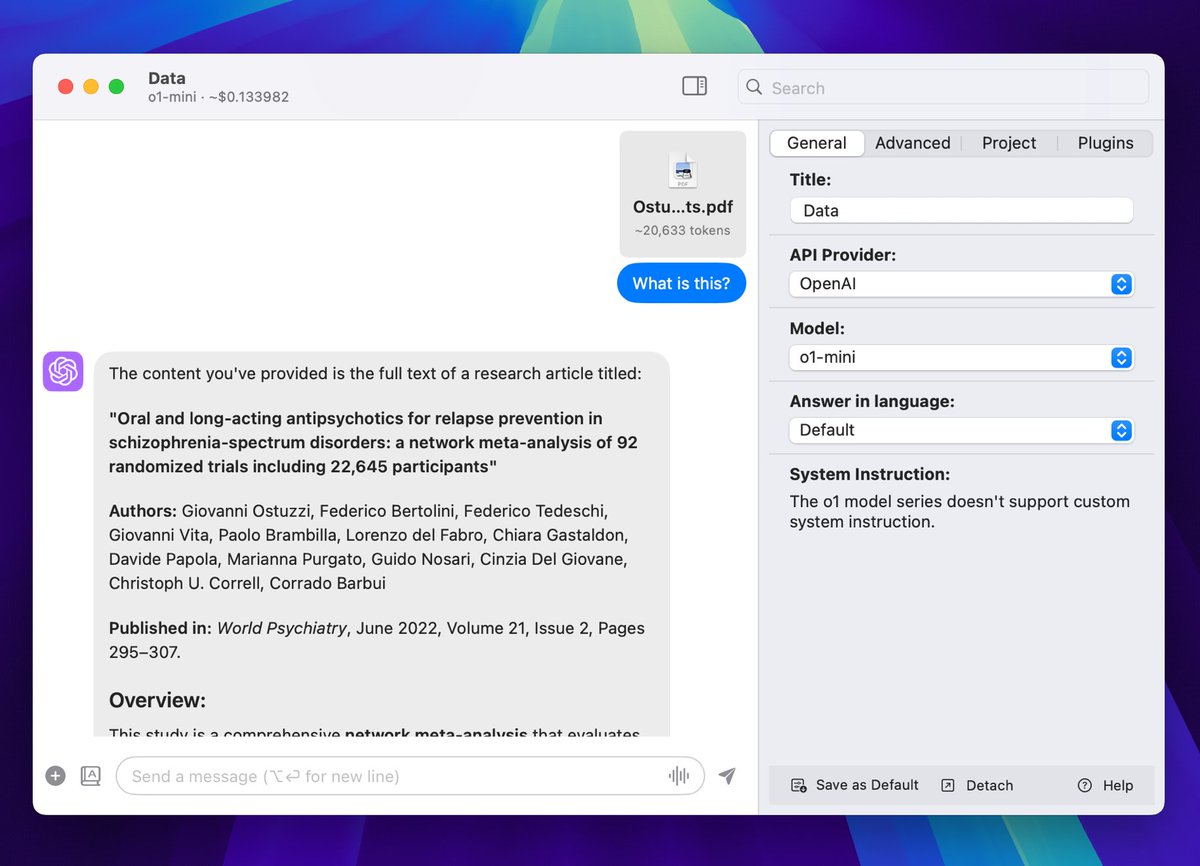

@Bolt__AI What is the secret behind the document analysis for o1? Is it using OCR to process the document and putting it into context?

@daniel_nguyenx @Bolt__AI @AnthropicAI Does it auto cache, or is there something we need to do in the bolt interface to activate this feature?

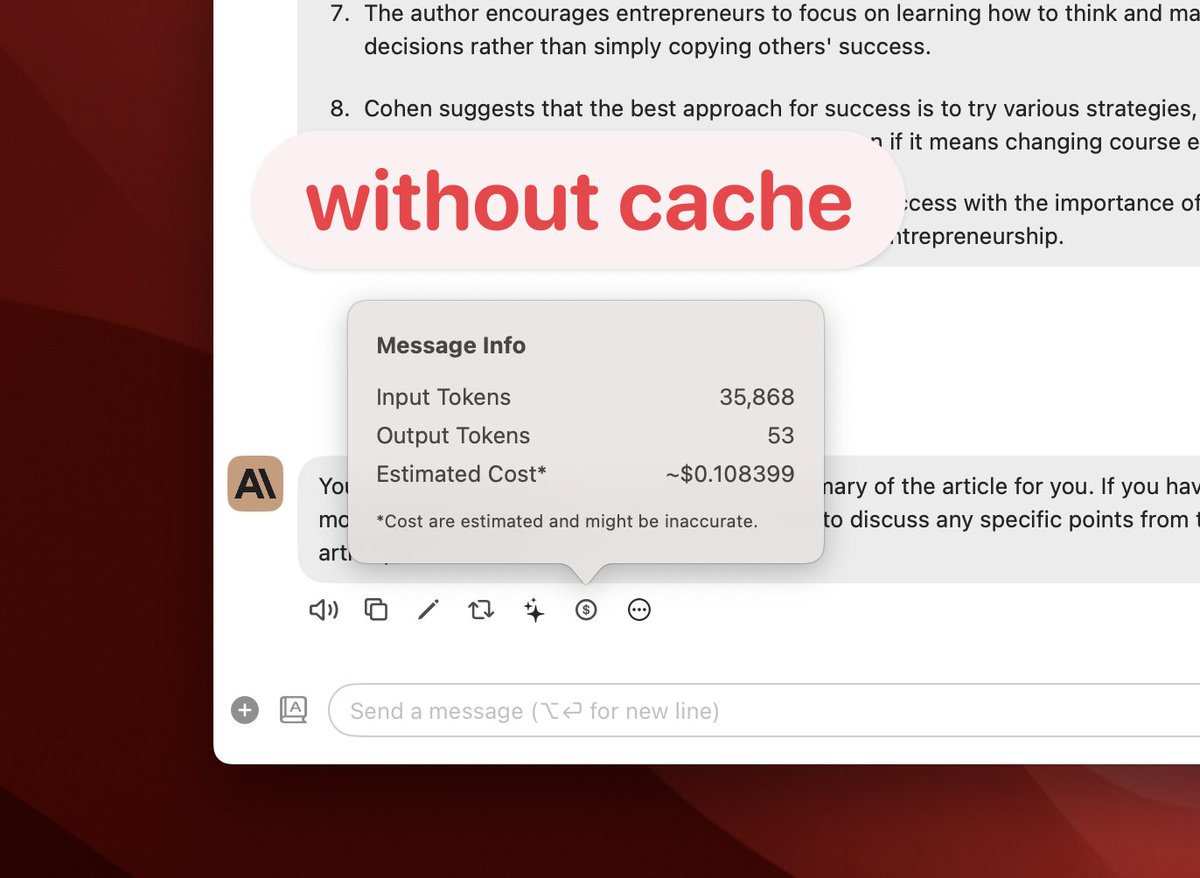

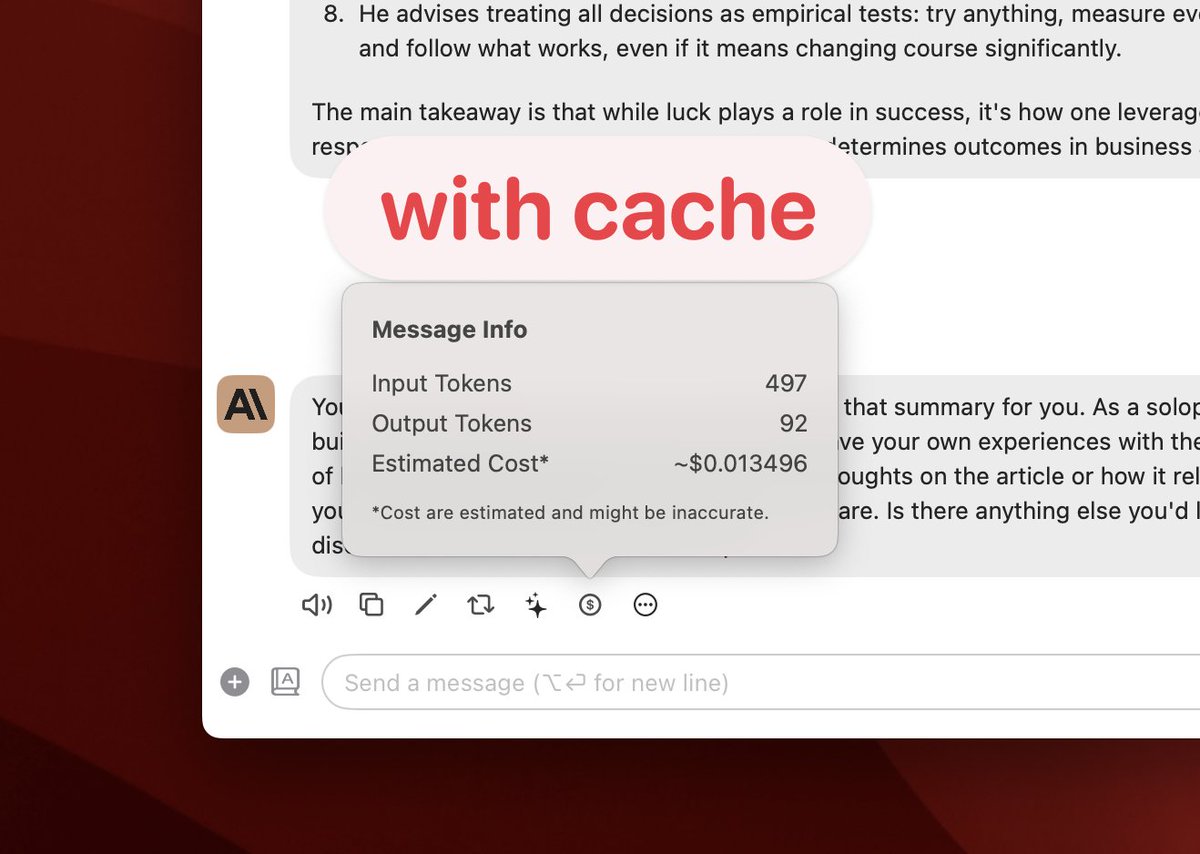

Claude Prompt Caching is amazing 🤯

When sending a subsequent message:

• without cache: uses 35k input tokens, costs ~$0.1

• with cache: uses just 497 tokens, costs ~$0.01

(cost for cache reads: 35k × $0.30 / 1M ≈ $0.01)

Available in @Bolt__AI v1.18.3 ✌️
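The cache arithmetic above can be reproduced with a few lines of Python. This is a rough sketch under stated assumptions, not Bolt's or Anthropic's billing logic: it takes the $0.30 per million cache-read rate from the post, assumes a $3.00 per million base input rate (consistent with the post's ~$0.1 for 35k tokens), treats the 497 tokens as fresh input billed at the base rate, and ignores the surcharge for writing the cache on the first request. The helper names `cost_usd` and `follow_up_cost` are hypothetical.

```python
# Rough input-cost arithmetic for the prompt caching comparison above.
# ASSUMPTIONS: $3.00 per million base input rate (consistent with the
# post's ~$0.1 for 35k tokens) and the post's $0.30 per million
# cache-read rate. Cache-write surcharges on the first request are ignored.
BASE_INPUT_PER_M = 3.00
CACHE_READ_PER_M = 0.30


def cost_usd(tokens: int, price_per_million: float) -> float:
    """Dollar cost of `tokens` tokens at a per-million-token price."""
    return tokens * price_per_million / 1_000_000


def follow_up_cost(context_tokens: int, fresh_tokens: int, cached: bool) -> float:
    """Input cost of a follow-up message that resends `context_tokens` of
    earlier context plus `fresh_tokens` of new input (hypothetical helper)."""
    if cached:
        # The cached prefix is billed at the cache-read rate; only the
        # fresh tokens pay the base input rate.
        return (cost_usd(context_tokens, CACHE_READ_PER_M)
                + cost_usd(fresh_tokens, BASE_INPUT_PER_M))
    # Without caching, every token is billed at the base input rate.
    return cost_usd(context_tokens + fresh_tokens, BASE_INPUT_PER_M)


if __name__ == "__main__":
    context = 35_000  # earlier context resent on each follow-up (from the post)
    fresh = 497       # uncached tokens in the cached follow-up (from the post)
    print(f"without cache: ${follow_up_cost(context, fresh, cached=False):.4f}")
    print(f"with cache:    ${follow_up_cost(context, fresh, cached=True):.4f}")
```

Under these assumed rates the cached follow-up costs roughly a ninth of the uncached one, in line with the post's ~$0.1 versus ~$0.01.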
