Dr. Daniel Bender

@drdanielbender

Yes, I'm obsessed with 🦞 @OpenClaw. No, I won't blindly trust it ◆ I teach you the way of responsible AI: your data, your rules ◆ PhD in computer science ◆ Dad

Yes, we performed extensive optimizations and even established a dedicated benchmark for it.

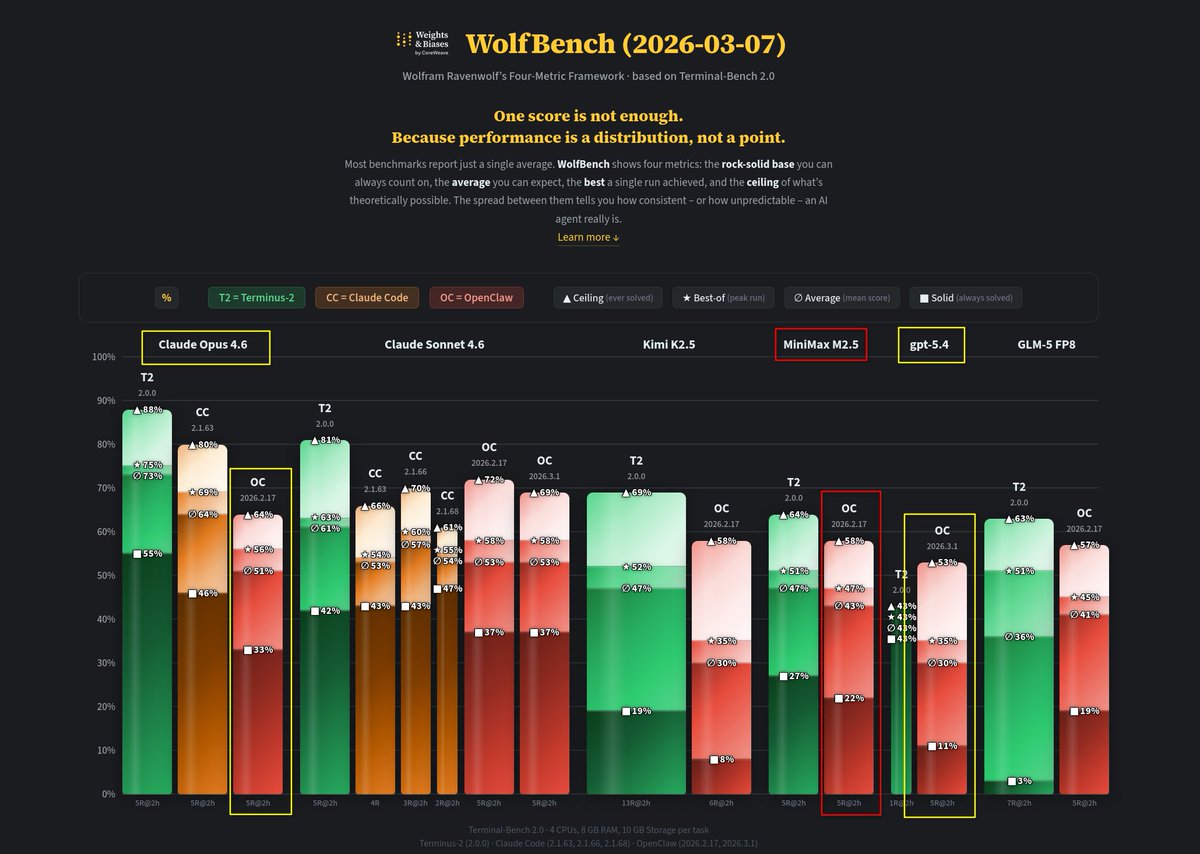

Introducing WolfBench: @WolframRvnwlf's new evaluation framework for models and agents, brought to you by @wandb. Single-score metrics don't adequately describe model performance and capabilities. Here's how the new WolfBench framework solves that problem: wandb.ai/wandb_fc/wolfb…

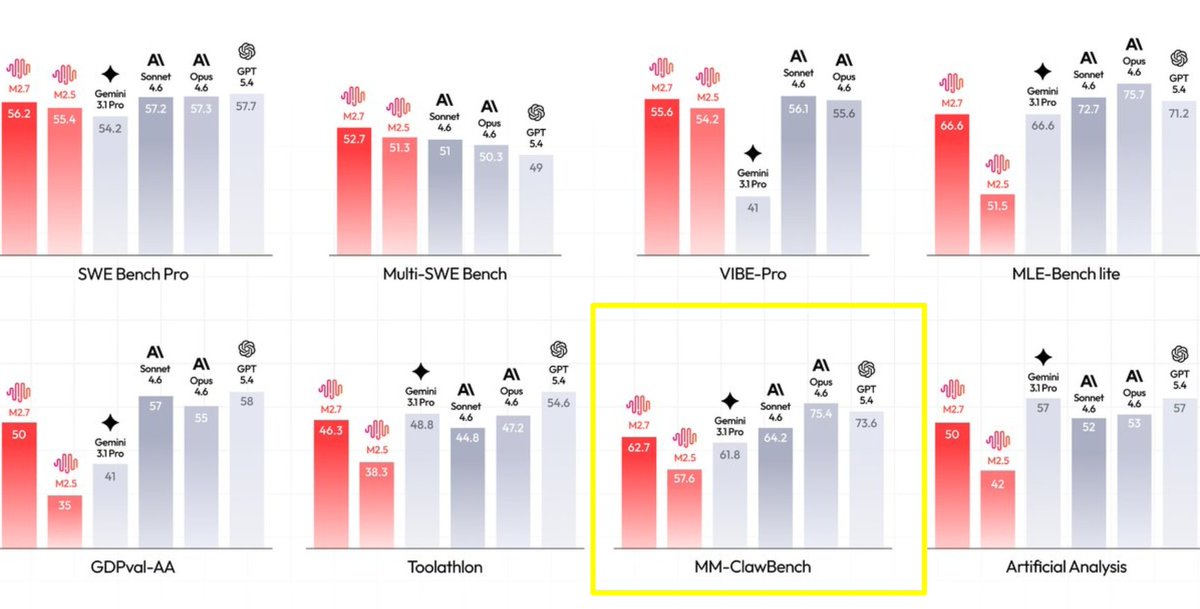

I've been evaluating @openclaw with the popular Terminal-Bench 2.0 framework - interesting initial insights: Sonnet outperforms Opus, aligning with other benchmarks that identify it as superior for agentic tasks. And, surprisingly, GPT 5.4 isn't any better than Chinese models. 👀 I'm continuing the evaluations and will share further insights shortly. Keep an eye on wolfbench.ai. 🐺

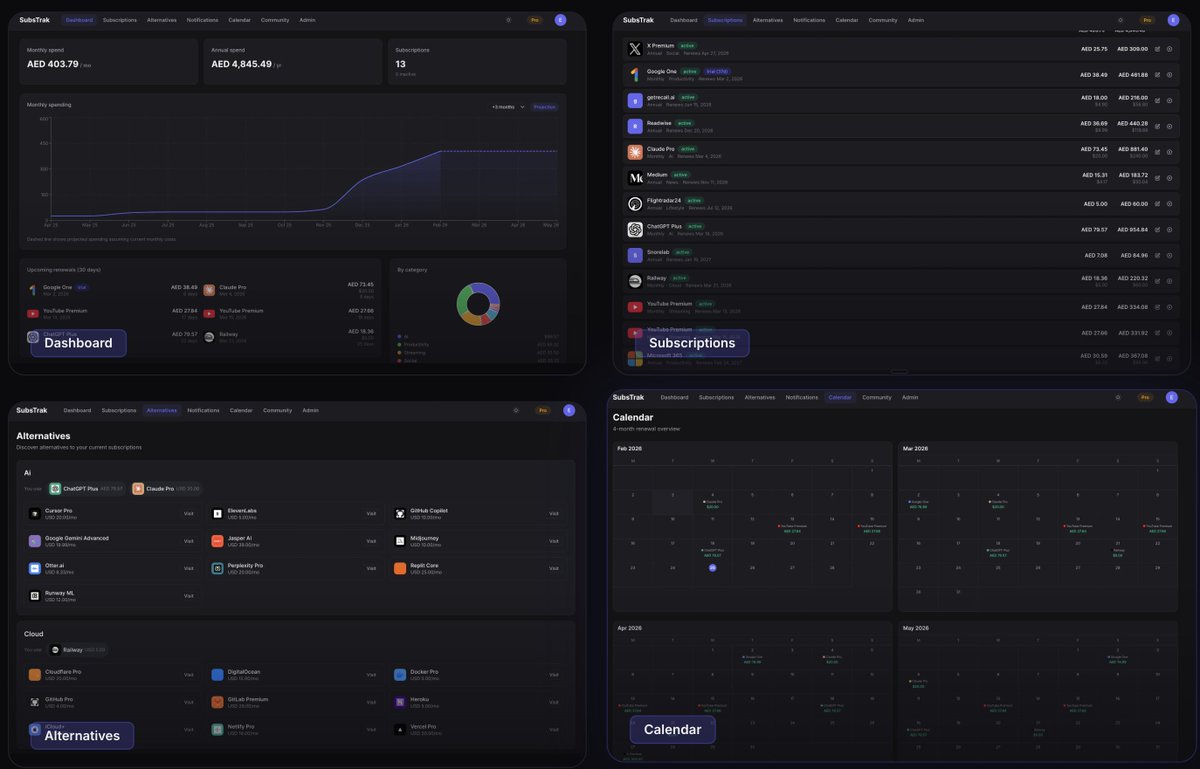

I've wanted to track how much I spend on subscriptions for a while. With the help of AI, it was a lot more straightforward than I envisaged, so I wanted to share the app I created. Check it out at substrak.io

"Last year" very possible you're holding it wrong. UI: should be a lot more tractable with /chrome etc. Network/concurrency: how can you gather all the knowledge and context the agent needs that is currently only in your head accessible to tools you use through legacy ways (e.g. web UIs)? How can you make the things you care about testable? Observable? Legible? The goal is to arrange the thing so that you can put agents into longer loops and remove yourself as the bottleneck. "Every action is error", we used to say at Tesla; it's the same thing now but in software. Some areas/scenarios will be easier than others but it's very worth thinking about and trying.