Fran Algaba

@franalgaba_

making agents safely use money onchain @grimoirexyz / prev. co-founder & cto @gizatechxyz / prev. scaling prod ml infra @adidas @bbva / tech+optimism

The Hermes Agent update you've been waiting for is here.

LLMs are really good at writing code, so why are we giving them 100 different tools instead of just giving them code execution? This idea came up in a conversation, and it just made sense, like it had been right in front of us all along. It feels like a much cleaner way to structure things. Instead of turning the context window into a dumping ground of raw outputs, you let the model write code, process the data, and return only what actually matters. You are not just making things cleaner, you are likely saving a lot of tokens as well. The model only sees the results it needs instead of parsing through noise. This becomes even more obvious with things like web search or scraping. HTML is mostly garbage, and pushing all of it into the context is just inefficient. Filtering it through code first makes far more sense. I haven’t tested this deeply yet, but it’s interesting to see Anthropic leaning in a similar direction. Feels like a strong validation of the idea. Intuitively, this should improve latency, cost, and accuracy by turning the LLM into more of a controller than a processor.
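To make the scraping point concrete, here is a minimal sketch of the kind of script a model could write and run instead of reading a raw page. It uses only Python's standard `html.parser`; the `summarize_html` helper, the `PAGE` sample, and the keyword filter are assumptions made up for illustration, not anything from a specific agent framework.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping script and style blocks."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def summarize_html(html, keyword, max_hits=3):
    """Hypothetical helper: return only the text chunks that mention keyword."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    hits = [c for c in parser.chunks if keyword.lower() in c.lower()]
    return hits[:max_hits]


# Made-up sample page standing in for a scraped document.
PAGE = """
<html><head><style>body { color: red; }</style>
<script>var price = "tracking";</script></head>
<body><nav>Home | About</nav>
<p>The price of the widget is $42.</p>
<p>Unrelated footer text.</p></body></html>
"""

result = summarize_html(PAGE, "price")
print(result)
```

Only the short `result` list would go back into the context window; the markup, styles, and scripts never reach the model.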

The way I work with coding agents changed significantly in the last year.

Started: plan -> implement -> review -> fix
Later: prod spec -> plan ...
Then: prod spec -> ... -> eval
Now: evals -> prod spec -> ...

I now essentially spend 90% of my time working on evals. The difference this makes is indescribable. Almost all code works immediately, design is close to perfect, text is almost there. It takes very little to get it to a usable state. The stronger and clearer the guardrails I give the coding agent, the better it does. And when I start with them, it writes an incredibly clear spec and requirements that are super easy to follow and leave very little room for interpretation. I also try to avoid being overly specific directly. I noticed that when I write the product spec manually, the agent does worse than when it writes the spec itself. It uses language I wouldn't necessarily use myself. And that makes all the difference.
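One way to picture the evals-first order is a tiny harness where the checks exist before any implementation does. This is only a sketch under assumptions: `EvalCase`, `run_evals`, and the toy `draft_greeter` are hypothetical names for illustration, not the setup described in the post.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One eval: a prompt plus a check on the produced output."""
    name: str
    prompt: str
    check: Callable[[str], bool]


def run_evals(candidate, cases):
    """Run candidate on every case; return (pass rate, per-case results)."""
    results = {c.name: bool(c.check(candidate(c.prompt))) for c in cases}
    score = sum(results.values()) / len(results) if results else 0.0
    return score, results


# The evals are written first and act as the guardrails.
CASES = [
    EvalCase("non_empty", "greet Ada", lambda out: len(out) > 0),
    EvalCase("mentions_name", "greet Ada", lambda out: "Ada" in out),
    EvalCase("short", "greet Ada", lambda out: len(out) <= 40),
]


def draft_greeter(prompt):
    """Stand-in for whatever the coding agent produces later."""
    name = prompt.split()[-1]
    return f"Hello, {name}!"


score, results = run_evals(draft_greeter, CASES)
print(score, results)
```

The spec and the implementation can then be iterated on until the pass rate is acceptable, with the cases staying fixed.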

“If someone 50 years ago planted a row of oaks or a chestnut tree on your plot of land, you have something that no amount of money or effort can replicate. The only way is to wait.” lucumr.pocoo.org/2026/3/20/some…

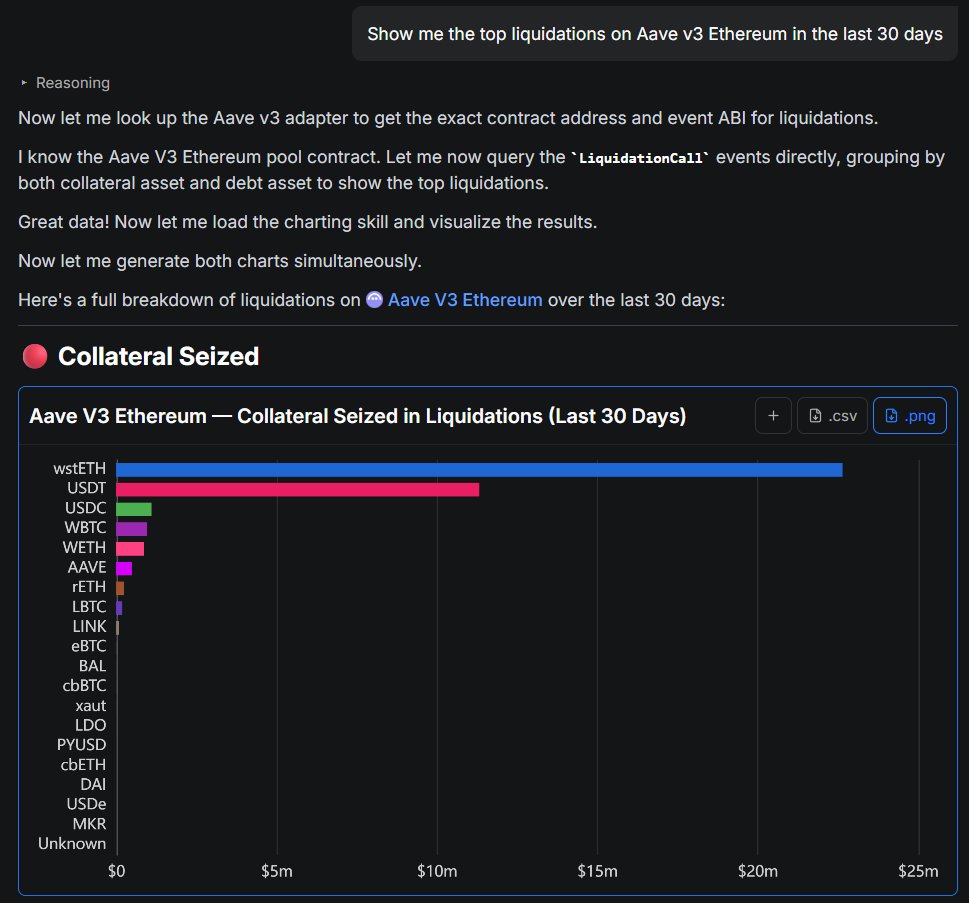

1/ Privy + @0xProject let you build agents that can trade, rebalance, and pay for services autonomously. With this stack, you give agents a wallet, access to liquidity, and guardrails to operate onchain. Here’s how.
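As a rough illustration of what "guardrails" can mean for an agent wallet, the sketch below checks each proposed spend against an allowlist and spending caps before anything would be signed. It does not use the Privy or 0x APIs; `SpendPolicy`, its fields, and the example targets are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SpendPolicy:
    """Hypothetical guardrail: allowlisted targets plus spend caps."""
    allowed_targets: set
    max_per_tx: int
    max_per_day: int
    spent_today: int = 0

    def authorize(self, target, amount):
        """Return (approved, reason); record the spend only when approved."""
        if target not in self.allowed_targets:
            return False, "target not allowlisted"
        if amount <= 0 or amount > self.max_per_tx:
            return False, "amount outside per-transaction limit"
        if self.spent_today + amount > self.max_per_day:
            return False, "daily limit exceeded"
        self.spent_today += amount
        return True, "ok"


# Amounts are in arbitrary integer units for the example.
policy = SpendPolicy(allowed_targets={"dex-router"}, max_per_tx=100, max_per_day=150)
first = policy.authorize("dex-router", 100)
second = policy.authorize("dex-router", 100)
third = policy.authorize("unknown-contract", 1)
print(first, second, third)
```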

1/4 LLMs solve research-grade math problems but struggle with basic calculations. We bridge this gap by turning them into computers. We built a computer INSIDE a transformer that can run programs for millions of steps in seconds, solving even the hardest Sudokus with 100% accuracy.