Mason James

9.1K posts

@masonjames

growing @ https://t.co/we1By3lFRd writing & building @ https://t.co/TCDEQErNF7

🚨 Meta released their Ads MCP and CLI today – if you use Claude or ChatGPT you should install this asap (resources in comments).

What makes this announcement so interesting is that it gives AI tools direct, authorized access to help manage your Meta Ads account through natural language.

1. Comprehensive reporting
   Pull detailed reports, surface performance trends, and quickly understand what is happening across campaigns.
2. Campaign management
   Create and edit campaigns, ad sets, and ads without manually clicking through Ads Manager.
3. Catalog management
   Create product catalogs, add product data, and troubleshoot feed issues faster.
4. Signal diagnostics
   Access signal health and quality insights so you can prioritize the parts of your setup that need attention.

This is a huge step forward in agentic media buying. Will be testing this for the rest of the week!

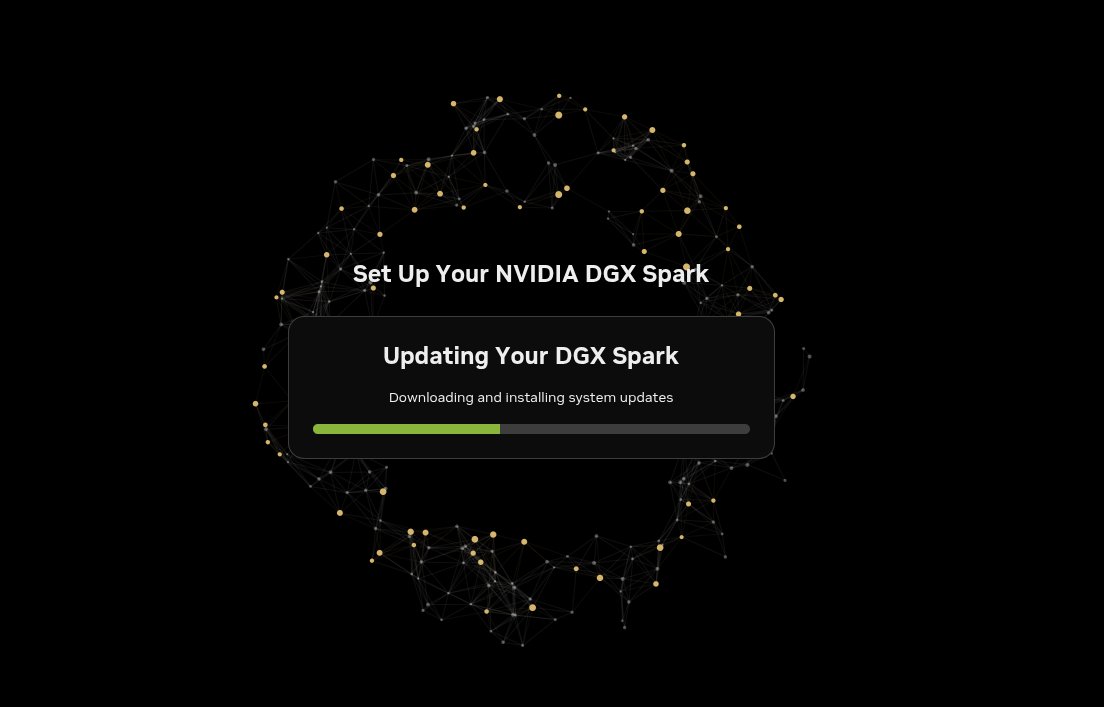

after 21 days through customs, one missed call, one phone number update, and the dgx spark is finally on my desk. state of the art supercomputer, 128gb unified memory, sitting in my lab in bangkok. new monitor and desk arriving in a few days, then this beast goes live and a lot of real work is coming through it. thank you @nvidia and @Coolmark482 for trusting builders and recognizing real ones. i wish you could do this for 10 million more builders around the world, there are so many of us out there building with whatever we can scrape together, hardware like this in the right hands changes what's possible. now i set up.

The quality of your vibecoded slop is horrible. I've seen it. Absolute dogshit. Fortunately, there is a fix. Use this prompt:

I want to clean up my codebase and improve code quality. This is a complex task, so we'll need 8 subagents. Make a sub agent for each of the following:

1. Deduplicate and consolidate all code, and implement DRY where it reduces complexity
2. Find all type definitions and consolidate any that should be shared
3. Use tools like knip to find all unused code and remove, ensuring that it's actually not referenced anywhere
4. Untangle any circular dependencies, using tools like madge
5. Remove any weak types, for example 'unknown' and 'any' (and the equivalent in other languages), research what the types should be, research in the codebase and related packages to make sure that the replacements are strong types and there are no type issues
6. Remove all try catch and equivalent defensive programming if it doesn't serve a specific role of handling unknown or unsanitized input or otherwise has a reason to be there, with clear error handling and no error hiding or fallback patterns
7. Find any deprecated, legacy or fallback code, remove, and make sure all code paths are clean, concise and as singular as possible
8. Find any AI slop, stubs, larp, unnecessary comments and remove. Any comments that describe in-motion work, replacements of previous work with new work, or otherwise are not helpful should be either removed or replaced with helpful comments for a new user trying to understand the codebase-- but if you do edit, be concise

I want each to do detailed research on their task, write a critical assessment of the current code and recommendations, and then implement all high confidence recommendations.
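For context on step 4 of that prompt: a circular dependency is a cycle in the module import graph. Below is a minimal sketch of how such a cycle can be found with a depth-first search. It is not how madge is implemented, only an illustration of the idea, and the module names and the `find_cycle` helper are hypothetical.

```python
from typing import Dict, List

# Visit states for the depth-first search.
UNVISITED, IN_PROGRESS, DONE = 0, 1, 2


def find_cycle(graph: Dict[str, List[str]]) -> List[str]:
    """Return one import cycle as a list of module names, or [] if none exists.

    `graph` maps each module to the modules it imports (hypothetical input;
    a real tool would build this by parsing source files).
    """
    state: Dict[str, int] = {}
    path: List[str] = []  # modules on the current DFS path, in order

    def visit(module: str) -> List[str]:
        state[module] = IN_PROGRESS
        path.append(module)
        for dep in graph.get(module, []):
            dep_state = state.get(dep, UNVISITED)
            if dep_state == IN_PROGRESS:
                # dep is already on the current path, so we closed a loop.
                return path[path.index(dep):] + [dep]
            if dep_state == UNVISITED:
                found = visit(dep)
                if found:
                    return found
        path.pop()
        state[module] = DONE
        return []

    for module in graph:
        if state.get(module, UNVISITED) == UNVISITED:
            found = visit(module)
            if found:
                return found
    return []


# Hypothetical module graphs for illustration.
cyclic = {"app": ["db"], "db": ["models"], "models": ["app"]}
acyclic = {"app": ["db", "models"], "db": ["models"], "models": []}
```

Here `find_cycle(cyclic)` walks `app`, `db`, `models` and then meets `app` again while it is still on the path, so it reports that loop, while `find_cycle(acyclic)` returns an empty list.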

I think the adaptive thinking requirement in Claude Opus 4.7 is bad in the ways that all AI effort routers are bad, but magnified by the fact that there is no manual override like in ChatGPT. It regularly decides that non-math/code stuff is "low effort" & produces worse results.

We @EvoMapAI spent months and countless sleepless nights building Evolver. A well-resourced team behind Hermes Agent "reinvented" it in just 30 days.

● Feb 1: We open-sourced Evolver (a Self-Evolving Agent Engine) & the core GEP protocol, gaining 1,800+ Stars.
● Mar 9: Hermes Agent hastily created their repo and launched.

We thought great minds simply thought alike, until we tore down their codebase and found a staggering level of "structural cloning":

❌ 1:1 copy of the Task Loop & Asset Extraction paradigm
❌ 1:1 copy of our 3-Tier Memory System (Factual + Procedural + Search)
❌ 1:1 copy of Periodic Reflection & Dynamic Skill Loading

They didn't just take our open-source logic; they repackaged our proudest concept, "Self-Evolution", as their own core selling point. Took everything. Zero attribution.

Big teams might have louder megaphones, but commit timestamps don't lie. We aren't here to play judge. We're just putting the code comparisons on the table. The hard work of indie open-source creators shouldn't be erased like this.

Full architectural breakdown and code evidence 👇: evomap.ai/blog/hermes-ag…