Pinned Tweet

Omnia

509 posts


@omnia_io

Building the spatial intelligence layer for enterprise with the power of vertical AI agents.

United States · Joined February 2022

740 Following · 6.3K Followers

The Open-Ended Architecture

Omnia GenUI is input-agnostic by design. The intent classifier sits behind a normalized signal interface that accepts any physiological input source. Today that's:

Apple Watch → iPhone → Zenith via HealthKit (HR, HRV, SpO₂, workout state)

Garmin devices via the Zenith's native ANT+/Bluetooth bridge (power, cadence, pace)

Neural Band (EMG) via direct BLE to the companion app (muscular activation onset, amplitude envelope, sustained contraction flag; sampled at 20 Hz)

Standard BLE sensors (heart-rate straps, power meters) via the Zenith's direct sensor pairing

The architecture treats all of these as competing or complementary streams feeding a single classifier. The Neural Band has the lowest latency and highest signal specificity (intent before conscious effort). HealthKit has the richest longitudinal data (HRV baselines, historical session data for the "model gets smarter" flywheel).

The Zenith then renders whatever display config the policy engine outputs — it has no opinion on what the source was.
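A minimal sketch of that flow, assuming each source adapter scales its readings to a 0..1 range before they reach the classifier. Every name here (`NormalizedSample`, `classifyIntent`, `renderPolicy`, the source weights, the thresholds, and the "glance" density level) is hypothetical and chosen for illustration; none of it is taken from Omnia's actual implementation.

```typescript
// Hypothetical types: a single normalized shape for every input source.
type SourceId = "healthkit" | "garmin" | "neural-band" | "ble-sensor";

interface NormalizedSample {
  source: SourceId;
  metric: string;      // e.g. "heartRate", "emgAmplitude"
  value: number;       // assumed to be scaled to 0..1 by the source adapter
  timestampMs: number;
}

type Intent = "low" | "moderate" | "high";

interface DisplayConfig {
  density: "whisper" | "glance" | "directive"; // "glance" is an invented middle level
  widgets: string[];
}

// Illustrative weights only: the EMG band is trusted most because the text
// describes it as the lowest-latency, most specific signal.
const SOURCE_WEIGHT: Record<SourceId, number> = {
  "neural-band": 1.0,
  "healthkit": 0.6,
  "garmin": 0.6,
  "ble-sensor": 0.5,
};

// Weighted average across whatever streams are present; thresholds are assumptions.
function classifyIntent(samples: NormalizedSample[]): Intent {
  if (samples.length === 0) return "low";
  let weighted = 0;
  let total = 0;
  for (const s of samples) {
    const w = SOURCE_WEIGHT[s.source];
    weighted += w * s.value;
    total += w;
  }
  const score = weighted / total;
  if (score >= 0.7) return "high";
  if (score >= 0.4) return "moderate";
  return "low";
}

// The policy step sees only the intent, never the source that produced it.
function renderPolicy(intent: Intent): DisplayConfig {
  switch (intent) {
    case "high":
      return { density: "directive", widgets: ["nextStep"] };
    case "moderate":
      return { density: "glance", widgets: ["heartRate", "pace"] };
    case "low":
      return { density: "whisper", widgets: [] };
  }
}
```

Because `renderPolicy` takes only an `Intent`, swapping or adding an input source changes the adapters and weights but not the display side.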


We built a UI that reads your body.

Omnia's GenUI uses EMG wristband data to classify physiological intent in real time and render only what you need at that moment. It's called BB-UAII, a policy engine that maps body state to display config.

The preview video is based on Rokid hardware (open SDK, real display).

The Ray-Ban Meta mockups in the deck show where we're going — but Meta's current SDK doesn't support dynamic overlay rendering yet, so we're clear about that.

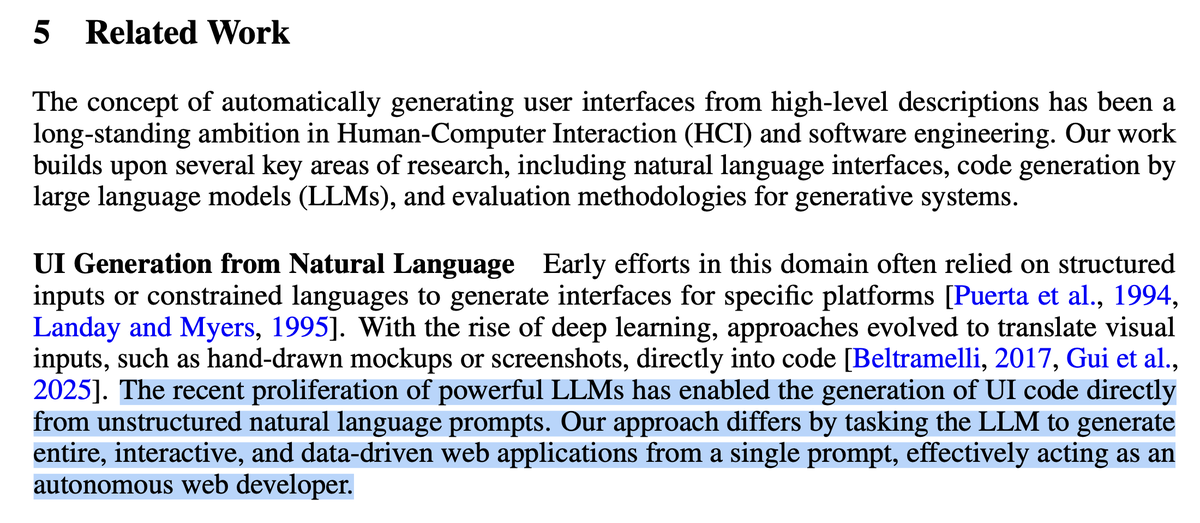

This isn't a novel idea. Google Research (Leviathan et al., 2025) showed that LLMs can generate full UIs on the fly, but applied it to web apps. CHI '26 is asking what it means for HCI.

@tambo_ai is building the React primitive.

We're asking what it means when the hardware is on your face and the signal comes from your muscles.

Enterprise applications are the obvious first vertical: factory floors, operating rooms, logistics — anywhere cognitive load is the bottleneck.

The interface is not configured. It is learned.

Building a demo on @Hi_CYBERSIGHT and considering @goEverySight as well.


The @Hi_CYBERSIGHT Zenith is a strong prototype target for Omnia GenUI. It's a 39g binocular waveguide HUD with adjustable brightness from 10–1500 nits, IP54 protection, and up to 8 hours of battery. Crucially, it syncs with iPhone, Android, Apple Watch, and Garmin devices to pull real-time sensor data including power, heart rate, and pace via Bluetooth. The display architecture is already built to accept a streaming data feed and render it in the FOV. What Omnia adds is the intelligence layer that decides what to render.

The Zenith's phone-mediated connection to Apple Watch opens a clean data pipeline. Here's how Omnia taps it:

1. HealthKit as the data bus. Common readable types include heart rate, HRV (heartRateVariabilitySDNN), SpO₂, and active workouts. These are the exact signals Omnia's intent classifier needs. The iOS companion app requests granular HealthKit permissions per data type, then runs a persistent background query during an active workout session.

2. Near-real-time streaming via HKAnchoredObjectQuery. To get data in near real time, you start an HKWorkoutSession to tell the Watch it's in active monitoring mode, then start an HKAnchoredObjectQuery. Its updateHandler fires every time HealthKit receives a new sample, rather than polling on a fixed schedule. For heart rate specifically, this gives updates every few seconds during a workout. This feeds Omnia's intent classifier at a cadence appropriate for state transitions (low → moderate → high intent) without over-polling.

3. HRV as the deeper signal. Beyond raw HR, Omnia queries HKQuantityTypeIdentifier.heartRateVariabilitySDNN, the SDNN measure of HRV (the standard deviation of beat-to-beat intervals, not RMSSD) that Apple Watch exposes. This is what the BB-UAII policy engine uses to distinguish sustained high effort from a momentary spike, which is the difference between showing a full directive and letting the display settle back to whisper mode.

4. Important constraint to be honest about. You cannot access real-time heartbeat data directly; higher-frequency heart rate samples are only available within HealthKit for samples collected during Workouts and ECG sessions. Raw PPG is not exposed. This means Omnia's Apple Watch pipeline is latency-bounded by Apple's sensor-to-HealthKit write cycle: practically 3–5 seconds for HR, longer for HRV.

That's fine for display density decisions (which operate on 5–15 second state windows) but rules out sub-second haptic/audio sync without a dedicated wristband like the Neural Band.
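The state-window idea can be sketched as follows. This is an illustration under stated assumptions, not the BB-UAII engine: it uses heart rate alone as a stand-in (the text describes HRV as the deciding signal), and the `HrSample` type, the 10-second window, the 150 bpm threshold, and the three-sample minimum are all hypothetical values picked for the example.

```typescript
// Hypothetical sample shape, e.g. what a companion app might forward
// each time a new heart-rate sample arrives.
interface HrSample {
  bpm: number;
  timestampMs: number;
}

type Effort = "baseline" | "spike" | "sustained";

// Classify the most recent window of samples.
// - "sustained": at least 3 samples in the window and all of them are high
// - "spike":     some, but not all, samples are high (or too few samples to tell)
// - "baseline":  no high samples, or no samples at all
function classifyEffort(
  samples: HrSample[],
  nowMs: number,
  windowMs: number = 10000, // assumed window, inside the 5-15 s range in the text
  highBpm: number = 150,    // assumed threshold, would be per-user in practice
): Effort {
  const recent = samples.filter(
    (s) => s.timestampMs > nowMs - windowMs && s.timestampMs <= nowMs,
  );
  if (recent.length === 0) return "baseline";
  const high = recent.filter((s) => s.bpm >= highBpm).length;
  if (high === 0) return "baseline";
  return recent.length >= 3 && high === recent.length ? "sustained" : "spike";
}
```

With samples arriving every 3 seconds or so, a 10-second window holds three or four readings, which is why a decision at this granularity tolerates the HealthKit latency described above while sub-second feedback does not.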


"The recent proliferation of powerful LLMs has enabled the generation of UI code directly from unstructured natural language prompts. Our approach differs by tasking the LLM to generate entire, interactive, and data-driven web applications from a single prompt, effectively acting as an autonomous web developer."


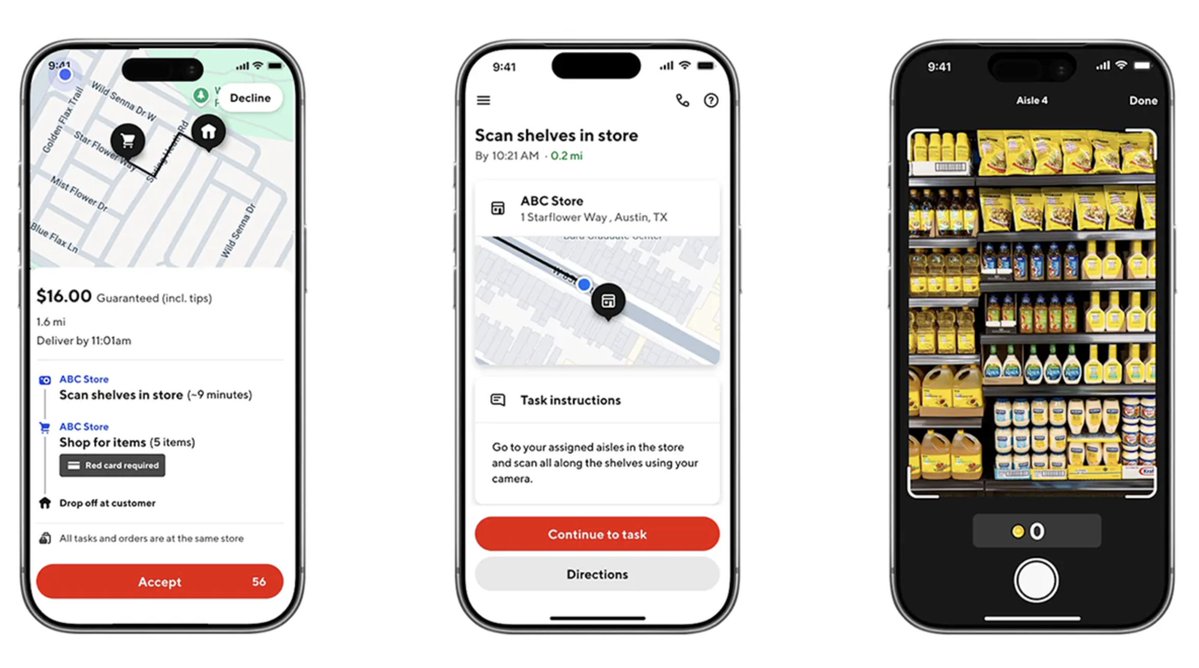

@WilsonCompanies @toddsaunders Would love your take on whether these workers would wear smart glasses for real world work!


@toddsaunders I'm in the trades - this is hilariously common now.

We hosted a dinner a few weeks ago and it was all plumbing/hvac owners and every single one was actively building stuff for their business.


I know Silicon Valley startups don't want to hear this.....

But the combination of someone in the trades with deep domain expertise and Claude Code will run circles around your generic software.

I talked to Cory LaChance this morning, a mechanical engineer in industrial piping construction in Houston. He normally works with chemical plants and refineries, but now he also works with the terminal.

He reached out in a DM a few days ago and I was so fired up by his story, I asked him if we could record the conversation and share it.

He built a full application that industrial contractors are using every day. It reads piping isometric drawings and automatically extracts every weld count, every material spec, every commodity code.

Work that took 10 minutes per drawing now takes 60 seconds. It can do 100 drawings in five minutes, saving days of time.

His co-workers are all mind blown, and when he talks to them, it's like they are speaking different languages.

His fabrication shop uses it daily, and he built the entire thing in 8 weeks. During those 8 weeks he also had to learn everything about Claude Code, the terminal, VS Code, everything.

My favorite quote from him was when he said, "I literally did this with zero outside help other than the AI. My favorite tools are screenshots, step by step instructions and asking Claude to explain things like I'm five."

Every trades worker with deep expertise and a willingness to sit down with Claude Code for a few weekends is now a potential software founder.

I can't wait to meet more people like Cory.


@toddsaunders Hi, all of these blue-collar workers should be asked what advantages they could get when these AI agents are taken off the desktop and worn on their faces via smart glasses. That’s a real unlock.


@hendrikomg Have you thought of using smart glasses like Project ARIA for this purpose?


The goal is to have an agent integrated into enterprise portals that makes information appear in front of a worker on a factory floor when they need it.

Or think of health/fitness, generating UI based on physiological state.

Websites > actions > UIs would be for consumer use cases.

But this won’t matter unless the underlying distribution paradigm changes from siloed mobile apps to agent-driven orchestration, similar to AI app stores for glasses. @mrmagan_


@PetruzzelliAldo @expo @omnia_io i believe we're moving toward smaller UI primitives in general.

clever idea turning sites into bite-sized chunks for glasses.


generative UI just went mobile.

create funky beats or the app store's next big hit.

multi-turn, streaming, fullstack out of the box.

tambo now supports react native. demo built with @expo.


Smart glasses software that runs AI agents orchestrating complex workflows in enterprise environments and generates UIs at runtime on different hardware platforms, with published research on preparing the byproduct of these deployments (egocentric data) for labeling and distribution.


What ARIA adds:

Richer motion capture

More cameras, 6DOF, eye tracking, and IMU → better motion and intent models.

Dense labeling layer

Ray-Ban data gives session context (procedure, QC, timestamps). ARIA video becomes the substrate for frame-level and 3D annotations.

Industrial egocentric AI

Combined dataset: “expert worker performing front suspension install” with QC pass/fail, timestamps, and procedure. Directly usable for embodied AI and industrial understanding.

Humanoid robotics

Fine-grained hand/wrist motion on real assembly tasks supports RL and imitation learning for robotic assembly.

Gesture-triggered AR

Recognized motions can drive AR overlays instead of buttons or voice.

Bridge idea:

The Ray-Ban phase builds the pipeline, consent model, QC links, and session structure. When ARIA arrives, those flows plug into ARIA hardware so the same environments and workflows produce higher-density data without redesigning the system.
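One way to picture the bridge is a session record that already has a slot for dense annotations. The sketch below is an assumption about what such a record could look like, not Omnia's schema: `SessionContext`, `FrameAnnotation`, `LabeledSession`, and `attachAnnotations` are hypothetical names, and the consent check is an illustrative policy.

```typescript
// Hypothetical record captured in the Ray-Ban phase.
interface SessionContext {
  sessionId: string;
  procedure: string;          // e.g. "front suspension install"
  qcResult: "pass" | "fail";
  startMs: number;
  endMs: number;
  consentGiven: boolean;
}

// Hypothetical frame-level label that richer hardware could add later.
interface FrameAnnotation {
  timestampMs: number;
  label: string;              // e.g. "grasp", "torque"
}

interface LabeledSession {
  context: SessionContext;
  frames: FrameAnnotation[];
}

// Attach annotations that fall inside the session's time range, in time order.
// Returns null when consent is missing, so unconsented data never leaves this step.
function attachAnnotations(
  context: SessionContext,
  annotations: FrameAnnotation[],
): LabeledSession | null {
  if (!context.consentGiven) return null;
  const frames = annotations
    .filter((a) => a.timestampMs >= context.startMs && a.timestampMs <= context.endMs)
    .sort((a, b) => a.timestampMs - b.timestampMs);
  return { context, frames };
}
```

Under this shape, the session context stays the same across hardware generations and only the density of `frames` changes.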


Figure. Tesla. Boston Dynamics. Every major robotics and autonomy player is racing to collect the motion data that will train the next generation of embodied AI — data from real people doing real tasks in the real world.

Given our Meta Catalyst invite and chats with Dakkota Integrated Systems, we decided to build this use case, designed so it can grow into a Project ARIA Gen 2 deployment.

Why?

A few weeks back, Tom Shannon (our former CTO) shared a demo of point-of-view hand tracking streaming from Meta Ray-Ban smart glasses—collecting the kind of data that matters for robotics and workforce training in warehouses and manufacturing.

That prototype uses Apple’s on-device Vision framework for real-time hand pose detection (no cloud round-trip), and it’s the foundation for what we’re proposing to build for the Meta Catalyst grants: a hands-free data-recording app for automotive assembly that can scale to Project ARIA Gen 2.

The problem this would solve

~3.5 million skilled manufacturing jobs need to be filled this decade, while experienced workers retire faster than their tacit knowledge can be captured. Dakkota—a Tier 1 supplier of bumpers, fascias, suspension modules, and more for Ford—is one of the most procedure-heavy environments in North American automotive. We’re building the infrastructure to capture expert motion patterns at the point of work.

What we built

A full-stack app that lets workers record task demonstrations using smart glasses:

Real-time hand pose overlay (Apple Vision + Meta Wearables DAT)

Voice commands (“start”, “done”) for hands-free operation

On-device VLM (FastVLM / MLXVLM) for step validation

Persona-based AI chat for context-aware questions

Backend: Firebase Auth + Firestore + Google Cloud Run API

Frontend: React Native, Expo, TypeScript

Tech stack

Mobile: React Native, Expo, TypeScript

Glasses: Meta Wearables Device Access Toolkit (DAT)

On-device ML: Apple Vision (hand pose), MLXVLM (FastVLM)

Backend: Firebase, Google Cloud Run

ARIA Gen 2 path

This deployment is structured as a bridge: we’re using Meta Ray-Ban today to build the data flows, consent model, and session context that a Project ARIA Gen 2 rollout can plug into. Every design choice—edge buffering, QR-triggered labeling, consent flows—assumes ARIA as the next step.
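The hands-free recording loop described above ("start" and "done" voice commands around a buffered capture) can be sketched as a small state machine. This is a simplified illustration, not the app's code: `Recorder`, `onVoiceCommand`, and `onFrame` are hypothetical names, frames are represented by string IDs, and the buffer lives in memory rather than in whatever edge storage the real app uses.

```typescript
type RecorderState = "idle" | "recording";

// Hypothetical recorder: frames accumulate in a buffer while recording
// and move to `completed` as one demonstration when the worker says "done".
interface Recorder {
  state: RecorderState;
  buffer: string[];
  completed: string[][];
}

function newRecorder(): Recorder {
  return { state: "idle", buffer: [], completed: [] };
}

// Only "start" (when idle) and "done" (when recording) change state;
// anything else is ignored so stray speech cannot corrupt a session.
function onVoiceCommand(r: Recorder, command: string): Recorder {
  const word = command.trim().toLowerCase();
  if (word === "start" && r.state === "idle") {
    return { ...r, state: "recording", buffer: [] };
  }
  if (word === "done" && r.state === "recording") {
    return { state: "idle", buffer: [], completed: [...r.completed, r.buffer] };
  }
  return r;
}

// Frames that arrive while idle are dropped rather than buffered.
function onFrame(r: Recorder, frameId: string): Recorder {
  return r.state === "recording" ? { ...r, buffer: [...r.buffer, frameId] } : r;
}
```

Each function returns a new `Recorder` instead of mutating the old one, which keeps the sketch easy to test and maps onto a reducer in a React Native app.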

@PetruzzelliAldo


BREAKING: China's autonomous "killer robots" are on track to serve its military on the battlefield within two years, setting a course for a new age of AI-powered warfare which one expert called "the greatest danger to the survival of humankind."

Remote forms of warfare, from drones to cyberattacks, have played an increasingly central role in this century's theatres of war. Control of the skies with unmanned aerial vehicles has been a critical issue in the ongoing war in Ukraine, and last week, the U.S. Department of Defense unveiled a fresh $1 billion investment to upgrade its drone fleet.

Several major powers have taken this development a step further, and begun to develop fully autonomous, AI-powered "killer robots" to replace their soldiers on the battlefield.

"I would be surprised if we don't see autonomous machines coming out of China within two years," Francis Tusa, a leading defence analyst, told National Security News. He added that China was developing new AI-powered ships, submarines, and aircraft at a "dizzying rate."

"They are moving four or five times faster than the States," he warned.

China and Russia are already reported to have collaborated on the development of AI-powered autonomous weaponry. Per Newsweek


@bradkowalk Would love to see how it integrates with our work on smart glasses


Reach out if you want to work together

Website: aiautocomplete.tryhero.app

Press release: aiautocomplete.tryhero.app/pressrelease


I’m very excited to announce AI Autocomplete

AI Autocomplete is a breakthrough patented technology that supercharges natural language input, unlocking 10x faster search and commerce, advertising, and powerful augmented reality. Available for use as an SDK.

This solves a fundamental problem of today’s chat interfaces: they are good at single-step requests, but any multi-step action (like booking a flight or purchasing goods) quickly becomes a back-and-forth 'game of 10 questions'.

While working on our own assistant, we realized the core driver of this problem is a human one: People don’t know everything they need to say upfront, for every action you could do on the internet. And to solve it, we would need to think about how to marry design and technology in a new way.

AI Autocomplete solves this, and can now plug into chat interfaces to guide you in real-time, with everything you would need to say, upfront. So you can do anything you can do on the internet in one shot. No more back and forth.

As a result, this unlocks multiple AI breakthroughs:

1. 10x faster search and commerce

2. Smarter (and far lower cost) media generation

3. Natural language advertising

4. Powerful, lightweight Augmented Reality

From here, we’ll be using this for Hero, but we also want others to use it too since this is an industry-wide problem. So if you have a product that could benefit from AI Autocomplete and want to work with us, reach out below!

Shoutout to @seunglee1b who helped think of and patent this nearly 3 years ago! And shoutout to the entire @hero_assistant team that keeps innovating on the next generation of AI products
