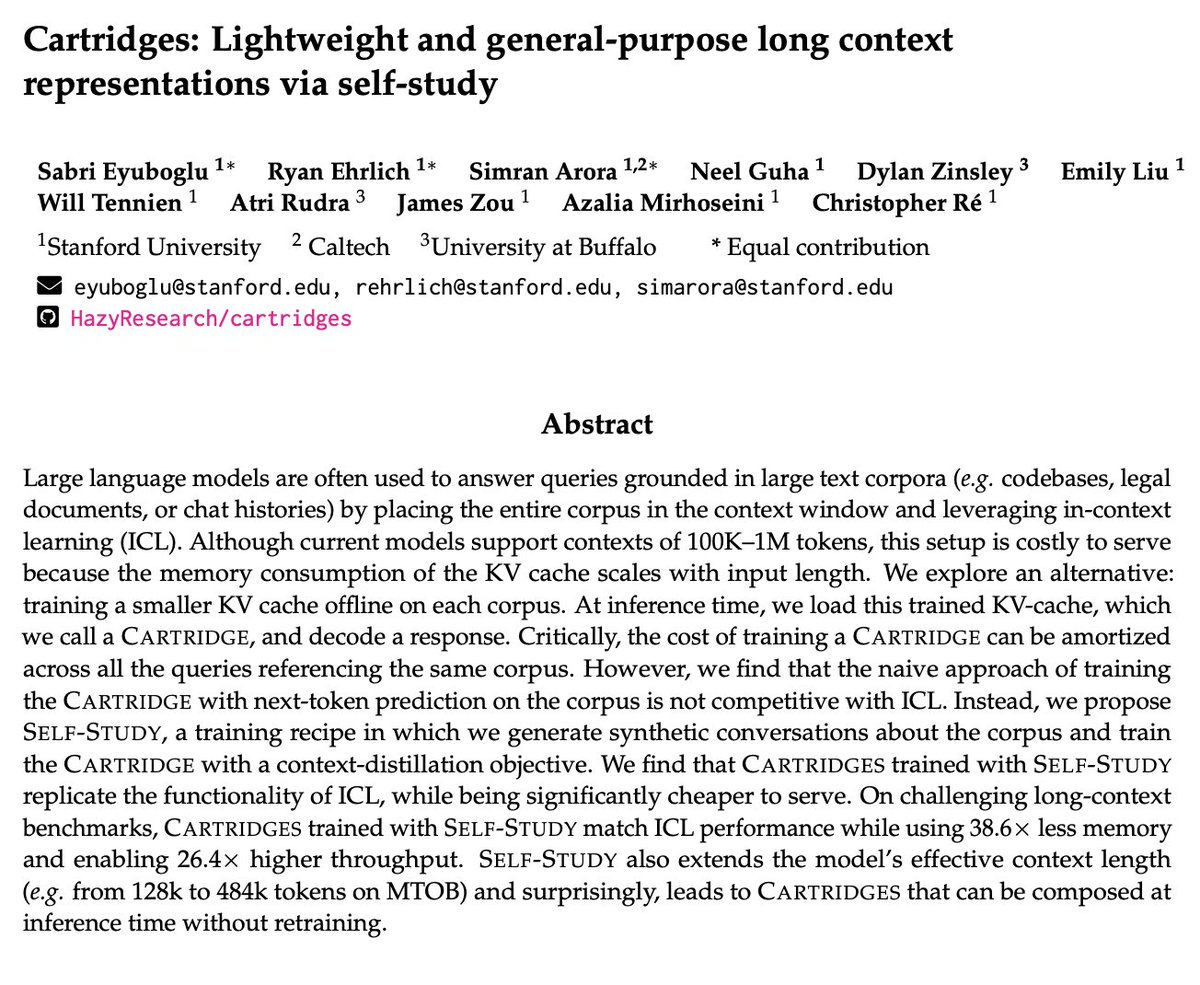

When we put lots of text (e.g., a code repo) into an LLM's context, cost soars because of the KV cache’s size. What if we trained a smaller KV cache for our documents offline? Using a test-time training recipe we call self-study, we find that this can reduce cache memory by 39x on average (enabling 26x higher throughput in tokens/s and lower time to first token) while maintaining quality. These smaller KV caches, which we call cartridges, can be trained once and reused across different user requests! GitHub: HazyResearch/cartridges
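
To get a feel for why long contexts are expensive, here is a back-of-the-envelope sketch of KV cache memory. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) and the 128k-token document are hypothetical numbers chosen for illustration, not figures from our experiments; the 39x factor is the average reduction quoted above, applied here as a simple ratio.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to hold the keys and values for num_tokens tokens."""
    # Factor of 2: one key vector and one value vector
    # per token, per layer, per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value * num_tokens


# Hypothetical model shape and document length (assumptions, see text).
full = kv_cache_bytes(num_tokens=128_000, num_layers=32, num_kv_heads=8, head_dim=128)

# Average memory reduction quoted in the post, used purely as a ratio.
REDUCTION = 39
cartridge = full / REDUCTION

GIB = 2**30
print(f"full KV cache:  {full / GIB:.2f} GiB")
print(f"39x smaller:    {cartridge / GIB:.2f} GiB")
```

Under these assumptions the full cache costs 128 KiB per token, so memory grows linearly with document length and is paid again for every concurrent request that loads the same document. That is the cost a smaller, reusable cache is meant to cut.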