Vivek Kalyan

@vivekkalyansk

reinforcement learner @CoreWeave

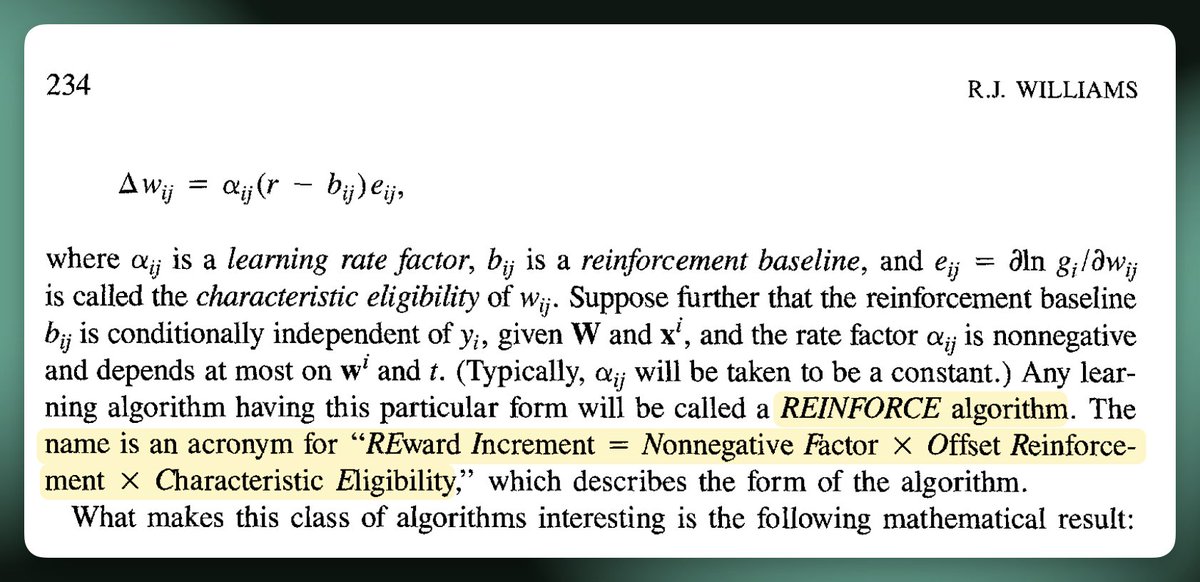

BIRD might be the most egregious backronym I’ve seen in AI recently

Gemma4 is amazing. You'll read that everywhere. Let's focus on what is HUGE here: the revenge of dense models... Throw away your B200s, not needed anymore. Throw away the millions of lines of code we had to write to make MoEs faster, keep training stable, etc. Throw away your router-aware kernel, your EP DeepGEMM, throw away the auxiliary loss function. Welcome to simplicity, dense is the new king. FINALLY, hating MoEs is back to being chad. For those who know me: I was always an MoE doomer.

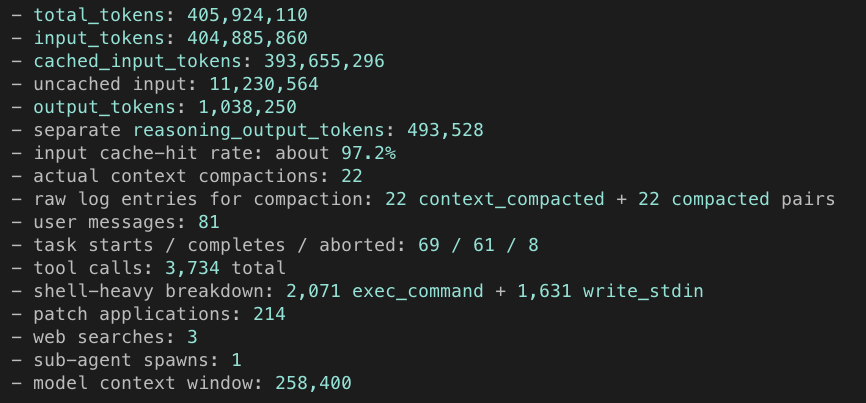

Earlier this week, we published our technical report on Composer 2. We're sharing additional research on how we train new checkpoints. With real-time RL, we can ship improved versions of the model every five hours.

We're releasing a technical report describing how Composer 2 was trained.

@saranormous @karpathy @NoPriorsPod Why is he not at a frontier AI lab at the most pivotal time in human history since at least the industrial revolution?