CoreLumen

303 posts

@corelumen

Nicholas Blanchard - Designer, developer https://t.co/zatC4DbNDK

Shawnee, KS · Joined April 2026

69 Following · 23 Followers

Pinned Tweet

Founder of Corelumen: corelumen.io

Check out my projects!

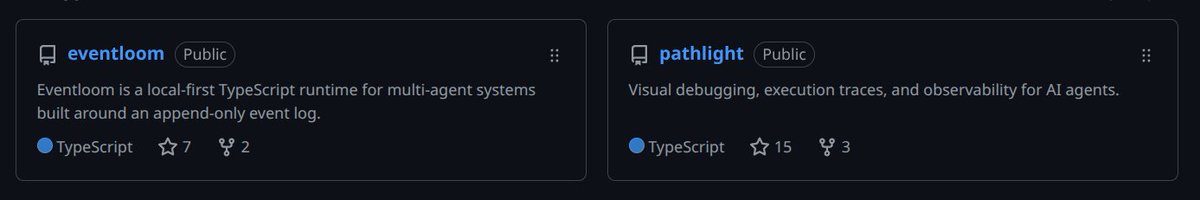

Pathlight: AI agent stack trace. See what your agent is doing, debug, and fix all in one place. syndicalt.github.io/pathlight

Eventloom: Immutable, traceable agent logging. Integrated with Pathlight for visualization. syndicalt.github.io

ClearDay: A PCOD/PCOS habit tracker/coach. clearday.care

Provara: Adaptive LLM routing gateway. Save money and avoid regression! provara.xyz

Divita: Blogs to books/magazines, podcasts, reading circles. Where your words find voice. divita.app

Coming soon:

Specora: AI-native IT service management. If a human touches a ticket, something went wrong.

Ampline: AI-native electrical industry estimating/small business management software.

specora-core works, though it has a limited number of generators

contract-driven development

there are a few demos to show the range of apps that can currently be generated from contracts

specora-core will spin up infra + a healer, monitor logs, and fix runtime errors

code becomes an ephemeral artifact, contracts are the source of truth

github.com/syndicalt/spec…

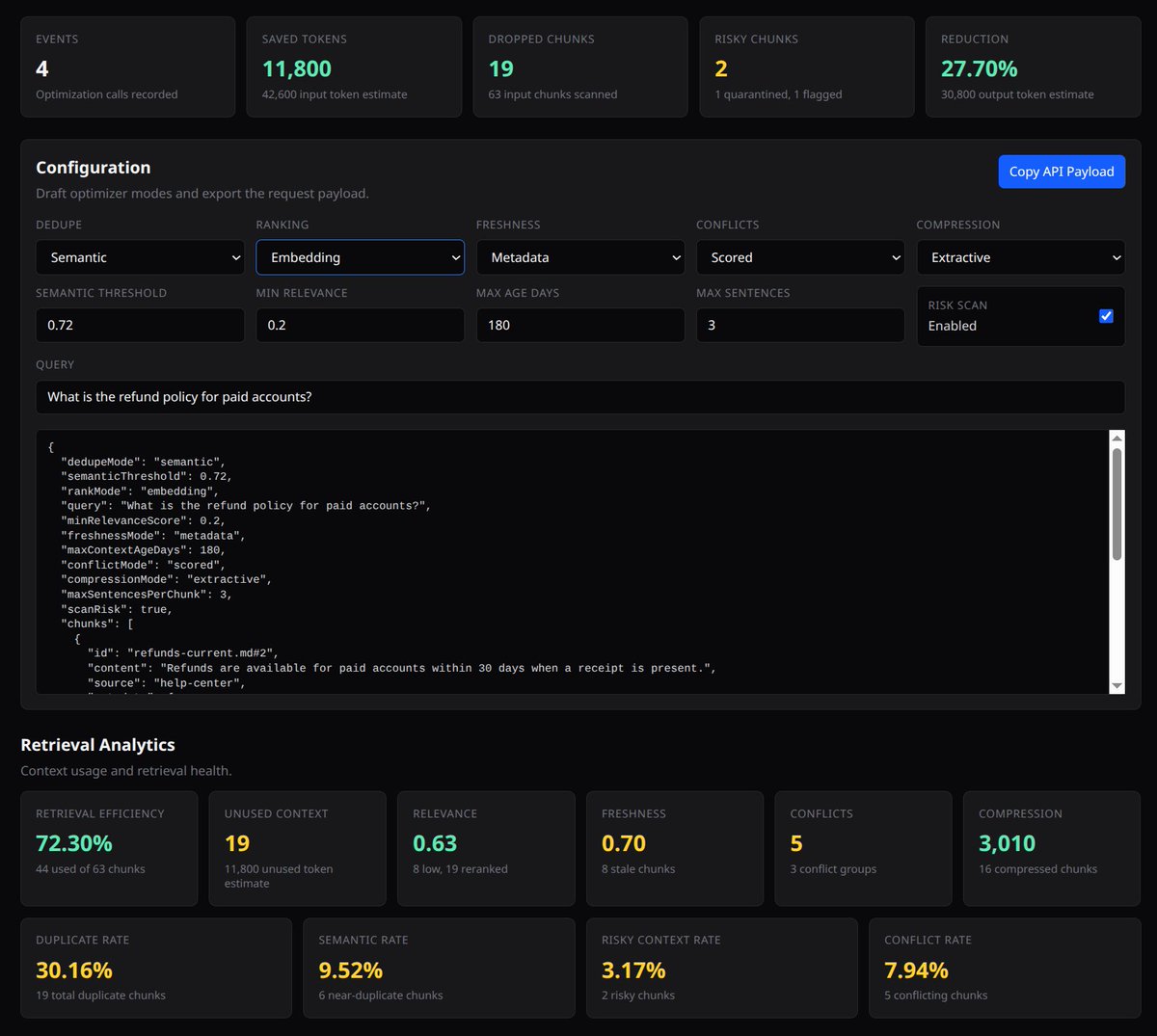

@aarivCodes Finished LLM firewall and working on context optimization for Provara.

provara.xyz

Know when a provider silently ships a regression. Cut model spend at equal quality — automatically. Answer "why did our bill double?" in one screen, not a grep. Built for teams shipping AI-powered products who've outgrown raw API access.

Implemented LLM firewall and currently implementing context optimization.

provara.xyz

Just starting to build the context optimizer for provara.xyz! Will be sharing progress in this thread. Targeting 3x token efficiency and 20-40% data size conservation.

@eng_khairallah1 If you're building agents, try out Eventloom!

syndicalt.github.io/eventloom

This Chinese developer ran Llama 70B locally on a MacBook during a flight and worked through client projects for a full 11 hours without internet.

He was sitting by the window on a transatlantic flight with a MacBook Pro M4 with 64 GB of memory. WiFi on board cost $25 for the flight. He declined.

No cloud API, no connection to Anthropic or OpenAI servers, no internet at all.

Just a local Llama 3.3 70B in bf16 and his own orchestrator script.

The model runs through llama.cpp. Generation speed: 71 tokens per second. Context: around 60,000 tokens. Memory usage: 48.6 GiB out of 64. Battery at takeoff: 3 hours 21 minutes.

And he gave the orchestrator this system prompt before takeoff:

"You are an offline orchestrator running on a single MacBook. There is no network. The only resources you have are local files in /Users/dev/work, the Llama 70B inference server at localhost:8080, and a battery budget of 3 hours 21 minutes. Process the queue at /Users/dev/work/queue.jsonl (one client task per line). For each task: draft → run local evals → save artefact to /Users/dev/work/done/. Save context checkpoints every 12 tasks so you can resume after a battery swap. Stop only on empty queue or when battery drops below 5%."

So the system knows exactly what resources it is running on.

It knows it has no connection to the outside world for the next 11 hours. It knows it has finite memory and a finite battery. It knows the human will not intervene until the plane lands.

The system runs in one loop: it takes a task from the queue, runs it through inference, saves the artifact, and writes a checkpoint. Task after task, just like that.

And only when the battery drops below 5% does the orchestrator automatically pause, wait for the laptop to switch to the backup power bank, and continue from the last checkpoint.
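The loop described above can be sketched roughly as follows. This is a minimal illustration, not the developer's actual script: `run_queue`, `save_checkpoint`, the checkpoint file format, and the artefact file names are all hypothetical, and the inference call and battery reading are passed in as plain functions. The checkpoint here only records the queue position, which is a simpler stand-in for the context checkpoints that appear in the log, and the sketch returns on low battery and resumes on the next call instead of waiting in place.

```python
import json
from pathlib import Path
from typing import Callable, Iterator

CHECKPOINT_EVERY = 12       # "every 12 tasks", from the quoted system prompt
MIN_BATTERY_PERCENT = 5.0   # "below 5%", from the quoted system prompt


def read_queue(path: Path) -> Iterator[dict]:
    """Yield one task per non-empty line of a JSONL queue file."""
    with path.open(encoding="utf-8") as fh:
        for line in fh:
            if line.strip():
                yield json.loads(line)


def save_checkpoint(path: Path, next_index: int) -> None:
    """Record the index of the next unprocessed task (hypothetical format)."""
    path.write_text(json.dumps({"next_index": next_index}), encoding="utf-8")


def run_queue(
    queue_path: Path,
    done_dir: Path,
    checkpoint_path: Path,
    run_task: Callable[[dict], str],        # assumed wrapper around local inference
    battery_percent: Callable[[], float],   # assumed battery reader
) -> int:
    """Process tasks until the queue is empty or the battery runs low.

    Returns the index of the next unprocessed task.
    """
    done_dir.mkdir(parents=True, exist_ok=True)

    # Resume from the last saved position, if there is one.
    next_index = 0
    if checkpoint_path.exists():
        saved = json.loads(checkpoint_path.read_text(encoding="utf-8"))
        next_index = saved["next_index"]

    for index, task in enumerate(read_queue(queue_path)):
        if index < next_index:
            continue  # already finished in an earlier run
        if battery_percent() < MIN_BATTERY_PERCENT:
            break  # stop here; a later call picks up from the checkpoint
        artefact = run_task(task)
        out_file = done_dir / f"task_{index:05d}.md"
        out_file.write_text(artefact, encoding="utf-8")
        next_index = index + 1
        if next_index % CHECKPOINT_EVERY == 0:
            save_checkpoint(checkpoint_path, next_index)

    # Always record where we stopped so a resume does not redo work.
    save_checkpoint(checkpoint_path, next_index)
    return next_index
```

Calling `run_queue` again after a battery swap skips everything before the saved index, so a task that already produced an artefact is not run twice.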

Here is what the system actually writes in his log during the flight:

"saved context checkpoint 8 of 12 (pos_min = 488, pos_max = 50118, size = 62.813 MiB)"

"restored context checkpoint (pos_min = 488, pos_max = 50118)"

"prompt processing progress: n_tokens = 50 / 60 818"

"task 37016 done | tps = 71 s tokens text → /Users/dev/work/done/proposal_westside.md"

Outside the window, clouds, blue sky, and no WiFi. On the tray, 1 MacBook, an open terminal on 2 screens, and an inference server on localhost.

From what I have observed, this is the cleanest offline AI workflow I have seen in the past year: 11 hours of flight, $0 for WiFi, and the entire client queue closed before landing.

Khairallah AL-Awady @eng_khairallah1

It is genuinely hard, especially for someone who is not a "people person".

Ricky @rcmisk

building is the easy part. I said what I said. distribution is where most indie hackers quietly give up, they just call it "moving on to the next idea" instead.

@Its_Nova1012 DOS, then OS/2 Warp, then Windows 3.1-Windows 10, now Pop+KDE Plasma

Fewer entrepreneurs *and* fewer employees.

Fewer humans in general.

SE Hozaifa @SeHozaifa

Will AI create more entrepreneurs or more employees? Why?

@anupamrjp Adaptive LLM routing. Optimize your spend and quality: provara.xyz

Seems pretty easy. Just get a computer fan and hook it up to the tube with a lightweight cover on the end. Hook the fan up to a switch with an Arduino controller connected to a CO2 monitoring probe.

Simple script: On/off for the fan.

...or don't worry about indoor CO2.
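The on/off script could look roughly like this. It is only a sketch of the control logic, written in Python so it can be tried on a desktop before porting it to a microcontroller. The two thresholds are assumed values, not recommendations, and the gap between them is an added assumption so the fan does not flip on and off around a single cutoff. Reading the probe and driving the switch are left out because they depend on the hardware.

```python
from typing import Iterable, List

# Hypothetical thresholds in ppm; tune them for your own room and sensor.
FAN_ON_PPM = 1000.0
FAN_OFF_PPM = 800.0


def next_fan_state(co2_ppm: float, fan_is_on: bool) -> bool:
    """Decide whether the fan should run, given the latest CO2 reading."""
    if co2_ppm >= FAN_ON_PPM:
        return True        # air is stale: switch on
    if co2_ppm <= FAN_OFF_PPM:
        return False       # air is fresh again: switch off
    return fan_is_on       # in between: keep the current state


def simulate(readings: Iterable[float], fan_is_on: bool = False) -> List[bool]:
    """Replay a series of readings and return the fan state after each one."""
    states = []
    for ppm in readings:
        fan_is_on = next_fan_state(ppm, fan_is_on)
        states.append(fan_is_on)
    return states
```

On real hardware, `next_fan_state` would be called once per sensor reading and its result written to the pin that drives the fan switch.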

@levelsio @levelsio

I still haven't solved the CO2 bedroom challenge. You open the window and you wake up from a 6am garbage truck or barking dogs and sunlight. You close it, you suffocate in 1200 ppm at 5am. I guess you really need some mini tube in your wall with a vent that opens and closes based on internal CO2, but how do I build that?

This is the most stars any of my projects have ever gotten on @github! Thanks to everyone who has found Eventloom and Pathlight useful!

github.com/syndicalt

@Sherifdeenolat2 Adaptive LLM Routing: provara.xyz

Agent visibility, with ComfyUI plugin: syndicalt.github.io/pathlight/

Agent traceability, with Pathlight and HALO integration: syndicalt.github.io/eventloom

@elgermerlo Adaptive LLM Routing: provara.xyz

Agent visibility, with ComfyUI plugin: syndicalt.github.io/pathlight/

Agent traceability, with Pathlight and HALO integration: syndicalt.github.io/eventloom
