Xianghao Kong

64 posts

@xk_theo7

Video Gen AI Researcher📍Bay Area | PhDone @UCR_CSE | interpretability, alignment, compositionality of diffusion models | EX - @AdobeFirefly, @SonyAI_global

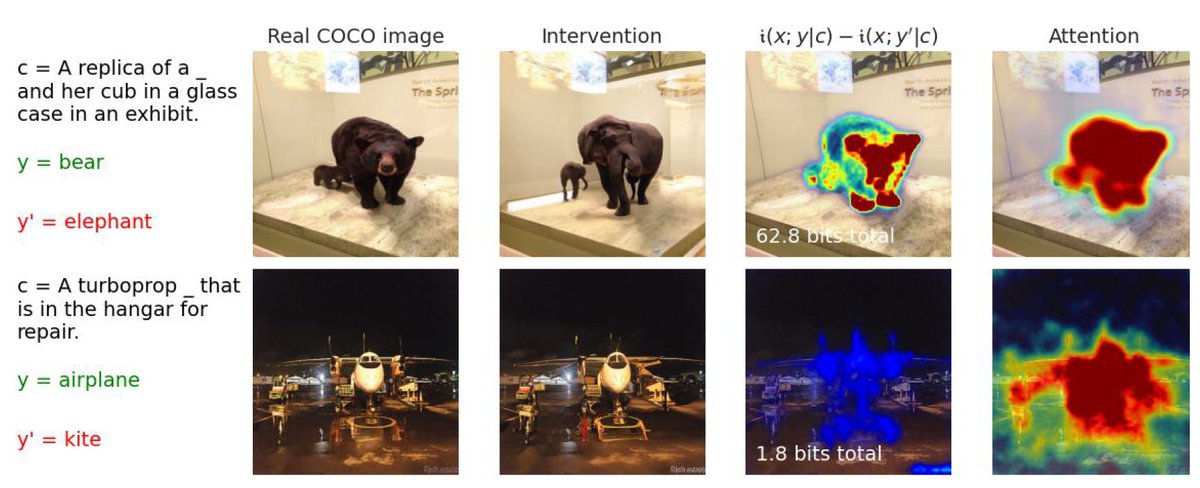

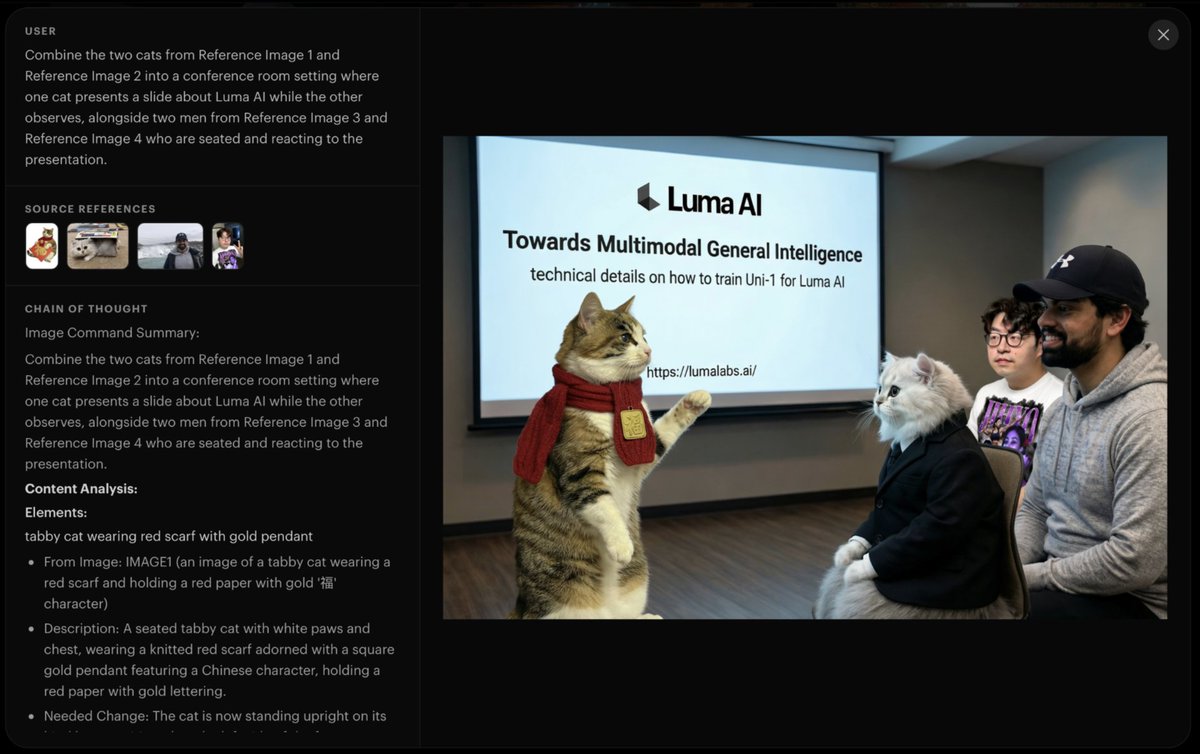

Introducing Uni-1, Luma’s first unified understanding and generation model, our next step on the path towards unified general intelligence. lumalabs.ai/uni-1

Introducing Image to Video for Gen-4.5, the world's best video model. Built for longer stories. Precise camera control. Coherent narratives. And characters that stay consistent. Gen-4.5 Image to Video is available now for all paid plans.

It's official! From 29 March 2026 you'll be able to discover World of Frozen and lots of other experiences at Disney Adventure World! 🤩

bros, DiT is wrong. it's mathematically wrong. it's formally wrong. there is something wrong with it

if you want to learn about how we trained KREA Flux, we prepared a detailed blog in the link below: krea.ai/blog/flux-krea…

Very happy to be in Music City for #CVPR2025. My lab is presenting 7 papers, 4 selected as highlights. My amazing students @IrohXu @zixuan_huang @Wenqi_Jia @bryanislucky Xiang Li @fionakryan and postdoc Sangmin Lee are here! @siebelschool @uofigrainger

Following fully open-source philosophy, we’ve released the official training code, data code, and model ckpts for our micro-budget training of diffusion models from scratch (MicroDiTs). Now anyone can train a Stable Diffusion v1/v2-quality model from scratch in just 2.5 days using 8 H100 GPUs (<$2000 cost). Github: github.com/SonyResearch/m… Checkpoints: huggingface.co/VSehwag24/Micr… @SonyAI_global 1/3