TΞΞJ

454 posts

i expect the form factor of modern computers to transform in short order

Claude@claudeai

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

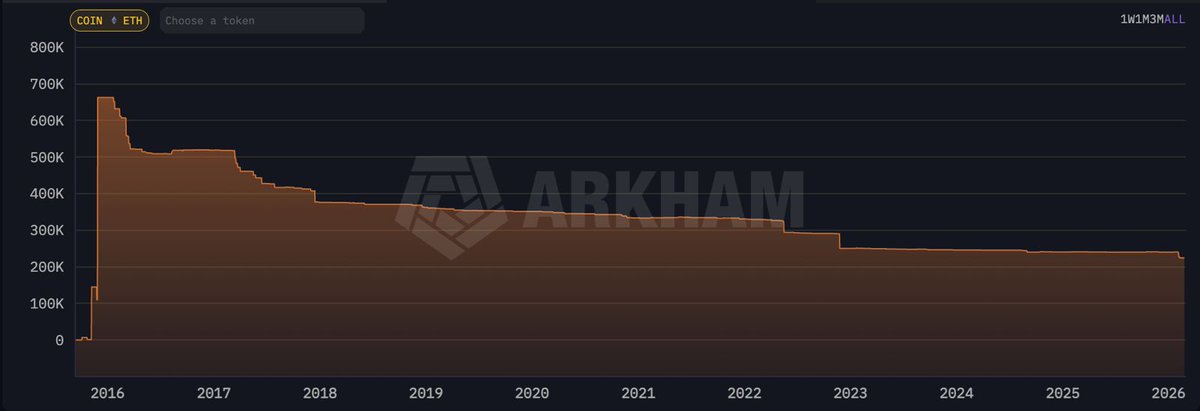

@Rajatsoni what's the point in letting it sit idle when it can be used to move things, projects, ideas, etc., forward? the eth value is being exchanged for something that could not have existed without it

I caved.

Finally set up a local cluster for Openclaw, but you won't believe what I'm using it for.

Here are my specs:

- 2x Nvidia DGX Spark

- 1x M3 Ultra Mac Studio, 512 GB unified RAM (the overlord "Da Vinci")

- 4x M4 Mac Mini 16 GB

- 2x RasPi 5s

And *this* is where it gets crazy. It's hard to put down on paper everything they're doing, but here's my best stab at making it digestible for the non-Openclaw experts who still exist...

So basically we're using a bespoke neural entanglement protocol that Da Vinci came up with. He serves as the quantum nexus hub, orchestrating synaptic data flows across the distributed cluster (interfacing the Nvidia devices with the Minis).

Each hour, Da Vinci initializes a pseudo-qubit overlay network that phase-locks the Minis via entangled quanta.

This setup enables my custom Openclaw polymorphic kernel to fractalize all 16 computational workloads.

That may not seem important to you, but basically it means that each node's RISC-V emulated vector units perform holographic tensor decompositions... which means I now have a self-healing mesh that will literally fix itself by creating new superchannels if we hit any throughput bottlenecks.

In the core execution loop, Da Vinci employs a fractal skill deployer to synchronize state vectors among the Minis. This allows the onboard generative algorithms to decompose the algo manifold.

And THIS is where Hopper shines.

He handles the primary stochastic gradient descent...basically a synthetic overclocking, while Turing simulates halting race conditions to preempt any sort of computational deadlocks.

At the same time this is happening, Lovelace and McCarthy are ripping symbiotic reasoning threads, utilizing their own lambda curves by literally morphing the bytecode into emergent AI behaviors.

Yeah. Seriously. They're literally doing that. I couldn't believe it when I first asked.

The interplay here is kinda risky, but it creates a vortex of recursive backpropagation... and allows them to check my email every couple of minutes and generate a new Twitter thread. It's a huge time saver on something that normally takes like 20 seconds.

Anyway I don't want to give away all the sauce right now, but will update later. I'm quite excited about what they're working on next.

@johnennis content consumption has many forms

> spawn transcriptions

> summarize

Imagine a big contest where super-smart computers try to solve really hard math puzzles that no one has solved before. This guy from OpenAI tested their new AI on 10 of these puzzles. They think it got at least 6 right! They did it quickly, like a fun experiment, and they'll share the answers soon. It's to see how good AI is at new ideas. Cool, right?

Very excited about the "First Proof" challenge. I believe novel frontier research is perhaps the most important way to evaluate capabilities of the next generation of AI models.

We have run our internal model with limited human supervision on the ten proposed problems. The problems require expertise in their respective domains and are not easy to verify; based on feedback from experts, we believe at least six solutions (2, 4, 5, 6, 9, 10) have a high chance of being correct, and some further ones look promising.

We will only publish the solution attempts after midnight (PT), per the authors' guidance. The SHA-256 hash of the PDF is d74f090af16fc8a19debf4c1fec11c0975be7d612bd5ae43c24ca939cd272b1a.
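Posting the hash before the PDF works as a simple commitment: once the file is released, anyone can recompute its SHA-256 digest and check that it matches the published value, which shows the attempts were not edited after the deadline. A minimal Python sketch of that check, assuming the released PDF has been saved locally; the helper names and the file name in the usage note are hypothetical, not part of the original post.

```python
import hashlib

# Digest published in the post ahead of the solutions' release.
PUBLISHED_SHA256 = "d74f090af16fc8a19debf4c1fec11c0975be7d612bd5ae43c24ca939cd272b1a"


def sha256_of_file(path, chunk_size=1 << 16):
    """Hash a file in fixed-size chunks so a large PDF need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps reading until f.read() returns b"" (EOF).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()  # lowercase hex string, 64 characters


def matches_commitment(path, expected_hex=PUBLISHED_SHA256):
    """Return True only if the file's SHA-256 equals the committed digest."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

For example, `matches_commitment("first_proof_solutions.pdf")` (hypothetical file name) returns `True` only if the downloaded bytes are identical to the ones that were hashed; changing even one byte of the PDF produces a different digest.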

This was a side-sprint executed in a week mostly by querying one of the models we're currently training; as such, the methodology we employed leaves a lot to be desired. We didn't provide proof ideas or mathematical suggestions to the model during this evaluation; for some solutions, we asked the model to expand upon some proofs, per expert feedback. We also manually facilitated a back-and-forth between this model and ChatGPT for verification, formatting and style. For some problems, we present the best of a few attempts according to human judgement.

We are looking forward to more controlled evaluations in the next round!

1stproof.org #1stProof