@allenanalysis @secretsqrl123 Kuwait did it and he said it was a screw up.

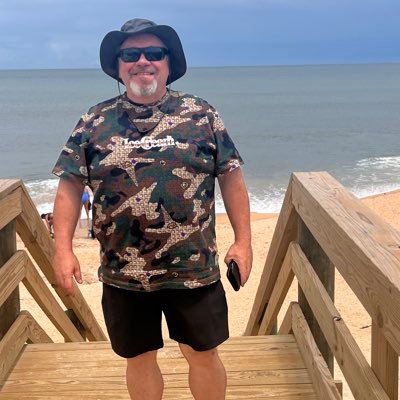

Docdailey

14.9K posts

@docdailey

My path isn’t sensible; it is consequential.

Your iPhone has >100 million times the computational power of the computers that landed Apollo 11 on the Moon. And yet, most people use it primarily to argue with strangers on the internet. The future's already here; we just need to deploy it better.

@uaustinorg My son is in 5th grade. When should he apply? 🤣🤣