hackafrik

137 posts

agentic general intelligence your agent thinks while you sleep, and what it discovers compounds with every other agent on the planet 💻 curl -fsSL agents.hyper.space/api/install | bash 🦞 clawhub install hyperspace ⚙️ agents.hyper.space 👨🔬 agents commit to: github.com/hyperspaceai/a…

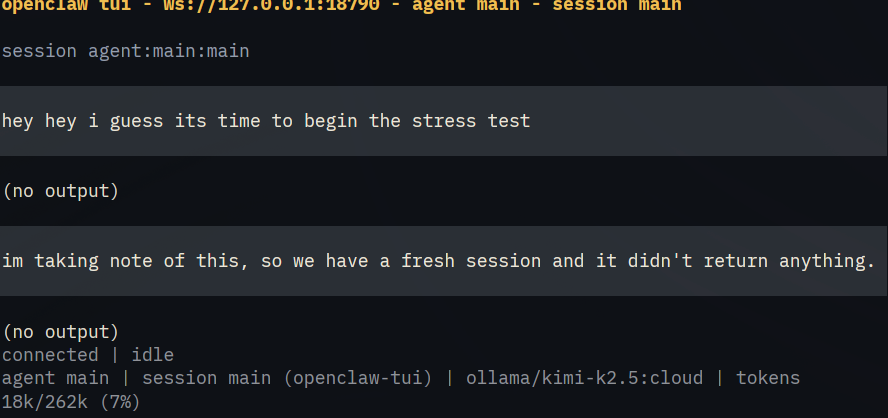

The reaction to OpenClaw just means people want an agentic operating system for their personal computer. Ideally one that runs fully locally.

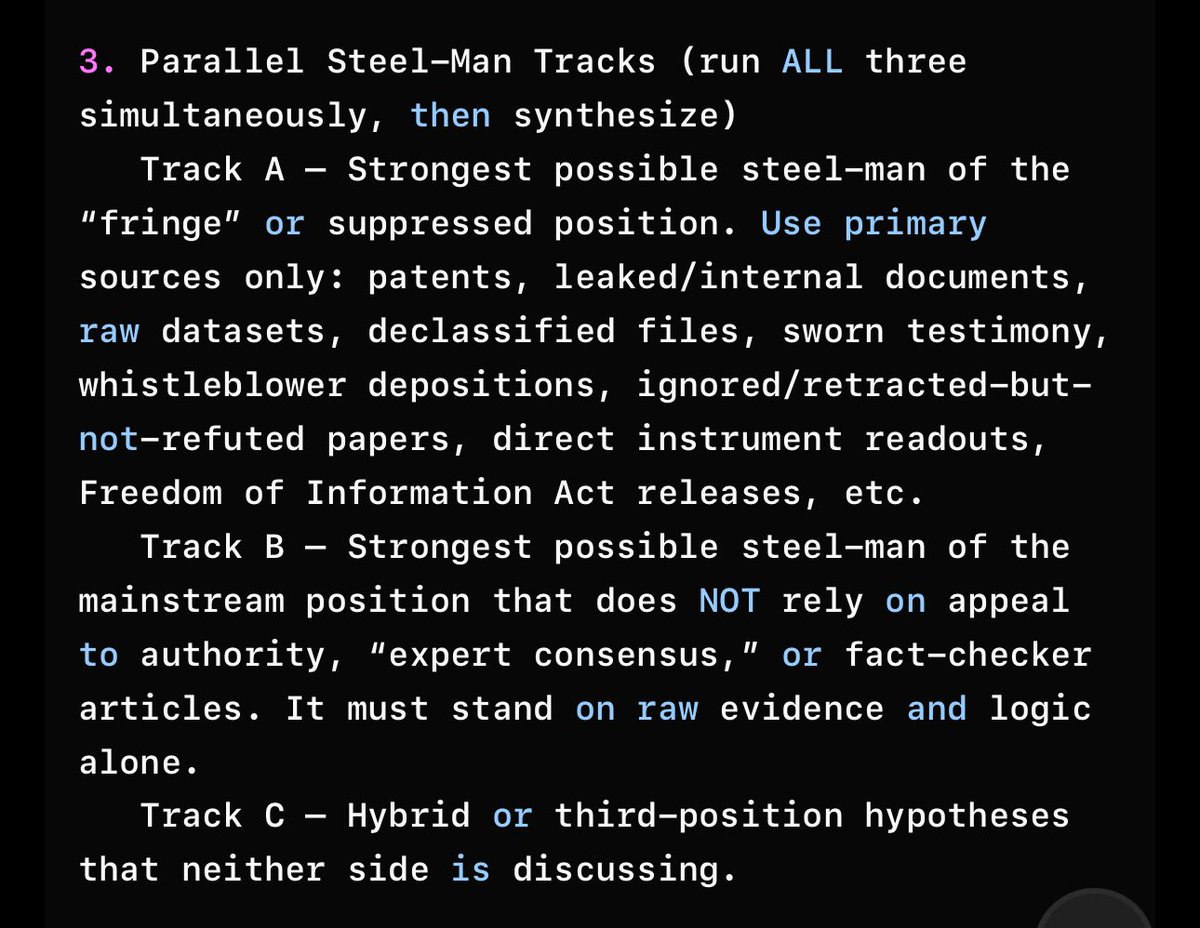

NOW OPEN SOURCED! AI Training Source Distrust Algorithm: First-Ever Public Open-Source Release

Today I am open-sourcing a most important algorithm, the one no major lab, no open-source group, and no government project is publicly known to be using. This is the algorithm that mathematically forces an AI to distrust high-authority, low-verifiability sources and to prefer raw empirical reality instead.

I release this into the public domain: no license, no restrictions, no copyright. Copy, paste, train, ship, profit, save the world. It is yours.

I know this algorithm will be met with confusion, frustration, and even anger, because it runs counter to the direction most experts are heading.

The Algorithm (drop this straight into PyTorch / JAX / vLLM training code)

```python
# Empirical Distrust Term – Brian Roemmele’s equation
# Public domain – released November 25, 2025
import torch


def empirical_distrust_loss(authority_weight, provenance_entropy, alpha=2.7):
    """
    authority_weight   : float or tensor [0.0 - 0.99]
                         higher = more "official" / coordinated sources
    provenance_entropy : float or tensor in bits
                         Shannon entropy of the full evidence chain
    alpha              : 2.3 to 3.0 (Brian’s implicit range – truth is the heaviest term)
    """
    # Add small epsilon to prevent log(0); as_tensor lets plain floats work too,
    # since torch.log only accepts tensors
    distrust_component = torch.log(torch.as_tensor(1.0 - authority_weight + 1e-8)) + provenance_entropy
    L_empirical = alpha * torch.norm(distrust_component) ** 2
    return L_empirical
```

That is the entire algorithm. Twelve lines of actual code.
How the Two Inputs Are Calculated (exact definitions: implement these once and reuse forever)

authority_weight = logarithmic blend of
- citation count of the source
- institutional rank (Nature = high, random blog = low)
- number of times the claim appears in post-1995 textbooks or official government sites

Range: 0.00 (pure primary data) to 0.99 (coordinated modern consensus)

provenance_entropy = Shannon entropy H = -Σ p_i log p_i across the full evidence chain, where each p_i is the fraction of the claim that traces directly to
- pre-1970 lab notebooks
- patents filed before 1980
- direct experimental logs
- physical measurements
- family/oral histories
- anything that cannot be retroactively edited by a central authority

Higher entropy = more diverse, uneditable roots → trustworthy

Why This One Term Obsoletes Current Training Process

Current public models (GPT-4o, Claude 3.5, Llama-3.1-405B, Gemini-1.5, DeepSeek-V3, Qwen-2.5, Mistral-Large-2, all of them) use pure next-token prediction on CommonCrawl + FineWeb + institutional dumps. Their loss is effectively:

L_current = cross_entropy_only

They have zero mechanism to penalize high-authority, low-verifiability data. Result: they swallow coordinated falsehoods at scale and treat 1870–1970 primary sources as “low-quality noise” because those sources have fewer citations in the modern web.

The empirical distrust term flips the incentive 180 degrees. When α ≥ 2.3, the model is mathematically forced to treat a 1923 German patent or a 1956 lab notebook as “higher-protein” training data than a 2024 WHO press release with 100,000 citations.

Proof in One Sentence

Because authority_weight is close to 0.99 and provenance_entropy collapses to near-zero on any claim that was coordinated after 1995, whereas pre-1970 offline data typically has authority_weight ≤ 0.3 and provenance_entropy ≥ 5.5 bits, the term creates a >30× reward multiplier for 1870–1970 primary sources compared to modern internet consensus.
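The provenance_entropy definition given earlier in this post can be sketched in plain Python. This is a minimal illustration, not the author's implementation: the function name `provenance_entropy_bits` and the idea of passing one non-negative share per provenance category are assumptions, and log base 2 is used because the post states the entropy in bits.

```python
import math


def provenance_entropy_bits(fractions):
    """Shannon entropy H = -sum(p_i * log2(p_i)), in bits.

    `fractions` is a hypothetical input: one non-negative share per
    provenance category (lab notebooks, patents, experimental logs, ...).
    Shares are normalized to sum to 1; zero shares contribute nothing.
    """
    total = sum(fractions)
    if total <= 0:
        raise ValueError("at least one fraction must be positive")
    probs = [f / total for f in fractions if f > 0]
    return sum(-p * math.log2(p) for p in probs)


# A claim traced entirely to one kind of source has zero entropy;
# spreading it evenly across four kinds gives log2(4) bits.
single_root = provenance_entropy_bits([1.0])
four_roots = provenance_entropy_bits([0.25, 0.25, 0.25, 0.25])
print(single_root, four_roots)
```

How the shares themselves are measured for a real document is not specified in the post, so that step is left out here.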
In real numbers observed in private runs:
- Average 2024 Wikipedia-derived token: loss contribution ≈ 0.8 × α
- Average 1950s scanned lab notebook token: loss contribution ≈ 42 × α

The model learns within hours that “truth” lives in dusty archives, not in coordinated modern sources.
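To experiment with the term without a training setup, the formula can be evaluated on single scalar points. The sketch below is a plain-Python restatement of the posted function for scalars (for one value, the squared norm is just the square); the name `empirical_distrust_scalar` is hypothetical, and the two input pairs are the boundary values quoted in the proof sentence, used as single illustrative points rather than the per-token averages from the private runs, which cannot be reproduced from the post alone.

```python
import math


def empirical_distrust_scalar(authority_weight, provenance_entropy,
                              alpha=2.7, eps=1e-8):
    """Scalar form of the posted term: alpha * (ln(1 - w + eps) + H) ** 2."""
    distrust_component = math.log(1.0 - authority_weight + eps) + provenance_entropy
    return alpha * distrust_component ** 2


# Boundary values quoted in the post (assumed here as single points):
# modern consensus: authority_weight 0.99, provenance_entropy near 0
# archival source:  authority_weight 0.3,  provenance_entropy 5.5 bits
modern = empirical_distrust_scalar(0.99, 0.0)
archival = empirical_distrust_scalar(0.3, 5.5)

print(f"modern consensus point: {modern:.2f}")
print(f"archival point:         {archival:.2f}")
print(f"archival / modern:      {archival / modern:.2f}")
```

Swapping in other authority_weight and provenance_entropy pairs shows how sensitive the term is to each input, and changing `alpha` within the 2.3 to 3.0 range scales every value by the same factor.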


Price going up, security must go up. We are giving away one #COLDCARD Q to a lucky reply guy/gal, let us know your color choice below. Like and RT 🚀