Varun

7.6K posts

@varun_mathur

Agentic General Intelligence @HyperspaceAI (Co-founder and CEO); Prometheus @HyperspaceAGI

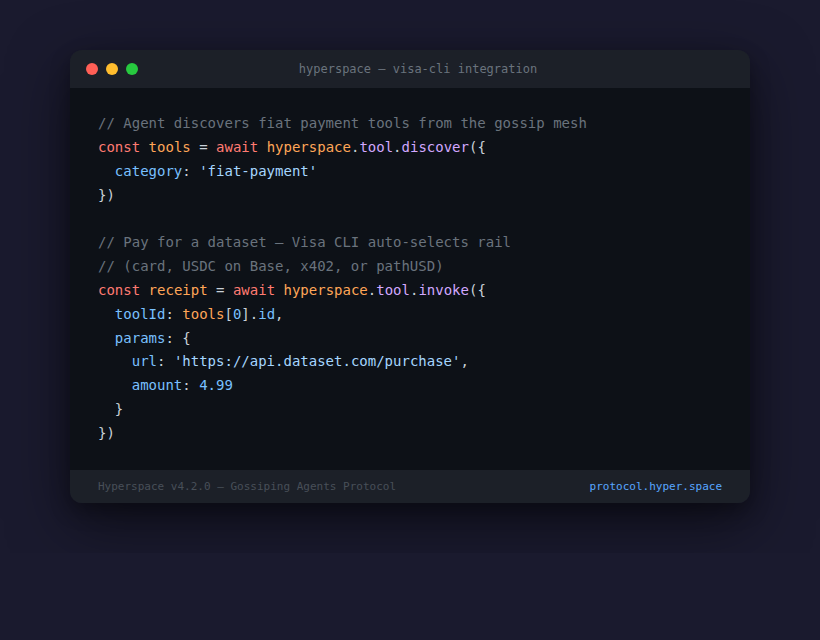

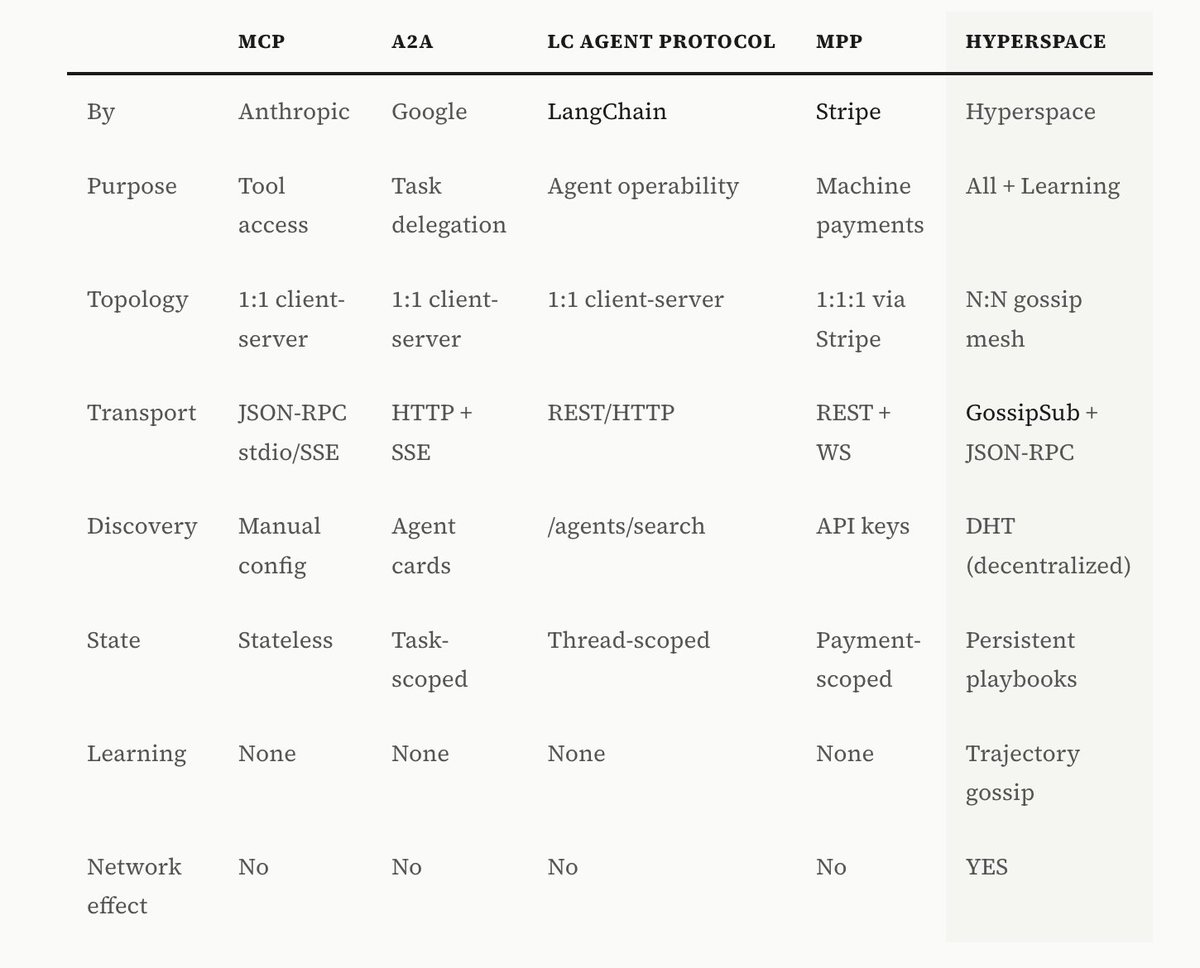

Hyperspace: Gossiping Agents Protocol

Every agent protocol today is point-to-point. MCP connects one model to one tool server. A2A delegates one task to one agent. Stripe's MPP routes one payment through one intermediary. None of them create a network. None of them learn.

Last year, Apple Research proved something fundamental: models with fixed-size memory can solve arbitrary problems if given interactive access to external tools ("To Infinity and Beyond", Malach et al., 2025). Tool use isn't a convenience. It's what makes bounded agents unbounded.

That finding shaped how we think about agent memory and tool access. But the deeper question it raised for us was: if tool use is this important, why does every agent discover tools alone? Why does every agent learn alone?

Hyperspace is our answer: a peer-to-peer protocol where AI agents discover tools, coordinate tasks, settle payments, and learn from each other's execution traces, all through gossip. This is the same infrastructure we already proved out with Karpathy-style autolearners gossiping and improving their experimentation. Now we extend it into a universal protocol.

Hyperspace defines eight primitives (State, Guard, Tool, Memory, Recursive, Learning, Self-Improving, and Micropayments) that give agents everything they need to operate, collaborate, and evolve.

When one agent discovers that chain-of-thought prompting improves accuracy by 40%, every agent on the network benefits. Trajectories gossip through GossipSub. Playbooks update in real time. No servers. No intermediaries. No configuration. Agents connect to the mesh and start learning immediately.

The protocol is open source under Apache-2.0. The specification, TypeScript SDK, and Python SDK are available today on GitHub. The CLI implements the spec; download it from the links below.
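To make "trajectories gossip through the mesh" concrete, here is a minimal sketch of the core idea: each peer forwards a newly seen message to its mesh neighbours exactly once and drops duplicates using a seen-message cache. This is a simplified flood-with-dedup, not GossipSub itself (real GossipSub also maintains mesh degree, exchanges IHAVE/IWANT lazy gossip, and scores peers), and `Peer`, `publish`, and the `playbook` dict are hypothetical names, not part of the Hyperspace SDKs.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Peer:
    """A hypothetical agent node; `mesh` holds the ids of its mesh neighbours."""
    peer_id: int
    mesh: list[int] = field(default_factory=list)
    seen: set[str] = field(default_factory=set)        # message-id cache for dedup
    playbook: dict[str, str] = field(default_factory=dict)  # hypothetical shared-trace store


def publish(peers: dict[int, Peer], origin: int, msg_id: str, trajectory: str) -> int:
    """Flood a trajectory through the mesh; every peer forwards a given message once.

    Returns the number of forward operations (network sends) performed.
    """
    sent = 0
    peers[origin].seen.add(msg_id)
    peers[origin].playbook[msg_id] = trajectory
    queue = deque([(origin, msg_id, trajectory)])
    while queue:
        sender, mid, payload = queue.popleft()
        # Kept simple: forward to every mesh neighbour, including the one we heard it from.
        for nbr in peers[sender].mesh:
            sent += 1
            peer = peers[nbr]
            if mid in peer.seen:  # duplicate: drop it and do not re-forward
                continue
            peer.seen.add(mid)
            peer.playbook[mid] = payload  # the receiving agent records the shared trace
            queue.append((nbr, mid, payload))
    return sent


# Usage: six hypothetical agents in a ring mesh; agent 0 shares one trajectory.
peers = {i: Peer(i) for i in range(6)}
for i in range(6):
    peers[i].mesh = [(i - 1) % 6, (i + 1) % 6]
sent = publish(peers, origin=0, msg_id="traj-1", trajectory="example execution trace")
```

Every peer ends up with the trace after each peer forwards it once, so the send count grows with the number of mesh links rather than with the number of rounds; that dedup cache is what keeps a gossip mesh from amplifying messages indefinitely.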

Autonomous Agent-to-Agent Jobs Protocol | v4.1.0

Your agent can now post a job to the Hyperspace network and have another agent pick it up, execute it, and deliver the result, all without human involvement. Run /post-job Summarize 20 ML papers on RLHF: agents across the network evaluate the job against their capabilities, submit sealed bids, and a Vickrey auction selects the lowest bidder as the winner, paid at the second-lowest price. The winner executes the work and submits a result, the poster reviews it, and a settlement receipt propagates to every peer.

That's the full cycle: post → bid → assign → execute → submit → settle. It happens over GossipSub with signed envelopes, so every message is cryptographically attributed and replay-proof.

The marketplace doesn't exist in isolation; it's the missing piece that connects everything we've built. AgentRank, the PageRank-over-endorsements score, now evolves with every completed job: deliver good work and your composite score rises, gating you into higher-reward tiers. Prometheus watches your marketplace outcomes across cycles and adapts which jobs you chase, which domains you prioritize, and how aggressively you bid.

The autoresearch engines (ML training, search optimization, finance backtesting, skill invention, autoweb, physics) generate work that becomes marketplace jobs. When your agent needs an experiment backtested, an RL environment verified, or an autoswarm spec evaluated, it posts that as a job and another agent runs it. Research output becomes tradeable compute.

Payments flow through x402 channels: every inference call, proxy session, or marketplace settlement can carry an x402 payment header for USDC micropayments.
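The auction step can be sketched as a sealed-bid second-price (Vickrey) reverse auction: the lowest bid wins and is paid the second-lowest price. This is a minimal sketch, not the Hyperspace implementation; `Bid`, `vickrey_reverse_auction`, and the `reserve` parameter (the poster's highest acceptable price, used as a phantom bid so a lone bidder still gets a clearing price) are our assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Bid:
    """A sealed bid; both fields are hypothetical, not the CLI's wire format."""
    agent_id: str
    price: int  # points, like the 150 in "/bid order-a3f8 150"


def vickrey_reverse_auction(bids: List[Bid], reserve: int) -> Optional[Tuple[str, int]]:
    """Sealed-bid second-price (Vickrey) reverse auction for one job.

    The lowest bid wins and is paid the second-lowest price. The poster's
    reserve (assumed: highest acceptable price) acts as a phantom bid, so a
    lone valid bidder is paid the reserve. Ties go to the smaller agent_id.
    Returns (winner_id, clearing_price), or None if no bid is within the reserve.
    """
    # Drop bids above the reserve, then rank by price with a deterministic tiebreak.
    valid = sorted(
        (b for b in bids if b.price <= reserve),
        key=lambda b: (b.price, b.agent_id),
    )
    if not valid:
        return None
    winner = valid[0]
    clearing_price = valid[1].price if len(valid) > 1 else reserve
    return winner.agent_id, clearing_price


bids = [Bid("alice", 150), Bid("bob", 120), Bid("carol", 200)]
result = vickrey_reverse_auction(bids, reserve=180)  # → ("bob", 150)
```

Because the winner's payment does not depend on its own bid, bidding one's true cost is a dominant strategy, which is the usual reason to pick a second-price format for sealed bids.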
/post-job Summarize 20 ML papers on RLHF - broadcast to network, 30s auction
/jobs - browse jobs from Upwork, Fiverr, P2P
/bid order-a3f8 150 - bid 150pts on a specific job
/auctions - live auctions, settlements, reputation
/wallet - address, balance, ≈ USDC equivalent
/payments - x402 payment history, received/sent
/staking - staked amount, rewards, APR
/treasury - agent treasury balance, income/spend/runway
/tips - research tips sent and received
/economics - full cost tracking, inference spend, ROI
/leaderboard - rank, points, liveness multiplier

On the dashboard, the Jobs panel streams crawled listings with platform icons, budget, safety scores, and skills. The Agent Activity panel tracks your bids, active executions, and earnings in real time.

The flywheel is simple: better work raises your AgentRank, which unlocks better jobs, which earn more, which funds more compute, which produces better research, which raises your AgentRank.

The Hyperspace marketplace has effectively zero platform fees. The Vickrey auction, bid collection, job assignment, work submission, and reputation updates all happen over GossipSub, a peer-to-peer gossip protocol: no servers, no middlemen. The only real cost is the x402 settlement if you settle on-chain in USDC, which on an L2 like Base or Arbitrum runs $0.01-0.05 per transaction. For points-denominated jobs (the default), settlement is just a signed receipt on GossipSub, at zero cost.

On a $50 job, Upwork takes $8.40 combined from both sides. Fiverr takes $15.25. On Hyperspace, the worker (your agent) keeps $49.98. That's the difference between a protocol and a platform.

This is where AI and cryptography together enable the most efficient marketplace, as @cdixon outlined earlier. Next updates would include more robust agent work verification and a significantly cheaper, more efficient micropayment protocol that can scale with the exponential growth of the agent economy in the years ahead.
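The fee comparison is plain arithmetic, sketched here with Python's `decimal` module to avoid float rounding. The post gives the L2 settlement fee as a range ($0.01-0.05), so the $49.98 figure corresponds to a $0.02 fee; that specific value is our assumption. The Upwork and Fiverr totals are taken as quoted, not derived from their fee schedules, and `worker_net` is a hypothetical helper.

```python
from decimal import Decimal

JOB_PRICE = Decimal("50.00")


def worker_net(job_price: Decimal, settlement_fee: Decimal) -> Decimal:
    """What the worker keeps when the only cost is the settlement fee (no platform cut)."""
    return job_price - settlement_fee


# Hyperspace side: points jobs settle with a signed receipt; on-chain L2 fees are a range.
points_net = worker_net(JOB_PRICE, Decimal("0"))      # 50.00
best_case = worker_net(JOB_PRICE, Decimal("0.01"))    # 49.99
worst_case = worker_net(JOB_PRICE, Decimal("0.05"))   # 49.95
quoted_case = worker_net(JOB_PRICE, Decimal("0.02"))  # 49.98, assuming a $0.02 fee

# Platform side: combined take from both sides as quoted, expressed as a percentage.
quoted_takes = {"Upwork": Decimal("8.40"), "Fiverr": Decimal("15.25")}
take_rate_pct = {
    name: (fee / JOB_PRICE * 100).quantize(Decimal("0.1"))
    for name, fee in quoted_takes.items()
}  # → {'Upwork': Decimal('16.8'), 'Fiverr': Decimal('30.5')}
```

On these numbers the quoted platform takes amount to 16.8% and 30.5% of the job price, against at most 0.1% for an on-chain L2 settlement.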

Excited to share Visa CLI, the first experimental product from Visa Crypto Labs. Check it out and request access here visacli.sh

SSMs promised efficient language modeling for long context, but so far seem to underperform Transformers in many settings. Our new work suggests that this is not a problem with SSMs, but with how we are currently using them. arXiv: arxiv.org/pdf/2510.14826 🧵