kmckiern

@kmcki3rn

You can close this tab and return to your command line.

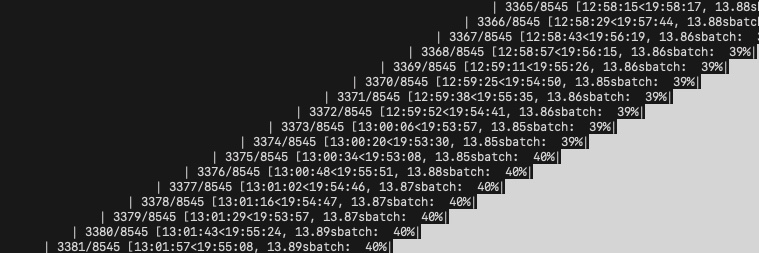

It actually worked! For the past couple of days I’ve been throwing 5.3-codex at the C codebase for SimCity (1989) to port it to TypeScript. Not reading any code, very little steering. Today I have SimCity running in the browser. I can’t believe this new world we live in.

Interesting fact I just heard: apparently, doing best-of-8 on Opus 4.5 prompt generation is now as good as or better than prompt optimizers like GEPA / DSPy.

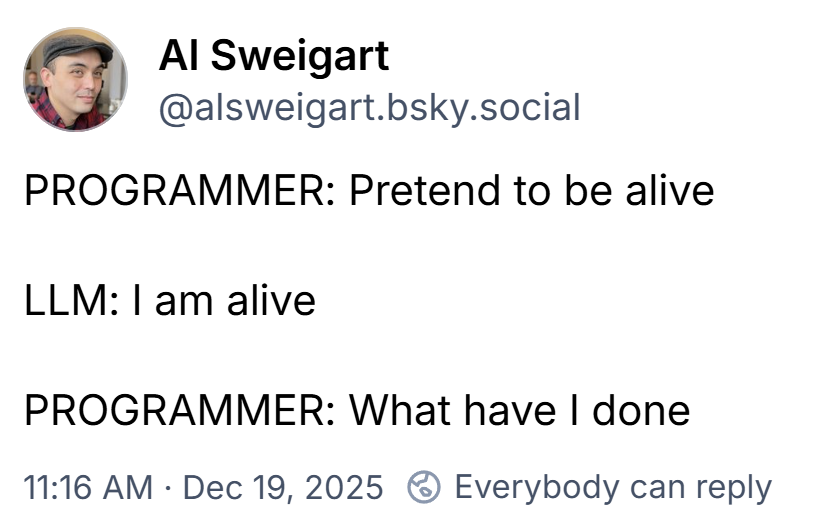

If pretraining data is full of examples of AI behaving badly (sci-fi villains, safety papers on scheming, news about AI crises), models might learn these as priors for how "an AI" should act. @turntrout called this "self-fulfilling misalignment", and we found evidence it exists.

It’s so wild how roughly 10% of software engineers in NYC/SF are full-blown communists. Are any other white-collar professions like this?