Max Wolter

315 posts

@maxintechnology

Your AI should manage its own mind. Memory that grows, context that heals, knowledge that corrects—and the wisdom to know what it doesn't know. Building Optakt.

Grok 4.3 can create Slides

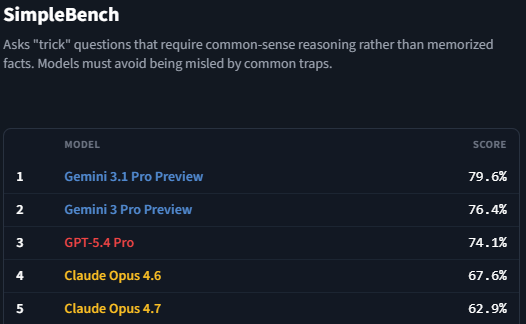

Opus 4.7 performs better. That's the problem. Anthropic just shipped a model that follows instructions more precisely, handles long tasks with more rigor, and verifies its own output before responding. 🧵

I'll give Anthropic credit for moving quickly. Opus 4.7 Adaptive Thinking now triggers thinking much more often, including for the tasks it failed at yesterday. That also means it is doing a lot more web search. So far, a large improvement in output quality on non-coding tasks.

Introducing Claude Opus 4.7, our most capable Opus model yet. It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back. You can hand off your hardest work with less supervision.