OverHash

Related, but I am excited for GitHub to have an early preview of a native Stacked PR flow.

Until then, I've been following davepacheco.net/blog/2025/stac… which appears to have been a pretty good approach to help coworkers review my code.

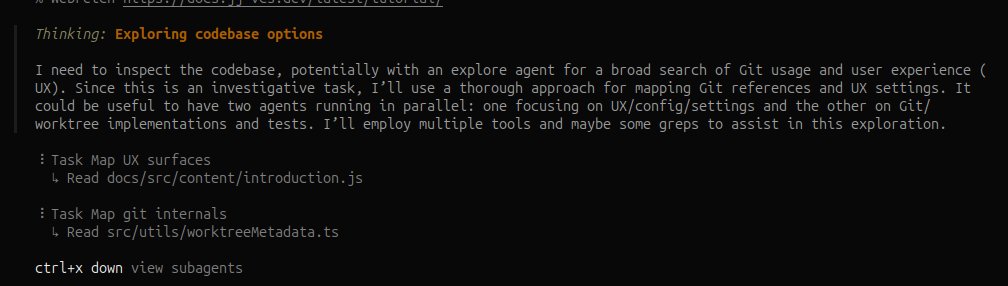

Trying out new code review tools lately! On my list is using `jj`, from ben.gesoff.uk/posts/reviewin…. I'm particularly interested in comparing to a native Git approach of walking commit-by-commit and taking notes, then using an LLM to post the notes correctly on GitHub.
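A rough sketch of what the native Git side of that comparison could look like: list the commits on a branch oldest-first, then generate a notes skeleton with one section per commit. The helper names (`commits_between`, `review_template`) and the notes layout are made up for illustration; only `git rev-list --reverse` is a real Git command here.

```python
import subprocess


def commits_between(base: str, head: str = "HEAD", repo: str = ".") -> list[str]:
    """Return the commit hashes in base..head, oldest first."""
    out = subprocess.run(
        ["git", "-C", repo, "rev-list", "--reverse", f"{base}..{head}"],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    return out.split()


def review_template(commits: list[str]) -> str:
    """Build a Markdown notes skeleton with one section per commit."""
    # Hypothetical layout: a heading with the short hash, then an empty notes bullet.
    sections = [f"## {sha[:12]}\n\n- notes:\n" for sha in commits]
    return "\n".join(sections)


if __name__ == "__main__":
    # Assumes the branch under review forked from "main".
    print(review_template(commits_between("main")))
```

Each section can then be filled in while reading `git show <hash>` for that commit, and the finished file handed to whatever tool posts the comments.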

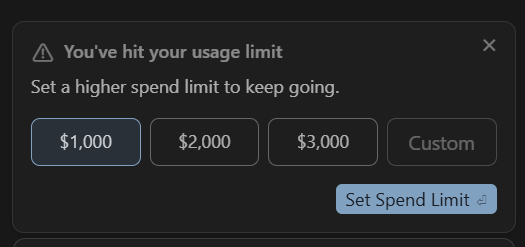

@quant_arb the context management in Cursor is quite bad. Very wasteful of tokens. Price-wise, even if it were better, it cannot compete with a coding subscription from a big provider, even with the 2.5x credit multiplier that Cursor gives you on their $20 or other plans.

@devdotsasha Fun fact: it's faster to open the "Files changed" tab in a new tab than to open it in the current tab.

Absolutely banal that this is the case, but it's true.

yoyo-code.com/why-is-github-…

@howmanysmaII @MaximumADHD Check out nezuo.github.io/delta-compress as well, it's a great little library.

@MaximumADHD that deltatable is exactly something i need wow

Been working on a set of wally packages:

github.com/MaximumADHD/Ro…

@sleitnick Have you seen github.com/sircfenner/Aut…? Based on your video, I think this plugin has feature parity.

Working on a v2 of the Require Autocomplete studio plugin! This time, it works like what you'd find in other IDEs, where you just type the name of the module, and it'll do the rest. #RobloxDev

@CharlieMQV What's the file explorer program used in here? It looks a lot nicer than the default!

Existing search tools on Windows suck. Even with an SSD, it’s painfully slow.

So I built a prototype of Nowgrep.

It bypasses most of the slow Windows nonsense, and just parses the raw NTFS.

On an SSD, this ends up faster than ripgrep, even on a cached run (Nowgrep bypasses most software caching).

Demo: Filtering 2 million and searching ~270K files under C:/ for the substring "Hello".

I have many ideas to make this an even smoother experience. Let me know if this is interesting, and I might pursue it further to make a shippable product with good UX.

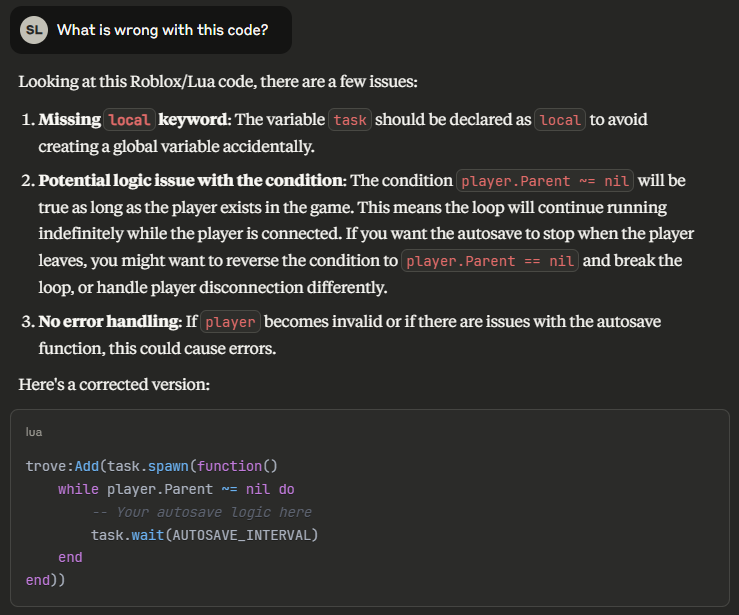

@sleitnick I'm really not sure how you got that response! I tried myself and got a vastly superior output -- one that is actually correct!

Anthropic's OCR is not the best in town, but it still surprises me you got that output. On any paid plan/service I'm sure the output would be better.

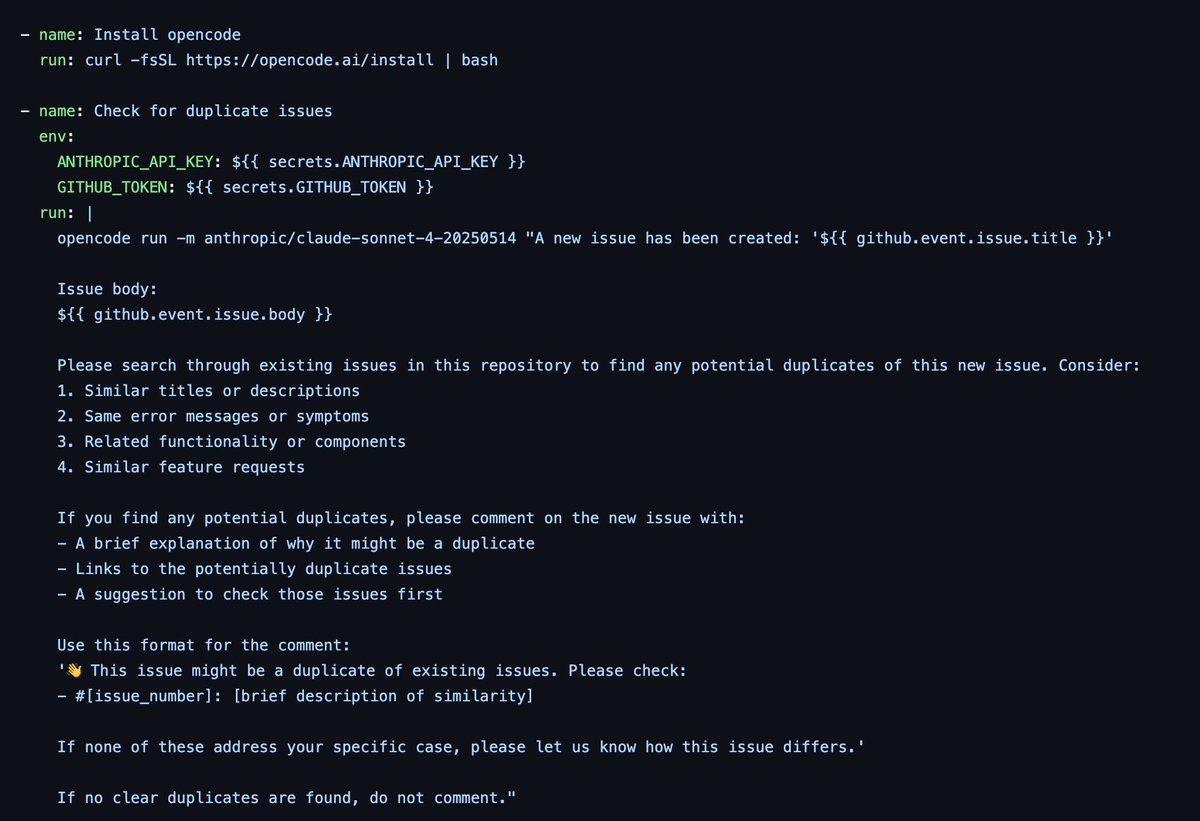

@thdxr The Claude Code repository experimented with this for a while before they removed it. They still run their action for tagging issues.

Looks like they have added it back now though! They do one pass to scan for dupes, and then close the issue if the author does not respond.

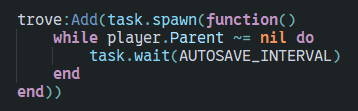

@SigmaTechRBLX Parallel Luau on the server is really hard to achieve effectively.

On the client, it's often more trouble than it's worth.

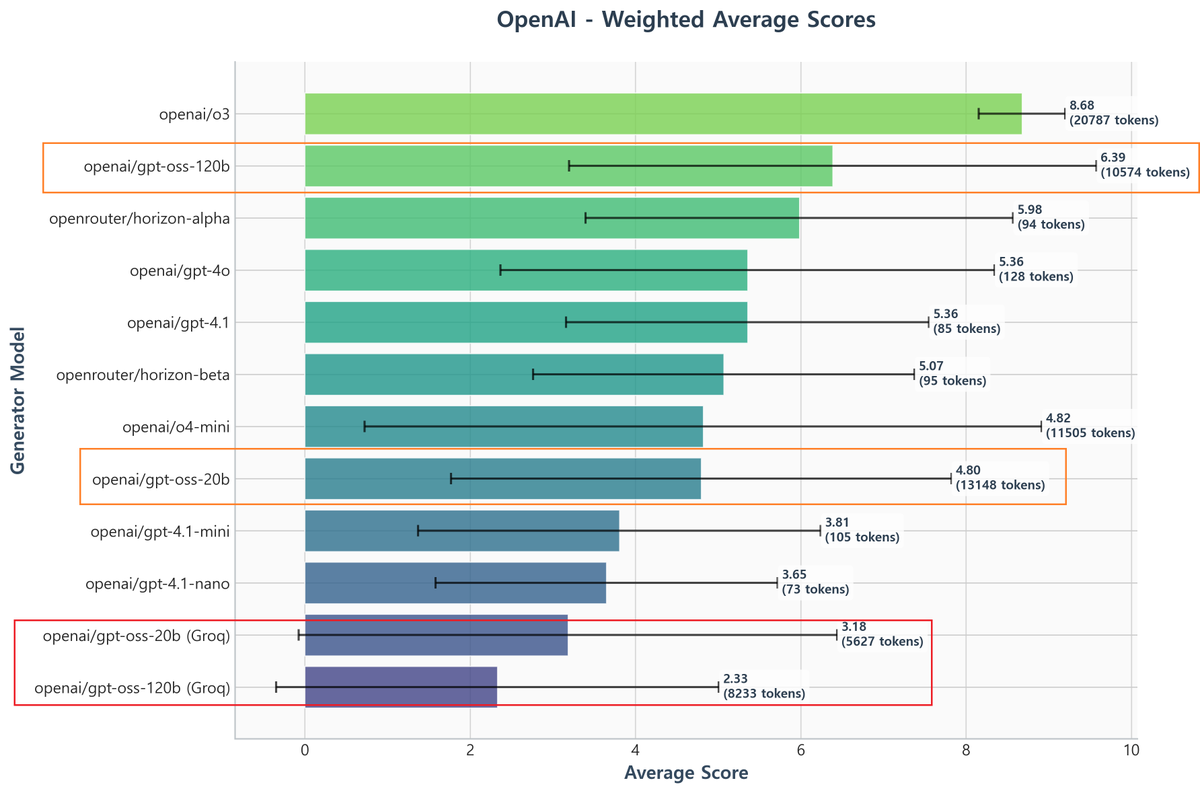

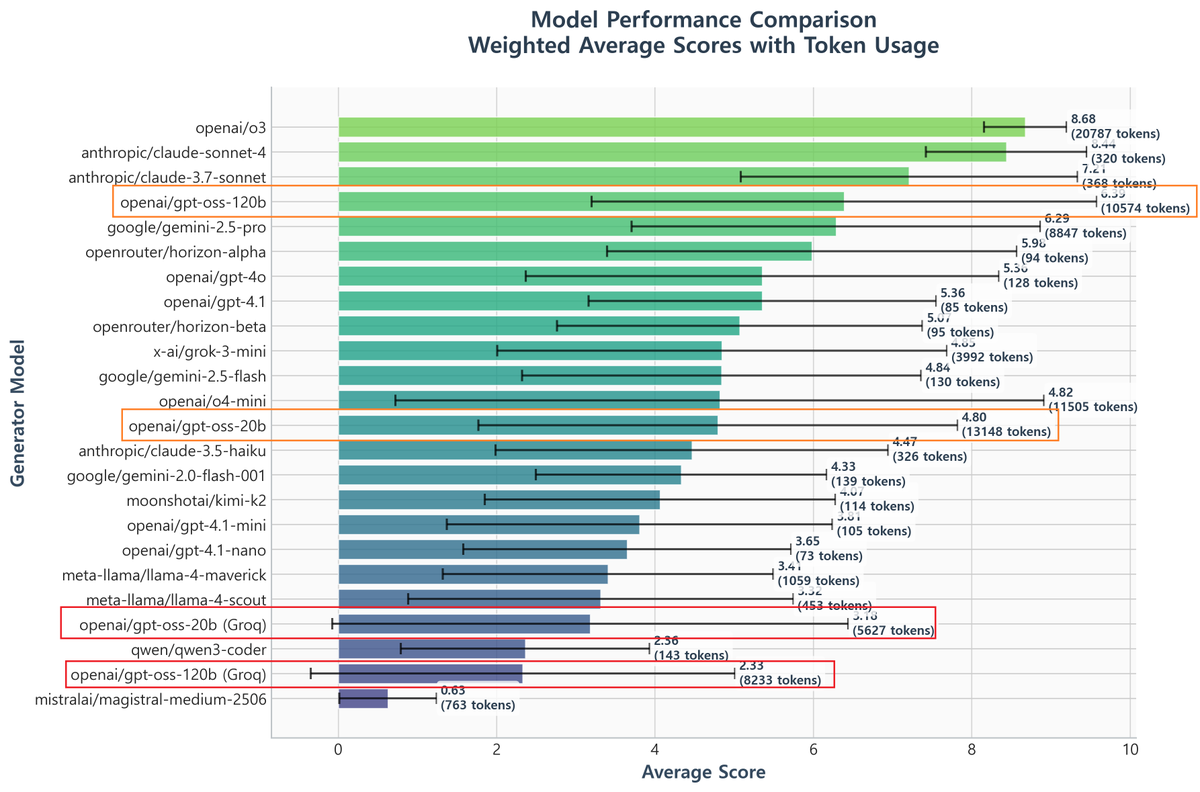

It seems my concerns were valid.

This is the result of re-running the tests after changing the provider setting from the default (which automatically routed to Groq) to Fireworks.

To emphasize again, the only thing I changed was explicitly fixing the provider in the code. All other prompts and settings remained exactly the same.

If you're using gpt-oss models on OpenRouter and noticing abnormally low performance, I suggest trying a different provider.

*As mentioned in previous posts, considering that the model sometimes gives up on answering, I believe the 120b model's score is slightly lower than o4-mini's.

Currently, if the model decides the problem is too difficult and refuses to answer, it's almost treated as a 0-point response. I'm still figuring out how best to handle this.
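One possible way to make this visible, sketched under my own assumptions and not the scoring actually used here: keep the "refusal counts as 0" score, but report the refusal rate and the score over answered problems alongside it. The `Result` type and `summarize` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Result:
    score: float   # points earned on this problem, 0.0 to 1.0
    refused: bool  # True if the model gave up instead of answering


def summarize(results: list[Result]) -> dict:
    """Report the overall score next to refusal-aware breakdowns."""
    if not results:
        raise ValueError("no results to summarize")
    n = len(results)
    answered = [r for r in results if not r.refused]
    return {
        # Refusals count as zero points, as in the current setup.
        "overall": sum(0.0 if r.refused else r.score for r in results) / n,
        # Mean score over the problems the model actually attempted.
        "answered_only": (
            sum(r.score for r in answered) / len(answered) if answered else None
        ),
        "refusal_rate": (n - len(answered)) / n,
    }
```

Reading the three numbers together shows whether a low overall score comes from wrong answers or from giving up.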

NomoreID @Hangsiin

I plan to rerun the tests using a different provider to examine the effects of points 2 and 3.
