evil malloc

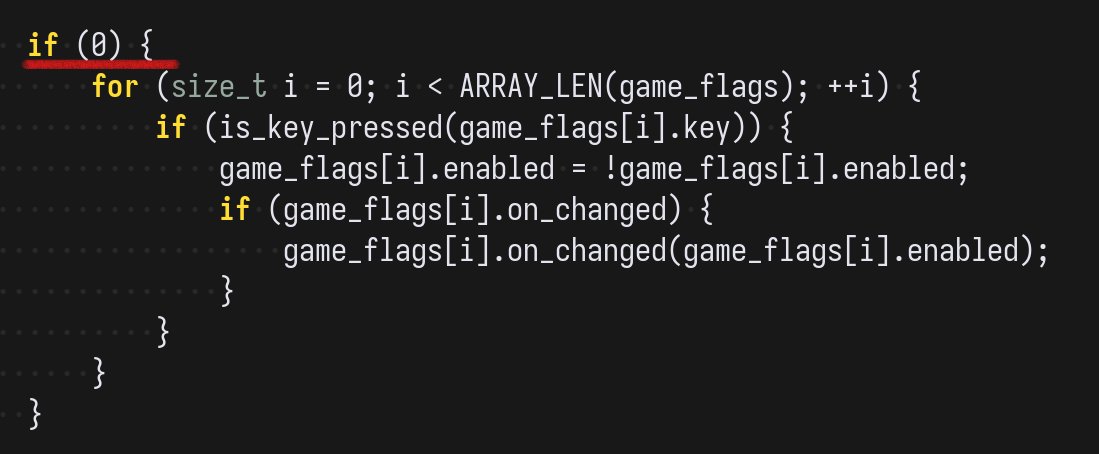

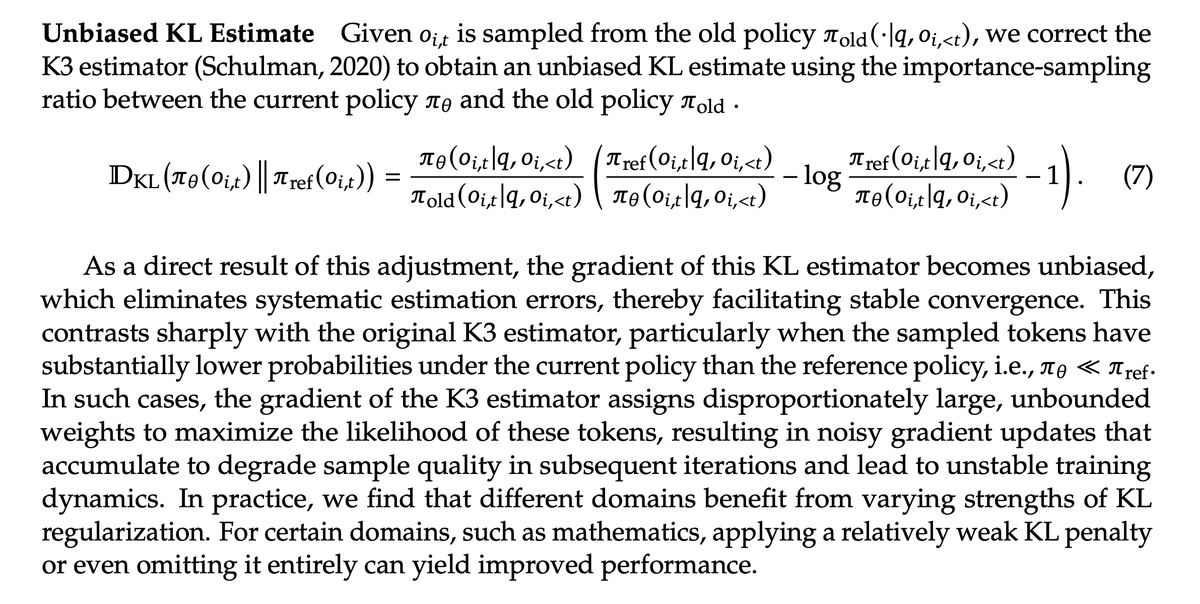

What is tinygrad?

tinygrad is a formalist project. It attempts to capture the full gamut of software 2.0 in a non-leaky abstraction.

The methods on the Tensor class create a directed graph of immutable RISC UOps defining what the computation is. Tensor is one frontend; we also have an ONNX frontend and a PyTorch frontend. Whether you code in tinygrad, torch, or import ONNX models, it all boils down to the same very simple UOp graph, which you can see with VIZ=1. This graph contains nothing like matmul or conv; it's just movement ops, elementwise ops, and reduction ops. Seriously, try VIZ=1.

Below that, there's a scheduler which breaks that graph up into kernels. Then we do more graph transforms on each kernel subgraph until we have code which can run on an accelerator. See the kernels with DEBUG=2.

Then we have runtimes capable of running that code. For AMD, our runtime goes all the way to the physical hardware; we are mmapping the PCIe bus and peeking and poking it. It's all in Python, but it is fast because once you have the graph compiled, you are running the same graph over and over, just ringing a doorbell.

The hope is that, similar to Linux and LLVM, we will prevent a major source of rent seeking in our AI future. By clearly and simply specifying the job, and being able to precisely spec what is bought and sold, you can have a fair marketplace for compute.

By the end of the year, we should be similar in speed on NVIDIA to the existing torch CUDA backend, except without CUDA. We will also have a test cloud up where you can run jobs from any of the three frontends.

You don't want to rent a GPU per hour on a machine; you want to rent a couple of FLOPS in a lambda function. That's what the OpenAI API is. Now offer it decoupled from the specific model.
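To make the "nothing like matmul" claim concrete, here is a toy sketch in plain Python. It is not tinygrad code and does not use tinygrad's API: `Lazy`, `permute`, `insert_axis`, `expand`, `mul`, `sum_last` and `realize` are hypothetical names made up for this illustration. It only shows how a matmul can be written as movement ops (which just remap indices), one elementwise multiply, and one reduction, with nothing computed until the result is realized.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Index = Tuple[int, ...]

@dataclass(frozen=True)
class Lazy:
    """Toy immutable lazy tensor: a shape plus a function from index to value."""
    shape: Tuple[int, ...]
    at: Callable[[Index], float]

def from_rows(rows):
    """Wrap a nested list (2D) as a lazy tensor."""
    return Lazy((len(rows), len(rows[0])), lambda idx: rows[idx[0]][idx[1]])

# --- movement ops: no arithmetic, only index remapping ---
def permute(t, order):
    shape = tuple(t.shape[i] for i in order)
    def at(idx):
        src = [0] * len(order)
        for new_pos, old_pos in enumerate(order):
            src[old_pos] = idx[new_pos]
        return t.at(tuple(src))
    return Lazy(shape, at)

def insert_axis(t, axis):
    """A reshape that adds a size-1 dimension at position `axis`."""
    shape = t.shape[:axis] + (1,) + t.shape[axis:]
    return Lazy(shape, lambda idx: t.at(idx[:axis] + idx[axis + 1:]))

def expand(t, shape):
    """Broadcast size-1 dimensions of `t` up to `shape`."""
    return Lazy(shape, lambda idx: t.at(
        tuple(0 if s == 1 else i for i, s in zip(idx, t.shape))))

# --- elementwise op ---
def mul(a, b):
    assert a.shape == b.shape
    return Lazy(a.shape, lambda idx: a.at(idx) * b.at(idx))

# --- reduction op ---
def sum_last(t):
    k = t.shape[-1]
    return Lazy(t.shape[:-1], lambda idx: sum(t.at(idx + (j,)) for j in range(k)))

def matmul(a, b):
    """(M,K) @ (K,N) built only from the primitives above."""
    (m, k), (k2, n) = a.shape, b.shape
    assert k == k2
    a3 = expand(insert_axis(a, 1), (m, n, k))                   # (M,K) -> (M,1,K) -> (M,N,K)
    b3 = expand(insert_axis(permute(b, (1, 0)), 0), (m, n, k))  # (K,N) -> (N,K) -> (1,N,K) -> (M,N,K)
    return sum_last(mul(a3, b3))                                # reduce over K -> (M,N)

def realize(t):
    """Actually compute a 2D result; everything before this call only built closures."""
    return [[t.at((i, j)) for j in range(t.shape[1])] for i in range(t.shape[0])]

a = from_rows([[1, 2], [3, 4]])
b = from_rows([[5, 6], [7, 8]])
print(realize(matmul(a, b)))  # [[19, 22], [43, 50]]
```

The design choice worth noticing is that `matmul` itself does no arithmetic; it only composes primitives, so a compiler sitting below it would only ever need to understand those three op families.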