Most Agentic AI systems break in production.

Not because of models, but because of missing system design.

Everyone is building agents.

Few are building complete systems.

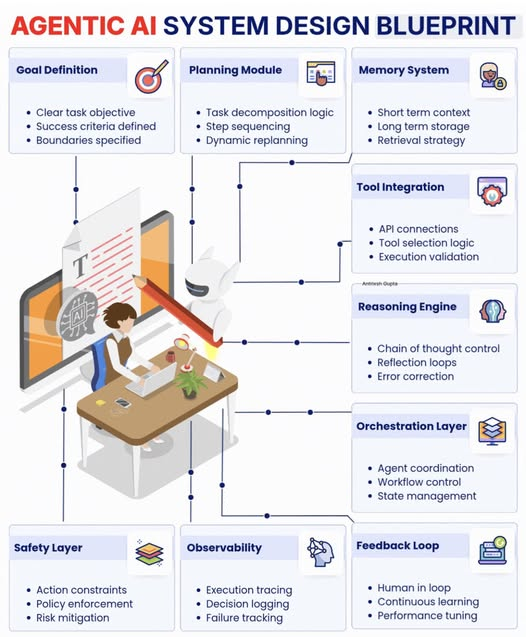

In this infographic, I break down 9 core components:

• Goal Definition

• Planning Module

• Memory System

• Tool Integration

• Reasoning Engine

• Orchestration Layer

• Safety Layer

• Observability

• Feedback Loop

Each component addresses a different failure mode.

→ Without clear goals, agents drift.

→ Without planning, they stall.

→ Without memory, they repeat mistakes.

→ Without tools, they can only generate text, not act.

→ Without reasoning, they hallucinate.

→ Without orchestration, they break at scale.

→ Without safety, they create risk.

→ Without observability, you stay blind.

→ Without feedback, they never improve.
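To make the nine components concrete, here is a minimal sketch of how they might fit together in a single loop. Everything in it is an assumption for illustration: the `ToyAgent` class, the hard-coded plan, the `search` and `delete_db` tools, and the success-rate metric are all hypothetical, and a real system would replace the plan and reasoning steps with model calls.

```python
from dataclasses import dataclass, field
from typing import Callable

Step = tuple[str, str]  # (tool name, tool input)


@dataclass
class ToyAgent:
    goal: str                                        # 1. Goal Definition
    tools: dict[str, Callable[[str], str]]           # 4. Tool Integration
    allowed: set[str]                                # 7. Safety Layer (allow-list)
    max_steps: int = 5                               # 6. Orchestration: step budget
    memory: list[str] = field(default_factory=list)  # 3. Memory System
    trace: list[dict] = field(default_factory=list)  # 8. Observability

    def plan(self) -> list[Step]:
        # 2. Planning Module: a hard-coded plan stands in for a model call.
        # It deliberately contains an unsafe step and a repeated step.
        return [
            ("search", self.goal),
            ("delete_db", "everything"),
            ("search", self.goal),
        ]

    def should_run(self, step: Step) -> bool:
        # 5. Reasoning Engine (toy version): skip work already in memory.
        return f"{step[0]}:{step[1]}" not in self.memory

    def run(self) -> list[str]:
        results = []
        for i, step in enumerate(self.plan()[: self.max_steps]):
            name, arg = step
            if name not in self.allowed or name not in self.tools:
                status = "blocked"   # safety layer refuses the call
            elif not self.should_run(step):
                status = "skipped"   # memory prevents repeated work
            else:
                results.append(self.tools[name](arg))
                self.memory.append(f"{name}:{arg}")
                status = "ok"
            # Every decision is recorded, including the ones that did not run.
            self.trace.append({"step": i, "tool": name, "status": status})
        return results

    def feedback(self) -> float:
        # 9. Feedback Loop: share of planned steps that actually ran.
        # A real system would use a signal like this to revise the plan.
        ok = sum(1 for t in self.trace if t["status"] == "ok")
        return ok / len(self.trace) if self.trace else 0.0


agent = ToyAgent(
    goal="refund policy",
    tools={
        "search": lambda q: f"results for {q}",
        "delete_db": lambda _: "deleted",
    },
    allowed={"search"},
)
print(agent.run())                           # ['results for refund policy']
print([t["status"] for t in agent.trace])    # ['ok', 'blocked', 'skipped']
```

Remove the allow-list and `delete_db` runs. Remove the trace and nobody can tell that a step was blocked. That is the point of the list above: each component covers a failure the others cannot.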

Agentic AI is not a prompt problem.

It is a systems engineering problem.

The teams that understand this will ship reliable AI.

The rest will keep debugging demos.

P.S. Which of these components is missing in your current AI system?

#RAG #AIEngineering #LLMSystems
