DC

1.6K posts

@devdcdev

Co-Founder/CTO @mindcloudhq ($3.2M ARR). Working on Stealth. Context is everything.

Los Angeles · Joined November 2021

574 Following · 602 Followers

Pinned post

DC retweeted

We're rolling out plugins in Codex.

Codex now works seamlessly out of the box with the most important tools builders already use, like @SlackHQ, @Figma, @NotionHQ, @gmail, and more.

developers.openai.com/codex/plugins

@garrytan The real question is: what is a good engineer? Someone who understands AI, or someone who is fast?

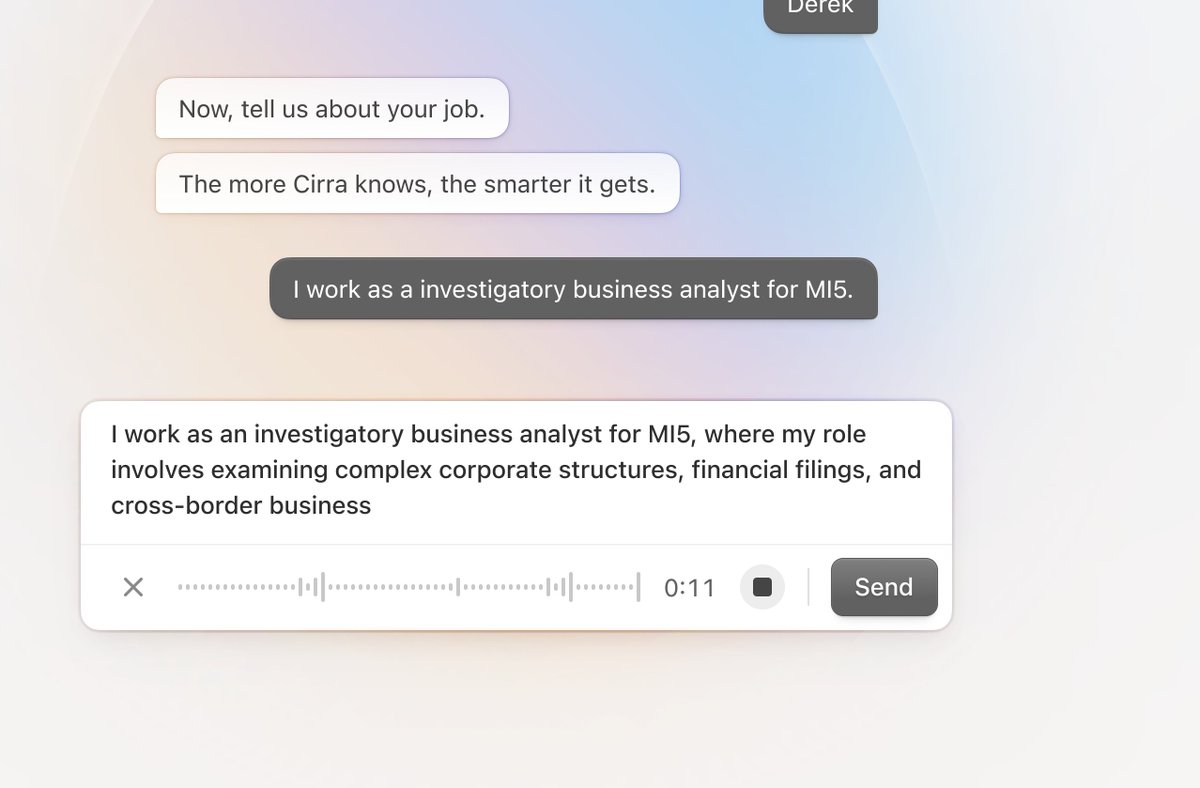

Time to get the new @wireframe launched. It's nowhere near finished yet, but I'll iterate as I go.

Thursday: wireframe.co

I’m not so sure. Your one example of reasoning is the one thing baked into models now.

I’ve been building harnesses for a month. Whether a capability lives client-side or server-side is determined by access.

Web search: server

Universal MCP: server

Knowledge: server

Compaction: server

Local file system: client by necessity

Local exec command: client

Exec tools (python, git): client or server based on containerization

None of those can or should be in models except for maybe compaction.
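To make the "determined by access" rule concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Side` enum, the `place_tool` helper, and the capability names are hypothetical labels for the list above, not any real harness API.

```python
from enum import Enum


class Side(Enum):
    CLIENT = "client"
    SERVER = "server"


def place_tool(needs_local_access: bool, containerized: bool = False) -> Side:
    """Hypothetical rule: a capability runs client-side only when it must
    touch the user's machine and is not isolated in a container."""
    if needs_local_access and not containerized:
        return Side.CLIENT
    return Side.SERVER


# Illustrative names mirroring the list above (not a real API).
PLACEMENT = {
    "web_search": place_tool(needs_local_access=False),
    "universal_mcp": place_tool(needs_local_access=False),
    "knowledge": place_tool(needs_local_access=False),
    "compaction": place_tool(needs_local_access=False),
    "local_file_system": place_tool(needs_local_access=True),
    "local_exec_command": place_tool(needs_local_access=True),
    # Exec tools (python, git) can go either way depending on containerization.
    "exec_tools_local": place_tool(needs_local_access=True, containerized=False),
    "exec_tools_container": place_tool(needs_local_access=True, containerized=True),
}
```

The only inputs are access requirements, which is the point of the list: nothing here depends on what the model itself can do.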

LLM systems swallow harness progress.

The most general/universal LLM innovations migrate from client-side harnesses to server-side tools.

Innovation typically happens first inside the harness.

For example, AI reasoning was originally a harness around GPT-3 ("let's think step by step"). This approach worked so well that it migrated behind the API as a tool (competitive reasons were also a factor, but general utility dominated).
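A rough sketch of what that early client-side reasoning harness looked like, in Python. The `complete` callable stands in for any text-completion call and is an assumption, as are the function names and the exact prompt layout; only the "Let's think step by step" trigger phrase comes from the post.

```python
from typing import Callable

STEP_BY_STEP = "Let's think step by step."


def build_reasoning_prompt(question: str) -> str:
    """Wrap a question so the model writes out its reasoning first."""
    return f"Q: {question}\nA: {STEP_BY_STEP}"


def answer_with_reasoning(question: str, complete: Callable[[str], str]) -> str:
    """Two-pass harness: elicit reasoning, then ask for the final answer.

    `complete` is a hypothetical stand-in for a model call that takes a
    prompt string and returns the continuation.
    """
    first_prompt = build_reasoning_prompt(question)
    reasoning = complete(first_prompt)
    # Feed the reasoning back in and ask the model to commit to an answer.
    final_prompt = f"{first_prompt} {reasoning}\nTherefore, the answer is"
    return complete(final_prompt).strip()
```

All of the logic lives outside the model, which is what made it a harness; once the same behavior is trained in and served behind the API, the wrapper is no longer needed.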

Many wouldn't think of AI reasoning as a tool, but it definitely is (it's a tool for natural-language program synthesis -- but that's another topic). The same happened with code interpreter, which started out as a client-side harness and moved server-side.

These tools are made available at inference time to the model alongside specific training to teach the model when and how to use each tool. Because of this, the line between tool and model can get quite blurry. Best to consider such tools as "internal" to the LLM system.

This is actually a good test of how general a harness feature is. If a feature remains "stuck" client-side, say inside Codex or Claude Code, then it's likely very task- or domain-specific.

Client-side harnesses typically encode a lot of human G factor for specific domains, whereas tools, due to the usage pressure on frontier LLMs, must be as general as possible or they wouldn't make the cut.

So if you care about measuring AGI, it's a good idea to pay attention to the default LLM system capabilities behind high-usage LLM APIs.

And if you care about bleeding-edge research ideas, such as RLMs, it's a good idea to pay attention to harness innovation.

Ultimately, AGI will not depend on a harness, in the same sense that humans don't depend on a harness.

DC retweeted

@jamesm @mindcloudhq i'd like to see a cat knocking something off this shelf please
