Ryan Wang 🇹🇼

6K posts

@ryanwang

An individual investor from Taiwan who focuses on tech, AI, autonomy, and robotics.

Taipei, Taiwan · Joined May 2018

1.6K Following · 1.2K Followers

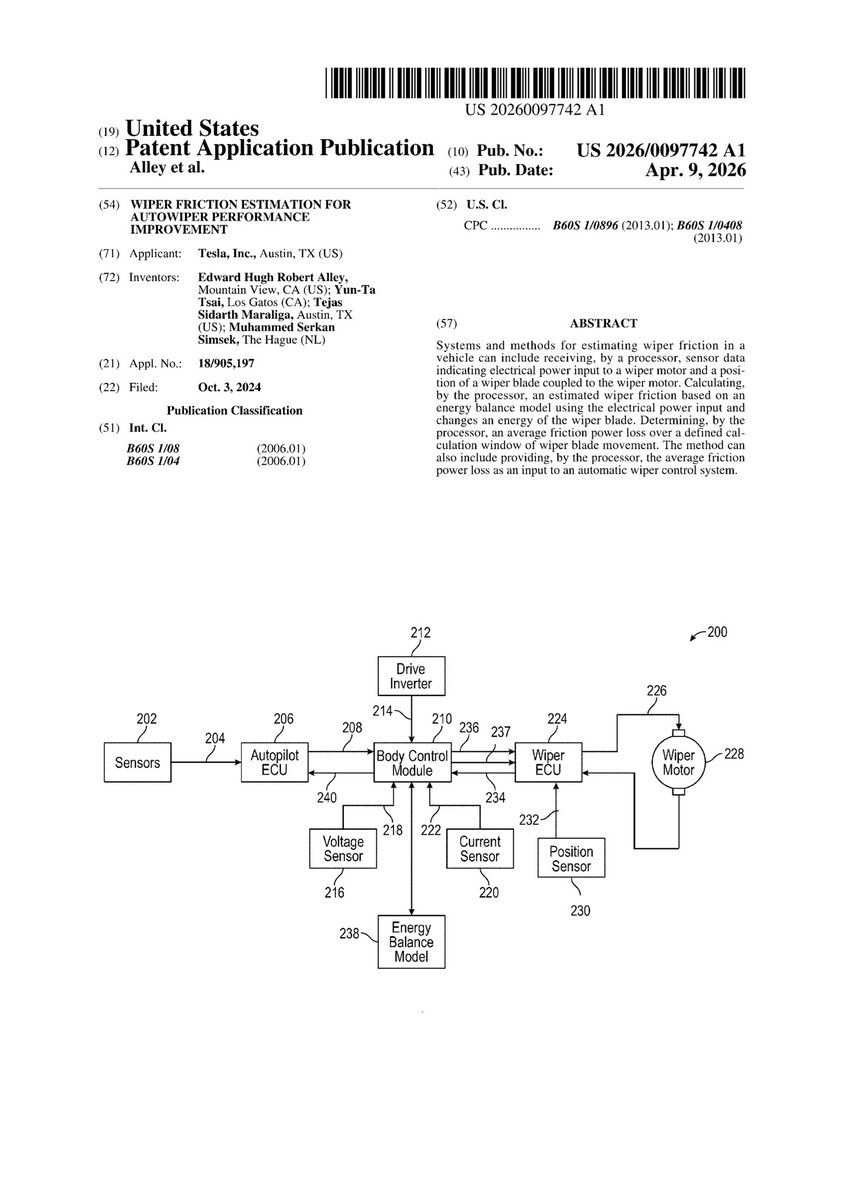

@seti_park @yunta_tsai Visual-based automatic wipers are indeed more difficult to solve than visual-based autonomous driving.😆😆😆

English

It looks like the wiper problem, a notorious Tesla FSD edge case, will be solved soon.

Yun-Ta Tsai (@yunta_tsai) took part in a patent related to this. 👀

Xber J@bogusjack

The FSD edge case that is hardest to solve: Auto Wiper

Korean

To all EU Tesla owners refreshing your feeds for the RDW approval today 🇪🇺🇳🇱

You are not the only ones holding your breath! 🇯🇵

Did you know? Japan's FSD release is completely dependent on your RDW approval.

Since Japan and the EU share the UN-R171 (WP.29) framework, the moment you get the green light, the final regulatory domino falls for Japan too! 🤝

Actually, our Japanese RHD HW4 vehicles just secretly downloaded FSD v14.2.2.5 in the background yesterday. We are literally just waiting for YOUR unlock key! 🔑

Let's make history today. Sending massive support from Japan! ⚡️

#Tesla #FSD #RDW

English

Ryan Wang 🇹🇼 reposted

@PeymanAbedirad @OG_Yogi @ICannot_Enough @TheCTStud @DavidMoss Yes. It's the first FSD version that adds reasoning. Reasoning can address issues that are otherwise very hard to solve. FSD is already superhuman in its perception and reflexes. Reasoning is a way to give it superhuman judgement as well.

English

@YorkDoc @tavi_chocochip It’s fair. Although the direction is correct, you can criticize his overly optimistic timeline and expectations.

English

@tavi_chocochip @ryanwang Things evolve so fast on the AI front… you can’t blame him. 6 months ago things were different. Look at how Claude models are improving

English

The release of FSD v14.3 and discussions from insiders have given me a clearer picture of why Tesla is not rushing to deploy a 10x larger parameter model with version 14.

Under the hardware constraints of existing HW4, Tesla’s engineering team must make a trade-off between MPI (Miles Per Incident, a safety metric) and inference latency.

By thoroughly overhauling the underlying technical architecture—including a full MLIR rewrite of the compiler and runtime, along with upgrades to RL training—they are attempting to shift the entire “autonomous driving efficiency curve” outward, rather than simply moving along the existing curve.

Increasing model size (Large Model) typically improves MPI (theoretically making the system safer with fewer incidents). However, on fixed hardware, a larger model often increases inference latency (slower response). If latency rises too much, even if MPI gets better, overall real-world safety may actually decline—because delayed reactions can allow small errors to compound into serious problems.

Simply forcing a Large Model would likely push the curve toward “higher MPI but significantly higher latency,” which in practice would be a poor trade-off.

The essence of v14.3 is to achieve an “outward shift of the curve.” It is not just about making the model bigger or smaller. Instead, through the MLIR reconstruction of the entire compiler and runtime, Tesla enables the same HW4 hardware to deliver higher performance at lower latency. At the same time, they use RL to optimize for hard examples, improving MPI without a noticeable increase in latency. This effectively pushes the entire “MPI–Latency efficiency curve” up and to the right—achieving a better trade-off.
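The "outward shift" argument can be made concrete with a toy sketch. Everything here is an assumption made for illustration: the three model sizes, their latencies and MPI values, the 50 ms budget, and the 1.25x runtime speedup are invented numbers, not Tesla figures. The sketch only shows the shape of the reasoning: with a fixed latency budget, a faster runtime lets a larger (higher-MPI) model fit on the same hardware.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Config:
    name: str
    latency_ms: float  # hypothetical inference latency on fixed hardware
    mpi: float         # hypothetical miles per incident

def best_under_budget(configs, budget_ms):
    """Return the highest-MPI config whose latency fits the budget, or None."""
    feasible = [c for c in configs if c.latency_ms <= budget_ms]
    return max(feasible, key=lambda c: c.mpi, default=None)

def with_faster_runtime(configs, speedup):
    """Same models, same hardware: only latency shrinks by the speedup factor."""
    return [Config(c.name, c.latency_ms / speedup, c.mpi) for c in configs]

# Invented numbers: bigger models score a higher MPI but run slower.
old_stack = [
    Config("small", 40.0, 1000.0),
    Config("medium", 60.0, 1500.0),
    Config("large", 90.0, 2500.0),
]
budget_ms = 50.0  # assumed latency ceiling for safe reactions

before = best_under_budget(old_stack, budget_ms)
after = best_under_budget(with_faster_runtime(old_stack, 1.25), budget_ms)
print(before.name, "->", after.name)
```

In this toy setup the "medium" model only becomes usable once the runtime is faster, while "large" still exceeds the budget, which mirrors the post's point that simply forcing a large model onto the same hardware is a poor trade-off.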

Elon Musk@elonmusk

@Chansoo Our rate of advancement with the small model has been so fast that the large model has not yet caught up. V15 will be the large model.

English

@DaySamual39307 No, they're optimizing FSD with a much better underlying architecture. It's like replacing your kitchen's old wood-burning stove with a microwave.

English

@ryanwang Is it fair to say they are trying to slim down the software so it can operate within a brain that’s too small? Metaphorically speaking

English

@rlloken @JoeTegtmeyer Tape out first half, test production 2nd half and mass production 2027

English

@ryanwang @JoeTegtmeyer What is the timeline for launching HW5?

English

@ryanwang "insiders" - so the rest of us are relegated to reading posts on X.

English

Ryan Wang 🇹🇼 reposted

Tesla FSD v14.3: The Removal of a Bottleneck

Most people looking at FSD v14.3 see a familiar story: incremental improvement. A bit faster, a bit smoother, a bit more refined. The headline number - roughly 20% faster reaction time - sounds like a solid upgrade, but nothing revolutionary.

That interpretation misses the point entirely.

v14.3 is not about improving the model. It’s about replacing the system underneath the model.

To understand why this matters, you have to separate two parts of Tesla’s AI stack.

First, there is the training environment. This is where Tesla uses massive compute clusters to build increasingly powerful neural networks. In this environment, the models can be as large and as sophisticated as Tesla wants.

Second, there is the runtime environment inside the car. This is where those models actually have to operate - in real time, under strict constraints of compute, memory, and latency.

Historically, the gap between these two worlds has been a major constraint.

Tesla could train a highly capable model on the server side, but when it came time to deploy that model into the vehicle, compromises were unavoidable. The model had to be compressed, simplified, and optimized to fit within the limitations of the vehicle hardware. In the process, some of its capability was inevitably lost.

The result was not a lack of intelligence, but a bottleneck in how that intelligence was delivered.

With v14.3, Tesla rebuilt both the compiler and the runtime from the ground up using MLIR (Multi-Level Intermediate Representation).

The compiler is responsible for taking a trained model and translating it into a form that can run efficiently on the vehicle. The runtime is responsible for executing that model in real time inside the car.

By rewriting both layers, Tesla has fundamentally improved how models are converted and how they are executed.
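The compiler/runtime split described above can be illustrated with a toy sketch. This is not MLIR and not Tesla's stack: `compile_fused` and `run` are hypothetical names, and the "model" is just a chain of three element-wise functions. The sketch shows one thing a compiler can do for a runtime: rewrite the same computation so it needs fewer passes over the data while producing identical results.

```python
from typing import Callable, List, Tuple

Op = Tuple[str, Callable[[float], float]]  # (name, element-wise function)

def compile_fused(ops: List[Op]) -> List[Op]:
    """Toy 'compiler' pass: collapse a chain of element-wise ops into one op."""
    if not ops:
        return []

    def fused(x: float) -> float:
        for _, fn in ops:
            x = fn(x)
        return x

    return [("+".join(name for name, _ in ops), fused)]

def run(ops: List[Op], data: List[float]) -> Tuple[List[float], int]:
    """Toy 'runtime': each op is one full pass over the data; count the passes."""
    passes = 0
    for _, fn in ops:
        data = [fn(x) for x in data]
        passes += 1
    return data, passes

# A stand-in "trained model": scale, add a bias, then apply ReLU.
model: List[Op] = [
    ("scale", lambda x: 2.0 * x),
    ("bias", lambda x: x + 1.0),
    ("relu", lambda x: max(0.0, x)),
]
inputs = [-2.0, -0.5, 0.0, 1.5]

unfused_out, unfused_passes = run(model, inputs)
fused_out, fused_passes = run(compile_fused(model), inputs)
print(unfused_passes, "passes ->", fused_passes, "pass; same output:", fused_out == unfused_out)
```

The model itself is unchanged in both runs; only the form handed to the runtime differs, which is the distinction the article draws between improving the model and improving the system that delivers it.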

This is why the improvements show up not just in raw speed, but in qualitative behavior. Early testers are reporting smoother responses, more natural decisions, and a noticeable increase in responsiveness. These are not just signs of a better model - they are signs of a better system delivering that model.

For the past several versions - v12 through v14 - progress was largely driven by improving the model itself. But the underlying inference framework remained largely the same.

That meant progress was increasingly constrained. Even as the model improved, the system responsible for running it became the limiting factor.

So, v14.3 marks a shift in approach.

Instead of continuing to push only on model performance, Tesla upgraded the entire stack. The focus is no longer just on how smart the model is, but on how efficiently that intelligence can be translated and executed in the real world.

Elon Musk has referred to this kind of change as a “final piece of the puzzle.” That phrasing can be misleading if interpreted as an endpoint.

In reality, this is a reset.

By replacing the underlying system, Tesla has removed a key constraint that was limiting future progress. The implication is not that FSD is complete, but that future versions - v15, v16, and beyond - can advance much more rapidly and with fewer compromises.

In practical terms, this means larger, more capable models can be deployed more effectively. It means improvements made in training are more likely to carry through to real-world performance in the vehicle. And it means iteration cycles can accelerate.

One of the more underappreciated aspects of this change is its potential impact on existing vehicles, particularly those running HW3.

The new MLIR-based system is designed to take better advantage of available hardware through techniques like quantization, operator fusion, and heterogeneous optimization. In simple terms, it allows Tesla to extract more performance from the same physical chips.
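As a rough illustration of the first technique named above, here is a minimal sketch of symmetric int8 quantization in plain Python. It is a generic textbook scheme, not the scheme Tesla uses; the function names and the sample weights are invented for the example.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.003, 0.5]  # made-up weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored in 8 bits instead of 32, at the cost of a rounding error of at most half the scale per value. That is the sense in which such techniques trade a little precision for more performance from the same physical chips.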

A potential “v14 Lite” for HW3 vehicles: With a more efficient runtime, older hardware may be able to run more advanced capabilities than previously thought possible.

So, the real story here is that Tesla has addressed a structural limitation in its AI system. It has improved the way intelligence is packaged, delivered, and executed. This is not just an upgrade. It is the removal of a bottleneck.

v14.3 should not be viewed as the culmination of Tesla’s FSD efforts. The visible changes today may seem incremental. The invisible changes beneath them are anything but. Tesla did not just make the system faster. It made it ready for what comes next.

Elon Musk@elonmusk

Tesla V14.3 self-driving review. The point releases will bring polish. V15 will far exceed human levels of safety, even in completely unsupervised and complex situations.

English

@tavi_chocochip @ryanwang Average users may not appreciate the difference of the RL rewrite yet, but internally it must have been a huge task and a new foundation to build on. The full weight of inference is yet to show up in this stack

English

@ryanwang @JoeTegtmeyer Just curious, then why not quickly roll out AI5 then?

English

@tavi_chocochip It’s like you’re putting together a massive jigsaw puzzle. The difference in one key piece determines whether you can smoothly complete the rest of the puzzle afterward.

English

@ryanwang If the situation is as described, obv. the Tesla AI engineers were fully aware of it all this time; the alternative is they're incompetent.

So why did Elon keep pushing the narrative of the 10x parameter model (incl. reasoning, "last piece of the puzzle") in 14.3 for months?

English

@OptimusUpRyan69 I think it’s still the same — safety remains the top priority.

English

@JayBarlowBot It can, but it is approaching its limit and needs optimization. So they chose to rework the old compiler architecture first.

English

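The release of FSD v14.3 and discussions among insiders let me infer more clearly why Tesla was in no hurry to ship a 10x-parameter large model in v14.

Under the existing hardware constraints of HW4, Tesla's engineering team has to trade off MPI (Miles Per Incident, the distance driven per incident) against inference latency.

By thoroughly reworking the underlying technical architecture (an MLIR rewrite of the compiler and runtime, upgraded RL training, and so on), they are trying to shift the "autonomous driving efficiency curve" formed by these two metrics outward, rather than simply moving along the existing curve.

Increasing model size (Large Model) usually raises MPI (in theory safer, with fewer incidents). On fixed hardware, however, a bigger model tends to increase inference latency (slower reactions). If latency rises too much, overall real-world safety may actually fall even though MPI improves, because reactions come too late and small errors more easily compound into big problems.

Forcing a Large Model onto the hardware directly would likely move the curve toward "high MPI but even higher latency," which does not pay off in practice.

The essence of v14.3 is this "outward shift of the curve." It is not simply about making the model bigger or smaller. Instead, rebuilding the entire compiler and runtime with MLIR lets the same hardware deliver higher performance at lower latency. At the same time, RL is used to optimize for hard examples, so MPI improves without latency getting noticeably worse. This amounts to pushing the whole "MPI–Latency efficiency curve" up and to the right (a better trade-off).

@Tsla99T @raines1220 What do you think?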

Chinese