Ever Upward ☝🚀✨✝⚔️⚡🔛

7.8K posts

@TimBallFL

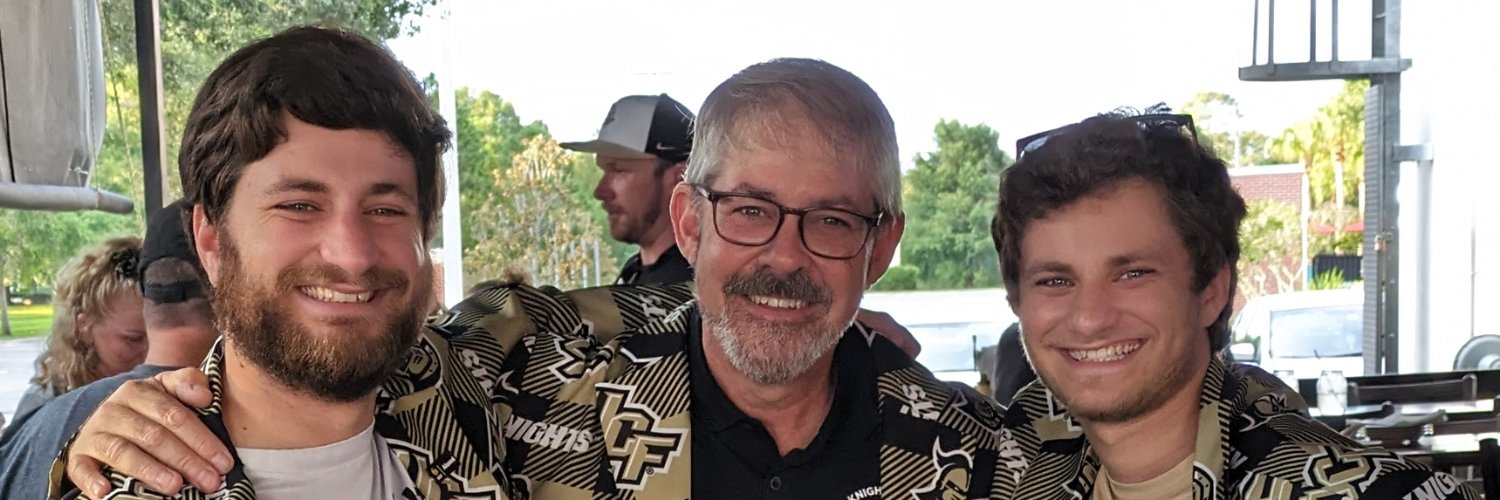

I am not my own. God Loves You. Husband, dad, grandpa, alumnus of UCF, #TheSpaceU. #GKCO ⚔️⚡🔛 #UCFTwitterMafia

@emzanotti I would settle for somebody who can play and sing...

There is a heavenly queen mother. Her name is Mary. And she is our refuge, our sweetness, and our hope.

Like most Americans, I’ve enjoyed watching the Super Bowl. But the halftime shows began pushing moral boundaries and have become more and more sexualized. This year, they’re having Bad Bunny perform. The @NFL leadership is pushing this sexualized agenda. Thank you, @TPUSA and @MrsErikaKirk for providing an alternative—“The All-American Halftime Show” with the agenda of celebrating family, faith, and freedom! tpusa.com/live/tpusa-s-a…

why is he pointing away from the rocket?

I love this school so much, man. I’m proud to be an AlumKnight. Side note: that entrance was awesome. Football needs the light sticks. And mannn, let the hockey team do space!