will harris

7.1K posts

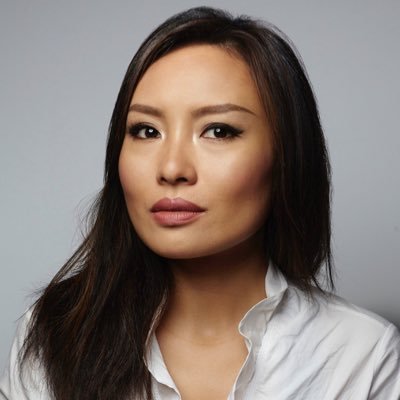

@boujeehacker

fullstack swe prev @linkedin , @joinodf OD50-1, @opensea, 💻 diaspora ✈ #atl, #aus, #sf, #mia, #nyc, 🛬

I've always used fetch instead of axios because that's what's used in the browser. Like, why would you even use axios in the first place?

unpopular opinion: 16GB is plenty if software engineers actually cared about memory efficiency. chrome eating 4GB for 12 tabs is not a hardware problem, it's a software disgrace. docker consuming 2GB idle is not a feature, it's laziness. we live in an era where people optimize every single token to save $0.001 on API costs but happily ship electron apps that eat 500MB to display a todo list. if the industry treated RAM the way we treat inference compute - obsessively measuring every byte - 16GB would feel luxurious. the hardware isn't the problem, the software is @adxtyahq

Absolutely insane week for agentic engineering. 37K LOC per day across 5 projects. Still speeding up.

The best part is that the America that Japanese people adore the most… is the same one that coastal elites call “flyover trash”. They’re not autistically LARPing NY cynicism or SF polycule / LA wellness culture. They’re drawn to the heartland of the American South and all its trappings - the jacked-up trucks, backyard BBQs, country radio, big skies and the friendly "yes ma'am" drawl.

your company’s ci/cd pipeline.

there was a moment in 2023 when your team was using Figma, Linear, VS Code, Typescript, React 18, esbuild or Next.js with the pages router. Peak software in every category. wonderful stack. you were young and happy and in love

would you rather:
- have $10M today
- have chatgpt in 2012

Okay let's see who can reply to this

Engineering job openings are at the highest levels we’ve seen in over 3 years. There are over 67,000 (!!!) eng openings at tech companies globally right now, with 26,000 just in the U.S. We don’t know if there would have been more open roles if not for AI or if AI is actually leading to more open roles, but since the start of this year, the increase in open eng roles is accelerating even more.

I need a GitHub too! Is it like that or nah?

Terence Tao put it plainly: there is no evidence that LLMs exhibit genuine creativity. Yes, they have solved some Erdős problems. But these are low-hanging fruit, questions that attracted little attention and that yield once the right existing techniques are applied. That is not creativity. That is search plus recombination. Yes, LLM outputs can look impressive. But look at who is impressed: typically non-experts. Experts know very well that LLM performance gets terrible when you approach the frontier of human knowledge. And this is not a temporary gap. It reflects a structural limitation. We do not fully understand human creativity. But we do know a key property: Conceptual leaps: the ability to generate new representations, not just recombine existing ones. LLMs do not do this. They interpolate in representation space. They operate within existing conceptual frameworks; they do not create new ones. This is why we haven’t “yet seen them take the next step”.

This cannot be Atlanta, I was told there's no demand for walkable neighborhoods in the metro area.

Atlanta has magic in the air ✨