c. mcdonnell

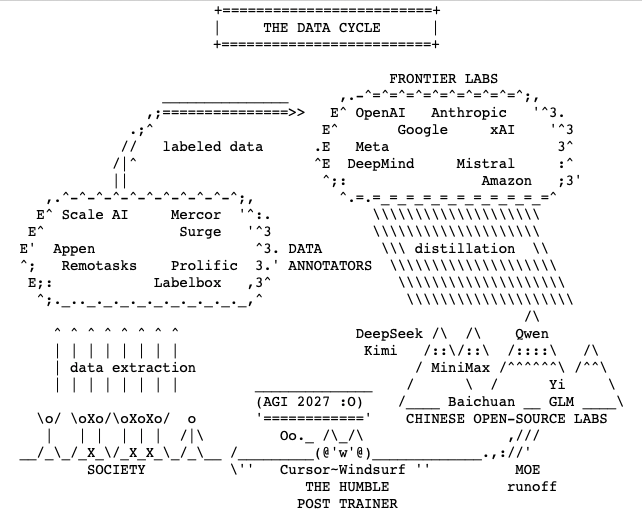

I ran 71 quick experiments, each 500 out of ~13,000 steps, for OpenAI's challenge.

1. Mixture of Experts is the absolute WINNER (very surprising, since it shouldn't be for small LLMs). Expert count matters most: 4 (best) > 3 >> 2.
2. UNTIED embeddings work; tied ones are a disaster.
3. Depthwise convolution is a DEAD END.

Insights:
1. 4-expert MoE + leaky ReLU -> -0.048 BPB, the clear winner.
2. Untied factored embeddings (bn128) -> -0.031 BPB, worth combining with MoE.
3. MoE + QAT combo -> preserves quantized quality for the submission.

Dead ends:
1. Depthwise convolution -> every variant hurts, and bigger kernels hurt more.
2. Tied factored embeddings -> catastrophic, especially at small bottlenecks.
3. Weight sharing -> not competitive with MoE on quality.
4. Conv + anything combos -> compound the damage.

Next steps:
1. Validate MoE 4e + leaky at 2,000-5,000 steps, with multiple seeds.
2. Test MoE 4e + leaky + untied bn128; the two biggest wins may stack.
3. Do a full run (13,780 steps) of the best combo to see if it beats the 1.2244 BPB on the leaderboard.

71 experiments, 3 GPUs, ~500 steps each.

Vuk Rosić

500-step training mainly helps us eliminate the VERY BAD losers; the winners still need to be tested with longer training. Thank you @novita_labs for the compute!
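For readers unfamiliar with the two winning ideas, here is a minimal numpy sketch of what "4-expert MoE + leaky ReLU" and "untied factored embeddings (bn128)" could look like. The post does not give implementation details, so everything here is an assumption: top-1 softmax routing, a leaky ReLU slope of 0.01, and reading "bn128" as a 128-wide bottleneck between the vocabulary and the model dimension. The class and function names (`MoEMLP`, `FactoredEmbeddings`, `leaky_relu`) are hypothetical, and this is not the experimenter's code.

```python
import numpy as np


def leaky_relu(x, slope=0.01):
    # Assumed slope; the post only says "leaky ReLU".
    return np.where(x > 0, x, slope * x)


class MoEMLP:
    """Hypothetical top-1 routed mixture-of-experts feed-forward block."""

    def __init__(self, d_model, d_hidden, n_experts=4, seed=0):
        rng = np.random.default_rng(seed)
        self.router = rng.normal(0, 0.02, (d_model, n_experts))
        self.w_in = rng.normal(0, 0.02, (n_experts, d_model, d_hidden))
        self.w_out = rng.normal(0, 0.02, (n_experts, d_hidden, d_model))

    def __call__(self, x):
        # x: (tokens, d_model). Softmax router scores per token.
        logits = x @ self.router
        probs = np.exp(logits - logits.max(axis=-1, keepdims=True))
        probs /= probs.sum(axis=-1, keepdims=True)
        expert = probs.argmax(axis=-1)  # top-1 expert for each token
        out = np.zeros_like(x)
        for e in range(self.w_in.shape[0]):
            idx = np.nonzero(expert == e)[0]
            if idx.size == 0:
                continue  # no tokens routed to this expert
            h = leaky_relu(x[idx] @ self.w_in[e])
            # Scale each expert's output by its router probability.
            out[idx] = (h @ self.w_out[e]) * probs[idx, e:e + 1]
        return out, expert


class FactoredEmbeddings:
    """Hypothetical untied embeddings factored through a small bottleneck."""

    def __init__(self, vocab, d_model, bottleneck=128, seed=0):
        rng = np.random.default_rng(seed)
        # Input side: vocab -> bottleneck -> d_model.
        self.in_table = rng.normal(0, 0.02, (vocab, bottleneck))
        self.in_proj = rng.normal(0, 0.02, (bottleneck, d_model))
        # Untied output side: its own factors instead of reusing in_table.T.
        self.out_proj = rng.normal(0, 0.02, (d_model, bottleneck))
        self.out_table = rng.normal(0, 0.02, (bottleneck, vocab))

    def embed(self, ids):
        return self.in_table[ids] @ self.in_proj

    def logits(self, h):
        return (h @ self.out_proj) @ self.out_table
```

A "tied" variant would compute output logits with `self.in_table.T` instead of a separate `out_table`, which is the configuration the post reports as catastrophic at small bottlenecks.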

The more you look at actual nutrition and health science, the more fixated you become on fiber intake

films set the ideas and narratives that kick off passions and careers