Jayjun Lee

133 posts

@jayjunleee

Robotics Research Intern @NvidiaAI; Robot Learning PhD student @UMRobotics; Prev @imperialcollege

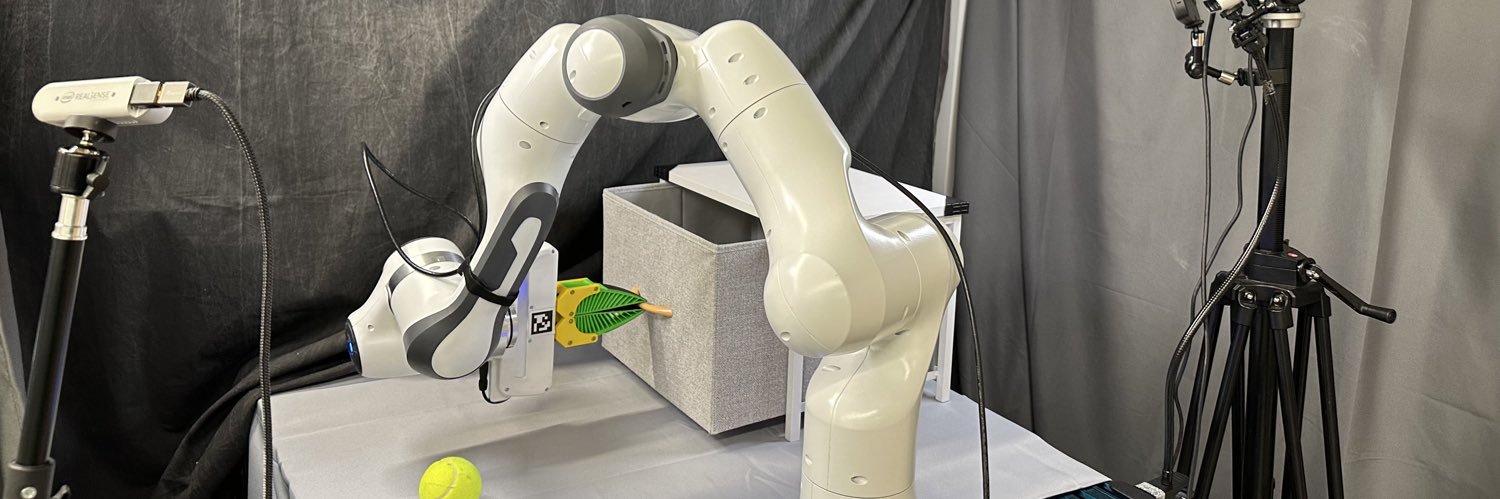

Robot memory methods are growing fast, but systematic evaluation is largely lacking. 📉 Introducing RoboMME: a new benchmark for memory-augmented robotic manipulation! 🤖🧠 Featuring 16 tasks across temporal, spatial, object, and procedural memory 🔗 robomme.github.io

Has visual fidelity outpaced dynamic fidelity in tactile simulation for sim-to-real transfer of policies? Introducing HydroShear 🏄‍♂️: a hydroelastic tactile shear simulation for training zero-shot sim-to-real tactile policies in contact-rich tasks where fingertip force and shear matter most! Webpage: hydroshear.github.io Robot videos in 1x 🧵👇

T-5 to CoRL'25! 🇰🇷🤖 Join us on Sep 27th for the 1st Human to Robot (H2R) workshop, where we discuss the future of sensorizing, modeling, and (robot) learning from humans. sites.google.com/view/h2r-corl2…

AimBot: A Simple Auxiliary Visual Cue to Enhance Spatial Awareness of Visuomotor Policies

How can robots feel and localize extrinsic contacts through both vision 👀 and touch 🖐️? #RSS2025 @RoboticsSciSys Introducing ViTaSCOPE: a sim-to-real visuo-tactile neural implicit representation for in-hand object pose and extrinsic contact estimation! jayjunlee.github.io/vitascope/