Owen Gregorian (@OwenGregorian)

AI Cannot Self Improve and Math behind PROVES IT! | Devsimsek

So, I saw a LinkedIn post (forwarded by a friend, thanks again) that stopped my doom-scrolling dead in its tracks. The headline? “Researchers just mathematically proved AI cannot self-improve.” My first reaction was the classic developer response: “I called it earlier!” My second reaction was to actually read the paper.

Turns out – yeah, we’re right. And the math behind it is kind of uncomfortably elegant.

The Dream They All Had

The whole “AI singularity” narrative goes something like this: we build a smart AI, that AI improves itself, the improved version is smarter so it improves itself even faster, and then – boom – we either all live in utopia or become paperclips. This is called Recursive Self-Improvement (RSI), and it’s been the backbone of both AI doomer manifestos and Silicon Valley pitch decks for a decade.

The implicit assumption is that an AI training on its own outputs would get better over time. Like compound interest, but for intelligence. Sounds reasonable, right?

Yeah. About that.

What the Paper Actually Says

A recent arXiv paper – “On the Limits of Self-Improving in Large Language Models” – doesn’t just argue against RSI. It formally proves it’s self-defeating.

The core idea: model the self-referential training loop as a dynamical system on the space of probability distributions. When a model trains on its own generated data (synthetic outputs), it’s not learning from reality anymore – it’s learning from a distorted reflection of itself.

The paper proves that under a diminishing supply of fresh, authentic data, this system converges to a fixed point – a degenerate distribution with low diversity and high bias. The technical term is model collapse, and it’s been observed empirically too. But now there’s a formal proof that, under those conditions, it’s inevitable rather than just bad luck.
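To make the "dynamical system on distributions" idea a bit more concrete, here is a toy worked example. It is my own simplification and not the paper's notation or proof: I assume each generation of the model is a single Gaussian that gets refit by maximum likelihood on n samples drawn from the previous generation, with no fresh data at all. The symbols \mu_t, \sigma_t and n are assumptions made up for this sketch.

```latex
% Toy model (an assumption for illustration, not the paper's setup):
% generation t is N(\mu_t, \sigma_t^2), refit on n of its own samples.
\begin{align}
x_1, \dots, x_n &\sim \mathcal{N}(\mu_t, \sigma_t^2) \\
\mu_{t+1} &= \frac{1}{n} \sum_{i=1}^{n} x_i \\
\sigma_{t+1}^2 &= \frac{1}{n} \sum_{i=1}^{n} (x_i - \mu_{t+1})^2 \\
\mathbb{E}\left[\sigma_{t+1}^2 \mid \sigma_t^2\right] &= \frac{n-1}{n}\,\sigma_t^2
\quad\Longrightarrow\quad
\mathbb{E}\left[\sigma_t^2\right] = \left(\frac{n-1}{n}\right)^{t} \sigma_0^2
\xrightarrow[t \to \infty]{} 0
\end{align}
```

Even in this tiny example the expected variance shrinks geometrically with every generation, so the limiting distribution is a point mass: zero diversity, and a mean that has drifted to wherever the sampling noise took it.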

In plain terms: the model doesn’t climb toward superintelligence. It slowly forgets what the real world looks like.

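# Oversimplified metaphor as code
def self_improve(model, real_data_supply, min_supply=0.01):
    # min_supply is an illustrative cutoff: with a bare "> 0" check the
    # loop would only stop once *= 0.9 underflows to zero.
    while real_data_supply > min_supply:
        synthetic = model.generate()  # the model samples its own outputs
        model.train(synthetic)        # ...and trains on them
        real_data_supply *= 0.9       # diminishing fresh data
    return model  # spoiler: this model is now dumber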

The proof also extends beyond single LLMs – it covers ecosystems of interacting models and multi-modal systems. So no, a committee of AIs feeding each other outputs doesn’t escape the problem. It might actually make it worse.

The “Curse of Recursion”

There’s a term I love from this paper: the curse of recursion. When your training data is increasingly polluted with your own synthetic outputs, the tails of your distribution disappear first. Rare but important patterns – edge cases, nuanced reasoning, outlier knowledge – get washed out. The model converges toward a bland, high-confidence, low-variance output space.
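The "tails disappear first" effect is easy to reproduce in a toy setting. The sketch below is my own illustration, not code from the paper: a "model" is just a table of token probabilities, and each generation it is re-estimated from a finite sample of its own outputs. The token names, probabilities, and the helper names `resample_generation` and `run_collapse` are all hypothetical.

```python
import random
from collections import Counter


def resample_generation(probs, n_samples, rng):
    """Sample n_samples tokens from probs, then re-estimate probs from the counts."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    draws = rng.choices(tokens, weights=weights, k=n_samples)
    counts = Counter(draws)
    # Tokens that were never drawn get probability 0 and can never come back.
    return {t: counts[t] / n_samples for t in tokens}


def run_collapse(n_generations=50, n_samples=200, seed=0):
    """Iterate the sample-then-refit loop and track how many tokens survive."""
    rng = random.Random(seed)
    # Toy "real" distribution with a thin tail (made-up numbers).
    probs = {"very_rare": 0.01, "rare": 0.02, "uncommon": 0.07, "common": 0.90}
    support_sizes = []
    for _ in range(n_generations):
        probs = resample_generation(probs, n_samples, rng)
        support_sizes.append(sum(1 for p in probs.values() if p > 0))
    return probs, support_sizes


if __name__ == "__main__":
    final_probs, support_sizes = run_collapse()
    print("surviving tokens per generation:", support_sizes)
    print("final distribution:", final_probs)
```

The number of surviving tokens can only stay flat or go down, because a token whose estimated probability hits zero is never sampled again. The rare tokens are the ones most exposed to that: a single unlucky generation is enough to erase them for good.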

You can arguably see hints of this already. Ask a model that’s been RLHF’d into oblivion something unusual, and it’ll confidently give you a smooth, plausible-sounding, completely wrong answer. To me, that looks a lot like collapse in slow motion.

The math backing this is rooted in dynamical systems theory – specifically the idea that without an external “forcing function” (real, diverse, human-generated data), the system has no energy to maintain the complexity of the original distribution. It inevitably degenerates.
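Here is a minimal simulation of the "forcing function" idea, under heavy assumptions of my own: the "model" is a one-dimensional Gaussian, the "real world" is a standard normal, and every generation the model is refit on a mix of fresh real samples and its own synthetic ones. The function name `simulate` and the parameter `fresh_fraction` are made up for this sketch; nothing here comes from the paper's actual construction.

```python
import random
import statistics


def simulate(fresh_fraction, n_generations=500, n_samples=20, seed=0):
    """Refit a Gaussian each generation on a mix of fresh and synthetic samples.

    fresh_fraction is the share of each training batch drawn from the
    real distribution N(0, 1); the rest comes from the current model.
    Returns the model's final (mean, standard deviation).
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 matches the real distribution
    n_fresh = round(fresh_fraction * n_samples)
    for _ in range(n_generations):
        fresh = [rng.gauss(0.0, 1.0) for _ in range(n_fresh)]
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_samples - n_fresh)]
        batch = fresh + synthetic
        # Maximum-likelihood refit: sample mean and population std deviation.
        mu = statistics.fmean(batch)
        sigma = statistics.pstdev(batch)
    return mu, sigma


if __name__ == "__main__":
    for fraction in (0.0, 0.5):
        mu, sigma = simulate(fresh_fraction=fraction)
        print(f"fresh_fraction={fraction}: mean={mu:.3f}, std={sigma:.3g}")
```

With `fresh_fraction=0.0` the standard deviation shrinks toward zero over the generations, while a steady share of real samples keeps it in the neighborhood of the true value. It is a cartoon, but it shows why the external data does the work: the fresh samples keep re-injecting the spread that refitting on your own output drains away.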

What This Actually Means for the Industry

This doesn’t mean AI stops improving. It means the self-improvement loop fantasy is dead – at least the version where you unplug the humans and let it run.

What it does mean:

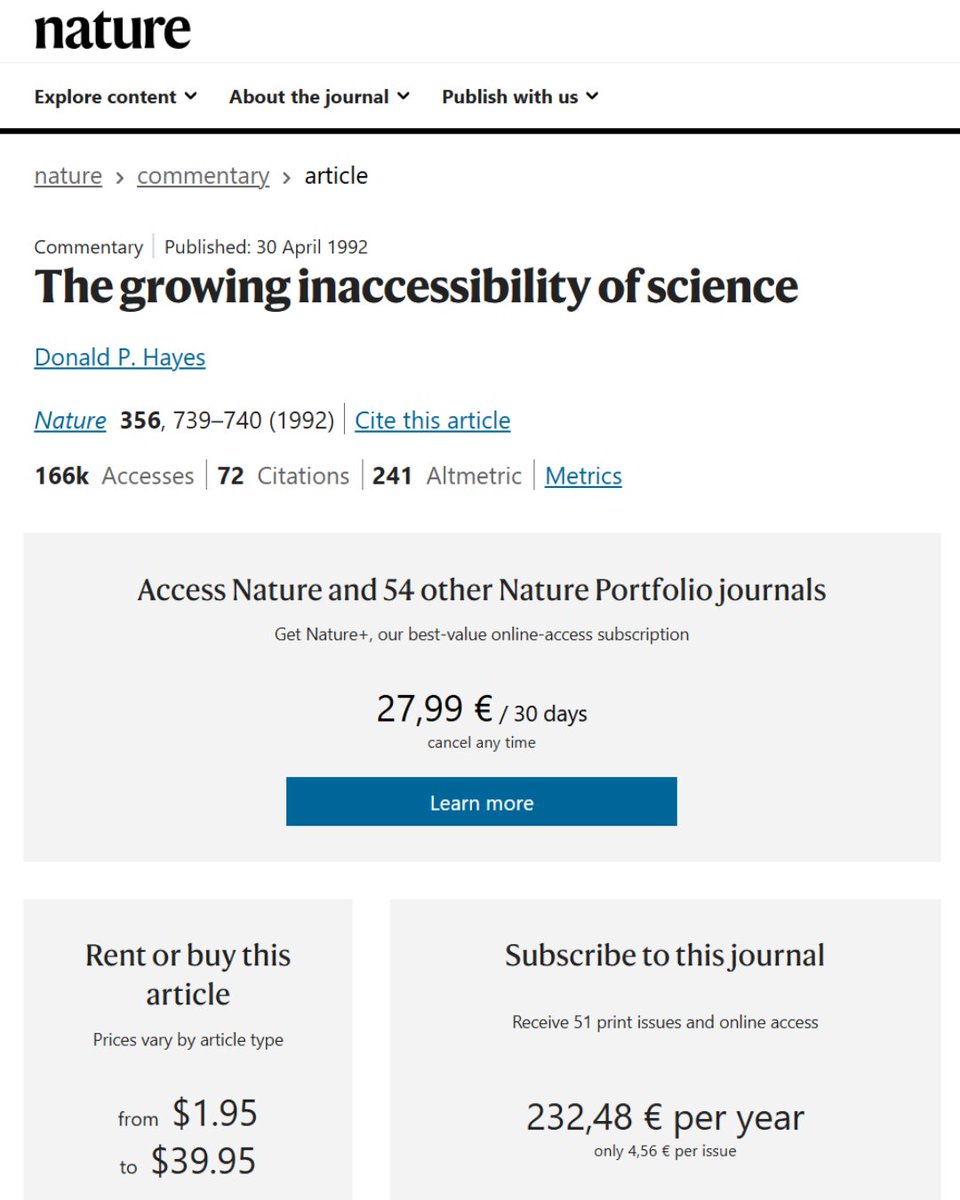

- Human-generated data is irreplaceable. The “internet is running out of training data” problem just got mathematically formalized. You can’t fake your way out of it with synthetic data at scale.

- RSI as a path to AGI is a dead end. At least the naive version – train → generate → retrain → repeat. It converges, but downward.

- Curation matters more than quantity. In this setting, a smaller dataset of high-quality, diverse, authentic human output beats a massive synthetic pile. Quality over quantity isn’t just a vibe – it’s mathematically grounded.

- We’re not getting a free intelligence explosion. The singularity crowd’s timeline assumptions might need some… recalibration.

Personally, this makes me feel vindicated about something I’ve been quietly skeptical about: the idea that scale alone solves everything. It doesn’t. Data provenance matters. Signal quality matters. The universe doesn’t give you compound interest on noise.

The Beautiful Irony

Here’s what gets me: the very mechanism people proposed to transcend human limitations – training on AI-generated data to break free from the finite supply of human knowledge – is mathematically proven to destroy the model’s representation of reality.

The escape route collapses into a trap.

It’s like trying to lift yourself off the ground by pulling on your own shoelaces. The harder you pull, the more you reinforce the failure.

Does this mean AGI is impossible? No. (Much as I’d like to say yes, I haven’t done enough research to comment on it, nor do I want to.) Does it mean the naive RSI path is a dead end? Mathematically, yes.

The smarter path – and what labs are quietly shifting toward – is better data, better curation, better grounding in reality. Which, ironically, means humans stay in the loop longer than the singularitarians wanted.

smsk.dev/2026/04/26/ai-…