Fabricated Knowledge

@fabknowledge

Simplifying the world of semiconductor investing in the age of AI. Part of the @semianalysis_ gang.

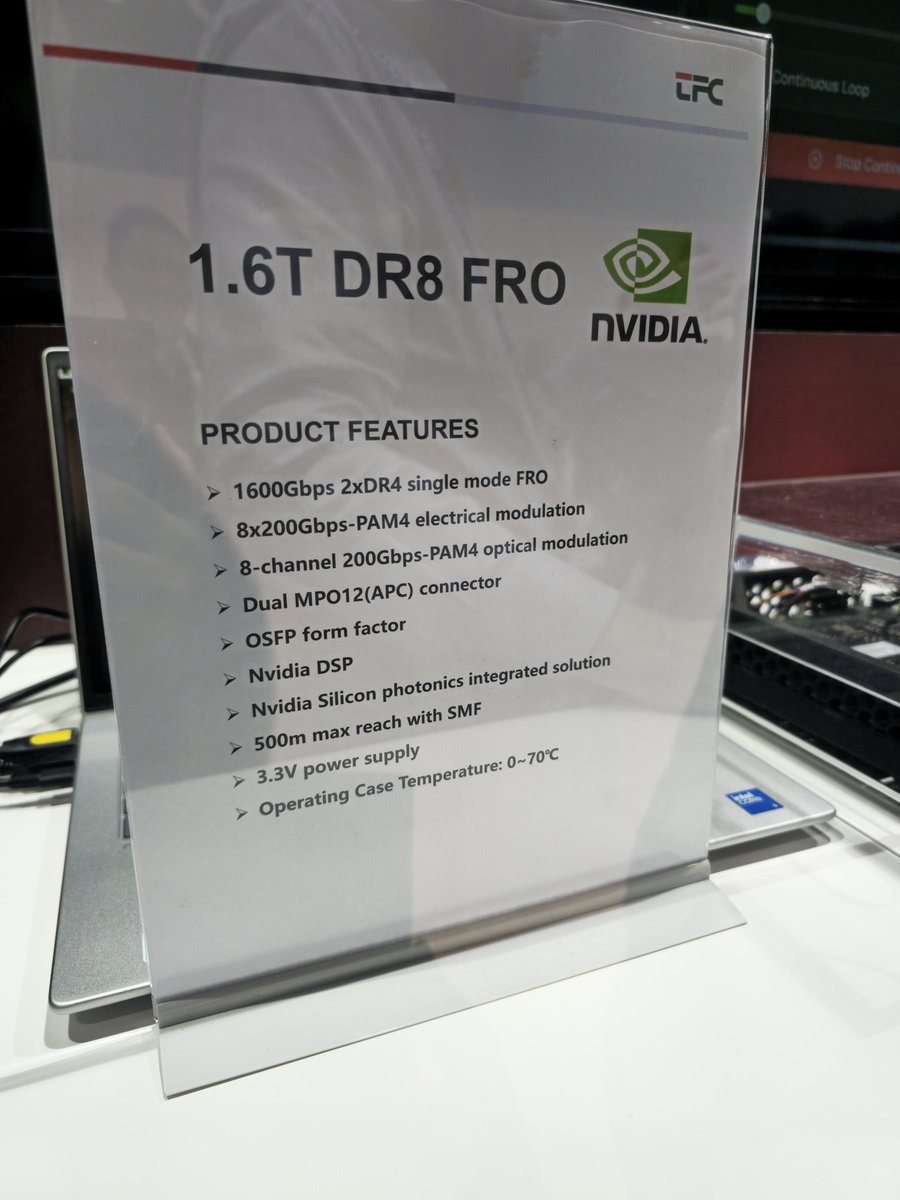

Deep | $TSEM: SiPho Capacity Inflection Drives Multi-Fold Growth Cycle

AI data center compute clusters are scaling from thousands of GPUs to tens or even hundreds of thousands of nodes. At this magnitude, traditional copper interconnects are hitting hard physical limits: once transmission rates reach 800G and above, reach shortens dramatically while power consumption climbs steeply. To bypass these constraints, Silicon Photonics (SiPho) is becoming the essential backbone for AI networking.

As of 4Q25, Tower Semiconductor's Silicon Photonics business has emerged as the company's primary growth engine. Revenue more than doubled from $106mn in 2024 to $228mn in 2025 (about 2.15x), and the annualized revenue run rate exceeded $360mn by the end of 2025. As the industry transitions from 400G/800G toward 1.6T, Tower has positioned itself as the lead supplier of 1.6T Silicon Photonics wafers.

We believe Tower is currently the premier SiPho PIC (Photonic Integrated Circuit) foundry with a distinct competitive lead. Among major competitors, Malaysia's Silterra lacks significant expansion capacity, while SiPho offerings from UMC, GlobalFoundries (via the AMF acquisition), and STM still trail Tower by a wide margin.

Per-GPU TDP (Thermal Design Power) in AI servers has jumped from 700W in the Hopper generation to over 1,200W in platforms such as the Nvidia GB200 series, necessitating liquid cooling and more efficient optical interconnects. Within these environments, SiPho enables higher speeds while keeping systems scalable under strict thermal limits.

On February 5, NVIDIA and Tower Semiconductor established a strategic partnership focused on high-speed optical interconnects for AI data centers. Tower will leverage its SiPho process platform to manufacture 1.6T-class SiPho optical engines and modules for NVIDIA's next-generation networking architecture, optimized for NVIDIA's specific protocols.
This collaboration aims to resolve bandwidth and energy-efficiency bottlenecks during the scale-out phase of massive GPU clusters.

Separately, we have highlighted the rapid progression of optical scale-up, with volume production expected to commence in 2027. Delivering over 10x the optical bandwidth of traditional scale-out, optical scale-up will significantly drive demand for SiPho PICs, whether it is implemented via pluggable modules, NPO (near-packaged optics), or CPO (co-packaged optics). Alibaba's UPN512 (a 512-xPU optical scale-up super-node) validates the migration of optics from scale-out networking into the scale-up core domain, as LPO (linear-drive pluggable optics), NPO, and other near-packaged solutions achieve system-level economics.

Consequently, optics is evolving from a mere bandwidth expansion tool into a foundational infrastructure component for scale-up architectures. For SiPho, this shift directly expands the long-term TAM. Scale-up environments demand higher port densities, extreme bandwidth, and stricter power budgets, requirements natively addressed by high-integration SiPho PICs and linear-drive solutions. SiPho's penetration is moving beyond "incremental replacement" and could become the default interconnect standard for next-generation AI super-nodes.

Detailed Report: open.substack.com/pub/fundaai/p/…