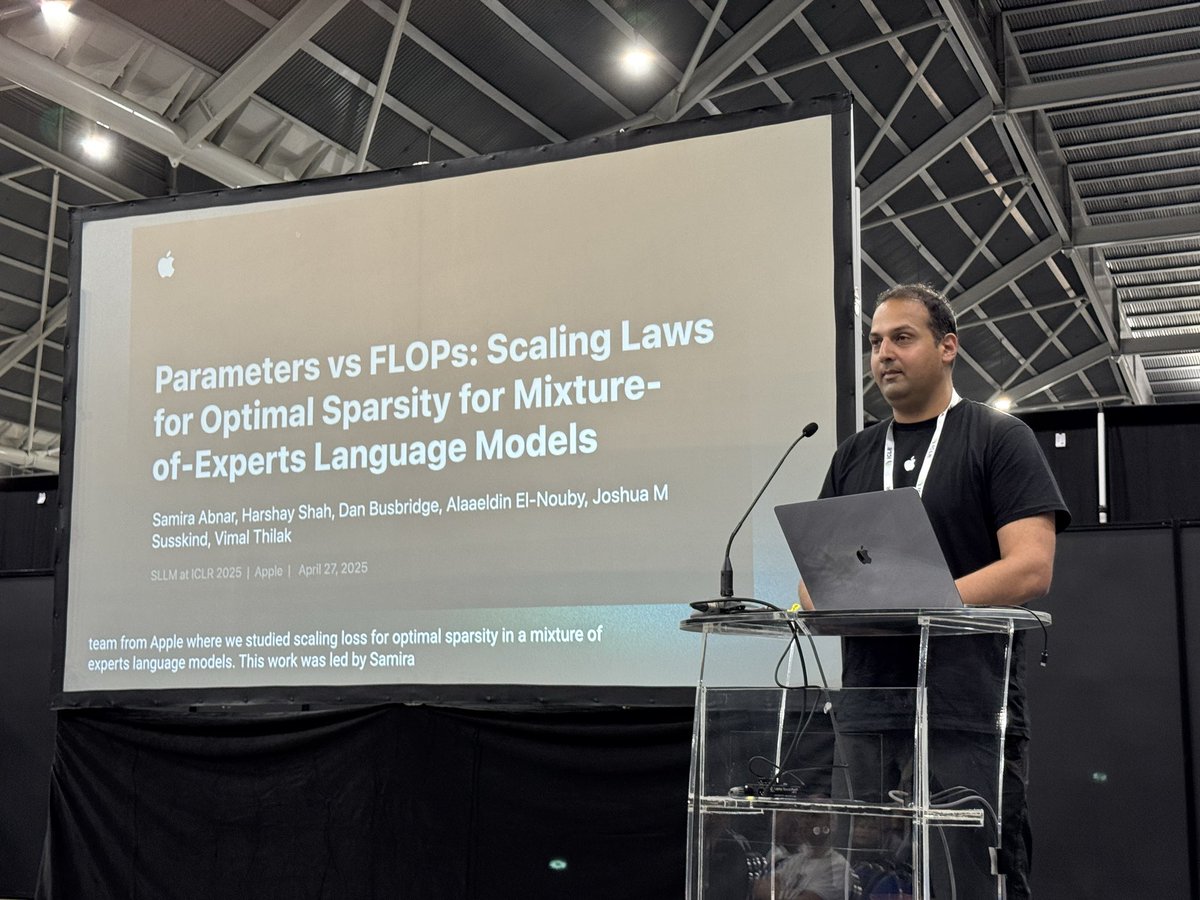

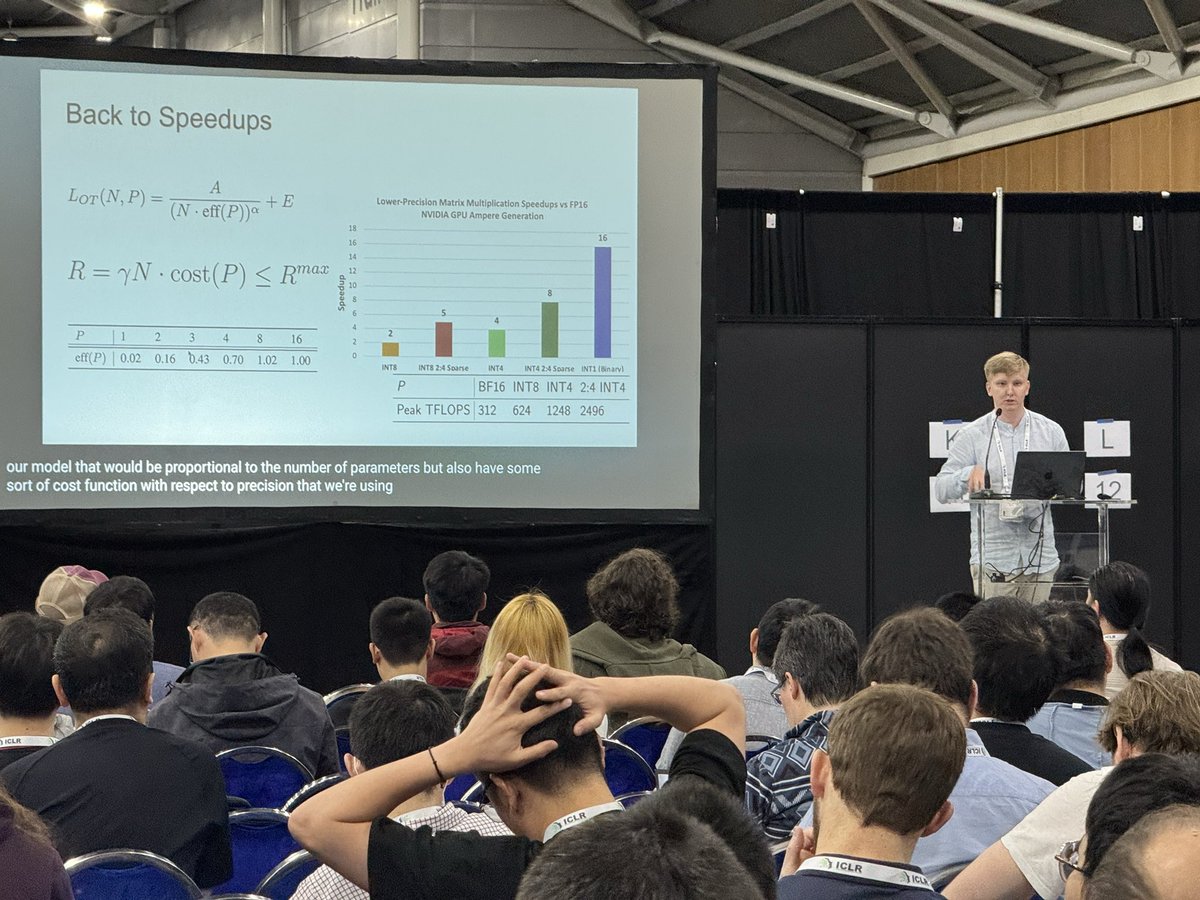

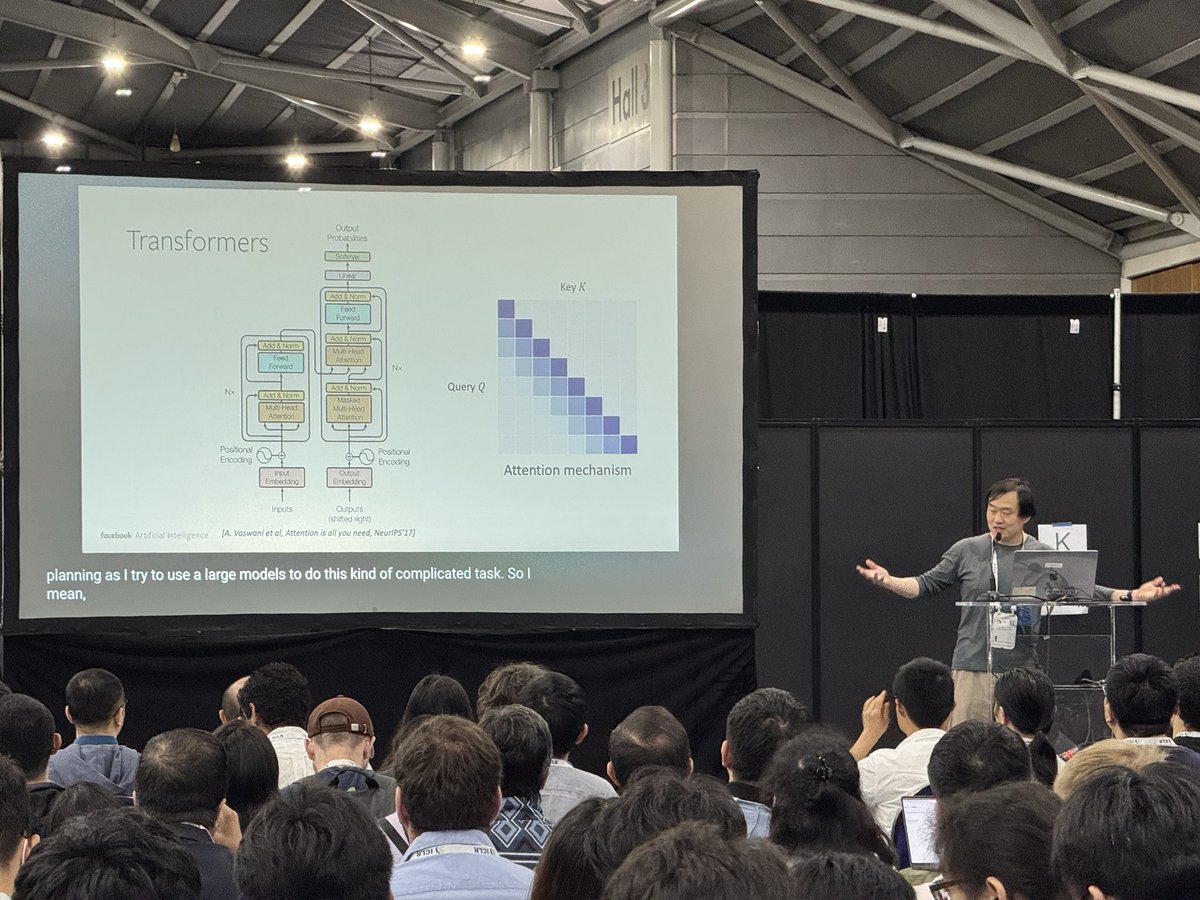

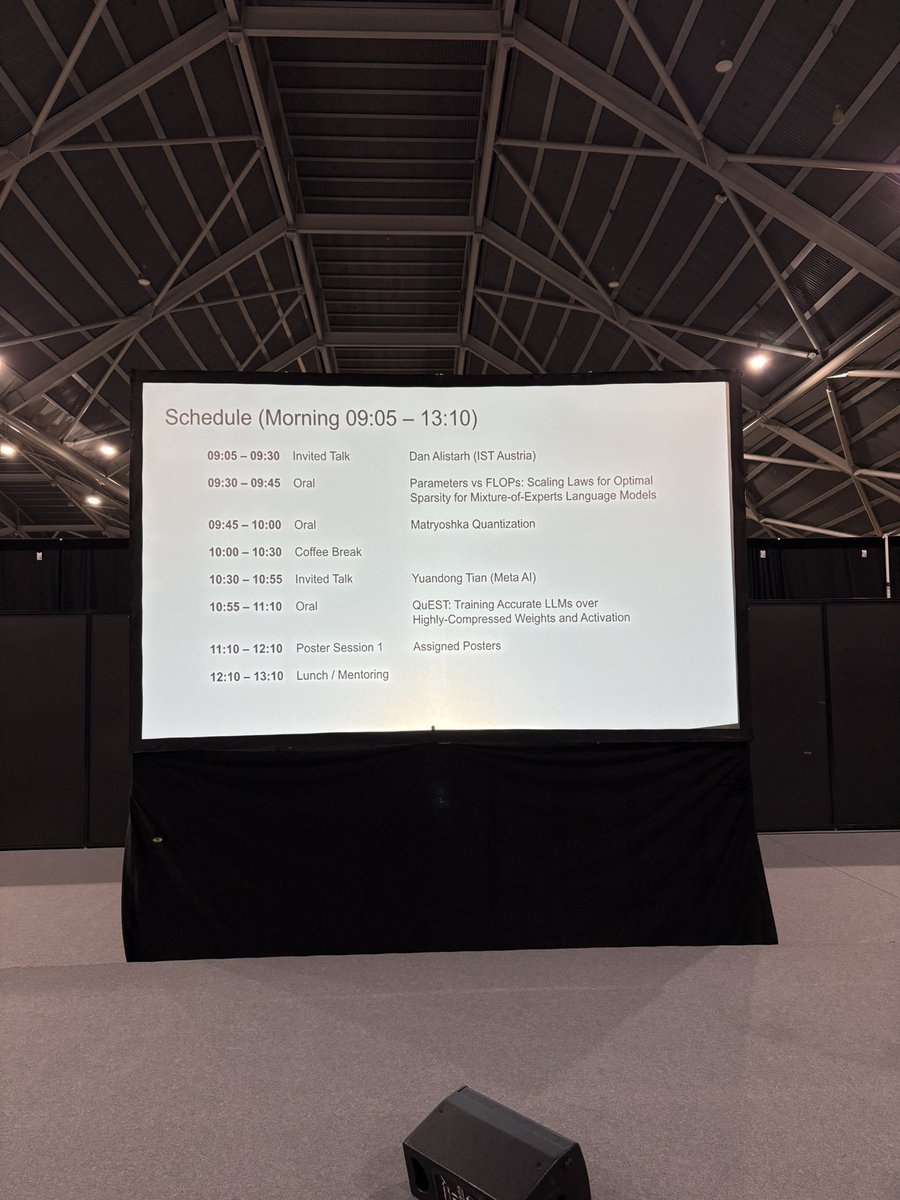

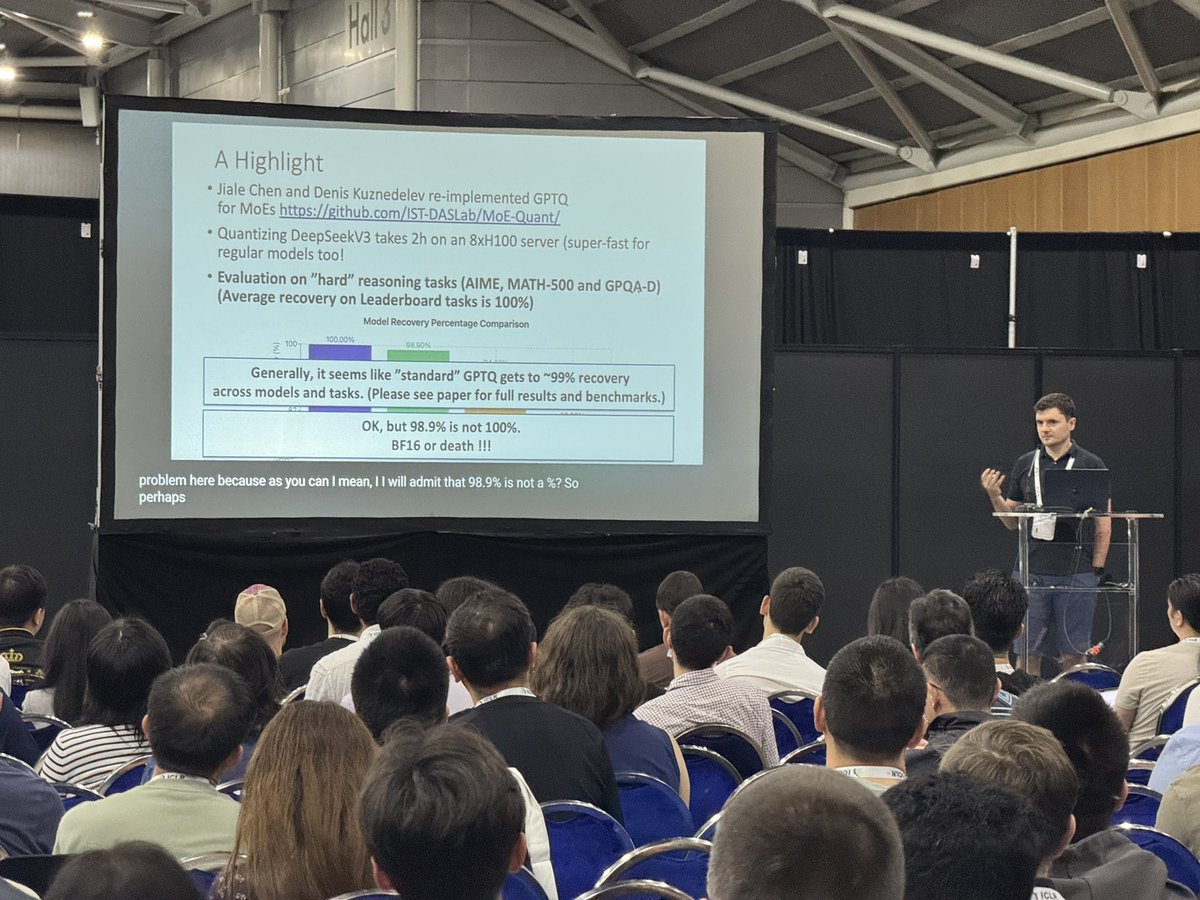

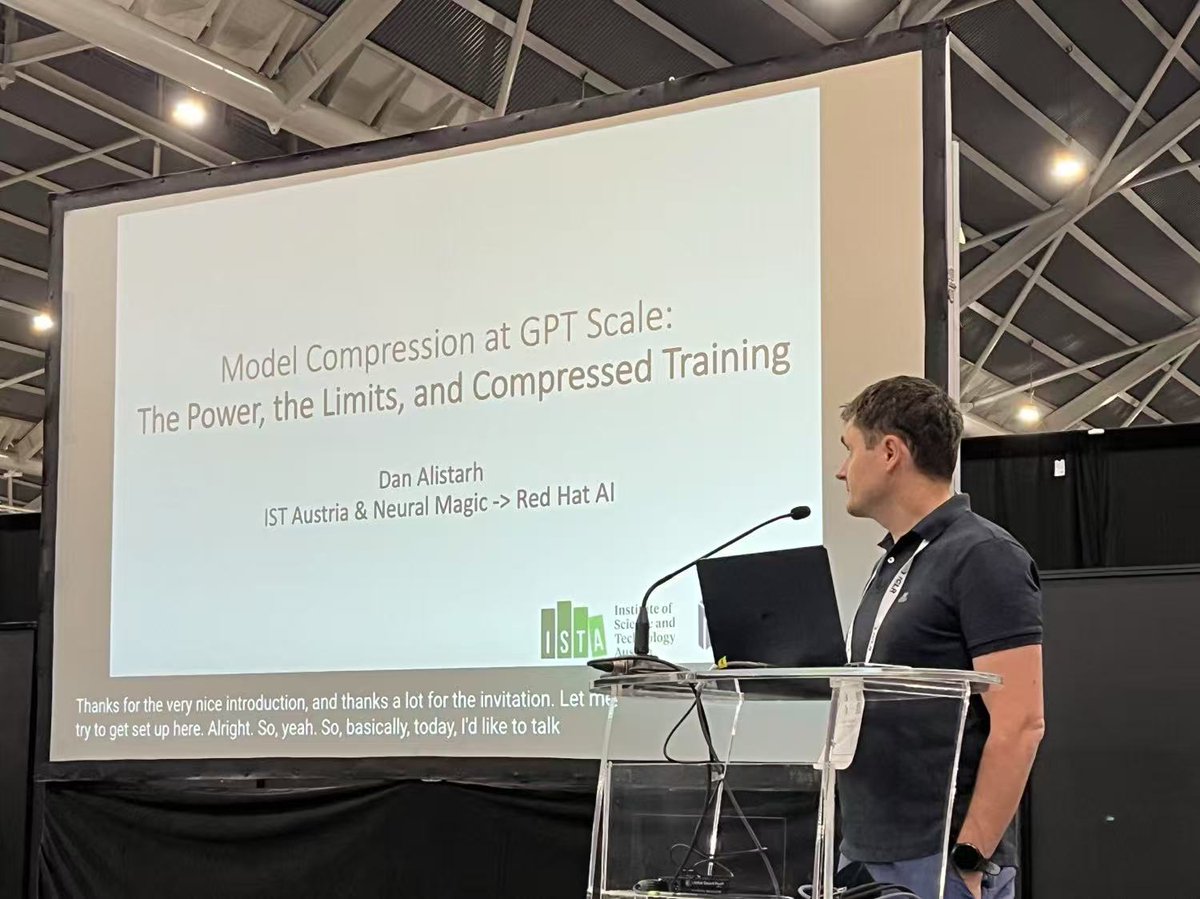

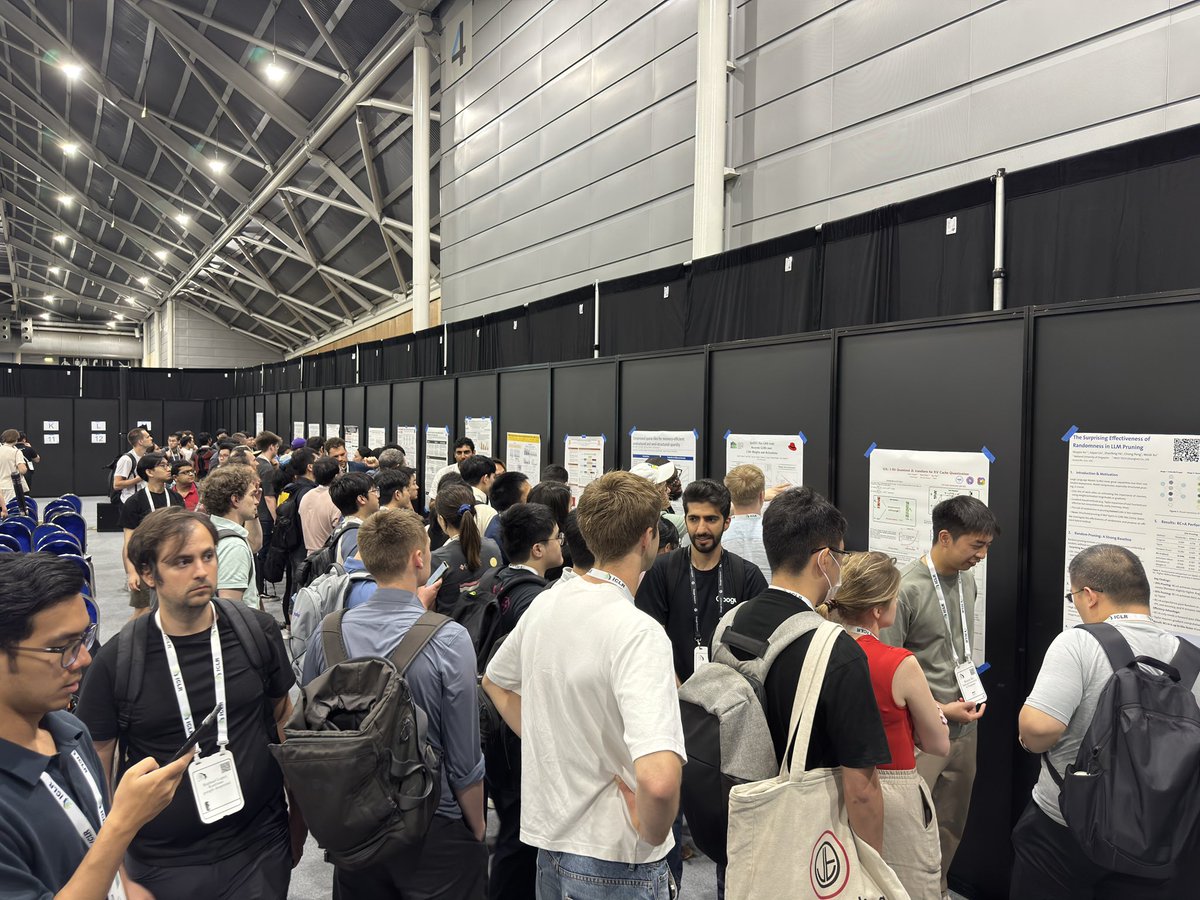

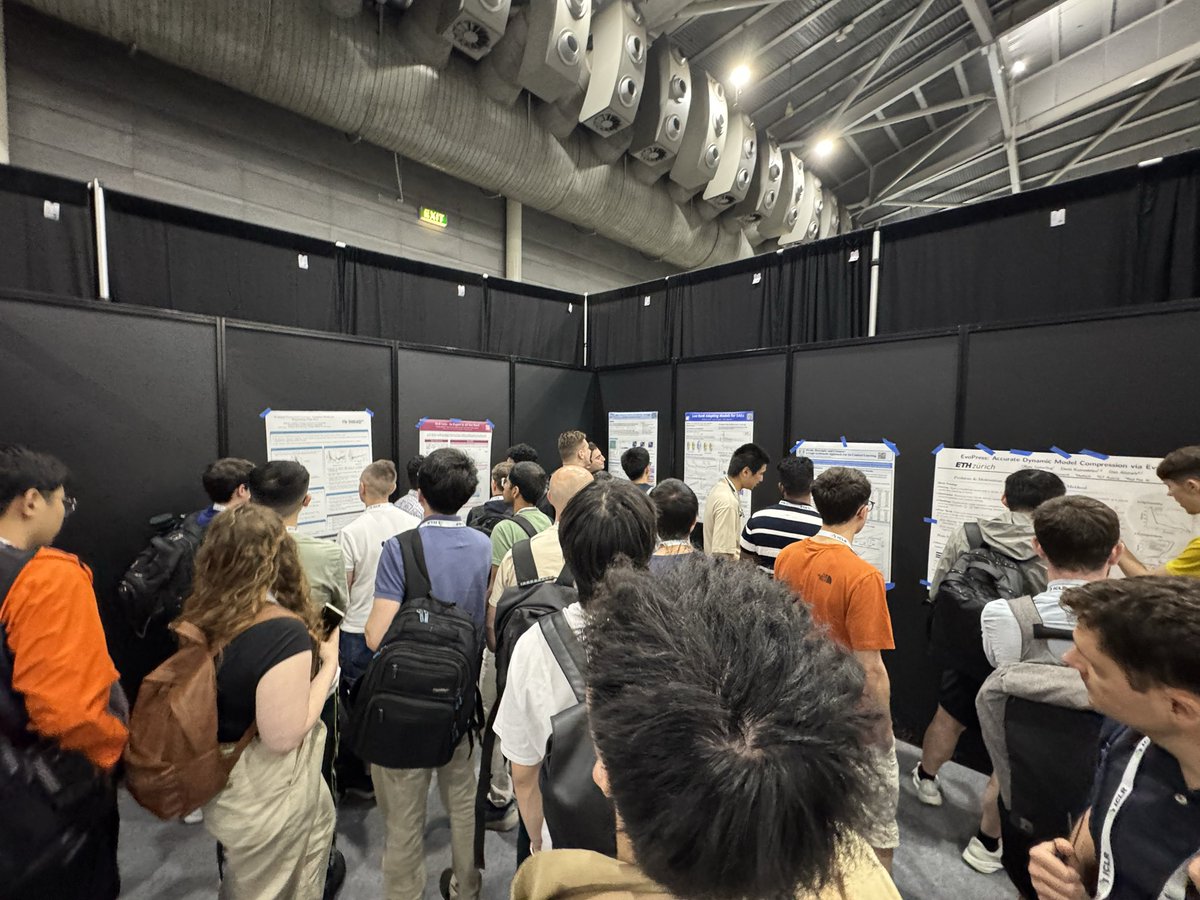

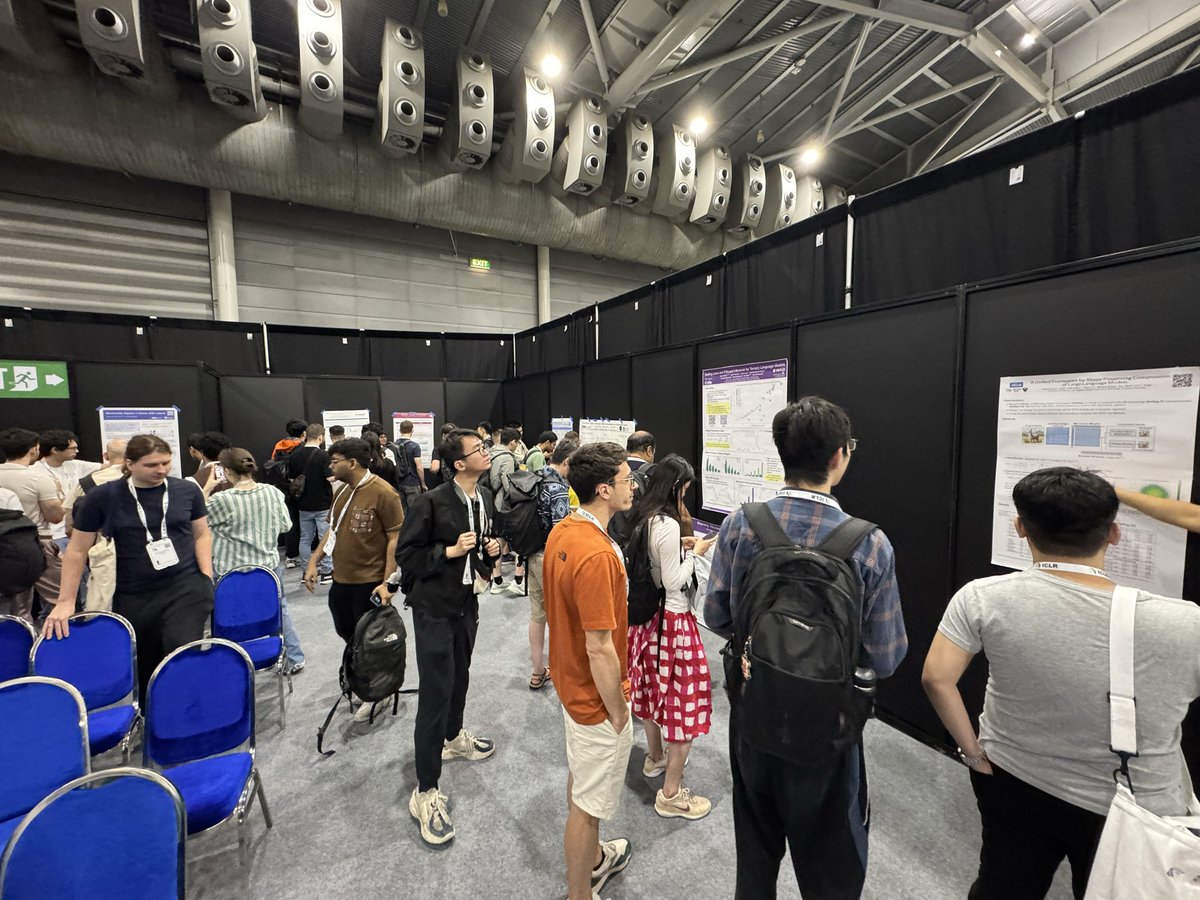

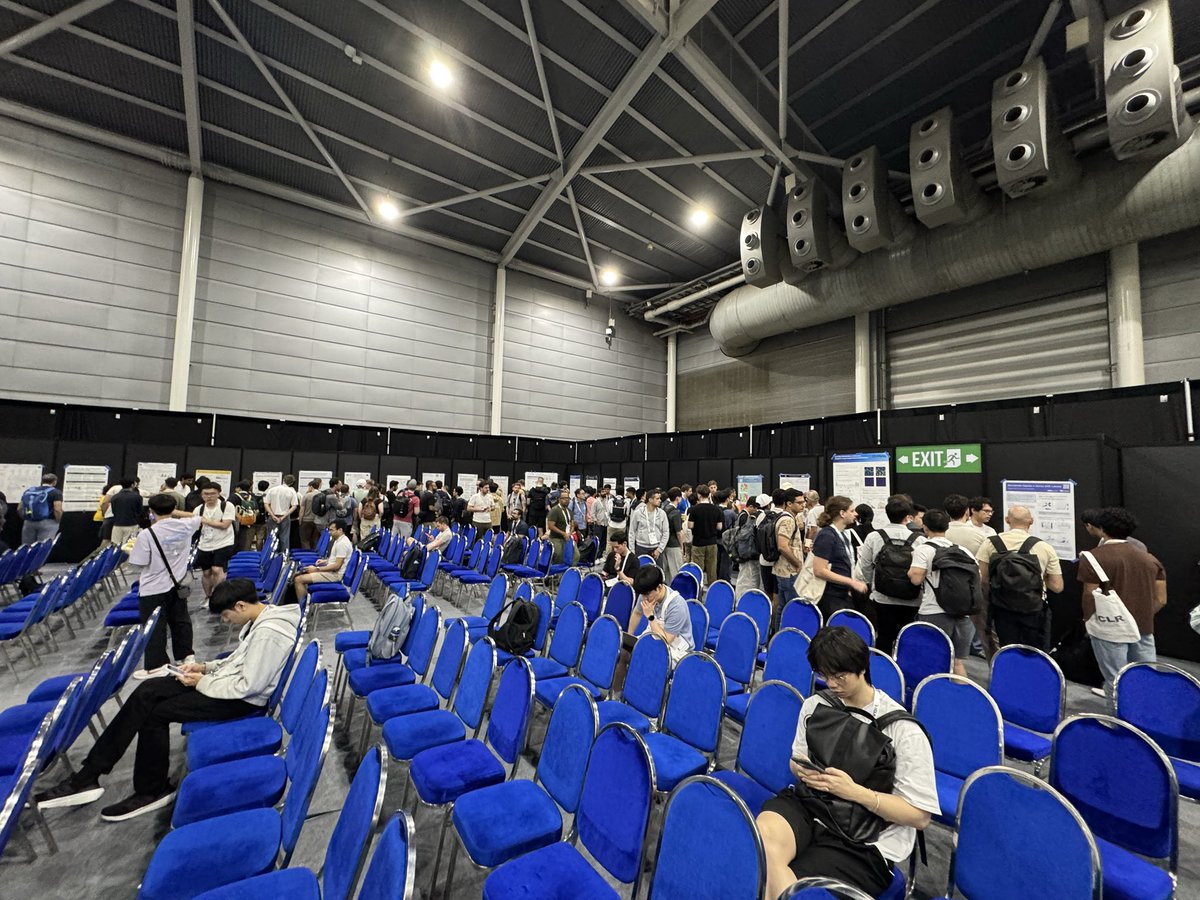

We are happy to announce that the Workshop on Sparsity in LLMs will take place @iclr_conf in Singapore! For details: sparsellm.org

Organizers:

@TianlongChen4, @utkuevci, @yanii, @BerivanISIK, @Shiwei_Liu66, @adnan_ahmad1306, @alexinowak
