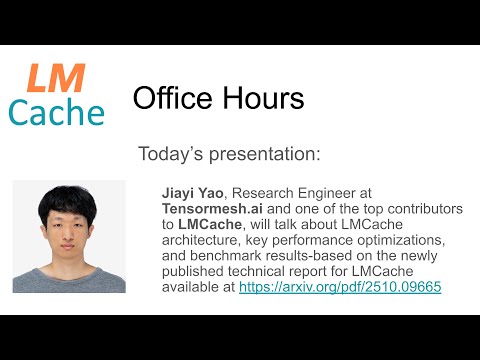

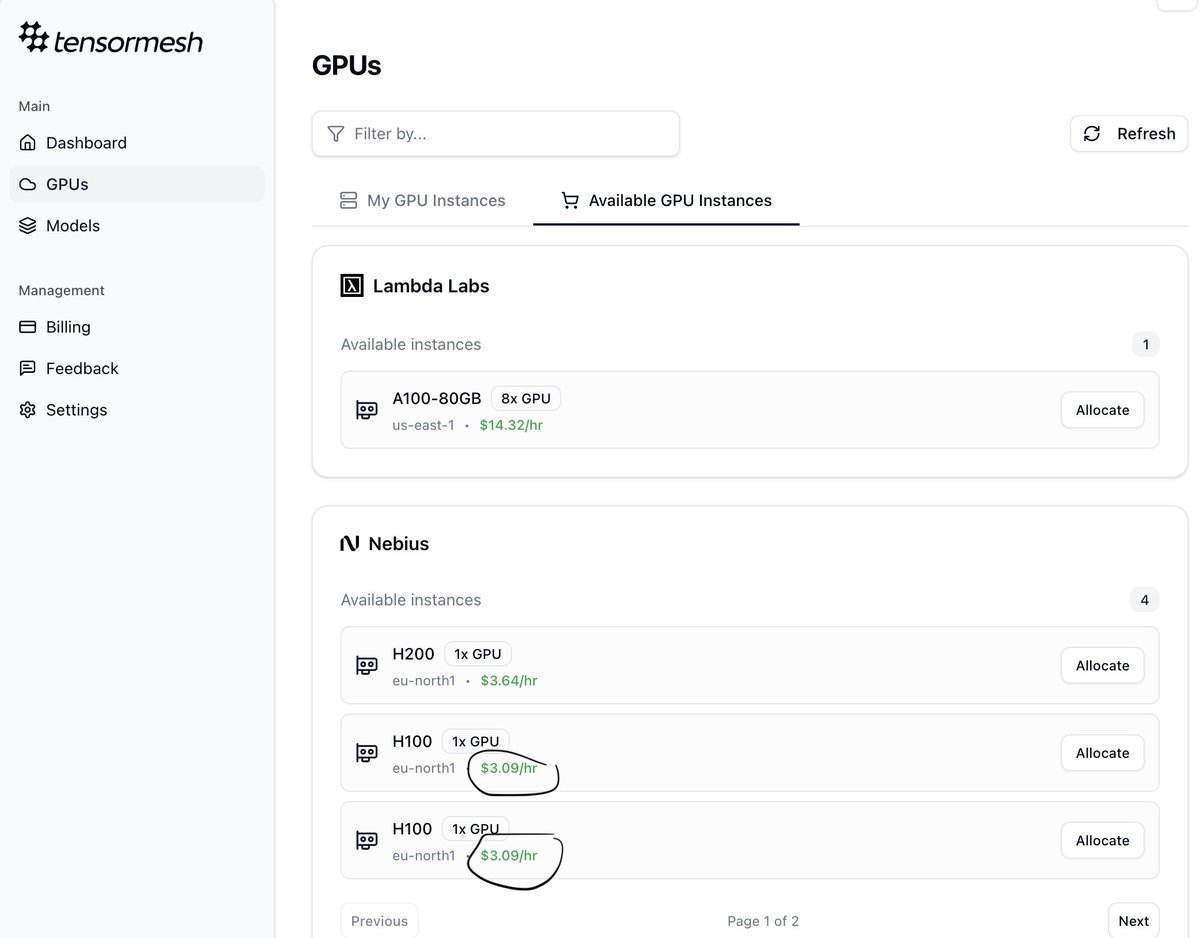

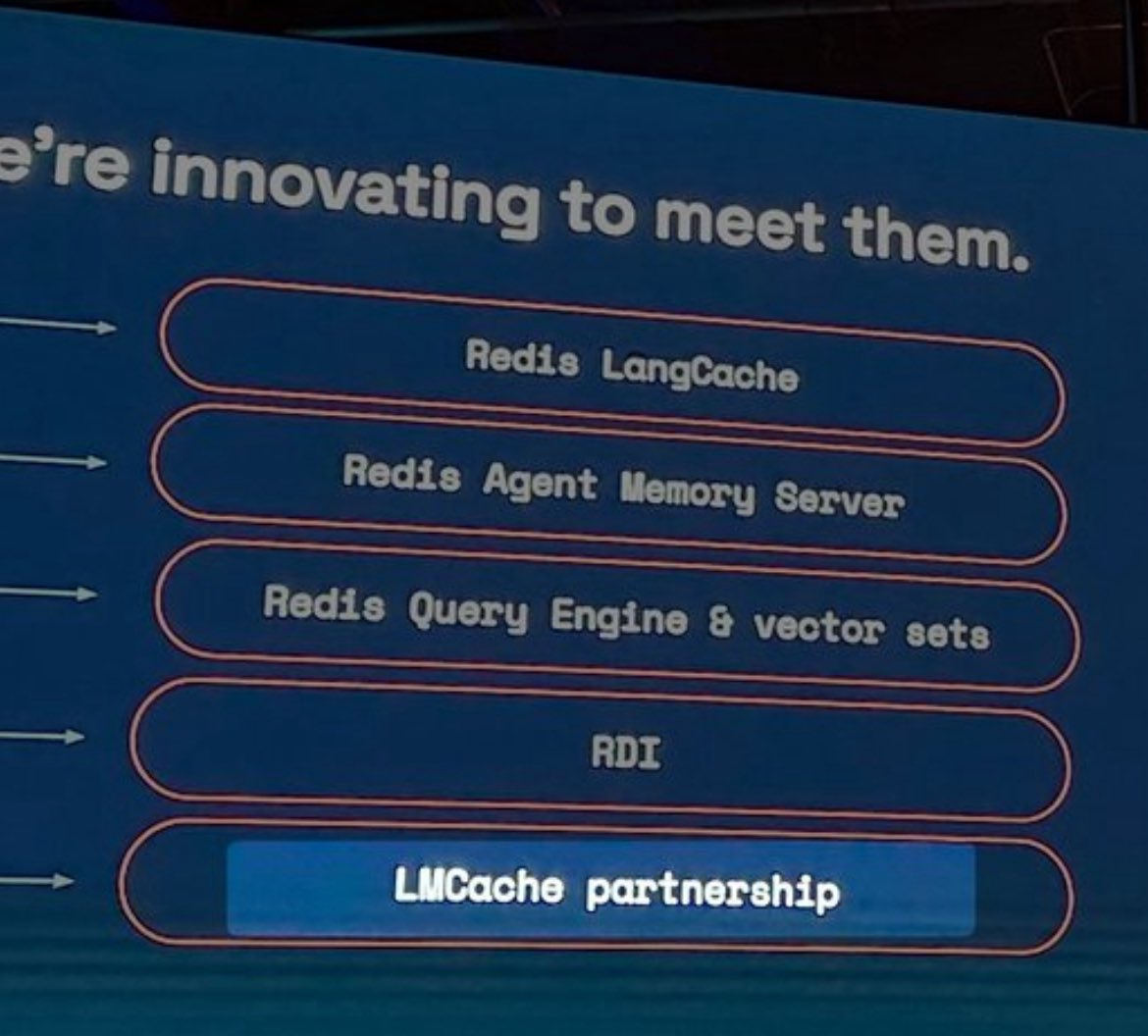

"𝗜𝗻𝗳𝗲𝗿𝗲𝗻𝗰𝗲 𝗰𝗼𝗻𝘁𝗲𝘅𝘁 𝗶𝘀 𝘁𝗵𝗲 𝗻𝗲𝘄 𝗯𝗼𝘁𝘁𝗹𝗲𝗻𝗲𝗰𝗸" — Kevin Deierling, SVP Networking #NVIDIA At his #GTC talk last week, he highlighted 𝗖𝗠𝗫 and 𝗖𝗮𝗰𝗵𝗲𝗕𝗹𝗲𝗻𝗱 from 𝗟𝗠𝗖𝗮𝗰𝗵𝗲 (@tensormesh) were part of the new KV Cache memory stack for agents, and recognized @tensormesh among the 𝗖𝗠𝗫 𝘀𝘁𝗼𝗿𝗮𝗴𝗲 𝗽𝗮𝗿𝘁𝗻𝗲𝗿𝘀. As the stack evolves, @tensormesh keeps building for what's next. ▶️ session Replay: tinyurl.com/GTC-talk