EHpops

446 posts

EHpops

@EHpops

investing my own PA and not providing financial advice. AI and all things cutting edge tech curious. just a bro with a laptop. claude/gemini/grok user

@saylor Something about the words “credit engineered” sounds unsafe bro

Back-of-envelope numbers for 1 gigawatt data center: All-in Capex: ~$50 bn Enterprise revenue generated: ~$25-30 bn/year Electricity cost: $1-2 bn/year ~2 year payback. The boom is real.

*APOLLO HOLDING TALKS TO SELL MIDCAP FINANCIAL INVESTMENT: WSJ all the slop is hitting the market

Back-of-envelope numbers for 1 gigawatt data center: All-in Capex: ~$50 bn Enterprise revenue generated: ~$25-30 bn/year Electricity cost: $1-2 bn/year ~2 year payback. The boom is real.

A retailer with no advertising budget. Four thousand products on the shelf. A hot dog priced the same as it was when Reagan was president. Costco shouldn't be the most powerful brand in retail. But it is. The rule is almost annoyingly simple: cap markups at 14%. Most stores are at 20% or higher. Every dollar of efficiency gets handed back to the customer as a lower price. That's the flywheel. Lower prices bring more members. More members bring better supplier deals. Better deals bring lower prices. Run it for forty years and nobody can catch you. Ninety percent of members renew. Every year. For decades. You don't get loyalty like that from coupons. You get it from making people feel, every single visit, like they got away with something. Strip away the warehouses and the hot dogs and what's left is a simple decision: the customer eats first. Almost nobody has the discipline to actually do it.

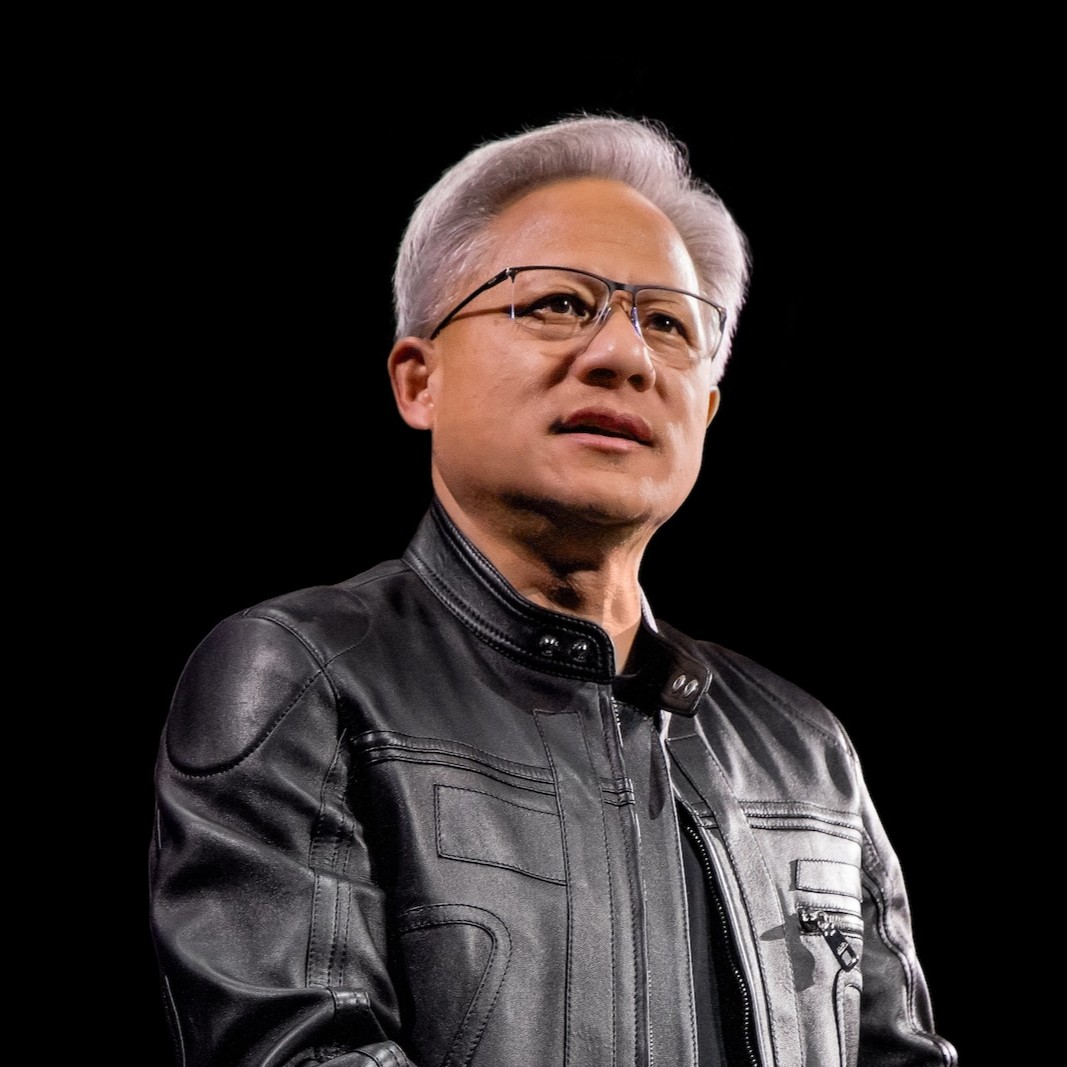

JENSEN SAYS AGENTIC AI NEEDS 1000x MORE COMPUTE. A NEW PAPER INDICATES THAT THIS # IS BLOATED AND COULD USE SOME OZEMPIC AND EXERCISE 😂 😎 Brynjolfsson, Pentland et al. (arXiv, Apr 2026) ran 8 frontier LLMs on SWE-bench Verified. The 1000x is real. The composition of it is the problem. → Same task, same model: up to 30x variance in tokens burned. → More tokens ≠ more accuracy. It peaks at intermediate cost, then flattens. → Kimi-K2 and Claude-Sonnet-4.5 burn 1.5M+ more tokens than GPT-5 on identical work. → Models can’t predict their own spend. Correlation tops at 0.39. They systematically underestimate. If hyperscaler capex is to be sized to current token burn it would include a saturation ceiling, 30x stochastic noise, and a model-to-model efficiency gap wide enough to swallow a quarter’s worth of GPU orders. Every prior compute regime followed the same arc: bloat → competition → compression. CPUs. Mobile. Cloud. The thing that scales fastest in the early innings is waste. Right now, agent providers compete on capability. The GPT-5 vs. Kimi gap says the next axis is tokens-per-task. Whoever wins it eats the margin — and bends the demand curve right when the GPUs land. The bull thesis assumes the baseline holds. The paper says the baseline is the opportunity. arxiv.org/abs/2604.22750