Brian Kurtz

334 posts

Brian Kurtz

@Math4Good

Accelerating inference @positron_ai.

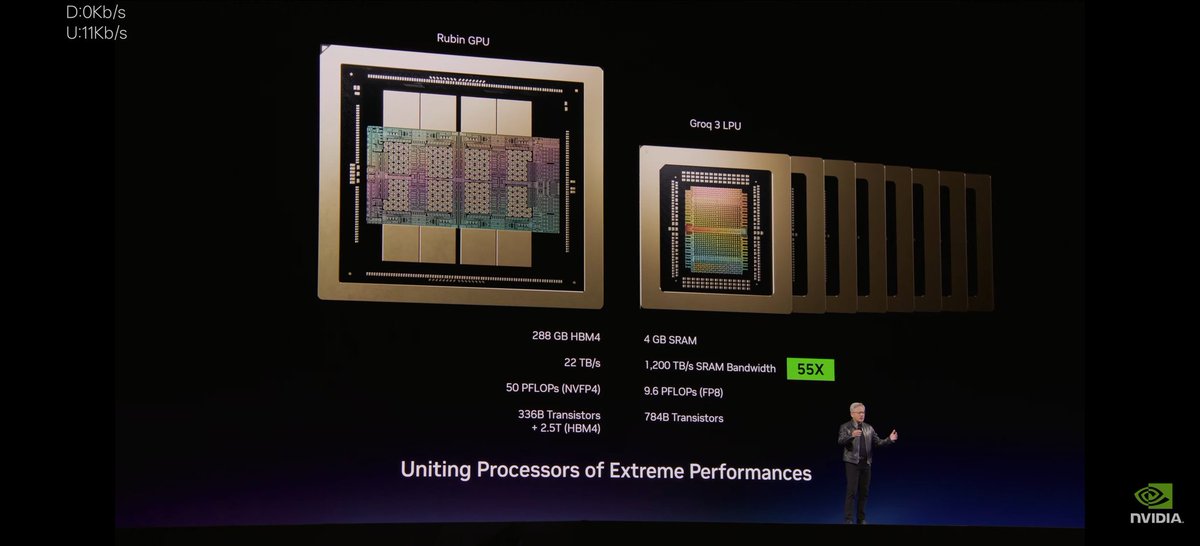

Groq rack is 8 LPU, 1 FPGA, 1 CPU, 1 BF DPU per tray and 32 trays in a rack.

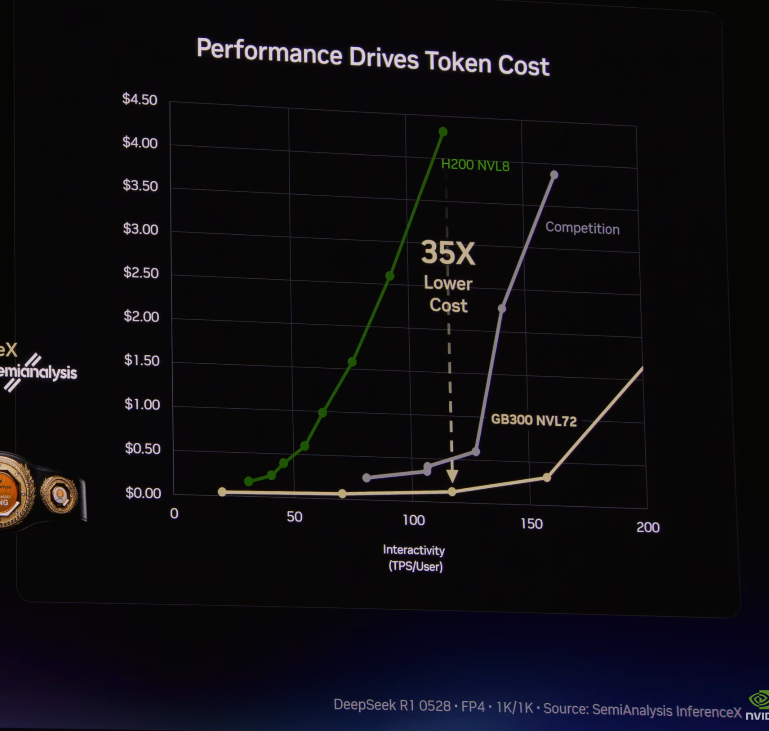

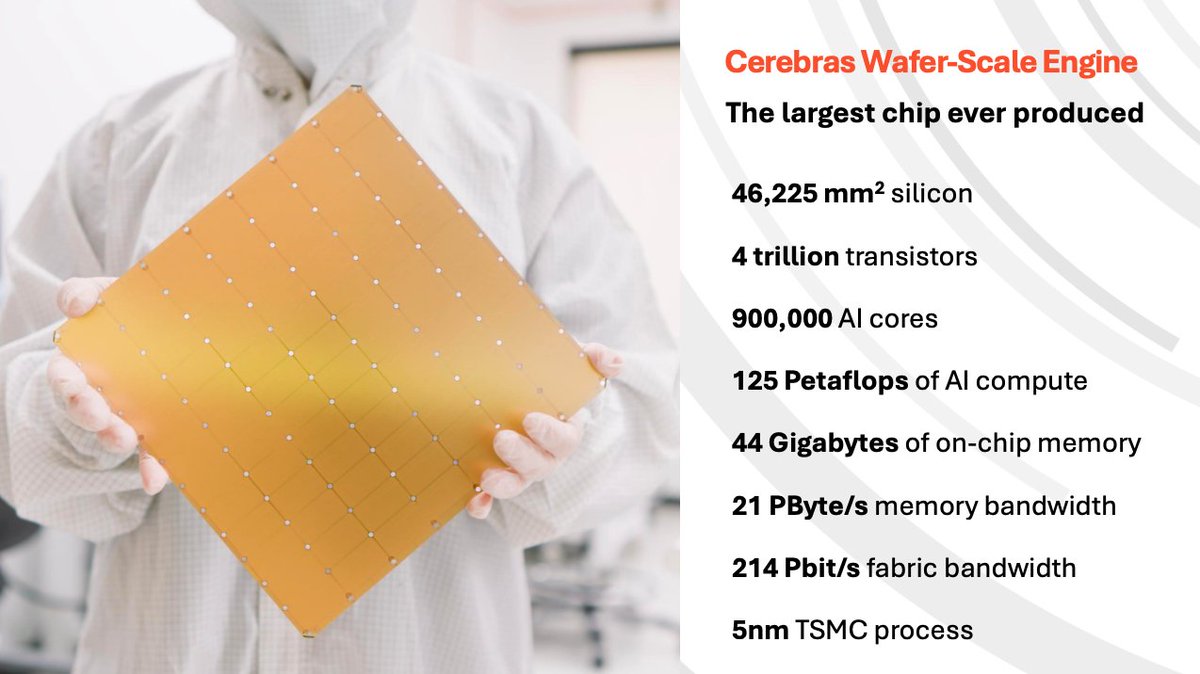

$ORCL CEO WHY DC DON’T NEED TO BE NEAR POPULATION CENTERS, YET: “Inferencing is very rapidly growing everywhere and anywhere... it’s because of higher and higher utilization of the models themselves and also new use cases... inferencing is going to have a huge amount of demand.” “If what you’re doing is asking a question for your business, it’s going to take an AI model several seconds to think about it... an extra 40 milliseconds of latency from New York to Wyoming is not going to hurt you.” “The latency problem right now is not actually the location of the hardware. It’s the type of hardware that’s being deployed, and that’s why you’re seeing so much innovation around these AI accelerators.” “If you look at what Groq does, or Cerebras, or Positron, all of these different types of companies are saying not only how do we reduce the cost of inferencing, but also how can we significantly reduce the latency of it.” “That makes it much more flexible for us to put data centers where power is abundant, land is plentiful, and we can optimize for what’s available to meet this ever-increasing demand.”

$CRDO Q3 call revealed two things the market is sleeping on: Weaver gearbox: 10x memory IO density. Positron building a 2TB inference XPU on it for speed! Lasers are out: ZeroFlap optics 1000x more reliable, half the power. Production ramp Q1 FY27. Listen Credo:

@KalGrinberg @FactoryAI Build a high-fidelity 3d simulation of the earth, outer space, and the moon, and use the codebase for the Apollo 11 Guidance Computer (linked below) to recreate the moon landing within that simulation github.com/chrislgarry/Ap…

Here is my latest iteration of my /plan-exit-review skill for Claude Code. I use this as I exit plan mode and it works super well to shake out all issues,, shake out architecture and code smell issues, perf issues, and finally make sure every part of a PR is tested (I avg 1.3 lines of test to 1 line of real code these days) gist.github.com/garrytan/001f9…

lol WSJ don’t know @insane_analyst GTC gonna be epic 🚀🚀

Proud to partner with @G42ai and @mbzuai (Mohamed bin Zayed University of Artificial Intelligence) to deliver a national-scale AI supercomputer in India with 8 exaflops of compute capacity. This cluster is designed to support researchers, startups, enterprises, and government entities, and will serve as a foundational asset under the India AI Mission, accelerating AI innovation tailored to India’s needs.

24 dedicated people. $30M spent on development. Extreme specialization, speed, and power efficiency. Today we launch Taalas’ first product. Check it out: Details: taalas.com/the-path-to-ub… Demo chatbot: chatjimmy.ai API: taalas.com/api-request-fo…

yesterday we chatted with @martin_casado and @sarahdingwang on the pod and he happened to do basic math™ on the logic of asics today @taalas_inc launched their HC1 asic that can inference 17k tok/s. Sure, it's a shitty 3.1 8B today which is a 1.5 year gap. But read the details to the HC2 this winter, and do the math — this timeline will converge to 0 in the next 2 years. Build accordingly.

24 dedicated people. $30M spent on development. Extreme specialization, speed, and power efficiency. Today we launch Taalas’ first product. Check it out: Details: taalas.com/the-path-to-ub… Demo chatbot: chatjimmy.ai API: taalas.com/api-request-fo…