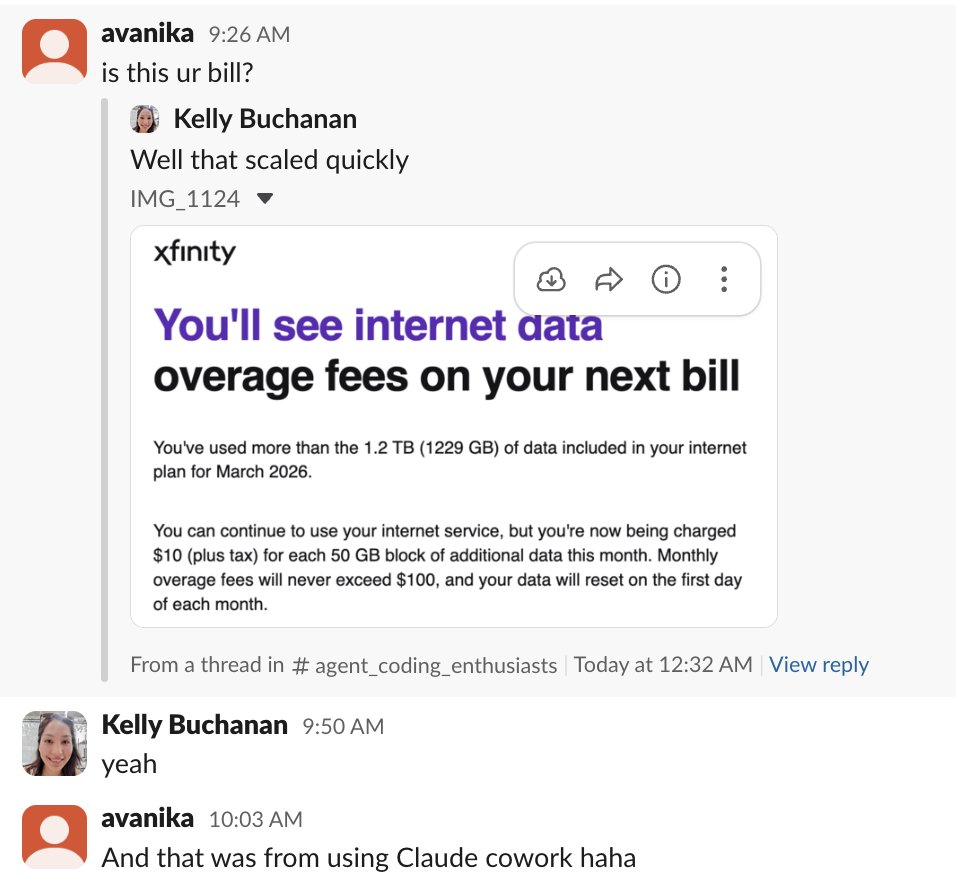

How can we close the generation-verification gap when LLMs produce correct answers but fail to select them? 🧵 Introducing Weaver: a framework that combines multiple weak verifiers (reward models + LM judges) to achieve o3-mini-level accuracy with much cheaper non-reasoning models like Llama 3.3 70B Instruct! 🧵(1 / N)