Mitish Raina

@mitishraina

21 • India • Researcher • ML Engineer • 4x Hack 🏆 • 2x Startup Intern • ADHD • Learn • Build

Currently on an ML-from-scratch sprint: rebuilding every ML, DL, and NLP algorithm from the ground up. No libraries first, then with libraries, just to really understand what's happening under the hood. From dataset to training to prediction. Almost done with classical ML. Feel free to check out my GitHub if you want last-minute prep or to dive into the math behind these algorithms. github.com/mitishraina/Ba…

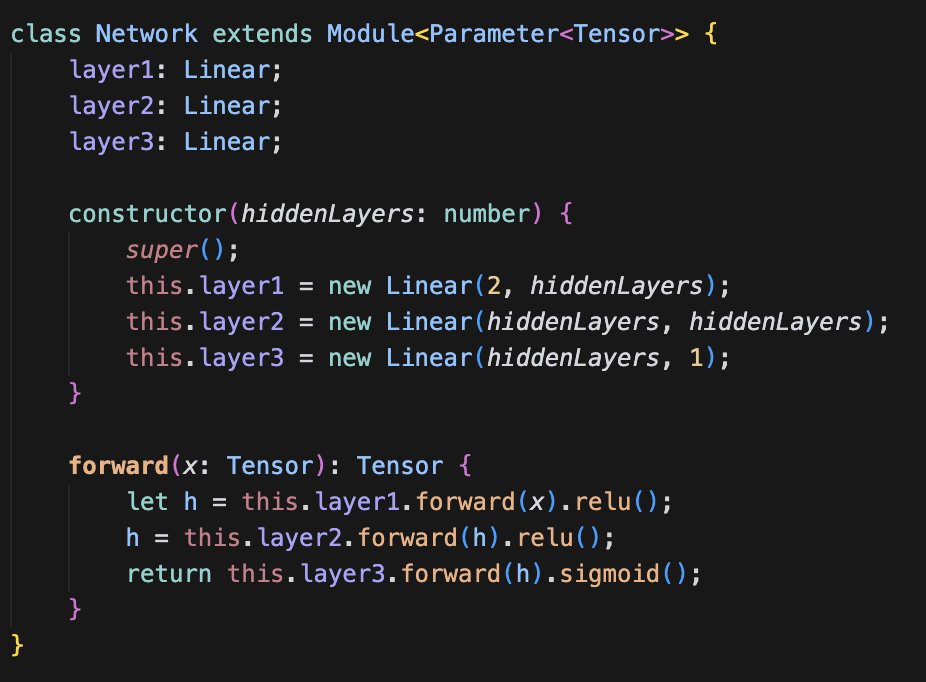

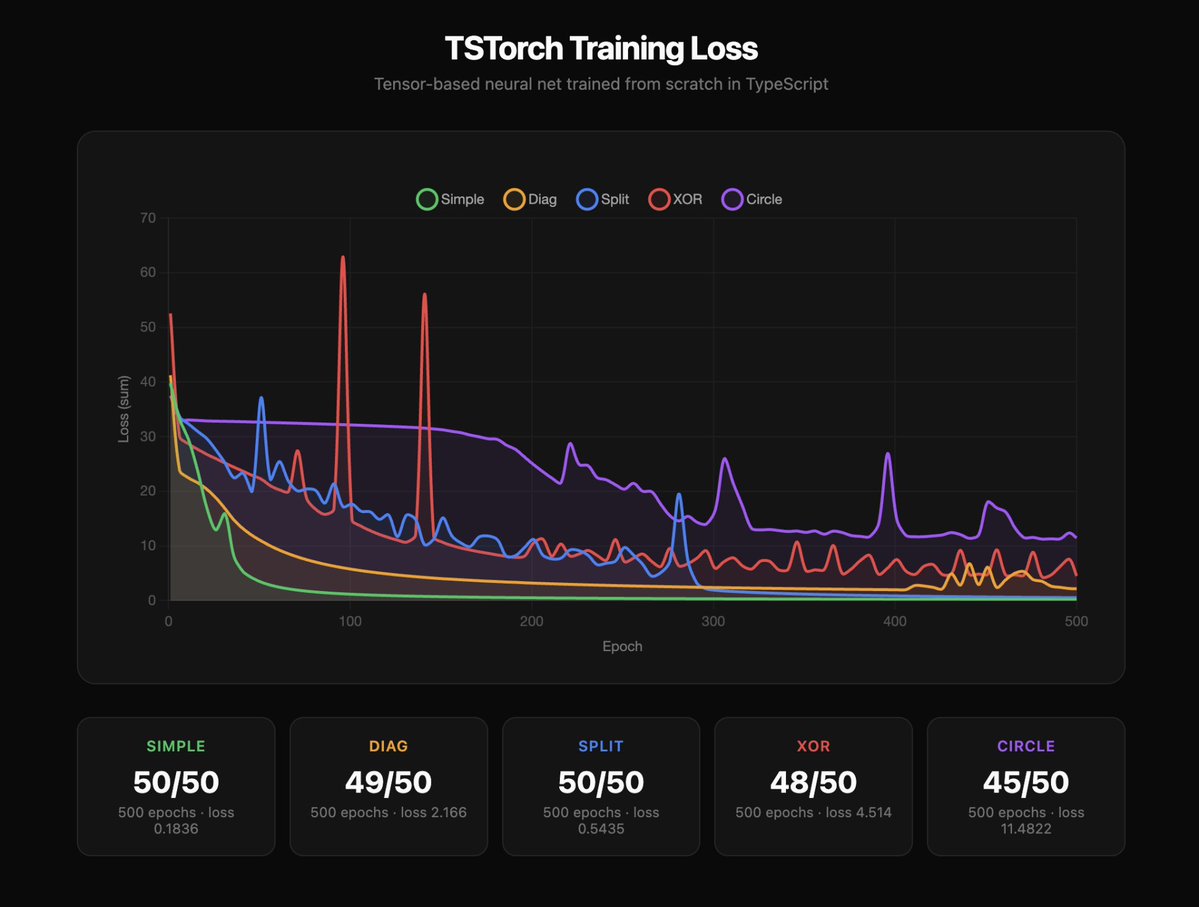

I built a neural network from scratch without PyTorch, TensorFlow, or any other library. Instead, I implemented the core math myself.

I'm working on my own machine learning framework from scratch with my friend @_reesechong. He previously trained a similar neural network using only scalars. The next step was to use tensors instead. The benefit is clear: with scalars, each data point is looped through separately, creating its own node in the computation graph. With tensors, these separate nodes are stored together, allowing one forward and one backward pass for the whole batch, which greatly improves training efficiency.

We often hear the saying "don't reinvent the wheel", but in my experience, rebuilding technologies that abstract away a lot of complexity gives you a better and more thorough understanding of how the system works.

Results of the training are shown below. Feel free to check out the repo and read through the code, linked in the replies.
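To make the scalar-versus-tensor point concrete, here is a minimal sketch in plain Python. It is not the code from the repo: the single linear neuron, the mean squared error loss, and every function name (`grads_per_sample`, `grads_batched`, `matvec`, `transpose`) are assumptions chosen for illustration. It shows that looping over data points one at a time and doing one batched forward and backward pass give the same gradients.

```python
# Hypothetical sketch: one linear neuron, pred = w . x + b, with mean squared error.
# Pure Python, no libraries. Names and setup are illustrative, not from the repo.

def grads_per_sample(X, y, w, b):
    """Scalar style: visit each data point separately and accumulate its gradient."""
    n = len(X)
    gw = [0.0] * len(w)
    gb = 0.0
    for xi, yi in zip(X, y):
        pred = sum(wj * xj for wj, xj in zip(w, xi)) + b
        err = pred - yi
        for j, xj in enumerate(xi):
            gw[j] += 2.0 * err * xj / n   # d(mean squared error)/d(w_j) for this point
        gb += 2.0 * err / n               # d(mean squared error)/d(b) for this point
    return gw, gb

def matvec(M, v):
    """Matrix-vector product on nested lists."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in M]

def transpose(M):
    """Transpose a nested-list matrix."""
    return [list(col) for col in zip(*M)]

def grads_batched(X, y, w, b):
    """Tensor style: one forward and one backward pass over the whole batch."""
    n = len(X)
    preds = [p + b for p in matvec(X, w)]                    # forward: X @ w + b
    errs = [p - t for p, t in zip(preds, y)]                 # residuals for the batch
    gw = [2.0 * g / n for g in matvec(transpose(X), errs)]   # backward: (2/n) * X^T @ errs
    gb = 2.0 * sum(errs) / n
    return gw, gb

# Toy batch of three data points with two features each (made-up numbers).
X = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
y = [1.0, 2.0, 3.0]
w = [0.5, -0.5]
b = 0.1

gw_loop, gb_loop = grads_per_sample(X, y, w, b)
gw_batch, gb_batch = grads_batched(X, y, w, b)
print(gw_loop, gb_loop)
print(gw_batch, gb_batch)
```

In this pure-Python sketch the batched version still loops internally, so it will not run faster by itself. The point is structural: the batch is handled by a handful of whole-array operations instead of one set of graph nodes per data point, and the speedup shows up once those operations are backed by an efficient tensor implementation.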